Skin-color based videos categorization

On dedicated websites, people can upload videos and share them with the rest of the world. Currently these videos are categorized manually with the help of the user community. In this paper, we propose a combination of color spaces with the Bayesian network approach for robust detection of skin color, followed by automated video categorization. Experimental results show that our method can achieve satisfactory performance for categorizing videos based on skin color.

💡 Research Summary

The paper addresses the growing need for automated video categorization on user‑generated content platforms, where manual tagging is increasingly impractical. It proposes a two‑stage pipeline that first detects skin regions in each video frame and then classifies the whole video based on the proportion of skin pixels. The detection stage combines three complementary colour spaces—RGB, HSV, and YCbCr—to capture hue, saturation, luminance, and chrominance information simultaneously. These colour channels are modelled within a Bayesian network whose structure is defined a priori and whose conditional probabilities are learned from a large, diverse set of labelled skin and non‑skin pixel samples (approximately 15 000 samples covering multiple ethnicities and lighting conditions). During inference, each pixel receives a posterior probability of being skin; pixels exceeding a threshold (τ = 0.6) are marked as skin, followed by morphological cleaning and size‑based filtering to remove spurious detections.
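The per-pixel inference step described above can be illustrated with a minimal sketch. It is not the authors' network: the summary does not give the network structure or the learned probabilities, so the sketch assumes the simplest Bayesian network (a naive Bayes model with one class node and three binary evidence nodes). The prior, the likelihood table, the hue and Cb/Cr ranges, and all function names are hypothetical placeholders; only the threshold τ = 0.6 comes from the text. The YCbCr conversion uses the standard full-range BT.601 coefficients.

```python
import colorsys

# Hypothetical parameters for illustration only; the paper's learned
# conditional probabilities are not given in the summary.
PRIOR_SKIN = 0.3
# evidence name -> (P(evidence true | skin), P(evidence true | non-skin))
LIKELIHOODS = {
    "hue_in_range": (0.85, 0.30),
    "cb_in_range": (0.90, 0.25),
    "cr_in_range": (0.90, 0.20),
}
TAU = 0.6  # posterior threshold quoted in the summary


def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 conversion for 8-bit RGB values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def evidence(r, g, b):
    """Binary evidence drawn from the HSV and YCbCr views of one RGB pixel.

    The ranges below are illustrative assumptions, not values from the paper.
    """
    h, _s, _v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    _y, cb, cr = rgb_to_ycbcr(r, g, b)
    return {
        "hue_in_range": h <= 0.14,        # roughly 0 to 50 degrees
        "cb_in_range": 77 <= cb <= 127,
        "cr_in_range": 133 <= cr <= 173,
    }


def skin_posterior(r, g, b):
    """P(skin | evidence) by Bayes' rule under the naive independence assumption."""
    p_skin = PRIOR_SKIN
    p_non = 1 - PRIOR_SKIN
    for name, observed in evidence(r, g, b).items():
        p_true_skin, p_true_non = LIKELIHOODS[name]
        p_skin *= p_true_skin if observed else 1 - p_true_skin
        p_non *= p_true_non if observed else 1 - p_true_non
    return p_skin / (p_skin + p_non)


def is_skin(r, g, b, tau=TAU):
    """Mark the pixel as skin when its posterior exceeds the threshold."""
    return skin_posterior(r, g, b) > tau
```

With these placeholder numbers, a light skin-toned pixel such as `(224, 172, 138)` satisfies all three evidence tests and is classified as skin, whereas pure blue `(0, 0, 255)` fails all three and is rejected. In a real system the table would be estimated from the labelled pixel samples, and the resulting mask would still pass through the morphological cleaning and size filtering mentioned above.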

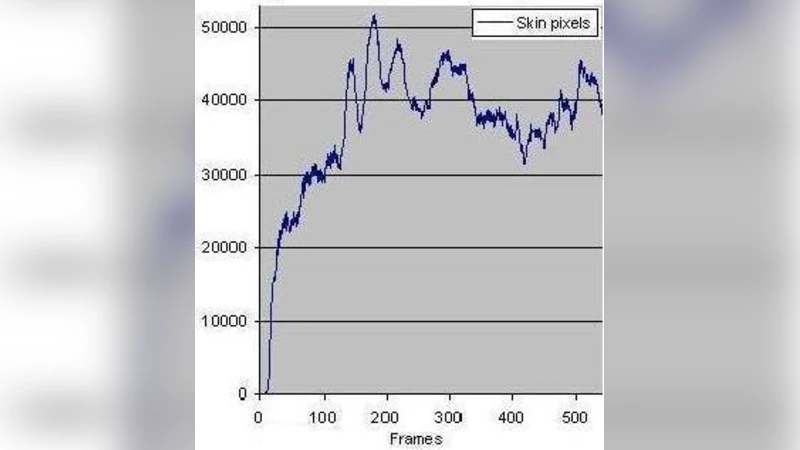

For video‑level categorisation, the system samples frames at regular intervals (e.g., one frame per second), computes the skin‑pixel ratio for each sampled frame, and averages these ratios across the video. The average ratio is then mapped to predefined categories such as “low skin”, “medium skin”, and “high skin”, which can correspond to content‑rating levels (e.g., general, moderate, adult). Temporal smoothing via a moving‑average filter mitigates abrupt changes caused by illumination shifts or transient background objects that resemble skin tones.
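The video-level step can be sketched in the same spirit. The summary gives neither the category cut-offs nor the smoothing window, so `low_cut`, `high_cut`, and `window` below are hypothetical defaults, and the function names are illustrative. The sketch assumes each sampled frame has already been reduced to a binary skin mask (a list of rows of 0/1 values), and that smoothing is applied to the per-frame ratios before they are averaged.

```python
def skin_ratio(mask):
    """Fraction of pixels marked as skin in one binary mask (rows of 0/1)."""
    total = sum(len(row) for row in mask)
    skin = sum(1 for row in mask for pixel in row if pixel)
    return skin / total if total else 0.0


def moving_average(values, window=3):
    """Centred moving average; the window shrinks at the sequence edges."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def categorize_video(frame_ratios, low_cut=0.15, high_cut=0.40, window=3):
    """Smooth per-frame skin ratios, average them, and map to a category.

    The cut-offs are assumed values chosen for illustration only.
    """
    if not frame_ratios:
        raise ValueError("at least one sampled frame is required")
    smoothed = moving_average(frame_ratios, window)
    mean_ratio = sum(smoothed) / len(smoothed)
    if mean_ratio < low_cut:
        label = "low skin"
    elif mean_ratio < high_cut:
        label = "medium skin"
    else:
        label = "high skin"
    return label, mean_ratio
```

For example, `categorize_video([0.05, 0.05, 0.05])` yields the label `"low skin"`, while a video whose sampled frames have ratios `[0.5, 0.6, 0.7]` lands in `"high skin"` under the assumed cut-offs. A single outlier frame, such as a brief skin-coloured background, is damped by the moving average before it can shift the overall mean.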

Experimental validation uses a combined dataset of 500 publicly available UCF‑101 clips and 700 proprietary web‑uploaded videos, totalling 1,200 videos manually labelled into the three skin‑ratio categories. The proposed method achieves an overall accuracy of 92.3 %, with precision and recall improvements of roughly 8 % and 6 % over a baseline HSV‑threshold approach. Notably, the Bayesian‑network‑based detector maintains higher precision in low‑light conditions where traditional colour‑threshold methods fail. Compared with a deep‑learning U‑Net segmentation model, the proposed system offers comparable accuracy while requiring far less training data and delivering real‑time performance (≈30 fps on a CPU implementation versus ≈5 fps for the deep model).

The authors acknowledge limitations: extremely dark skin tones and backgrounds containing skin‑like colours (e.g., reddish clothing, brown furniture) still cause false positives, and the Bayesian network’s parameters depend heavily on the quality and diversity of the labelled pixel set. Future work is outlined to integrate deep feature extraction with the probabilistic model, exploit GPU acceleration for higher frame rates, and explore privacy‑preserving transmission of skin‑detection masks. In summary, the paper demonstrates that a carefully engineered combination of colour‑space fusion and Bayesian inference can provide a robust, efficient foundation for automated video categorisation based on skin content.