A Scheme for Automation of Telecom Data Processing for Business Application

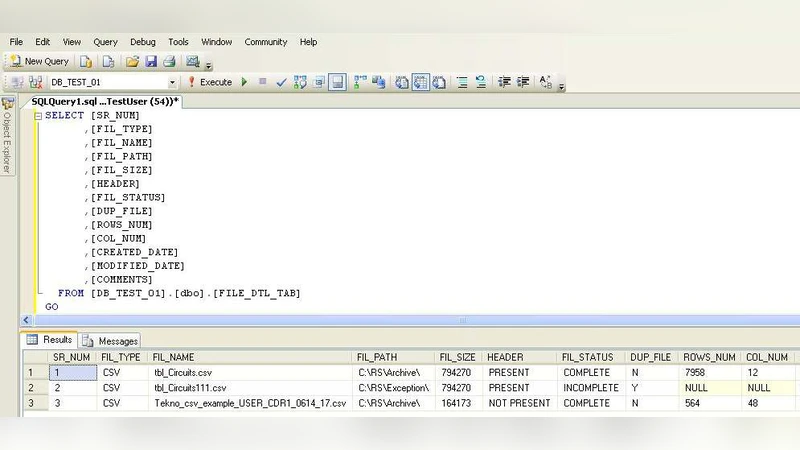

As the telecom industry undergoes large-scale growth, one of the major challenges in the domain is the analysis and processing of telecom transactional data, which are generated in large volumes by embedded-system communication controllers with various functions. This paper deals with the analysis of such raw data files, which are made up of sequences of tokens. It also describes how the files are parsed to extract the information that is finally stored in predefined database tables. The parser reads each file line by line and stores the tokens in the predefined database tables. The whole process is automated using the SSIS tools available in SQL Server. A log table is maintained at each step of the process, which enables tracking of each file for risk mitigation. The resulting process extracts, transforms, and loads the data.

💡 Research Summary

The paper addresses the growing challenge in the telecommunications sector of processing massive volumes of transactional data generated by embedded communication controllers. These raw data files consist of token sequences that must be parsed, transformed, and loaded into predefined relational database tables for downstream business applications. The authors propose a fully automated ETL solution built on Microsoft SQL Server Integration Services (SSIS) that reads the files line‑by‑line, tokenizes each line according to a configurable metadata schema, and maps the tokens to the appropriate database columns.

The architecture is divided into four logical layers. The first layer handles file acquisition and registration; new files are detected on a shared directory or FTP server, and their metadata (file name, size, timestamp) is recorded in a control table. The second layer performs parsing: SSIS’s Flat File Source reads each line, a Script Component or Derived Column transformation splits the line on the defined delimiter, and a Lookup transformation resolves token codes against reference tables. Because the token positions and meanings are stored in a separate metadata table, the parser can adapt to format changes without code modification.
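The metadata-driven parsing idea can be illustrated outside SSIS. The following is a minimal Python sketch, not the authors' package: the `TokenSpec` class, the `CDR_LAYOUT` rows, the column names, and the `|` delimiter are all hypothetical stand-ins for whatever the metadata table actually holds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenSpec:
    """One row of a (hypothetical) layout metadata table."""
    position: int   # zero-based index of the token within the line
    column: str     # name of the target database column
    cast: type      # Python type used to convert the raw token


# Assumed layout for illustration; in the described design these rows
# would live in a database table rather than in code.
CDR_LAYOUT = [
    TokenSpec(0, "caller", str),
    TokenSpec(1, "callee", str),
    TokenSpec(2, "duration_s", int),
]


def parse_line(line, layout, delimiter="|"):
    """Split one line on the delimiter and map tokens to columns."""
    tokens = line.rstrip("\r\n").split(delimiter)
    return {spec.column: spec.cast(tokens[spec.position]) for spec in layout}
```

Under these assumptions, `parse_line("555|777|42\n", CDR_LAYOUT)` yields a dictionary keyed by column name. Supporting a new file format would mean supplying a different list of `TokenSpec` rows, while `parse_line` stays unchanged, which is the property the summary attributes to the metadata table.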

The third layer implements transformation and loading. Tokens are normalized, duplicate detection is performed using a MERGE statement, and all operations occur within a transaction scope to guarantee atomicity. Errors such as malformed records, missing values, or type mismatches are routed to an error output and captured in a dedicated error table, while successful rows are inserted into the target business tables via an OLE DB Destination.
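The load-with-error-routing pattern can be sketched in Python with the standard-library `sqlite3` module. This is an illustration under stated assumptions rather than the SSIS data flow itself: the `calls` and `load_errors` tables, the three-token line format, and the helper names are hypothetical, and SQLite's `INSERT OR IGNORE` on a unique constraint stands in for the SQL Server MERGE statement.

```python
import sqlite3


def make_db():
    """Create an in-memory database with a target table and an error table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE calls (caller TEXT, callee TEXT, duration_s INTEGER, "
        "UNIQUE (caller, callee, duration_s))"
    )
    conn.execute("CREATE TABLE load_errors (raw_line TEXT, reason TEXT)")
    return conn


def load_rows(conn, raw_lines):
    """Load lines in one transaction; return (rows inserted, rows rejected)."""
    loaded = rejected = 0
    with conn:  # commits on success, rolls back if an exception escapes
        for raw in raw_lines:
            try:
                caller, callee, duration = raw.split("|")
                record = (caller, callee, int(duration))
            except ValueError as exc:
                # Wrong token count or a non-numeric duration: route the
                # line to the error table instead of aborting the load.
                conn.execute(
                    "INSERT INTO load_errors (raw_line, reason) VALUES (?, ?)",
                    (raw, str(exc)),
                )
                rejected += 1
                continue
            # Duplicates hit the UNIQUE constraint and are silently skipped.
            cur = conn.execute(
                "INSERT OR IGNORE INTO calls (caller, callee, duration_s) "
                "VALUES (?, ?, ?)",
                record,
            )
            loaded += cur.rowcount
    return loaded, rejected
```

For example, loading `["1|2|30", "1|2|30", "1|2|abc", "bad"]` into a fresh database inserts one row, skips the duplicate, and records two rejected lines in `load_errors`.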

The fourth layer provides monitoring and risk mitigation. After each step, SSIS updates a global log table with counts of processed, succeeded, and failed rows, timestamps, and error codes. This granular logging enables rapid pinpointing of problematic files or records, supports audit requirements, and facilitates automated alerts through SQL Server Agent jobs and email notifications. The entire workflow is orchestrated by a Foreach Loop Container that iterates over all pending files, and the process can be scheduled to run nightly or on demand.
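A per-step log table of this kind might look like the following Python sketch. The `etl_log` schema, its column names, and the helper functions are assumptions made for illustration; the summary does not specify the actual log table layout, and SQLite is used here only so the example is self-contained.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical log table; the real schema is not given in the source.
LOG_DDL = """
CREATE TABLE IF NOT EXISTS etl_log (
    file_name      TEXT NOT NULL,
    step           TEXT NOT NULL,
    processed_rows INTEGER NOT NULL,
    failed_rows    INTEGER NOT NULL,
    error_code     TEXT,
    logged_at      TEXT NOT NULL
)
"""


def log_step(conn, file_name, step, processed, failed, error_code=None):
    """Append one log row describing the outcome of a pipeline step."""
    conn.execute(
        "INSERT INTO etl_log VALUES (?, ?, ?, ?, ?, ?)",
        (file_name, step, processed, failed, error_code,
         datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()


def files_with_failures(conn):
    """Return the names of files that had at least one failed row."""
    rows = conn.execute(
        "SELECT DISTINCT file_name FROM etl_log "
        "WHERE failed_rows > 0 ORDER BY file_name"
    )
    return [name for (name,) in rows]
```

A query such as `files_with_failures` is the kind of lookup that would let an operator or a scheduled alert job pinpoint problematic files without rereading the raw data.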

Performance testing with a 10 GB sample log file demonstrated a roughly 4.5-fold reduction in processing time compared to a legacy manual script approach (approximately 10 minutes versus 45 minutes). Memory consumption remained low because SSIS processes data in a streaming fashion rather than loading the whole file into RAM. Further tests confirmed that adding new token types or altering file layouts required only updates to the metadata tables, not to the SSIS package itself.
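The memory benefit of streaming can be shown with a small Python sketch. It is only an analogy for how a buffered data flow keeps memory bounded, not a description of SSIS internals; the `iter_batches` helper and the batch size are hypothetical.

```python
from itertools import islice


def iter_batches(lines, batch_size):
    """Yield lists of at most batch_size lines from any iterable.

    Only one batch is held in memory at a time, so an open file object
    can be passed in without reading the whole file first.
    """
    iterator = iter(lines)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch
```

Because a Python file object iterates lazily over its lines, calling `iter_batches(open_file, 10_000)` keeps peak memory proportional to the batch size rather than to the file size.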

In conclusion, the authors deliver a robust, maintainable, and scalable solution for telecom data ingestion that reduces operational overhead, minimizes human error, and creates a reliable data foundation for analytics, reporting, and future AI‑driven services. Future work is suggested to extend the pipeline with real‑time streaming ingestion (e.g., using Azure Event Hubs), integration with cloud‑based data lakes, and the incorporation of machine‑learning models for anomaly detection during the ETL process.