Quantifying the influence of scientists and their publications: Distinguish prestige from popularity

The number of citations is a widely used metric for evaluating the scientific credit of papers, scientists and journals. However, a paper with fewer citations that come from prestigious scientists can be more influential than a paper with more citations. In this paper, we argue that who cites a paper matters more than how many citations it receives. Accordingly, we propose an interactive model on author-paper bipartite networks, together with an iterative algorithm, to obtain better rankings of scientists and their publications. The method has two main advantages: (i) it is parameter-free; (ii) it accounts for the relationship between the prestige of scientists and the quality of their publications. We ran experiments on real publication data in econophysics and applied the method to evaluate the influence of related scientific journals. Comparisons between the rankings produced by our method and by simple citation counts suggest that the method is effective at distinguishing prestige from popularity.

💡 Research Summary

The paper addresses a fundamental shortcoming of citation‑based metrics: they treat every citation as equal, ignoring who is doing the citing. The authors argue that a citation from a prestigious scientist should carry more weight than one from a lesser‑known researcher. They develop a parameter‑free algorithm, AP‑Rank, that ranks authors and papers simultaneously by exploiting the bidirectional relationships in an author‑paper bipartite network.

In the constructed directed bipartite graph, an edge from an author to a paper means that the author cites the paper, while an edge from a paper to an author indicates authorship. Two adjacency matrices encode these links: A (author‑to‑paper) for citations and B (paper‑to‑author) for authorship. The algorithm iteratively updates two score vectors: Q_s for authors (prestige) and Q_p for papers (quality).
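To make the two matrices concrete, here is a minimal Python sketch that builds A and B from a small, made-up set of records. The `papers` dictionary, the function name `build_matrices`, and the binary (0/1) edge weights are all assumptions for illustration; the summary does not say whether repeated citations by the same author are counted more than once. The sketch also skips an author's citations to their own papers, which is one possible reading of how self-citations might be excluded.

```python
import numpy as np

# Hypothetical toy data: paper id -> its authors and the papers it cites.
papers = {
    0: {"authors": [0, 1], "cites": []},
    1: {"authors": [1], "cites": [0]},
    2: {"authors": [2], "cites": [0, 1]},
}

def build_matrices(papers, n_authors):
    """Return (A, B): A is authors x papers (citation links),
    B is papers x authors (authorship links)."""
    n_papers = len(papers)
    B = np.zeros((n_papers, n_authors))
    A = np.zeros((n_authors, n_papers))
    # Authorship: edge from paper p to each of its authors.
    for p, rec in papers.items():
        for a in rec["authors"]:
            B[p, a] = 1.0
    # Citation: an author cites paper q if one of their papers cites q.
    for p, rec in papers.items():
        for q in rec["cites"]:
            for a in rec["authors"]:
                if B[q, a] == 0:  # assumption: ignore citations to one's own papers
                    A[a, q] = 1.0
    return A, B

A, B = build_matrices(papers, n_authors=3)
```

In this toy case, paper 1 cites paper 0, but its only author also co-wrote paper 0, so that link is dropped; only author 2 ends up with outgoing citation edges.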

The update rules are:

- Paper‑to‑author diffusion (conservative) – each paper’s score is divided equally among its co‑authors, giving each author a share proportional to Q_p/k_out, where k_out is the number of authors. This reflects the idea that a high‑quality paper boosts the prestige of all its contributors.

- Author‑to‑paper voting (non‑conservative) – each author “votes” for every paper they cite, adding their current prestige Q_s to that paper’s score. The paper’s new raw score is therefore 1 (a base unit) plus the sum of all voting scores from its citers.

- Normalization – after each iteration the paper scores are scaled so that their total sum equals the initial total (the number of papers N). This prevents uncontrolled growth.

- Convergence – the process repeats until the L2 norm of the change in paper scores falls below δ = 10⁻⁴.
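The four steps above can be sketched in Python as follows. This is an illustrative reading of the summary, not the authors' reference implementation: the function name `ap_rank`, the `max_iter` safeguard, the initial paper scores of 1, and the toy matrices are assumptions, and every paper is assumed to have at least one author so that `k_out` is never zero.

```python
import numpy as np

def ap_rank(A, B, delta=1e-4, max_iter=1000):
    """A: authors x papers citation matrix; B: papers x authors authorship matrix.
    Returns (q_s, q_p): author prestige and paper quality scores."""
    n_papers = B.shape[0]
    k_out = B.sum(axis=1)            # number of authors of each paper
    q_p = np.ones(n_papers)          # assumed start: one unit per paper
    for _ in range(max_iter):        # max_iter is a safeguard, not part of the method
        # Paper-to-author diffusion (conservative): split each score among co-authors.
        q_s = B.T @ (q_p / k_out)
        # Author-to-paper voting (non-conservative): base unit plus citers' prestige.
        raw = 1.0 + A.T @ q_s
        # Normalization: rescale so the paper scores sum to the number of papers.
        new_q_p = raw * n_papers / raw.sum()
        # Convergence: stop when the L2 change in paper scores drops below delta.
        converged = np.linalg.norm(new_q_p - q_p) < delta
        q_p = new_q_p
        if converged:
            break
    q_s = B.T @ (q_p / k_out)        # author scores consistent with the final q_p
    return q_s, q_p

# Toy example (hypothetical): 3 authors, 3 papers; only author 2 cites papers 0 and 1.
A = np.array([[0, 0, 0], [0, 0, 0], [1, 1, 0]], dtype=float)
B = np.array([[1, 1, 0], [0, 1, 0], [0, 0, 1]], dtype=float)
q_s, q_p = ap_rank(A, B)
```

Because the diffusion step only redistributes paper scores, the author scores sum to the same total as the paper scores, while the voting step can increase the total before normalization brings it back to N.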

Because the diffusion from paper to author conserves total mass while the voting from author to paper does not, the system naturally amplifies the influence of prestigious citers without requiring any damping factor or external parameters. The algorithm can be started from either side (authors or papers) and converges to the same fixed point.

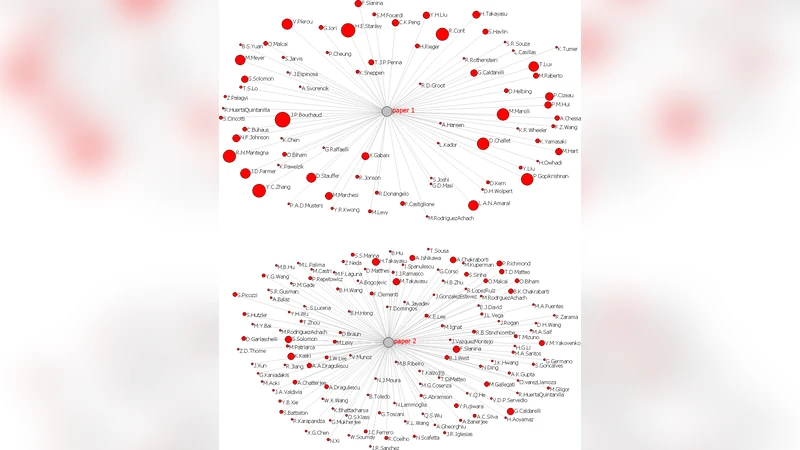

The authors test the method on a dataset from econophysics covering 1995‑2010: 2,012 papers published in 78 journals (including arXiv) and 1,990 distinct scientists. Self‑citations are excluded, and only citations among the selected papers are considered, which means the observed in‑degree is a lower bound on the true citation count.

Results for authors:

- Kendall’s τ between AP‑Rank and simple citation count (CC‑Rank) is 0.784, indicating strong overall agreement but with notable outliers.

- Some scientists (e.g., J. D. Farmer) have modest CC ranks but high AP ranks because most of their citations come from highly ranked peers.

- A co‑authorship network visualisation shows a clear community structure centred on H. E. Stanley, and the relationship between an author’s AP score and the average score of their co‑authors reveals potential “leader vs. follower” roles.

Results for papers:

- Kendall’s τ between AP‑Rank and CC‑Rank is 0.644, lower than for authors, reflecting greater sensitivity to citation source quality.

- Papers published in high‑impact journals (e.g., Nature, EPL) may rank highly in AP‑Rank despite modest raw citation numbers, because they are cited by prestigious authors.

- Conversely, papers that accumulate many citations from low‑prestige authors are downgraded relative to their CC rank.
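The Kendall's τ values quoted above measure how often two rankings order a pair of items the same way. As a rough illustration, the sketch below computes the simplest variant (τ-a, with no tie correction) in plain Python. Which variant the authors used is not stated in the summary, and the scores below are invented for the example.

```python
from itertools import combinations

def kendall_tau_a(x, y):
    """Kendall's tau-a: (concordant - discordant) / total number of pairs.
    Tied pairs count as neither concordant nor discordant."""
    n = len(x)
    concordant = discordant = 0
    for i, j in combinations(range(n), 2):
        s = (x[i] - x[j]) * (y[i] - y[j])
        if s > 0:
            concordant += 1      # both rankings order the pair the same way
        elif s < 0:
            discordant += 1      # the rankings disagree on this pair
    return (concordant - discordant) / (n * (n - 1) / 2)

# Hypothetical scores for four items under the two ranking schemes.
ap_scores = [3.1, 2.4, 1.9, 0.7]
citation_counts = [40, 55, 12, 5]
tau = kendall_tau_a(ap_scores, citation_counts)  # 5 concordant, 1 discordant pair
```

A value of 1 means the two rankings agree on every pair and -1 means they are exact reverses, so values such as 0.784 and 0.644 indicate substantial but incomplete agreement.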

The authors highlight several advantages: (i) the method is completely parameter‑free, (ii) it jointly evaluates authors and papers, capturing their mutual reinforcement, and (iii) it can identify nuanced patterns such as supervisory relationships within research groups. Limitations include reliance on a domain‑specific dataset, omission of citations from papers outside the collection, and the exclusion of self‑citations, which may affect absolute scores.

Future work is suggested in three directions: extending the approach to multiple disciplines, incorporating temporal decay to give recent citations more weight, and developing statistical confidence intervals for the rankings.

In summary, the paper introduces a novel, mathematically simple yet conceptually powerful framework that distinguishes “prestige” from “popularity” in scholarly impact assessment. By weighting citations according to the citer’s own influence, AP‑Rank offers a more nuanced picture of scientific influence, potentially informing funding decisions, hiring, and journal evaluation practices.
