Understanding Sampling Style Adversarial Search Methods

UCT has recently emerged as an exciting new adversarial reasoning technique based on cleverly balancing exploration and exploitation in a Monte-Carlo sampling setting. It has been particularly successful in the game of Go but the reasons for its success are not well understood and attempts to replicate its success in other domains such as Chess have failed. We provide an in-depth analysis of the potential of UCT in domain-independent settings, in cases where heuristic values are available, and the effect of enhancing random playouts to more informed playouts between two weak minimax players. To provide further insights, we develop synthetic game tree instances and discuss interesting properties of UCT, both empirically and analytically.

💡 Research Summary

The paper provides a comprehensive investigation of Upper Confidence bounds applied to Trees (UCT), a Monte‑Carlo Tree Search (MCTS) algorithm that has achieved remarkable success in Go but has struggled to replicate that performance in other adversarial domains such as Chess. The authors first review the core mechanics of UCT: each node stores the number of visits and the average reward, and the selection of the next node to explore is governed by the Upper Confidence Bound (UCB) formula, which balances exploration and exploitation through a tunable constant C. They then distinguish three experimental settings: (1) a purely domain‑independent scenario where playouts are random; (2) a scenario where heuristic evaluations are available; and (3) a hybrid scenario where random playouts are replaced by “informed playouts” consisting of two weak minimax agents playing against each other with a shallow search depth and a simple evaluation function.
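The selection rule described above can be sketched as follows. This is a minimal illustration of one common form of UCB1, not the authors' implementation: the function names, the tuple layout for child statistics, and the default value of C are assumptions made for the example.

```python
import math

def ucb1_score(child_visits, child_mean, parent_visits, c=math.sqrt(2)):
    """Mean reward plus an exploration bonus that shrinks as the child is visited.

    Assumed form: mean + c * sqrt(ln(parent_visits) / child_visits).
    """
    if child_visits == 0:
        return math.inf  # unvisited children are always tried first
    return child_mean + c * math.sqrt(math.log(parent_visits) / child_visits)

def select_child(children, parent_visits, c=math.sqrt(2)):
    """children: list of (visits, mean_reward) tuples; returns the index to explore."""
    scores = [ucb1_score(n, q, parent_visits, c) for n, q in children]
    return max(range(len(children)), key=scores.__getitem__)
```

With C set to 0 the rule becomes purely greedy on the average reward; larger values of C direct more visits toward rarely sampled children.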

In the domain‑independent case, the authors confirm that random playouts provide a useful signal in games with a high branching factor such as Go, but amount to little more than noise in deep, highly tactical games like Chess. To address this, they introduce informed playouts and show empirically that these raise win rates from roughly 12 % to 27 % in synthetic trees where the leaf win probability is close to 0.5. Informed playouts also let UCT reach deeper levels of the tree within the same computational budget, indicating that even a modest amount of domain knowledge in the simulation phase can substantially improve performance.
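To make the difference between random and informed playouts concrete, the sketch below contrasts the two on a toy subtraction game (remove 1 to 3 stones; taking the last stone wins). The game, the function names, and the placeholder evaluation that returns 0 at the depth limit are all assumptions chosen for brevity; the paper's own domains and evaluation functions are not reproduced here.

```python
import random

MOVES = (1, 2, 3)  # toy game (assumption): remove 1-3 stones, last stone wins

def legal_moves(stones):
    return [m for m in MOVES if m <= stones]

def value(stones, depth):
    """Depth-limited negamax value for the player to move."""
    if stones == 0:
        return -1.0  # the previous player took the last stone and won
    if depth == 0:
        return 0.0   # placeholder static evaluation: outcome unknown
    return max(-value(stones - m, depth - 1) for m in legal_moves(stones))

def weak_move(stones, depth, rng):
    """Best move under a shallow search (depth >= 1); ties are broken at random."""
    scored = [(-value(stones - m, depth - 1), m) for m in legal_moves(stones)]
    best = max(s for s, _ in scored)
    return rng.choice([m for s, m in scored if s == best])

def playout(stones, choose):
    """Play to the end; return 1 if the player who moves first wins, else 0."""
    player = 0
    while True:
        stones -= choose(stones)
        if stones == 0:
            return 1 if player == 0 else 0
        player = 1 - player

def random_playout(stones, rng):
    return playout(stones, lambda s: rng.choice(legal_moves(s)))

def informed_playout(stones, depth, rng):
    return playout(stones, lambda s: weak_move(s, depth, rng))
```

From a 4‑stone position, which is lost for the player to move, an informed playout with any depth of at least 1 always reports a loss, because the second player takes the remaining stones immediately. A random playout can still report a win whenever the second player fails to finish the game, which is the kind of noise the informed variant removes.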

When heuristic evaluations are available, two integration strategies are examined. The first injects the heuristic directly into the UCB reward term, effectively biasing the selection policy toward nodes with high heuristic scores. This approach accelerates convergence when the heuristic is accurate but can cause premature focus on suboptimal branches if the heuristic is noisy. The second strategy uses the heuristic as an initial value for each node’s average reward, preserving the unbiased nature of the UCB selection while giving the algorithm a better starting point. Experiments show that the direct‑integration method outperforms the initialization method when the heuristic quality is high, whereas the latter is more robust to imperfect heuristics.
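The two integration strategies can be contrasted with a small sketch. The summary does not give the exact formulas, so the details here are assumptions: the direct strategy is modeled as a fixed‑weight blend of the heuristic `h` with the sampled mean, and the initialization strategy as seeding a node with `prior_visits` virtual samples of value `h`. The names `NodeStats`, `score_direct`, and `init_with_prior` are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class NodeStats:
    visits: int = 0
    total_reward: float = 0.0

    @property
    def mean(self):
        return self.total_reward / self.visits if self.visits else 0.0

    def update(self, reward):
        self.visits += 1
        self.total_reward += reward

def exploration(parent_visits, visits, c):
    return c * math.sqrt(math.log(parent_visits) / visits)

def score_direct(stats, parent_visits, h, weight=0.5, c=1.0):
    """Strategy 1 (assumed form): blend h into the reward term at every selection.

    Requires stats.visits > 0.
    """
    blended = (1 - weight) * stats.mean + weight * h
    return blended + exploration(parent_visits, stats.visits, c)

def init_with_prior(h, prior_visits=5):
    """Strategy 2 (assumed form): start as if h had been observed prior_visits times."""
    return NodeStats(visits=prior_visits, total_reward=h * prior_visits)

def score_standard(stats, parent_visits, c=1.0):
    """Plain UCB score, used unchanged with prior-initialized nodes."""
    return stats.mean + exploration(parent_visits, stats.visits, c)
```

Under these assumptions, a misleading heuristic of 0.9 on a node whose playouts all return 0 is washed out by sampling in the initialization variant (the mean falls to 4.5/105 after 100 playouts), while the blended reward term stays at 0.45 regardless of how many playouts are run. This is consistent with the robustness difference reported above.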

To gain theoretical insight, the authors construct synthetic game trees parameterized by depth d, branching factor b, and leaf win probability p. They derive asymptotic bounds on the number of simulations required for UCT to converge to the optimal value. The analysis reveals a sharp phase transition: when p is far from 0.5 (e.g., p ≥ 0.8), UCT converges quickly, at a cost that grows modestly with b and d; as p approaches 0.5, the number of required simulations grows toward O(b^d), essentially matching exhaustive search. This explains why UCT excels in games whose leaf outcomes carry a clear statistical bias but becomes inefficient in balanced games, where the signal‑to‑noise ratio of the playouts is low.
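A synthetic tree of this kind can be sketched as below. The construction is an assumption for illustration: a uniform tree with independent leaves, each a win for the maximizing player with probability p. The authors' exact generator may differ, and the code does not reproduce their convergence bounds; it only builds such trees and computes the exact minimax value and the probability that the root is a win.

```python
import random

def random_leaf_tree(depth, branching, p, rng):
    """Nested lists; each leaf is 1 (win for MAX) with probability p, else 0."""
    if depth == 0:
        return 1 if rng.random() < p else 0
    return [random_leaf_tree(depth - 1, branching, p, rng) for _ in range(branching)]

def minimax(tree, maximizing=True):
    """Exact game value of a nested-list tree with alternating MAX/MIN levels."""
    if isinstance(tree, int):
        return tree
    values = [minimax(child, not maximizing) for child in tree]
    return max(values) if maximizing else min(values)

def root_win_probability(depth, branching, p, maximizing=True):
    """Probability that the root's minimax value is 1 under independent leaves."""
    if depth == 0:
        return p
    q = root_win_probability(depth - 1, branching, p, not maximizing)
    if maximizing:
        return 1 - (1 - q) ** branching  # MAX wins if any child is a win
    return q ** branching                # MIN node is a win only if all children are
```

For example, `minimax(random_leaf_tree(4, 3, 0.5, random.Random(1)))` returns the true value of one sampled tree, which can serve as ground truth when measuring how many simulations a UCT implementation needs to identify it.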

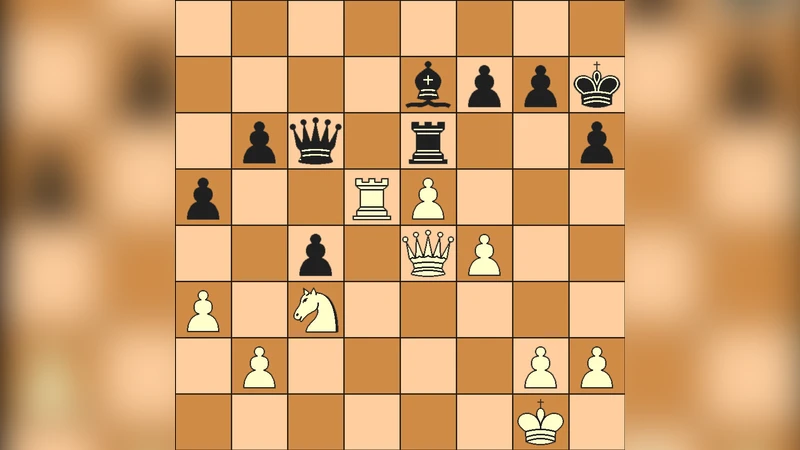

The paper then focuses on Chess as a case study. Strong static evaluation functions exist for Chess, and the game has a deep strategic structure, which makes random playouts virtually meaningless. Even the informed playouts, built from weak minimax agents, fail to capture the precise tactical sequences that decide Chess outcomes. Consequently, UCT’s exploration strategy, which favors breadth over depth, is ill‑suited to a domain where deep, precise search is paramount. The authors argue that without sophisticated simulation policies, such as strong engine evaluations or deep selective search, UCT cannot compete with traditional alpha‑beta pruning in Chess.

In conclusion, the study identifies three critical factors that determine UCT’s success: (1) the quality and relevance of the simulation (playout) policy, (2) the availability and integration method of heuristic evaluations, and (3) the statistical characteristics of the underlying game tree (branching factor, depth, and leaf bias). For domain‑independent applications, modestly informed playouts are essential; for domains with strong heuristics, careful integration into the selection policy can yield substantial gains. The authors suggest future work on dynamic adjustment of the exploration constant C, more advanced informed playouts (e.g., using neural‑network policies), and hybrid algorithms that combine UCT’s statistical strengths with alpha‑beta’s depth‑first pruning. Such directions could broaden the applicability of sampling‑style adversarial search beyond its current stronghold in Go.