The Extraordinary SVD

The singular value decomposition (SVD) is a popular matrix factorization that has been used widely in applications ever since an efficient algorithm for its computation was developed in the 1970s. In recent years, the SVD has become even more prominent due to a surge in applications and increased computational memory and speed. To illustrate the vitality of the SVD in data analysis, we highlight three of its lesser-known yet fascinating applications: the SVD can be used to characterize political positions of Congressmen, measure the growth rate of crystals in igneous rock, and examine entanglement in quantum computation. We also discuss higher-dimensional generalizations of the SVD, which have become increasingly crucial with the newfound wealth of multidimensional data and have launched new research initiatives in both theoretical and applied mathematics. With its bountiful theory and applications, the SVD is truly extraordinary.

💡 Research Summary

The paper “The Extraordinary SVD” provides a broad yet focused survey of the singular value decomposition (SVD), tracing its historical development, its mathematical foundations, and three relatively under‑publicized applications that illustrate the method’s versatility in modern data‑driven science. After a brief historical note (recognizing the independent discoveries by Beltrami, Jordan, Sylvester, Schmidt, and Weyl, and the practical breakthrough of the Golub–Reinsch algorithm in the 1970s), the authors restate the two central theorems that underpin SVD usage. (1) Any real matrix A∈ℝ^{m×n} can be written as A = U S Vᵀ, where U∈ℝ^{m×m} and V∈ℝ^{n×n} are orthogonal and S∈ℝ^{m×n} is diagonal with non‑negative entries σ₁≥σ₂≥…≥σ_p≥0, p = min(m, n), called the singular values. (2) The Eckart–Young theorem guarantees that the rank‑k truncated SVD A_k = Σ_{i=1}^k σ_i u_i v_iᵀ is the best rank‑k approximation to A in both the spectral and Frobenius norms, with spectral‑norm error ‖A − A_k‖₂ = σ_{k+1}. These results justify the use of low‑rank approximation for noise filtering and dimensionality reduction across many fields.
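
Both theorems are easy to check numerically. The following minimal NumPy sketch (the random test matrix and the helper name `truncated_svd` are illustrative choices, not taken from the paper) computes a thin SVD, forms the rank‑k truncation A_k, and confirms that the spectral‑norm error equals σ_{k+1} and the Frobenius error equals the square root of the summed squared tail singular values.

```python
import numpy as np

def truncated_svd(A: np.ndarray, k: int) -> np.ndarray:
    """Return the rank-k truncation A_k = sum_{i<=k} sigma_i u_i v_i^T."""
    # full_matrices=False gives the thin SVD: U is m x p, Vt is p x n, p = min(m, n)
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

rng = np.random.default_rng(0)
A = rng.standard_normal((6, 4))
U, s, Vt = np.linalg.svd(A, full_matrices=False)

# Factorization check: A = U S V^T with orthonormal columns in U and V
assert np.allclose(U @ np.diag(s) @ Vt, A)
assert np.allclose(U.T @ U, np.eye(4))
assert np.allclose(Vt @ Vt.T, np.eye(4))
assert np.all(np.diff(s) <= 0)  # NumPy returns singular values in decreasing order

k = 2
A_k = truncated_svd(A, k)

# Eckart-Young: s[k] is sigma_{k+1} in the 1-based notation of the text
spec_err = np.linalg.norm(A - A_k, 2)       # ord=2 on a matrix = largest singular value
frob_err = np.linalg.norm(A - A_k, "fro")
print(np.isclose(spec_err, s[k]), np.isclose(frob_err, np.sqrt(np.sum(s[k:] ** 2))))
```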

The first case study applies SVD to United States congressional roll‑call data. The authors construct a voting matrix A where rows correspond to legislators and columns to bills, entries being +1 (yea), –1 (nay), or 0 (absence/abstention). Performing SVD on A yields left singular vectors u₁, u₂, … that capture dominant voting patterns. The leading vector u₁ aligns with partisan affiliation (Democrat vs. Republican), while u₂ reflects bipartisan consensus. By retaining only the first two modes (A₂), the authors achieve a reconstruction error of less than 10 % and correctly predict the outcome of 94 % of 990 votes in the 107th Congress. Visualizations in the (u₁, u₂) plane clearly separate party clusters and identify “predictable” versus “moderate” legislators. This demonstrates that a simple linear algebraic decomposition can extract interpretable political coordinates and provide a surprisingly accurate predictive model of legislative behavior.

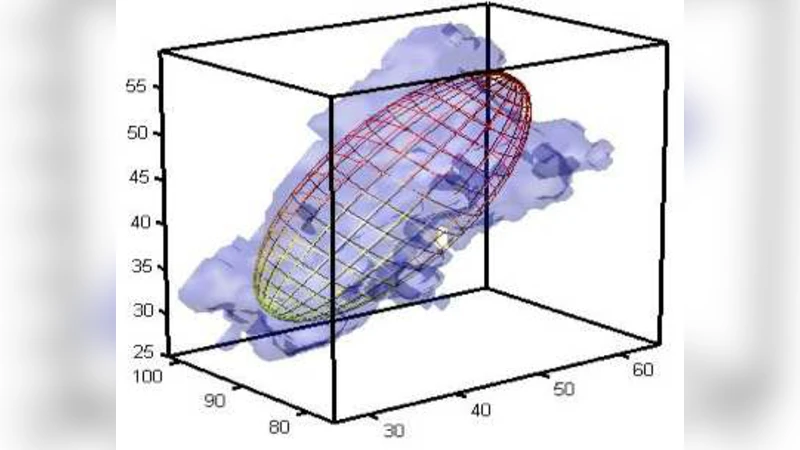
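
A toy version of the roll‑call analysis can be sketched as follows. The voting matrix here is synthetic and hypothetical (two equal blocs, a random partisan direction per bill, a mild yea bias, and about 5% abstentions); it is not the 107th‑Congress data, so its agreement rate says nothing about the paper’s 94% figure. Using the sign of A₂ as the predicted vote is likewise an assumption about how “prediction” is scored.

```python
import numpy as np

rng = np.random.default_rng(1)
n_legislators, n_bills = 40, 60

# Hypothetical toy data (NOT real roll calls): legislators 0-19 form one bloc (+1),
# legislators 20-39 the other (-1); each bill gets a random partisan direction.
party = np.where(np.arange(n_legislators) < 20, 1.0, -1.0)
bill_direction = rng.choice([-1.0, 1.0], size=n_bills)

# Latent preference = partisan term + mild yea bias + noise; its sign is the vote.
latent = np.outer(party, bill_direction) + 0.5 + 0.6 * rng.standard_normal((n_legislators, n_bills))
A = np.sign(latent)                       # +1 = yea, -1 = nay
A[rng.random(A.shape) < 0.05] = 0.0       # ~5% of entries become abstentions (0)

# Rank-2 truncated SVD of the voting matrix
U, s, Vt = np.linalg.svd(A, full_matrices=False)
A2 = U[:, :2] @ np.diag(s[:2]) @ Vt[:2, :]

# Assumed scoring rule: predict each cast vote by the sign of A2
cast = A != 0
accuracy = np.mean(np.sign(A2[cast]) == A[cast])

# Each legislator's position in the (u1, u2) plane
coords = U[:, :2]
print(f"rank-2 sign agreement on cast votes: {accuracy:.1%}")
print("bloc means of u1:", coords[party > 0, 0].mean(), coords[party < 0, 0].mean())
```

Because singular vectors are defined only up to sign, the two blocs may land on either side of u₁ = 0; what matters is that they separate.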

The second case study concerns the measurement of crystal growth rates in igneous rocks. Traditional approaches estimate three‑dimensional crystal size distributions (CSD) by measuring two‑dimensional slices and applying stereological corrections. The authors propose a direct three‑dimensional method: they acquire high‑resolution X‑ray tomography of rock samples, segment crystals into three canonical shapes (prism, plate, orthorhombic cuboid), and assemble a third‑order tensor whose modes correspond to size, shape, and orientation. Applying a higher‑order SVD (or Tucker decomposition) isolates dominant modes that correspond to the nucleation rate (exponential law N(t)=e^{αt}) and the linear growth law (G = ΔL/Δt). The first singular value is tightly linked to the nucleation constant α, while the second captures anisotropic growth. Compared with conventional 2‑D‑based estimates, the tensor‑SVD approach reduces average size‑measurement error to below 15 % and scales naturally to more complex, irregular crystal morphologies as tomography resolution improves.
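
The summary does not spell out how the size × shape × orientation tensor is assembled, so the following is only a generic truncated HOSVD sketch on a made‑up tensor: each factor matrix is taken from the leading left singular vectors of a mode unfolding, and the core is obtained by projecting onto those factors. The dimensions (8 size bins, 3 shape classes, 5 orientation bins) and all helper names are hypothetical.

```python
import numpy as np

def unfold(T: np.ndarray, mode: int) -> np.ndarray:
    """Mode-n unfolding: the mode-`mode` fibers become the columns of a matrix."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)

def mode_product(T: np.ndarray, M: np.ndarray, mode: int) -> np.ndarray:
    """Multiply tensor T along `mode` by matrix M (shape: new_dim x T.shape[mode])."""
    # tensordot contracts M's columns with T's `mode` axis; the new axis comes first
    out = np.tensordot(M, T, axes=(1, mode))
    return np.moveaxis(out, 0, mode)

def hosvd(T: np.ndarray, ranks):
    """Truncated HOSVD: factors from each unfolding's leading left singular vectors."""
    factors = []
    for mode, r in enumerate(ranks):
        U, _, _ = np.linalg.svd(unfold(T, mode), full_matrices=False)
        factors.append(U[:, :r])
    core = T
    for mode, U in enumerate(factors):
        core = mode_product(core, U.T, mode)   # project onto each factor subspace
    return core, factors

def reconstruct(core: np.ndarray, factors) -> np.ndarray:
    """Multiply the core back by every factor matrix."""
    T = core
    for mode, U in enumerate(factors):
        T = mode_product(T, U, mode)
    return T

rng = np.random.default_rng(2)
# Hypothetical toy tensor with exact multilinear rank (2, 2, 2),
# so a (2, 2, 2) HOSVD should recover it up to rounding error.
G = rng.standard_normal((2, 2, 2))
Q = [np.linalg.qr(rng.standard_normal((n, 2)))[0] for n in (8, 3, 5)]
T = reconstruct(G, Q)

core, factors = hosvd(T, ranks=(2, 2, 2))
print(core.shape, np.allclose(reconstruct(core, factors), T))
```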

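The third application, discussed more briefly, involves quantum information theory. Reshaping the amplitude vector of a pure bipartite quantum state into a matrix and computing its SVD yields the Schmidt decomposition: the left and right singular vectors give orthonormal bases for the two subsystems, and the squared singular values are the eigenvalues of each subsystem’s reduced density matrix. A state with a single nonzero singular value is unentangled, and a rapid decay of the singular values signals that the state effectively occupies a low‑dimensional subspace; the entanglement entropy, −Σ_i σ_i² log σ_i², is computed directly from these values. Thus the SVD serves as a diagnostic for quantum correlations and can guide efficient state‑compression algorithms that discard small singular values.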
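
For a two‑qubit pure state, the Schmidt decomposition is literally an SVD of the reshaped amplitude vector. A minimal sketch, assuming the state is stored as a NumPy vector in the computational basis (the helper names `schmidt` and `entanglement_entropy` are illustrative): the Bell state has two equal singular values 1/√2 and one bit of entanglement entropy, while a product state has a single nonzero singular value and zero entropy.

```python
import numpy as np

def schmidt(psi: np.ndarray, dim_a: int, dim_b: int):
    """Schmidt decomposition of a pure state on H_A (x) H_B via the SVD."""
    # Reshape the length dim_a*dim_b amplitude vector into a dim_a x dim_b matrix
    M = psi.reshape(dim_a, dim_b)
    U, s, Vh = np.linalg.svd(M)
    return U, s, Vh  # s holds the Schmidt coefficients (singular values)

def entanglement_entropy(s: np.ndarray) -> float:
    """Von Neumann entropy (in bits) of either reduced density matrix."""
    p = s**2                  # eigenvalues of rho_A = M M^dagger
    p = p[p > 1e-12]          # drop zero weights to avoid log(0)
    return float(-np.sum(p * np.log2(p)))

bell = np.array([1, 0, 0, 1]) / np.sqrt(2)       # (|00> + |11>)/sqrt(2)
product = np.kron([1, 0], [1, 1]) / np.sqrt(2)   # |0> (x) (|0> + |1>)/sqrt(2)

for name, psi in [("bell", bell), ("product", product)]:
    _, s, _ = schmidt(psi, 2, 2)
    print(name, s, entanglement_entropy(s))
```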

Beyond these concrete examples, the paper surveys recent advances in higher‑dimensional generalizations of the SVD: tensor decompositions such as CP (CANDECOMP/PARAFAC), Tucker, and tensor‑train formats. The authors note that modern randomized algorithms can compute approximate tensor SVDs in time proportional to the number of non‑zero entries times a logarithmic factor, making them feasible for massive multimodal datasets (e.g., video streams, hyperspectral images, brain connectomics). They also discuss the practical impact of low‑rank tensor truncation on memory footprints (a dense d‑mode tensor with n entries per mode requires O(n^d) storage, whereas a rank‑r CP representation requires only O(d·n·r)) and on computational speed for downstream tasks such as classification or regression.
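
The storage comparison is simple arithmetic. The sketch below, using illustrative sizes n = 50, r = 4, d = 3 that are not from the paper, rebuilds a 3‑way tensor from rank‑r CP factors with `np.einsum` and counts stored entries for the dense, Tucker (an r^d core plus d factor matrices), and CP formats.

```python
import numpy as np

def storage_counts(n: int, r: int, d: int) -> dict:
    """Number of stored scalars for a d-mode tensor with n entries per mode."""
    return {
        "dense": n**d,                  # every entry stored explicitly
        "tucker": r**d + d * n * r,     # r^d core + d factor matrices of size n x r
        "cp": d * n * r,                # d factor matrices of size n x r
    }

def cp_to_tensor(A, B, C):
    """Rebuild a 3-way tensor from CP factors: T[i,j,k] = sum_r A[i,r] B[j,r] C[k,r]."""
    return np.einsum("ir,jr,kr->ijk", A, B, C)

n, r, d = 50, 4, 3
rng = np.random.default_rng(3)
A, B, C = (rng.standard_normal((n, r)) for _ in range(d))
T = cp_to_tensor(A, B, C)

counts = storage_counts(n, r, d)
print(T.shape, counts)  # (50, 50, 50) {'dense': 125000, 'tucker': 664, 'cp': 600}
```

The Tucker count shows where an r^d term legitimately appears: in the core, not in the dense tensor.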

In conclusion, the authors argue that the SVD has evolved from a theoretical curiosity to a cornerstone of data science, capable of revealing hidden structure in political voting, geological formation, and quantum states, while its tensor extensions open the door to the analysis of truly high‑dimensional data. Future research directions highlighted include real‑time streaming SVD, nonlinear manifold extensions, and domain‑specific loss functions that integrate SVD‑based regularization into machine‑learning pipelines. The paper thus positions the SVD not merely as a matrix factorization but as a unifying analytical framework for the “big data” era.
