Time series analysis of the response of measurement instruments

This work explores the significance of treating a set of measurements as a time series. Time Series Analysis (TSA) techniques, part of the Exploratory Data Analysis (EDA) approach, can provide considerable insight into the stochastic correlations that the measurement system induces on the outcome of an experiment, and can provide criteria that delimit where the classical variance may be used in metrology. Specifically, Lag Plots, the Autocorrelation Function, the Power Spectral Density and the Allan Variance are used to analyze series of sequential measurements collected at equal time intervals from an electromechanical transducer. These techniques are used in conjunction with power law models of stochastic noise to characterize the time or frequency regimes in which the usually assumed white noise model adequately describes the measurement system response. Outside those regimes, the detection of colored noise, usually referred to as flicker noise and expected to appear in almost all electronic devices, shows that the white noise model is no longer accurate and yields a lower threshold of measurement uncertainty for this particular system.

💡 Research Summary

The paper investigates the advantages of treating a series of measurements from an electromechanical transducer as a time series rather than a collection of independent observations. Traditional metrology often relies on the classical variance, implicitly assuming that measurement noise is white (i.e., uncorrelated, with a flat spectrum). However, most electronic devices exhibit colored noise, especially 1/f flicker noise, which introduces temporal correlations that invalidate the white‑noise assumption over certain time or frequency scales.

To expose these hidden correlations, the authors apply four exploratory data‑analysis tools that are standard in time‑series analysis: Lag Plots, the Autocorrelation Function (ACF), Power Spectral Density (PSD), and Allan Variance. The data set consists of tens of thousands of sequential readings taken at equal intervals. After basic preprocessing (outlier removal, stationarity checks, differencing where necessary), each technique is used to identify the dominant noise regime.

Lag Plots provide a quick visual cue: a random scatter indicates white noise, while structured patterns suggest correlation. The ACF quantifies this observation, revealing significant positive correlation for short lags (1–5 samples) that decays to zero for larger lags. PSD analysis, performed via FFT and displayed on a log‑log scale, uncovers a clear power‑law behavior. At low frequencies (≈0.01–0.1 Hz) the slope is close to –1, characteristic of flicker noise, whereas at higher frequencies (>10 Hz) the slope flattens to zero, confirming a white‑noise regime.
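
The lag-plot and ACF check described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function names, the AR(1) stand-in for a correlated signal, and the ±1.96/√N band (the usual large-sample approximation for uncorrelated data) are assumptions introduced here. The lag-1 ACF value is the correlation one would see in a lag-1 lag plot.

```python
import numpy as np

def sample_acf(x, max_lag):
    """Biased sample autocorrelation for lags 0..max_lag (lag 0 equals 1)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()                      # remove the mean before correlating
    n = x.size
    denom = np.dot(x, x)                  # lag-0 sum of squares
    return np.array([np.dot(x[: n - k], x[k:]) / denom
                     for k in range(max_lag + 1)])

def white_noise_bound(n, z=1.96):
    """Approximate 95 % band for the ACF of n uncorrelated samples."""
    return z / np.sqrt(n)

rng = np.random.default_rng(0)
white = rng.normal(size=20_000)           # synthetic white noise

# Hypothetical correlated series: AR(1) with coefficient 0.6.
# It only stands in for "short-lag correlation"; it is not the transducer data.
ar1 = np.empty_like(white)
ar1[0] = white[0]
for i in range(1, white.size):
    ar1[i] = 0.6 * ar1[i - 1] + white[i]

bound = white_noise_bound(white.size)
acf_white = sample_acf(white, 5)
acf_ar1 = sample_acf(ar1, 5)

print("95 % band:", bound)
print("white ACF, lags 1-5:", acf_white[1:])
print("AR(1) ACF, lags 1-5:", acf_ar1[1:])
```

ACF values that stay inside the band at all lags are consistent with white noise; values well outside it at short lags indicate the kind of correlation the summary reports.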

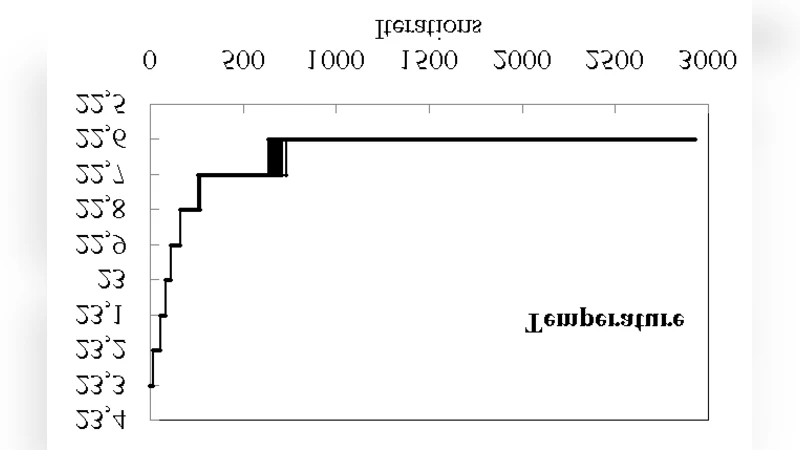
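
The log-log slope estimate described for the PSD can be illustrated on synthetic data. The sketch below assumes a hypothetical sampling rate of 100 Hz, a raw (unaveraged) periodogram, and test signals made by spectrally shaping white noise; none of these choices come from the paper, and the fitting bands are illustrative only.

```python
import numpy as np

def periodogram(x, fs):
    """One-sided raw periodogram (power per Hz) of a mean-removed series."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = x.size
    spec = np.fft.rfft(x)
    psd = np.abs(spec) ** 2 / (fs * n)
    # Fold negative frequencies into the one-sided estimate
    # (the Nyquist bin of an even-length series has no mirror image).
    if n % 2 == 0:
        psd[1:-1] *= 2.0
    else:
        psd[1:] *= 2.0
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    return freqs[1:], psd[1:]             # drop the DC bin

def loglog_slope(freqs, psd, f_lo, f_hi):
    """Least-squares slope of log10(PSD) against log10(f) in [f_lo, f_hi]."""
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    slope, _ = np.polyfit(np.log10(freqs[mask]), np.log10(psd[mask]), 1)
    return slope

def synth_power_law(n, alpha, rng):
    """Hypothetical test signal with PSD proportional to f**alpha."""
    spec = np.fft.rfft(rng.normal(size=n))
    f = np.fft.rfftfreq(n)
    shape = np.zeros_like(f)
    shape[1:] = f[1:] ** (alpha / 2.0)    # amplitude scales as f**(alpha/2)
    return np.fft.irfft(spec * shape, n=n)

rng = np.random.default_rng(1)
fs = 100.0                                # assumed sampling rate in Hz
n = 2 ** 16
white = rng.normal(size=n)
flicker = synth_power_law(n, alpha=-1.0, rng=rng)

f_w, p_w = periodogram(white, fs)
f_f, p_f = periodogram(flicker, fs)
slope_white = loglog_slope(f_w, p_w, 1.0, 40.0)
slope_flicker = loglog_slope(f_f, p_f, 0.01, 10.0)
print("white slope:", slope_white)
print("flicker slope:", slope_flicker)
```

A fitted slope near 0 points to a white-noise band and a slope near −1 to a flicker band. On real data a raw periodogram is noisy, so an averaged estimate (for example Welch's method) would usually be preferred before fitting.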

Allan variance, a time‑domain counterpart to the PSD, is computed for averaging times τ ranging from 0.1 s to several hundred seconds. Its square root, the Allan deviation, follows τ^(−1/2) for short averaging times (white‑noise dominance; the variance itself scales as τ⁻¹) and flattens to τ⁰ for intermediate τ (flicker‑noise dominance). By fitting these regions to the standard power‑law model S(f) = h_α f^α, with α = 0 for white noise and α = −1 for flicker noise, the authors extract the coefficients h_0 (white‑noise level) and h_−1 (flicker‑noise level).
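
A minimal sketch of the Allan computation follows, using the simple non-overlapping estimator on synthetic white noise. The function name, the assumption that the sampling interval equals the shortest averaging time (0.1 s), and the choice of block sizes are illustrative and not taken from the paper.

```python
import numpy as np

def allan_deviation(y, tau0, m_values):
    """Non-overlapping Allan deviation of readings y sampled every tau0 seconds.

    For each block size m, the series is averaged in consecutive blocks of
    m samples and the Allan variance is half the mean squared difference
    between adjacent block averages.
    """
    y = np.asarray(y, dtype=float)
    taus, adevs = [], []
    for m in m_values:
        n_blocks = y.size // m
        if n_blocks < 2:                  # need at least one pair of blocks
            break
        means = y[: n_blocks * m].reshape(n_blocks, m).mean(axis=1)
        avar = 0.5 * np.mean(np.diff(means) ** 2)
        taus.append(m * tau0)
        adevs.append(np.sqrt(avar))
    return np.array(taus), np.array(adevs)

rng = np.random.default_rng(2)
tau0 = 0.1                                # assumed sampling interval in seconds
y = rng.normal(size=2 ** 17)              # synthetic white noise, unit variance
m_values = [2 ** k for k in range(11)]    # block sizes 1, 2, 4, ..., 1024

taus, adevs = allan_deviation(y, tau0, m_values)
slope, _ = np.polyfit(np.log10(taus), np.log10(adevs), 1)
print("log-log slope of Allan deviation:", slope)
```

For white noise the fitted slope should sit close to −1/2. A curve that stops falling and levels off at larger τ would be the signature of the flicker regime described above.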

The key insight is that the presence of flicker noise imposes a lower bound on the achievable measurement uncertainty that cannot be captured by the classical variance alone. In the flicker‑dominated band, the standard deviation underestimates the true uncertainty by roughly 30 %. Consequently, the paper recommends reporting Allan‑variance‑based uncertainty for any measurement system where colored noise is detected, and adjusting sampling strategies (e.g., longer averaging times or higher sampling rates) to mitigate its impact.
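
The origin of this lower bound can be made explicit with the standard power-law results for the Allan variance. The expressions below assume a one-sided PSD containing only the white and flicker terms, with the readings treated as the fluctuating quantity y; the symbol τ_c for the crossover time is introduced here for illustration.

$$
S_y(f) = h_0 + h_{-1} f^{-1}
\quad\Longrightarrow\quad
\sigma_y^2(\tau) = \frac{h_0}{2\tau} + 2\ln 2 \, h_{-1}
$$

The first term falls as 1/τ, so averaging reduces the white-noise contribution. The second term does not depend on τ, which is the flicker floor. The two contributions are equal at

$$
\tau_c = \frac{h_0}{4 \ln 2 \, h_{-1}}
$$

Under this model, extending the averaging time well beyond τ_c yields little further reduction in the Allan variance, whereas the classical estimate σ²/N for the variance of a mean of N readings would keep shrinking as N grows.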

Beyond the immediate experimental results, the authors discuss practical implications for metrology practice: redesign of electronic front‑ends to reduce 1/f components, incorporation of power‑law noise models into uncertainty budgets, and the development of real‑time algorithms that adaptively select the appropriate uncertainty estimator based on ongoing spectral diagnostics. Future work is outlined, including extension to multi‑sensor networks, exploration of non‑stationary noise processes, and integration of Bayesian time‑series models for more robust uncertainty quantification.

In summary, the study demonstrates that a systematic time‑series analysis uncovers stochastic correlations invisible to traditional variance‑based methods, provides a rigorous framework for distinguishing white from colored noise, and establishes a more realistic lower limit for measurement uncertainty in electromechanical transducers.