Mechanic: a new numerical MPI framework for the dynamical astronomy

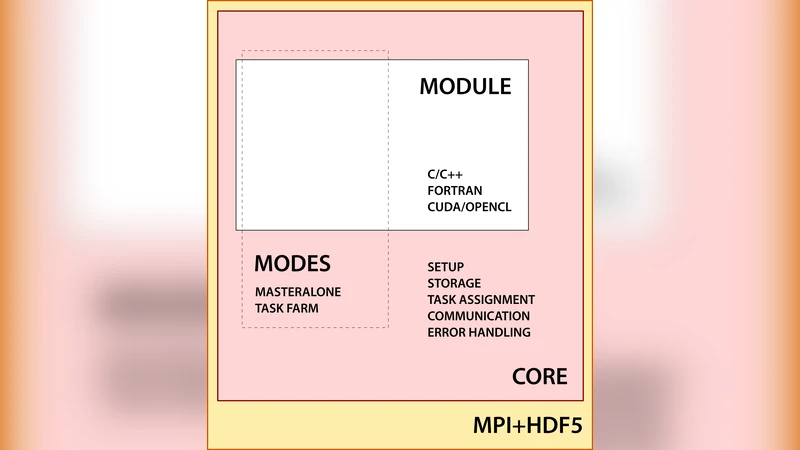

We have developed the Mechanic package, a new numerical framework for dynamical astronomy. The software supports massive numerical simulations through efficient task management and unified data storage. The code is built on top of the Message Passing Interface (MPI) and Hierarchical Data Format (HDF5) standards and uses the Task Farm approach to manage numerical tasks. It follows a core-module design: the numerical problem, implemented in a user-supplied module, is separated from the host code (the core). The core handles basic setup, data storage and communication between nodes in a computing pool. It has been tested on large CPU clusters as well as on desktop computers. Mechanic may be used for computing dynamical maps, data optimization or numerical integration. The code and sample modules are freely available at http://git.astri.umk.pl/projects/mechanic.

💡 Research Summary


The paper introduces Mechanic, a novel numerical framework designed to streamline large‑scale simulations in dynamical astronomy. Built on the widely adopted Message Passing Interface (MPI) and Hierarchical Data Format version 5 (HDF5) standards, Mechanic follows a task‑farm (master‑worker) paradigm and adopts a clear core‑module architecture. The core component handles MPI initialization, inter‑process communication, job queue management, and parallel HDF5 I/O, while user‑supplied modules contain only the scientific problem (e.g., an N‑body integrator, a dynamical‑map generator, or an optimization routine). This separation reduces maintenance effort, encourages code reuse, and allows researchers to focus on physics rather than parallel programming details.
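The core-module separation described above can be illustrated with a minimal sketch. It is written in Python for brevity and is not Mechanic's actual API: the class `TwoBodyEnergyModule`, the hooks `prepare_tasks` and `process_task`, and the function `run_core` are all hypothetical names. The serial loop stands in for the core, which in the real package would also handle MPI communication and HDF5 storage.

```python
from typing import Dict, List


class TwoBodyEnergyModule:
    """User-supplied 'module' (hypothetical interface): only the science lives here."""

    def prepare_tasks(self) -> List[Dict[str, float]]:
        # One task per initial condition; here, a few semi-major axes.
        return [{"a": a} for a in (1.0, 2.0, 4.0)]

    def process_task(self, task: Dict[str, float]) -> Dict[str, float]:
        # Specific orbital energy of a Kepler orbit with GM = 1: E = -1 / (2a).
        return {"energy": -1.0 / (2.0 * task["a"])}


def run_core(module) -> List[Dict[str, float]]:
    """Stand-in for the 'core': setup, task dispatch and result collection.

    This serial loop only shows where the module hooks would be called;
    it performs no parallel communication or file I/O.
    """
    tasks = module.prepare_tasks()
    results = []
    for task in tasks:
        outcome = module.process_task(task)
        # Store parameters and results together, one record per task.
        results.append({**task, **outcome})
    return results


results = run_core(TwoBodyEnergyModule())
for row in results:
    print(row)
```

Because `run_core` only relies on the two hooks, a different scientific problem could be swapped in by writing another class with the same two methods, which is the point of the separation.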

In operation, the master process writes the complete list of tasks and associated metadata into an HDF5 file. Workers request tasks via MPI, execute the user‑defined routine, and immediately store results back into the same HDF5 file using parallel I/O. Because HDF5 supports hierarchical groups, parameters are kept in a /parameters group, while results occupy a /results group, making post‑processing with Python (h5py), MATLAB, or other tools straightforward. The task‑farm design automatically balances load: idle workers pull new jobs, and failed tasks can be retried without manual intervention.
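The pull-based scheduling and retry behaviour described above can be sketched as follows. This is an illustration under stated assumptions rather than Mechanic's implementation: Python threads and a shared `queue.Queue` stand in for MPI workers and the master's task list, and the dictionaries `tasks` and `results` stand in for the `/parameters` and `/results` groups of the HDF5 file. The names `run_task_farm`, `flaky_square` and `max_retries` are hypothetical.

```python
import queue
import threading


def run_task_farm(tasks, process, n_workers=4, max_retries=2):
    """Pull-based task farm: each idle worker takes the next pending task."""
    pending = queue.Queue()
    for task_id, params in tasks.items():
        pending.put((task_id, params, 0))  # third field counts failed attempts

    results, failed = {}, {}
    lock = threading.Lock()

    def worker():
        while True:
            try:
                task_id, params, attempts = pending.get_nowait()
            except queue.Empty:
                return  # nothing left to pull, so this worker stops
            try:
                value = process(params)
            except Exception as exc:
                if attempts < max_retries:
                    # Re-queue the failed task; this worker keeps looping,
                    # so the task is guaranteed to be picked up again.
                    pending.put((task_id, params, attempts + 1))
                else:
                    with lock:
                        failed[task_id] = repr(exc)
            else:
                with lock:
                    results[task_id] = value

    threads = [threading.Thread(target=worker) for _ in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, failed


# Demo workload: task x = 3 fails on its first attempt, then succeeds.
seen = set()
seen_lock = threading.Lock()


def flaky_square(params):
    x = params["x"]
    with seen_lock:
        first_time = x not in seen
        seen.add(x)
    if x == 3 and first_time:
        raise RuntimeError("simulated transient failure")
    return x * x


tasks = {i: {"x": i} for i in range(6)}
results, failed = run_task_farm(tasks, flaky_square)
print(sorted(results.items()))
print(failed)
```

Load balancing falls out of the pull model: a worker that finishes a short task simply asks for the next one, so no static partitioning of the task list is needed.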

Performance tests were carried out on two platforms: a 256‑core high‑performance cluster and a 16‑core desktop workstation. Three benchmark problems were used: (1) integration of 100 000 two‑body initial conditions, (2) construction of a dynamical stability map with 50 000 parameter points, and (3) solution of 20 000 optimization instances. On the cluster, execution time scaled almost linearly with the number of cores, achieving up to a 35‑fold speed‑up for the map‑generation task compared with a conventional single‑process script. On the desktop, a 6‑fold improvement was observed when eight cores were employed. I/O bottlenecks remained negligible for per‑task data sizes below roughly 1 MB, thanks to HDF5’s parallel write capabilities.
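To put the quoted speed-ups in context, parallel efficiency (speed-up divided by the number of cores) can be computed directly. The snippet below is a small worked example. The summary does not state how many cores were used for the 35-fold measurement, so the cluster value assumes, purely for illustration, that all 256 cores were in use.

```python
def parallel_efficiency(speedup: float, cores: int) -> float:
    """Fraction of ideal (linear) scaling achieved: speed-up / cores."""
    return speedup / cores


# Desktop figure from the text: 6-fold speed-up on eight cores.
desktop = parallel_efficiency(6.0, 8)

# Cluster figure: 35-fold speed-up; the core count of 256 is an assumption.
cluster = parallel_efficiency(35.0, 256)

print(f"desktop efficiency: {desktop:.2f}")
print(f"cluster efficiency (assuming 256 cores): {cluster:.3f}")
```

An efficiency of 1.0 corresponds to perfectly linear scaling, so values well below 1.0 indicate overhead from communication, I/O or serial portions of the run.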

The authors illustrate three concrete scientific applications: (a) mapping the stability of multi‑planet systems, (b) optimizing asteroid orbital parameters, and (c) scanning parameter spaces in galactic dynamics. In each case, Mechanic’s unified data storage and automatic load balancing dramatically reduced the time required to explore large parameter spaces.

Limitations are acknowledged. Users must have a working MPI environment and some familiarity with HDF5, which may pose an entry barrier for newcomers. Very large output files (hundreds of gigabytes) can become limited by the underlying file‑system bandwidth. Future development plans include adding GPU acceleration via CUDA/OpenCL, implementing dynamic work‑stealing for finer‑grained load balancing, and providing containerized deployment (Docker/Kubernetes) for cloud‑based execution.

Mechanic is released as open‑source software under a permissive license, with source code and example modules hosted at http://git.astri.umk.pl/projects/mechanic. Comprehensive documentation and sample projects enable immediate adoption. The authors invite community contributions and envision a plugin ecosystem that will extend Mechanic’s applicability across a broad range of dynamical‑astronomy problems.