Empirical estimation of entropy functionals with confidence

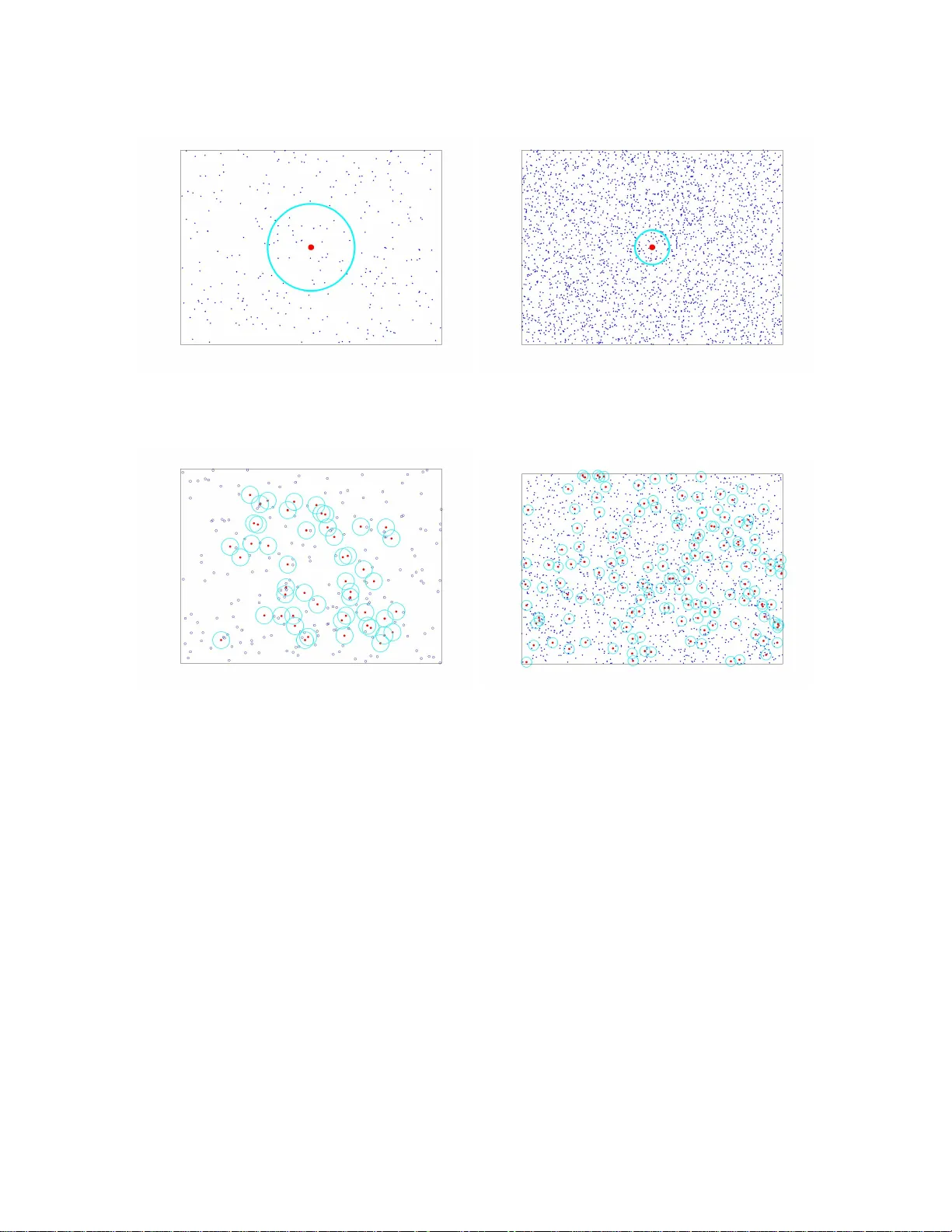

This paper introduces a class of k-nearest neighbor ($k$-NN) estimators called bipartite plug-in (BPI) estimators for estimating integrals of non-linear functions of a probability density, such as Shannon entropy and Rényi entropy. The density is a…
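To make the plug-in idea concrete, here is a minimal one-dimensional sketch of a bipartite $k$-NN plug-in estimate of Shannon entropy, $H(f) = -\int f(x) \log f(x)\,dx$. It is an illustration under stated assumptions, not the authors' implementation: the sample is assumed to be split into an evaluation part and a reference part, the density at each evaluation point is assumed to be estimated as $(k-1)/(M \cdot V_k)$ from the $M$ reference points, and the function names `knn_density_1d` and `bipartite_plugin_entropy` are hypothetical.

```python
import math
import random


def knn_density_1d(x, reference, k):
    """k-NN density estimate at x using only the reference sample (1-D).

    Assumed form: (k - 1) / (M * V_k), where V_k is the length of the
    interval reaching the k-th nearest reference point.
    """
    dists = sorted(abs(x - r) for r in reference)
    radius = dists[k - 1]          # distance to the k-th nearest reference point
    volume = 2.0 * radius          # length of [x - radius, x + radius]
    return (k - 1) / (len(reference) * volume)


def bipartite_plugin_entropy(sample, n_eval, k):
    """Plug-in Shannon entropy estimate with a bipartite data split.

    The first n_eval points are evaluation points; the remaining points
    are used only to estimate the density (an assumed splitting scheme).
    """
    eval_pts = sample[:n_eval]
    reference = sample[n_eval:]
    total = 0.0
    for x in eval_pts:
        # Plug the density estimate into g(f) = -log f and average.
        total += -math.log(knn_density_1d(x, reference, k))
    return total / len(eval_pts)


if __name__ == "__main__":
    rng = random.Random(0)
    data = [rng.gauss(0.0, 1.0) for _ in range(2000)]
    estimate = bipartite_plugin_entropy(data, n_eval=1000, k=20)
    exact = 0.5 * math.log(2 * math.pi * math.e)  # entropy of N(0, 1) in nats
    print(f"estimate = {estimate:.3f}, exact = {exact:.3f}")
```

Replacing `-math.log(...)` with another function of the density estimate gives the corresponding plug-in estimate for other functionals of this type; this sketch applies no bias correction, so the estimate carries a small $k$-dependent bias.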

Authors: Kumar Sricharan, Raviv Raich, Alfred O. Hero III