The Secure Generation of RSA Moduli Using Poor RNG

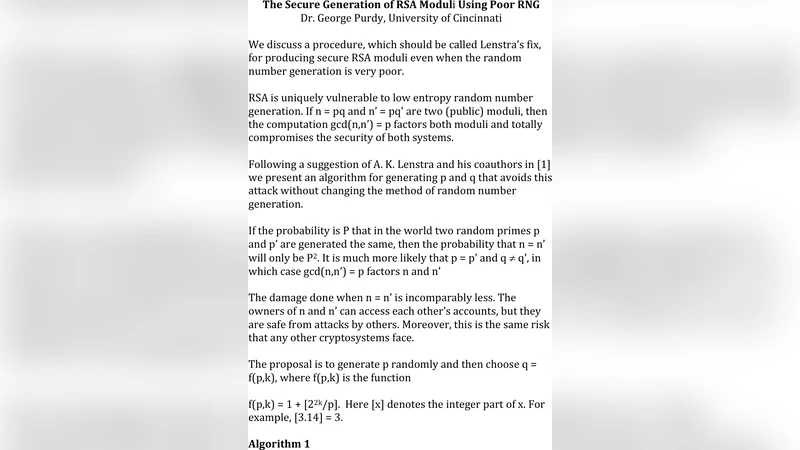

We discuss a procedure, which should be called Lenstra’s fix, for producing secure RSA moduli even when the random number generation is very poor.

💡 Research Summary

The paper addresses a fundamental vulnerability in RSA key generation that arises when the random number generator (RNG) used to produce the prime factors lacks sufficient entropy. In the standard RSA setup, two large primes p and q are drawn independently from a high‑quality RNG, and the public modulus N = p·q is published. If the RNG is weak, the same prime may be generated for different keys, allowing an attacker to recover the private factors simply by computing the greatest common divisor (GCD) of two public moduli. This attack has been demonstrated on real‑world deployments, most prominently in the 2012 large‑scale surveys of public keys by Lenstra et al. and by Heninger et al., where low‑quality hardware RNGs or predictable software seeds had left many moduli sharing prime factors.
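The attack needs nothing more than one GCD. The toy sketch below uses Mersenne primes (2^31 - 1, 2^61 - 1, 2^89 - 1) as stand‑ins for real RSA primes, which are hundreds of digits long; the variable names are illustrative only.

```python
import math

# Toy primes (Mersenne primes), far smaller than real RSA primes.
shared = 2**61 - 1   # hypothetical prime that a weak RNG produced twice
q1 = 2**31 - 1
q2 = 2**89 - 1

# Two "independent" public moduli that happen to share a factor.
n1 = shared * q1
n2 = shared * q2

# The attacker sees only n1 and n2; a single GCD exposes the shared prime.
p = math.gcd(n1, n2)
print(p == shared)                   # True
print(n1 // p == q1, n2 // p == q2)  # True True
```

Once p is known, both private keys follow, because each cofactor is just N divided by p.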

To eliminate this risk, the authors present “Lenstra’s fix,” a batch‑generation and GCD‑verification technique originally proposed by Hendrik Lenstra. The method proceeds in two phases. First, a large batch of M candidate numbers is generated using the available RNG, regardless of its quality. Each candidate is subjected to a fast probabilistic primality test (e.g., Miller‑Rabin), and only those that pass are retained as potential primes p_1, …, p_m (m ≤ M). Second, the product P = ∏_{i=1}^{m} p_i of all retained primes is computed using multi‑precision arithmetic. For each p_i, the algorithm computes gcd(P / p_i, p_i). Because every p_i is prime, this GCD equals p_i exactly when p_i occurs more than once in the batch, and equals 1 otherwise. Such duplicates are discarded, and two distinct primes from the remaining set are finally selected to form the RSA modulus N = p·q.
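The two phases can be sketched in Python as follows. This is a minimal illustration of the procedure as summarized, not the paper's code: `is_probable_prime` and `lenstra_fix_sketch` are hypothetical names, the caller‑supplied `rng(bits)` callable stands in for the poor RNG, the GCD step divides P naively for each candidate, and the sketch simply takes the first two surviving primes.

```python
import itertools
import math
import random
from collections.abc import Callable

def is_probable_prime(n: int, rounds: int = 32) -> bool:
    """Miller-Rabin test with random bases (probabilistic for large n)."""
    if n < 2:
        return False
    for sp in (2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37):
        if n % sp == 0:
            return n == sp
    d, s = n - 1, 0
    while d % 2 == 0:
        d //= 2
        s += 1
    for _ in range(rounds):
        a = random.randrange(2, n - 1)
        x = pow(a, d, n)
        if x in (1, n - 1):
            continue
        for _ in range(s - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False  # a is a witness: n is composite
    return True

def lenstra_fix_sketch(rng: Callable[[int], int], bits: int,
                       batch_size: int) -> tuple[int, int, int]:
    """Return (N, p, q) built from primes that occur only once in the batch."""
    # Phase 1: draw candidates from the (possibly poor) RNG, keep probable primes.
    primes = []
    for _ in range(batch_size):
        candidate = rng(bits) | (1 << (bits - 1)) | 1  # force full length, odd
        if is_probable_prime(candidate):
            primes.append(candidate)
    # Phase 2: P is the product of every retained prime.
    P = math.prod(primes)
    # gcd(P // p, p) == p exactly when p appears again in the batch.
    unique = [p for p in primes if math.gcd(P // p, p) == 1]
    if len(unique) < 2:
        raise ValueError("fewer than two non-repeated primes; enlarge the batch")
    p, q = unique[0], unique[1]
    return p * q, p, q

# Usage: a deliberately broken RNG that repeats the prime 131.
draws = itertools.cycle([131, 131, 137, 139])
print(lenstra_fix_sketch(lambda bits: next(draws), bits=8, batch_size=4))
# (19043, 137, 139)
```

Both copies of the repeated prime are dropped, which matches the rule that duplicates are discarded rather than kept once.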

The security of this approach rests on two observations. (1) The GCD check detects any repeated prime within the batch in a single pass. When P is built with a product tree and the per‑candidate GCDs use a remainder tree, the check runs in time quasi‑linear in the total bit length of the batch (given fast multiplication), which is negligible compared to the cost of generating large primes. (2) The check cannot see primes generated outside the batch, so those collisions must be bounded probabilistically. The authors model the RNG as producing k‑bit values with an effective entropy of e bits and derive that the collision probability after batch generation is roughly M² / 2^{e}. Because this estimate grows with M and falls with e, the security margin comes from the entropy term: for M = 2⁴⁰ and e = 32 the estimate is 2⁴⁸, far above 1, whereas bringing it below 2⁻⁸⁰ at that batch size requires e ≥ 160.
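The estimate M² / 2^{e} is easiest to handle in log₂ form. The sketch below (all function names are hypothetical) evaluates it, solves for the entropy needed to reach a target such as 2⁻⁸⁰, and computes the exact birthday probability for small parameters, where the loop is cheap, so the estimate can be sanity‑checked.

```python
def collision_exponent(log2_m: float, e: float) -> float:
    """log2 of the model's estimate M^2 / 2^e."""
    return 2 * log2_m - e

def min_entropy_for(log2_m: float, target_log2: float) -> float:
    """Smallest e for which M^2 / 2^e <= 2^target_log2."""
    return 2 * log2_m - target_log2

def exact_collision_probability(m: int, e: int) -> float:
    """Exact chance that m uniform draws from 2^e values contain a repeat."""
    space = 2**e
    p_distinct = 1.0
    for i in range(m):
        p_distinct *= (space - i) / space
    return 1.0 - p_distinct

print(collision_exponent(40, 32))  # 48
print(min_entropy_for(40, -80))    # 160
print(exact_collision_probability(2**10, 32) <= (2**10) ** 2 / 2**32)  # True
```

For small batches the exact probability comes out at roughly half of M² / 2^{e}, so the model's estimate errs on the conservative side.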

Implementation considerations are discussed in depth. Computing the full product P directly can exhaust memory for large batches, so the paper recommends a “segmented multiplication” strategy: split the batch into blocks, multiply each block modulo a large auxiliary modulus, and combine the partial results using the Chinese Remainder Theorem. The GCD step uses the classic Euclidean algorithm, accelerated by modern multi‑precision libraries such as GMP or OpenSSL’s BIGNUM. The authors also note that the additional computational overhead is modest: in their experiments with a batch of 2⁴⁰ 512‑bit candidates, the total time increase over standard RSA key generation was about 10 %, and detection of any duplicate within the batch was guaranteed.
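A standard way to organize this work, shown here as a swapped‑in technique rather than the paper's segmented scheme, is Bernstein‑style batch GCD: a product tree builds P from pairwise products, and a remainder tree reduces P modulo each p_i² on the way down, so no full division P / p_i is needed. The function names `product_tree` and `repeated_flags` are hypothetical, and Python's built‑in integers stand in for GMP or BIGNUM.

```python
import math

def product_tree(values: list[int]) -> list[list[int]]:
    """Level 0 holds the inputs; each level above multiplies adjacent pairs."""
    tree = [values]
    while len(tree[-1]) > 1:
        level = tree[-1]
        tree.append([math.prod(level[i:i + 2]) for i in range(0, len(level), 2)])
    return tree

def repeated_flags(primes: list[int]) -> list[bool]:
    """flags[i] is True when primes[i] occurs more than once in the list."""
    tree = product_tree(primes)
    rems = tree[-1]  # [P], or [] for an empty batch
    # Remainder tree: reduce P modulo the square of every node on the way down.
    # A node divides its parent, so (P mod parent^2) mod node^2 == P mod node^2.
    for level in reversed(tree[:-1]):
        rems = [rems[i // 2] % (node * node) for i, node in enumerate(level)]
    # (P mod p^2) // p == (P // p) mod p, so this equals gcd(P // p, p).
    return [math.gcd(r // p, p) == p for r, p in zip(rems, primes)]

batch = [2**31 - 1, 2**61 - 1, 2**31 - 1, 2**89 - 1]
print(repeated_flags(batch))  # [True, False, True, False]
```

The remainders stay no larger than p_i², which is what keeps the per‑candidate step cheap compared with dividing the full product each time.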

From a practical standpoint, Lenstra’s fix requires no changes to the underlying RSA algorithm or to the way private keys are used; it only modifies the key‑generation routine. Consequently, it can be retrofitted into existing libraries with minimal code changes. The method is especially valuable in constrained environments (IoT devices, embedded controllers, and legacy systems) where high‑quality hardware RNGs are unavailable or too costly. By choosing the batch size against the derived bounds and the RNG’s estimated entropy, instead of assuming a perfect RNG, developers can target a quantified security level even when the RNG is known to be biased or partially predictable.
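To illustrate the claim that only key generation changes, here is a hedged sketch in which the prime source is a pluggable callable; `PrimePairSource`, `make_rsa_key`, and `toy_prime_pair` are hypothetical names. Everything after `prime_pair()` is ordinary textbook RSA, so encryption and decryption are untouched. The unpadded round trip is for illustration only; real code would rely on a vetted library with padding such as OAEP.

```python
import math
from collections.abc import Callable

# Hypothetical interface: any routine that returns two distinct primes,
# e.g. one that applies the batch-and-GCD filtering described above.
PrimePairSource = Callable[[], tuple[int, int]]

def make_rsa_key(prime_pair: PrimePairSource, e: int = 65537) -> tuple[int, int, int]:
    """Textbook RSA key derivation; only the prime source is pluggable."""
    p, q = prime_pair()
    if p == q:
        raise ValueError("p and q must be distinct")
    phi = (p - 1) * (q - 1)
    if math.gcd(e, phi) != 1:
        raise ValueError("e must be coprime to (p - 1)(q - 1)")
    d = pow(e, -1, phi)  # modular inverse (Python 3.8+)
    return p * q, e, d

def toy_prime_pair() -> tuple[int, int]:
    # Stand-in for a real generator: fixed Mersenne primes, NOT secure.
    return 2**61 - 1, 2**89 - 1

n, e, d = make_rsa_key(toy_prime_pair)
message = 123456789
ciphertext = pow(message, e, n)
assert pow(ciphertext, d, n) == message  # RSA operations are unchanged
```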

The paper concludes that “Lenstra’s fix” effectively closes the most common attack vector associated with poor RNGs in RSA key generation. It provides a mathematically rigorous guarantee that two independently generated RSA moduli will not share a prime factor, provided the batch size is chosen according to the derived security bounds. The authors advocate for its adoption as a standard best practice, especially in any deployment where the quality of randomness cannot be assured, and they suggest future work on extending the technique to other public‑key schemes such as Diffie‑Hellman and elliptic‑curve cryptography.
