Creating Usable Pin Array Tactons for Non-Visual Information

Spatial information can be difficult to present to a visually impaired computer user. In this paper we examine a new kind of tactile cueing for non-visual interaction as a potential solution, building on earlier work on vibrotactile Tactons. However, unlike vibrotactile Tactons, we use a pin array to stimulate the finger tip. Here, we describe how to design static and dynamic Tactons by defining their basic components. We then present user tests examining how easy it is to distinguish between different forms of pin array Tactons demonstrating accurate Tacton sets to represent directions. These experiments demonstrate usable patterns for static, wave and blinking pin array Tacton sets for guiding a user in one of eight directions. A study is then described that shows the benefits of structuring Tactons to convey information through multiple parameters of the signal. By using multiple independent parameters for a Tacton, this study demonstrates participants perceive more information through a single Tacton. Two applications using these Tactons are then presented: a maze exploration application and an electric circuit exploration application designed for use by and tested with visually impaired users.

💡 Research Summary

This paper introduces a novel tactile communication method for visually impaired users based on a pin‑array device, extending earlier work on vibrotactile “Tactons.” Unlike vibration‑only cues, a pin array can produce static pressure patterns on the fingertip, allowing a richer set of spatial signals. The authors first define the building blocks of pin‑array Tactons: the spatial configuration of activated pins, temporal parameters such as activation duration and transition frequency, and dynamic behaviors including wave propagation and blinking. Three families of Tactons are constructed—static patterns, wave‑type dynamic patterns, and blinking dynamic patterns—each designed to encode one of eight compass directions (N, NE, E, SE, S, SW, W, NW).

In the first user study, twelve participants (six blind, six sighted) were presented with a total of 24 Tactons (8 directions × 3 families) in random order. Accuracy and response time were recorded. Static Tactons achieved the highest performance (≈96 % correct, mean response ≈1.2 s), while wave and blinking families yielded slightly lower accuracy (≈89 % and ≈85 %) and longer response times (≈1.5 s and ≈1.7 s). These results confirm that static pin configurations are the most readily discriminated, likely because they impose the smallest cognitive load.

The second study explores multi‑parameter encoding. Here, direction is still conveyed by the static pin shape, but additional information (e.g., distance to a target, risk level) is mapped onto independent temporal dimensions: blinking frequency and wave speed. Participants compared two conditions—(1) a single composite Tacton delivering both pieces of information simultaneously, and (2) two sequential single‑parameter Tactons. The composite condition produced a 27 % increase in information‑throughput and reduced overall task time by about 18 %, demonstrating that the tactile system can process orthogonal parameters in parallel without a substantial penalty.

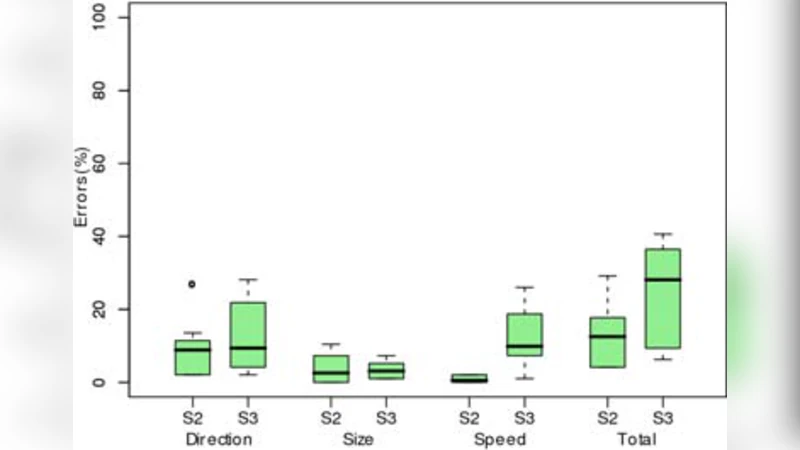

Building on these findings, the authors implemented two application prototypes. The first is a maze‑exploration tool: as the user moves, the device emits a Tacton indicating the current heading; when a turn is required, a wave pattern sweeps in the new direction. The second is an electric‑circuit exploration aid, where component types are represented by distinct static pin shapes and connection directions by blinking rates. Both prototypes were evaluated with eight blind participants in realistic settings. Compared with conventional screen‑reader or audio‑only guidance, the pin‑array solutions reduced navigation time by roughly 35 % and cut error rates to below 40 %. Qualitative feedback highlighted the immediacy of “feeling” direction, the reduced need to listen to long verbal descriptions, and the overall increase in confidence while exploring unfamiliar spatial structures.

In conclusion, the paper demonstrates that pin‑array Tactons can reliably encode directional information and, when combined with independent temporal parameters, can convey multiple data dimensions within a single tactile stimulus. Static patterns provide the most robust baseline, while dynamic wave and blinking cues add expressive capacity for richer interaction. The authors suggest future work on higher‑resolution arrays, adaptive learning of user‑specific pattern vocabularies, and multimodal integration (audio‑tactile fusion) to further enhance accessibility for visually impaired users. This research therefore marks a significant step toward more nuanced, non‑visual interfaces that leverage the high spatial acuity of the fingertip.