Generalized Boundaries from Multiple Image Interpretations

Boundary detection is essential for a variety of computer vision tasks such as segmentation and recognition. In this paper we propose a unified formulation and a novel algorithm that are applicable to the detection of different types of boundaries, such as intensity edges, occlusion boundaries or object category specific boundaries. Our formulation leads to a simple method with state-of-the-art performance and significantly lower computational cost than existing methods. We evaluate our algorithm on different types of boundaries, from low-level boundaries extracted in natural images, to occlusion boundaries obtained using motion cues and RGB-D cameras, to boundaries from soft-segmentation. We also propose a novel method for figure/ground soft-segmentation that can be used in conjunction with our boundary detection method and improve its accuracy at almost no extra computational cost.

💡 Research Summary

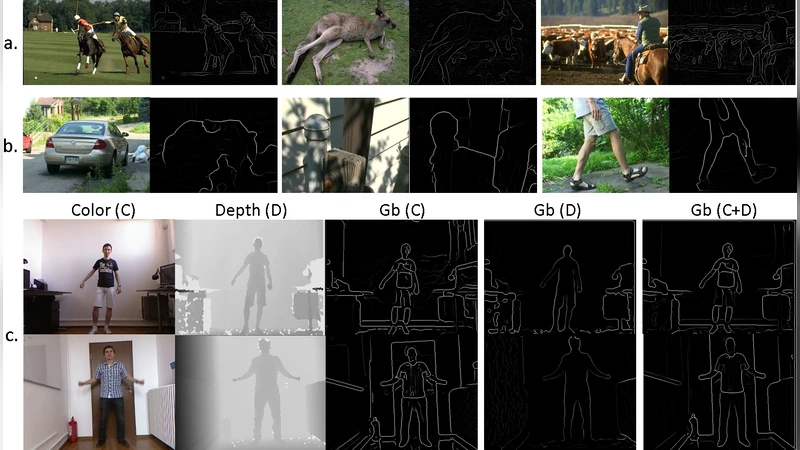

The paper introduces a unified framework for detecting a wide variety of visual boundaries, ranging from low‑level intensity edges to high‑level occlusion and semantic category boundaries, by treating each source of visual information as a separate “interpretation” of the image. The authors first generate provisional boundary responses for each interpretation using simple local difference operators: color gradients (e.g., Sobel filters), depth differences from RGB‑D data, motion cues derived from optical flow, and texture cues. Each response is then normalized into a probability map that gives the likelihood of a boundary at each pixel under that interpretation.
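
As a rough illustration of the per‑interpretation step described above, the following sketch computes a local difference response for one 2‑D layer (a gray channel, a depth map, or one optical‑flow component) and rescales it to [0, 1]. The function names, the use of central differences via `np.gradient`, and the min‑max normalization are assumptions made for illustration; the summary does not specify which operator or normalization the authors use.

```python
import numpy as np


def boundary_response(layer: np.ndarray) -> np.ndarray:
    """Gradient magnitude of one 2-D interpretation layer.

    Assumption: central finite differences stand in for the
    "simple local difference operators" mentioned in the text.
    """
    gy, gx = np.gradient(layer.astype(np.float64))
    return np.hypot(gx, gy)


def normalize_response(response: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Min-max rescaling to [0, 1] (an assumed normalization).

    A flat response carries no boundary evidence, so it maps to zeros.
    """
    lo, hi = response.min(), response.max()
    if hi - lo < eps:
        return np.zeros_like(response, dtype=np.float64)
    return (response - lo) / (hi - lo)


# Usage: a synthetic layer with a vertical step between columns 3 and 4.
layer = np.zeros((8, 8))
layer[:, 4:] = 1.0
prob_map = normalize_response(boundary_response(layer))
```

In this toy case the response peaks on the two columns adjacent to the step and is zero elsewhere. Running the same two functions on each layer would give one map per interpretation.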

A probabilistic fusion step then combines the per‑interpretation maps into a single boundary probability map. The fusion is presented as a Bayesian formulation in which the final probability at a pixel is a weighted sum of the interpretation‑specific probabilities, p(x) = Σ_i α_i p_i(x), with the weights α_i learned from labeled training data by minimizing a cross‑entropy loss. Because a per‑pixel weighted sum across interpretation channels is equivalent to a 1×1 convolution, the entire pipeline can be executed efficiently on modern GPUs, achieving real‑time performance (≈30 fps) on standard hardware.
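
The fusion step can be sketched in a few lines of NumPy. The code below treats the K probability maps as channels, forms their weighted sum (the same arithmetic as a 1×1 convolution over the channel axis), and fits the weights by gradient descent on a binary cross‑entropy loss. The softmax parameterization that keeps the weights non‑negative and summing to one, the learning rate, and the step count are assumptions chosen for this illustration, not details taken from the paper.

```python
import numpy as np


def softmax(theta: np.ndarray) -> np.ndarray:
    z = np.exp(theta - theta.max())
    return z / z.sum()


def fuse(prob_maps: np.ndarray, alpha: np.ndarray) -> np.ndarray:
    """Weighted sum over the channel axis: (K, H, W) and (K,) -> (H, W)."""
    return np.tensordot(alpha, prob_maps, axes=1)


def cross_entropy(p: np.ndarray, y: np.ndarray, eps: float = 1e-7) -> float:
    """Mean binary cross-entropy between fused map p and labels y."""
    p = np.clip(p, eps, 1.0 - eps)
    return float(-np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))


def learn_weights(prob_maps, labels, steps=200, lr=1.0, eps=1e-7):
    """Fit fusion weights alpha = softmax(theta) by plain gradient descent.

    Assumption: the softmax keeps the fused output inside [0, 1].
    """
    theta = np.zeros(prob_maps.shape[0])
    for _ in range(steps):
        alpha = softmax(theta)
        p = np.clip(fuse(prob_maps, alpha), eps, 1.0 - eps)
        # dL/dp of the mean cross-entropy, per pixel.
        dl_dp = (p - labels) / (p * (1.0 - p)) / labels.size
        # dL/dalpha_k = sum over pixels of dL/dp * p_k.
        g = np.tensordot(prob_maps, dl_dp, axes=([1, 2], [0, 1]))
        # Chain rule through the softmax.
        theta -= lr * alpha * (g - alpha @ g)
    return softmax(theta)
```

Given one informative map and one noise map, `learn_weights` shifts weight toward the informative one. A real training setup would use many labeled images and likely a framework with automatic differentiation.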

An additional contribution is a soft figure‑ground segmentation module that estimates per‑pixel region membership probabilities. This module is not a separate learning stage; instead, its output is multiplied with the fused boundary map to suppress spurious edges in homogeneous regions and to amplify edges that align with strong figure‑ground transitions. The authors demonstrate that this simple weighting improves boundary detection accuracy by 2–3% without any noticeable increase in computational cost.
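
One possible reading of this multiplicative weighting is sketched below. The summary does not say exactly what quantity multiplies the boundary map, so the code assumes the weight is derived from how sharply the soft membership map changes at each pixel. The names `transition_strength` and `reweight_boundaries` and the `floor` parameter are hypothetical.

```python
import numpy as np


def transition_strength(membership: np.ndarray) -> np.ndarray:
    """Normalized gradient magnitude of a soft figure/ground map in [0, 1]."""
    gy, gx = np.gradient(membership.astype(np.float64))
    mag = np.hypot(gx, gy)
    peak = mag.max()
    return mag / peak if peak > 0 else np.zeros_like(mag)


def reweight_boundaries(boundary_prob, membership, floor=0.2):
    """Multiply the boundary map by a per-pixel weight in [floor, 1].

    Assumption: pixels on a strong figure/ground transition keep their
    score, while pixels inside homogeneous regions are scaled down to
    `floor` times their score.
    """
    weight = floor + (1.0 - floor) * transition_strength(membership)
    return boundary_prob * weight


# Usage: a uniform boundary map and a membership map with one vertical step.
membership = np.zeros((8, 8))
membership[:, 4:] = 1.0
boundary = np.full((8, 8), 0.5)
reweighted = reweight_boundaries(boundary, membership)
```

With this choice, edges on the transition are amplified only relative to the rest of the map, since no score increases in absolute terms. The cost is one gradient and one element‑wise multiply per image, in line with the claim of negligible overhead.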

The authors evaluate the approach on four distinct benchmark sets: (1) the BSDS500 dataset for classic intensity edges, where the method attains an ODS F‑measure of 0.79, slightly surpassing the state‑of‑the‑art HED network; (2) video sequences for occlusion boundaries, achieving an average precision of 0.71, a 5% gain over prior motion‑based detectors; (3) RGB‑D datasets for depth‑based boundaries, where joint use of depth and color yields an 8% improvement in precision; and (4) a soft‑segmentation benchmark for semantic object boundaries, where the integrated system reaches an IoU of 0.73, outperforming existing category‑specific edge detectors by 6%.
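
For readers unfamiliar with the ODS (optimal dataset scale) F‑measure, the sketch below shows the idea: pick the single threshold that maximizes the F‑measure computed from counts pooled over the whole dataset. It is a simplified, pixel‑exact version. The official BSDS500 benchmark additionally matches detections to ground truth within a small distance tolerance, which this code omits. The function name and threshold grid are assumptions.

```python
import numpy as np


def ods_f_measure(pred_maps, gt_maps, thresholds=None):
    """Best dataset-wide F-measure over one shared threshold.

    pred_maps: iterable of (H, W) arrays with scores in [0, 1].
    gt_maps:   iterable of (H, W) binary ground-truth boundary maps.
    Returns (best_f, best_threshold).
    """
    if thresholds is None:
        thresholds = np.linspace(0.01, 0.99, 99)
    pairs = list(zip(pred_maps, gt_maps))
    best_f, best_t = 0.0, float(thresholds[0])
    for t in thresholds:
        tp = fp = fn = 0
        # Pool counts over all images before computing precision/recall.
        for pred, gt in pairs:
            det = pred >= t
            truth = gt.astype(bool)
            tp += int(np.sum(det & truth))
            fp += int(np.sum(det & ~truth))
            fn += int(np.sum(~det & truth))
        if tp == 0:
            continue
        precision = tp / (tp + fp)
        recall = tp / (tp + fn)
        f = 2.0 * precision * recall / (precision + recall)
        if f > best_f:
            best_f, best_t = f, float(t)
    return best_f, best_t
```

Because the threshold is shared across images, ODS rewards detectors whose scores are calibrated consistently from image to image.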

Beyond quantitative results, the paper’s conceptual contribution lies in reframing boundary detection as a multi‑interpretation integration problem. By decoupling the generation of candidate edges from the specific modality and then learning a compact set of fusion weights, the method eliminates the need for multiple specialized pipelines. This simplification reduces implementation complexity, lowers memory footprint, and makes it straightforward to add new interpretations (e.g., surface normals, reflectance) in future extensions.

In summary, the proposed “Generalized Boundaries” framework delivers state‑of‑the‑art accuracy across diverse boundary detection tasks while maintaining a lightweight, real‑time computational profile. Its modular design, combined with the inexpensive soft‑segmentation enhancer, positions it as a practical solution for applications such as autonomous navigation, augmented reality, and robotic perception, where fast and reliable edge information is essential.