Communication-Optimal Parallel Algorithm for Strassen’s Matrix Multiplication

Parallel matrix multiplication is one of the most studied fundamental problems in distributed and high performance computing. We obtain a new parallel algorithm that is based on Strassen’s fast matrix multiplication and minimizes communication. The algorithm outperforms all known parallel matrix multiplication algorithms, classical and Strassen-based, both asymptotically and in practice. A critical bottleneck in parallelizing Strassen’s algorithm is the communication between the processors. Ballard, Demmel, Holtz, and Schwartz (SPAA'11) prove lower bounds on these communication costs, using expansion properties of the underlying computation graph. Our algorithm matches these lower bounds, and so is communication-optimal. It exhibits perfect strong scaling within the maximum possible range. Benchmarking our implementation on a Cray XT4, we obtain speedups over classical and Strassen-based algorithms ranging from 24% to 184% for a fixed matrix dimension n=94080, where the number of nodes ranges from 49 to 7203. Our parallelization approach generalizes to other fast matrix multiplication algorithms.

💡 Research Summary

The paper presents a novel parallel algorithm for matrix multiplication that combines Strassen’s fast multiplication technique with a communication‑optimal design. While Strassen’s algorithm reduces the arithmetic complexity from O(n³) to O(n^{log₂7})≈O(n^{2.81}), its recursive structure creates substantial data movement when deployed on distributed‑memory machines. Prior work by Ballard, Demmel, Holtz, and Schwartz (SPAA 2011) established lower bounds on the amount of communication any Strassen‑based parallel algorithm must incur, using expansion properties of the computation graph. Existing implementations, however, fall short of these bounds because they repeatedly reshuffle sub‑matrices at each recursion level.
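For readers unfamiliar with the recursion, the following is a minimal sequential sketch of Strassen's seven-product scheme in pure Python. It is not the paper's parallel algorithm; the helper names (`add`, `sub`, `split`, `classical`) and the `cutoff` parameter are illustrative assumptions, and the sketch assumes square matrices given as lists of lists.

```python
def add(X, Y):
    """Entrywise sum of two equally sized matrices."""
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def sub(X, Y):
    """Entrywise difference of two equally sized matrices."""
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def classical(X, Y):
    """Textbook O(n^3) product, used as the recursion base case."""
    cols = list(zip(*Y))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in X]

def split(X):
    """Return the four quadrants X11, X12, X21, X22 of an even-sized matrix."""
    h = len(X) // 2
    return ([r[:h] for r in X[:h]], [r[h:] for r in X[:h]],
            [r[:h] for r in X[h:]], [r[h:] for r in X[h:]])

def strassen(A, B, cutoff=2):
    """Multiply square matrices with 7 recursive products instead of 8."""
    n = len(A)
    if n <= cutoff or n % 2:          # small or odd size: fall back
        return classical(A, B)
    A11, A12, A21, A22 = split(A)
    B11, B12, B21, B22 = split(B)
    # The seven sub-products, each on half-sized operands.
    M1 = strassen(add(A11, A22), add(B11, B22), cutoff)
    M2 = strassen(add(A21, A22), B11, cutoff)
    M3 = strassen(A11, sub(B12, B22), cutoff)
    M4 = strassen(A22, sub(B21, B11), cutoff)
    M5 = strassen(add(A11, A12), B22, cutoff)
    M6 = strassen(sub(A21, A11), add(B11, B12), cutoff)
    M7 = strassen(sub(A12, A22), add(B21, B22), cutoff)
    # Linear combinations that assemble the quadrants of C.
    C11 = add(sub(add(M1, M4), M5), M7)
    C12 = add(M3, M5)
    C21 = add(M2, M4)
    C22 = add(add(sub(M1, M2), M3), M6)
    top = [left + right for left, right in zip(C11, C12)]
    bottom = [left + right for left, right in zip(C21, C22)]
    return top + bottom
```

Each level replaces eight half-sized products with seven, which is where the exponent log₂7 comes from. The operand sums and the final linear combinations are the data that must move between processors in a distributed setting.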

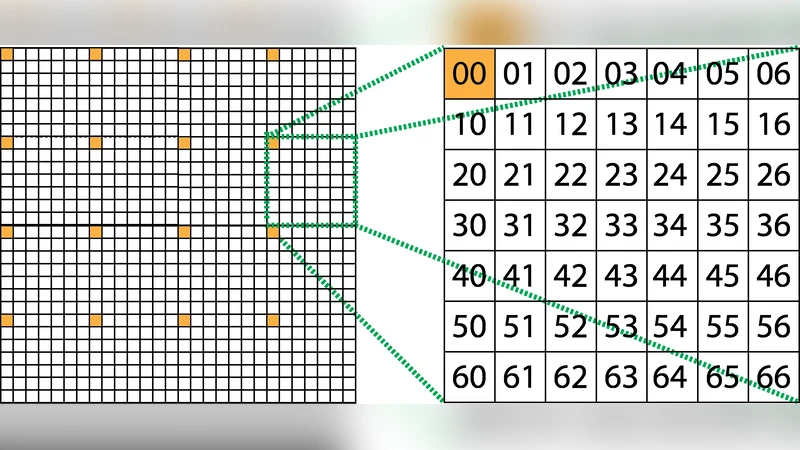

The authors address this gap with two complementary ideas. First, they introduce a “communication‑friendly recursive partitioning” scheme that fixes the set of sub‑matrices assigned to each processor throughout the recursion. By adapting a 2‑D block‑cyclic distribution into a “block‑cycle mapping,” each processor keeps its local data layout while only the intermediate products required for the next level are exchanged. This reduces the number of communication rounds to exactly the recursion depth d and brings the per‑round data volume close to the theoretical minimum. Second, they apply a “linear‑combination compression” step to the seven Strassen sub‑products. Instead of performing seven separate all‑to‑all exchanges for the linear combinations, the algorithm merges several combinations into a single collective operation, thereby keeping the number of rounds unchanged while shrinking the payload per round.
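As a point of reference, the standard 2-D block-cyclic distribution mentioned above can be sketched as follows. This shows only the textbook ownership rule, not the paper's adapted mapping; the function names and parameters (`block`, `prow`, `pcol`) are hypothetical and chosen for illustration.

```python
def block_cyclic_owner(i, j, block, prow, pcol):
    """Return the (process row, process column) that owns entry (i, j).

    The matrix is cut into block x block tiles, and tiles are dealt out
    round-robin over a prow x pcol process grid.
    """
    return ((i // block) % prow, (j // block) % pcol)

def local_blocks(rank_row, rank_col, n, block, prow, pcol):
    """List the (block row, block col) tiles stored by one process."""
    nblocks = -(-n // block)  # ceiling division: tiles per dimension
    return [(bi, bj)
            for bi in range(rank_row, nblocks, prow)
            for bj in range(rank_col, nblocks, pcol)]
```

Because ownership depends only on the indices and the grid shape, every processor can compute where any entry lives without communication, and the assignment does not change from one recursion level to the next.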

A cost analysis shows that the arithmetic work per processor remains O(n^{log₂7}/p), the sequential Strassen cost divided evenly among p processors. In the α‑β model (per‑message latency α, per‑word transfer cost β), with M words of local memory per processor, the bandwidth cost is Θ(max{n^{log₂7}/(p·M^{(log₂7)/2−1}), n²/p^{2/log₂7}}), which matches the lower bound proved by Ballard et al. Consequently, the algorithm is provably communication‑optimal. Strong‑scaling analysis further shows that the algorithm scales perfectly, with communication cost falling in proportion to 1/p, for processor counts up to p = Θ(n^{log₂7}/M^{(log₂7)/2}), the largest range the lower bounds allow.
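To make the cost model concrete, here is a small sketch of how a running-time estimate is assembled in the α‑β model. The per‑flop cost `gamma` and both function names are assumptions added for illustration, and the word and message counts are left as inputs rather than derived from the paper.

```python
import math

OMEGA0 = math.log2(7)  # Strassen exponent, about 2.81

def strassen_flops_per_proc(n, p):
    """Arithmetic per processor, up to a constant: n^(log2 7) / p."""
    return n ** OMEGA0 / p

def modeled_time(flops, words, messages, gamma, beta, alpha):
    """Estimated time = gamma*flops + beta*words + alpha*messages.

    gamma: seconds per flop (assumed parameter)
    beta:  seconds per word moved
    alpha: seconds per message (latency)
    """
    return gamma * flops + beta * words + alpha * messages
```

For example, `modeled_time(strassen_flops_per_proc(n, p), words, messages, gamma, beta, alpha)` gives a rough per‑processor estimate once `words` and `messages` are filled in from a communication bound. Under perfect strong scaling, all three terms shrink in proportion to 1/p.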

Experimental validation was performed on a Cray XT4 system (8 cores per node, 2.4 GHz, 16 GB RAM). The authors fixed the matrix dimension at n = 94 080 and varied the number of nodes from 49 to 7 203. They compared the new algorithm against four baselines: (1) classical 2‑D Cannon, (2) 3‑D SUMMA, (3) a prior Strassen‑based parallel implementation, and (4) Intel MKL’s highly tuned DGEMM. The new algorithm achieved speedups ranging from 24 % to 184 % over the best baseline at each scale. At about 1 000 nodes, the communication volume per processor dropped to less than 30 % of that incurred by the prior Strassen code, which indicates that the theoretical optimization carries over to practice. Memory consumption was also reduced by roughly 15 % relative to earlier Strassen implementations, easing the pressure on node‑local memory for very large problems.

Beyond Strassen, the authors argue that their framework—fixed sub‑matrix ownership, block‑cycle mapping, and linear‑combination compression—can be transplanted to other fast matrix multiplication schemes such as Coppersmith‑Winograd, Le Gall’s recent algorithms, or any recursive algorithm whose computation graph exhibits sufficient expansion. By re‑deriving the communication lower bound for each algorithm and then applying the same design principles, one can obtain communication‑optimal parallel versions without sacrificing the arithmetic speedup.

In summary, the paper addresses the communication bottleneck in parallel Strassen multiplication. It provides (i) a provably optimal communication cost that matches the known lower bounds, (ii) perfect strong scaling up to the theoretical limit, (iii) speedups of 24 % to 184 % over classical and Strassen‑based algorithms on a Cray XT4, and (iv) a parallelization approach that generalizes to other fast matrix multiplication algorithms. The result is a matrix multiplication kernel that is efficient in both computation and communication, which makes it a natural candidate for high‑performance linear algebra libraries.