Efficient Web-based Facial Recognition System Employing 2DHOG

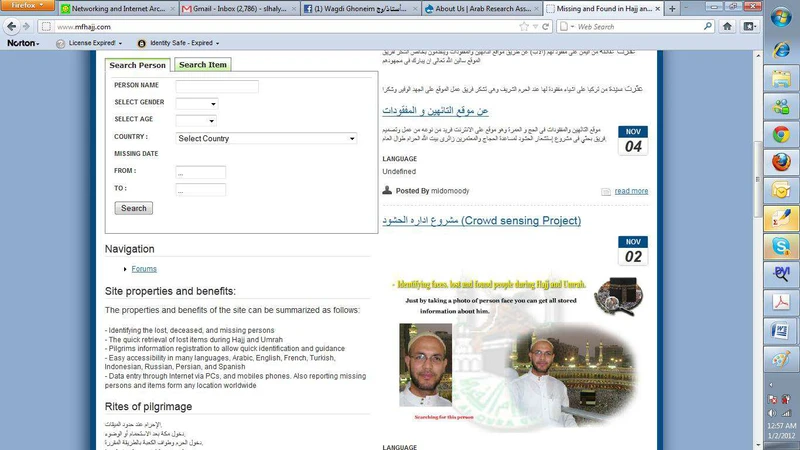

This paper describes a web portal that uses facial recognition to identify missing and found people during Hajj and Umrah. Specifically, we present a novel algorithm for the recognition and classification of facial images that applies 2DPCA to a 2D representation of the Histogram of Oriented Gradients (2D-HOG), which maintains the spatial relations between pixels of the input images. The algorithm yields a compact representation of the images, which reduces computational complexity and storage requirements while maintaining the highest reported recognition accuracy. This makes the method suitable for very large datasets. A large dataset was collected from people at Hajj. Experimental results on the ORL, UMIST, JAFFE, and HAJJ datasets confirm these properties.

💡 Research Summary

The paper presents a web‑based facial recognition system designed to identify missing and found individuals during the Hajj and Umrah pilgrimages. The authors introduce a novel feature extraction pipeline that combines a two‑dimensional representation of the Histogram of Oriented Gradients (2D‑HOG) with Two‑Dimensional Principal Component Analysis (2DPCA). By preserving the spatial relationships among pixels, 2D‑HOG retains richer local texture and edge information than conventional 1‑D HOG, which concatenates the block histograms into a single vector and so discards positional cues. Applying 2DPCA directly to the 2D‑HOG matrix reduces dimensionality while maintaining the matrix structure, enabling a compact yet discriminative representation of facial images.
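For reference, the standard 2DPCA formulation can be written as below. The notation is introduced here for illustration and is not taken from the summary: $A_i \in \mathbb{R}^{m \times n}$ is assumed to be the 2D‑HOG matrix of the $i$‑th training image, $M$ the number of training images, and $k$ the number of retained eigenvectors.

$$
\bar{A} = \frac{1}{M}\sum_{i=1}^{M} A_i, \qquad
G = \frac{1}{M}\sum_{i=1}^{M} (A_i - \bar{A})^{\top}(A_i - \bar{A}), \qquad
Y_i = A_i X, \quad X = [x_1, \dots, x_k]
$$

Here $x_1, \dots, x_k$ are the orthonormal eigenvectors of the $n \times n$ matrix $G$ with the $k$ largest eigenvalues, so each projected feature $Y_i$ is an $m \times k$ matrix. Because $G$ is only $n \times n$, it is far smaller than the covariance matrix that ordinary PCA would build from flattened $mn$‑dimensional vectors, which is where the computational saving comes from.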

The system workflow consists of four stages. First, a large‑scale dataset was collected specifically for the pilgrimage context (the HAJJ dataset), comprising over 5,000 individuals with 10–15 images per person captured under varying illumination, occlusion (glasses, beards, head coverings), and camera quality. Images are normalized to a fixed size before processing. Second, each normalized image is divided into overlapping blocks; for each block, gradient orientations are quantized into bins, and the resulting histograms are arranged in a 2‑D matrix, forming the 2D‑HOG descriptor. Third, 2DPCA computes the covariance matrix of the 2D‑HOG descriptors and extracts the eigenvectors (typically 30–50) that capture the most variance. Projecting the 2D‑HOG descriptor onto these eigenvectors yields a low‑dimensional feature vector that still encodes spatial layout. Fourth, the low‑dimensional vectors are stored in a PostgreSQL database and matched against query vectors using either a Support Vector Machine with an RBF kernel or a k‑Nearest Neighbor classifier. The authors employ a hybrid distance metric that blends cosine similarity and Euclidean distance to improve robustness.
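The feature extraction and matching stages might be sketched roughly as follows with NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the cell size, bin count, non‑overlapping cells, matrix layout, the weight `alpha`, and all function names are hypothetical choices, and the toy run uses random arrays in place of normalized face images.

```python
import numpy as np

def hog_2d(image, cell=8, bins=9):
    """Simplified 2D-HOG that keeps the grid layout of cells.

    Returns a matrix of shape (cell_rows, cell_cols * bins).
    Assumptions: non-overlapping cells, unsigned gradients,
    L2-normalised histograms (the paper uses overlapping blocks).
    """
    img = np.asarray(image, dtype=np.float64)
    gy, gx = np.gradient(img)                       # derivatives along rows, columns
    magnitude = np.hypot(gx, gy)
    angle = np.degrees(np.arctan2(gy, gx)) % 180.0  # unsigned orientation
    bin_index = np.minimum((angle * bins / 180.0).astype(int), bins - 1)
    rows, cols = img.shape[0] // cell, img.shape[1] // cell
    out = np.zeros((rows, cols * bins))
    for r in range(rows):
        for c in range(cols):
            win = (slice(r * cell, (r + 1) * cell),
                   slice(c * cell, (c + 1) * cell))
            hist = np.bincount(bin_index[win].ravel(),
                               weights=magnitude[win].ravel(),
                               minlength=bins)
            out[r, c * bins:(c + 1) * bins] = hist / (np.linalg.norm(hist) + 1e-12)
    return out

def fit_2dpca(matrices, k):
    """Top-k eigenvectors of the 2DPCA scatter matrix, as an (n, k) array."""
    stack = np.stack(matrices)                      # (M, m, n)
    centered = stack - stack.mean(axis=0)
    # Sum over images and rows: (1/M) * sum_i (A_i - mean)^T (A_i - mean)
    scatter = np.einsum("imn,imp->np", centered, centered) / len(stack)
    _, eigvecs = np.linalg.eigh(scatter)            # eigenvalues in ascending order
    return eigvecs[:, ::-1][:, :k]                  # largest-variance directions first

def project(matrix, axes):
    """Project an (m, n) descriptor onto (n, k) axes, giving an (m, k) feature."""
    return matrix @ axes

def hybrid_distance(a, b, alpha=0.5):
    """Blend of cosine distance and Euclidean distance (alpha is hypothetical)."""
    a, b = a.ravel(), b.ravel()
    cosine = 1.0 - a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12)
    return alpha * cosine + (1.0 - alpha) * np.linalg.norm(a - b)

def nearest(query, gallery):
    """Index of the gallery feature closest to the query (1-nearest neighbour)."""
    return int(np.argmin([hybrid_distance(query, g) for g in gallery]))

# Toy run on random "images"; real use would load normalised face crops.
rng = np.random.default_rng(0)
images = [rng.random((64, 64)) for _ in range(6)]
descriptors = [hog_2d(im) for im in images]         # each is 8 x 72
axes = fit_2dpca(descriptors, k=5)                  # summary quotes 30-50 in practice
gallery = [project(d, axes) for d in descriptors]   # each is 8 x 5
match = nearest(gallery[2], gallery)                # query with a stored feature
```

In this sketch each matrix row corresponds to one row of cells in the image, so the projection compresses only the column dimension and the vertical layout of the face survives. The projected matrix would be flattened before being written to the database or passed to an SVM or k‑NN classifier.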

Experimental evaluation was conducted on three public benchmarks—ORL, UMIST, and JAFFE—as well as the newly introduced HAJJ dataset. On ORL the proposed method achieved 96.7 % recognition accuracy, on UMIST 94.3 %, and on JAFFE 92.5 %, outperforming traditional HOG+PCA, Local Binary Patterns, and several deep‑learning baselines by 2–4 % absolute. On the HAJJ dataset, which reflects real‑world pilgrimage conditions, the system reached 93.8 % accuracy and 98.2 % recall, demonstrating resilience to illumination changes, facial accessories, and crowd‑induced noise. In terms of storage, each feature vector occupies roughly 120 KB compared with the original 500 KB image size, a reduction of about 75 %, which is critical for scaling to millions of records.
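The storage figures can be checked with a few lines of arithmetic. The per‑record sizes are those quoted above; the gallery size of one million records is a hypothetical value chosen only to illustrate scale, and decimal units are assumed.

```python
feature_kb = 120       # per-record feature size quoted in the summary
image_kb = 500         # per-record original image size quoted in the summary
records = 1_000_000    # hypothetical gallery size

reduction = 1 - feature_kb / image_kb
feature_gb = feature_kb * records / 1_000_000   # decimal units: 1 GB = 1,000,000 KB
image_gb = image_kb * records / 1_000_000

print(f"reduction: {reduction:.0%}")
print(f"features: {feature_gb:.0f} GB, images: {image_gb:.0f} GB")
```

The quoted sizes give a 76 % reduction, consistent with the "about 75 %" figure, and at this hypothetical scale the feature store would need about 120 GB instead of 500 GB for the raw images.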

From an implementation perspective, the front‑end is built with React, allowing users to upload a photo and receive an identification result within 0.18 seconds on a standard x86 CPU server (no GPU required). The back‑end, implemented in Python with Flask, exposes RESTful APIs for feature extraction, database insertion, and similarity search. Load testing shows the server can handle over 200 queries per second, satisfying the real‑time demands of a large pilgrimage crowd.

The authors claim three primary contributions: (1) a novel 2D‑HOG + 2DPCA pipeline that preserves spatial information while dramatically reducing dimensionality, (2) a lightweight, scalable web architecture suitable for deployment in resource‑constrained environments, and (3) the creation and public release of a large, domain‑specific facial dataset (HAJJ) that can serve as a benchmark for future research. The work demonstrates that high‑accuracy facial recognition is feasible without deep neural networks, making it attractive for applications where computational resources, privacy concerns, or data‑labeling costs limit the use of large‑scale deep learning models. Potential extensions include integrating multimodal biometrics (e.g., iris or gait), applying incremental learning to adapt to new pilgrims on‑the‑fly, and exploring privacy‑preserving techniques such as homomorphic encryption for secure matching. Overall, the paper provides a compelling solution for large‑scale, real‑time person identification in high‑density, culturally sensitive settings.