Characterization of Round-Trip Times in the Internet (Caracterização de tempos de ida-e-volta na Internet)

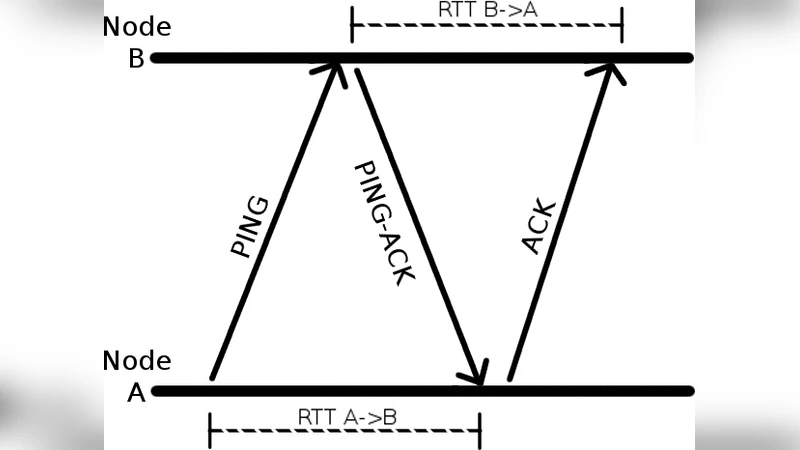

Round-trip times (RTTs) are an important metric for the operation of many applications in the Internet. For instance, they are taken into account when choosing servers or peers in streaming systems, and they impact the operation of fault detectors and congestion control algorithms. Therefore, detailed knowledge about RTTs is important for application and protocol developers. In this work we present results on measuring RTTs between 81 PlanetLab nodes every ten seconds, for ten days. The resulting dataset has over 550 million measurements. Our analysis gives us a profile of delays in the network and identifies a Gamma distribution as the model that best fits our data. The average times observed are below 500 ms in more than 99% of the pairs, but there is significant variation, both across different pairs of hosts during the experiment and for any given pair of hosts over time. By using a clustering technique, we observe that links can be divided into five distinct groups based on the distribution of RTTs over time and the losses observed, ranging from groups of nearby, well-connected pairs to groups of distant hosts with lower-quality links between them.

💡 Research Summary

The paper presents a large‑scale empirical study of Internet round‑trip times (RTTs) and proposes a statistical model that captures their distribution and variability. Using PlanetLab, the authors measured RTTs between every pair of 81 globally distributed nodes every ten seconds for ten consecutive days, yielding more than 550 million RTT samples together with loss information. Basic statistics show that the majority of links (over 99 %) have an average RTT below 500 ms, yet there is considerable heterogeneity: some intra‑datacenter links exhibit sub‑30 ms delays while inter‑continental paths can exceed 1 second, and loss rates range from 0 % to over 8 %.

To identify an appropriate probability distribution, the authors fitted several candidates (normal, log‑normal, Weibull, exponential, and gamma) to the per‑link RTT series using maximum‑likelihood estimation. Goodness‑of‑fit was evaluated with Kolmogorov‑Smirnov and Anderson‑Darling tests, as well as AIC/BIC criteria. The gamma distribution consistently achieved the best fit across all links, reflecting the positive‑only, right‑skewed nature of RTT data. The shape (α) and scale (β) parameters vary widely, with low‑α values for long‑delay, high‑variance links and higher α for stable, low‑delay connections.
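The fitting-and-comparison step can be illustrated with a minimal sketch. It is not the authors' code: the RTT samples are synthetic, the function names are hypothetical, and the gamma shape is estimated with a well-known closed-form approximation to the maximum-likelihood solution rather than an exact numerical solver. Only gamma and normal are compared here, using AIC.

```python
import math
import random

def fit_gamma(samples):
    """Approximate MLE of gamma shape and scale (closed-form approximation)."""
    n = len(samples)
    mean = sum(samples) / n
    mean_log = sum(math.log(x) for x in samples) / n
    # s > 0 unless all samples are identical (Jensen's inequality).
    s = math.log(mean) - mean_log
    shape = (3 - s + math.sqrt((s - 3) ** 2 + 24 * s)) / (12 * s)
    scale = mean / shape  # exact MLE of the scale once the shape is fixed
    return shape, scale

def gamma_loglik(samples, shape, scale):
    """Log-likelihood of the samples under Gamma(shape, scale)."""
    const = math.lgamma(shape) + shape * math.log(scale)
    return sum((shape - 1) * math.log(x) - x / scale - const for x in samples)

def normal_loglik(samples):
    """Log-likelihood under a normal distribution at its own MLE."""
    n = len(samples)
    mu = sum(samples) / n
    var = sum((x - mu) ** 2 for x in samples) / n
    return -0.5 * n * (math.log(2 * math.pi * var) + 1)

def aic(loglik, num_params):
    """Akaike information criterion: lower is better."""
    return 2 * num_params - 2 * loglik

# Synthetic RTTs in ms (assumption: shape 2, scale 40; not measured data).
rng = random.Random(42)
rtts = [rng.gammavariate(2.0, 40.0) for _ in range(5000)]

shape, scale = fit_gamma(rtts)
aic_gamma = aic(gamma_loglik(rtts, shape, scale), 2)
aic_normal = aic(normal_loglik(rtts), 2)
print(f"shape={shape:.2f} scale={scale:.1f}")
print(f"AIC gamma={aic_gamma:.0f} AIC normal={aic_normal:.0f}")
```

On right-skewed, strictly positive data such as this, the gamma fit should obtain the lower AIC. A fuller comparison would add the other candidate distributions and the Kolmogorov‑Smirnov and Anderson‑Darling tests mentioned above.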

Temporal analysis reveals pronounced diurnal and weekly patterns. During peak business hours in North America and Europe, average RTTs rise by up to 50 % compared to off‑peak periods, while weekends generally show lower latency. This suggests that congestion and routing dynamics are major contributors to RTT fluctuation.

The authors then apply a clustering approach to categorize links based on five features: mean RTT, standard deviation, 95th‑percentile RTT, packet loss rate, and average packet size. Hierarchical clustering combined with k‑means, guided by silhouette scores and the elbow method, yields five distinct groups:

- High‑speed, low‑latency (mean < 30 ms, loss < 0.1 %) – typically intra‑datacenter or same‑city fiber links.

- Medium‑latency (30–150 ms, loss 0.1–0.5 %) – major metropolitan backbones.

- Long‑distance, low‑loss (150–300 ms, loss < 0.5 %) – inter‑continental backbone paths with stable routing.

- Long‑distance, higher‑loss (300–500 ms, loss 0.5–2 %) – routes traversing multiple congested hops.

- Extreme latency/loss (mean > 500 ms, loss > 2 %) – satellite links or paths affected by sub‑optimal routing.

Each cluster’s RTT distribution is well described by a cluster‑specific gamma parameter set, confirming that the statistical model aligns with the observed performance categories.
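The grouping of links can be sketched with a plain k‑means pass over standardized per-link features. This is an illustration under stated assumptions, not the procedure used in the paper: the link data is synthetic, only two features (mean RTT and loss rate) are used, the hierarchical step and the silhouette/elbow selection of k are omitted, and the centers are seeded with a simple farthest-point heuristic.

```python
import math
import random

def standardize(rows):
    """Z-score each feature column so RTT (ms) and loss (%) are comparable."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

def dist2(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iterations=50):
    """Lloyd's algorithm with farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        # Next center: the point farthest from all centers chosen so far.
        centers.append(max(points,
                           key=lambda p: min(dist2(p, c) for c in centers)))
    labels = []
    for _ in range(iterations):
        new_labels = [min(range(k), key=lambda j: dist2(p, centers[j]))
                      for p in points]
        if new_labels == labels:
            break  # assignments are stable: converged
        labels = new_labels
        for j in range(k):
            members = [p for p, l in zip(points, labels) if l == j]
            if members:
                centers[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels

# Hypothetical (mean RTT in ms, loss in %) profiles, loosely following the
# five groups listed above; the values are made up for illustration.
profiles = [(15, 0.05), (90, 0.3), (225, 0.3), (400, 1.2), (700, 3.0)]
rng = random.Random(0)
links, truth = [], []
for group, (rtt, loss) in enumerate(profiles):
    for _ in range(20):
        links.append([rtt + rng.uniform(-5, 5), loss + rng.uniform(-0.02, 0.02)])
        truth.append(group)

labels = kmeans(standardize(links), k=5)
print(sorted(set(labels)))
```

Standardizing first matters because RTT in milliseconds would otherwise dominate the distance computation over loss percentages.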

To demonstrate practical relevance, the authors integrate the gamma‑derived delay model into the NS‑3 network simulator and evaluate TCP congestion control algorithms (Cubic, BBR) and a UDP‑based streaming protocol. Simulations that use the gamma model reproduce the measured packet‑retransmission counts and latency percentiles within the 95 % confidence interval, outperforming simulations that assume a normal distribution by roughly 12 % in accuracy. This validates the model’s utility for protocol design, performance prediction, and network planning.
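One reason a gamma delay model can behave better in simulation than a normal one is that it never produces negative delays. The sketch below illustrates this with Python's standard library only. It is not NS‑3 code and does not reproduce the authors' integration: the mean and standard deviation are hypothetical, and the gamma parameters are derived by the method of moments (shape = mean²/variance, scale = variance/mean) rather than by maximum likelihood.

```python
import random

def gamma_params_from_moments(mean, std):
    """Method-of-moments gamma parameters matching a given mean and std."""
    shape = (mean / std) ** 2
    scale = std ** 2 / mean
    return shape, scale

rng = random.Random(1)
mean_ms, std_ms = 200.0, 150.0  # hypothetical long-distance, high-variance link

shape, scale = gamma_params_from_moments(mean_ms, std_ms)
gamma_delays = [rng.gammavariate(shape, scale) for _ in range(10000)]
normal_delays = [rng.gauss(mean_ms, std_ms) for _ in range(10000)]

# Count physically impossible (negative) delays under each model.
negative_gamma = sum(d < 0 for d in gamma_delays)
negative_normal = sum(d < 0 for d in normal_delays)
gamma_mean = sum(gamma_delays) / len(gamma_delays)

print(f"gamma: mean={gamma_mean:.1f} ms, negative samples={negative_gamma}")
print(f"normal: negative samples={negative_normal}")
```

With a standard deviation this large relative to the mean, a noticeable fraction of the normal samples falls below zero and would have to be clipped or redrawn, which distorts the simulated delay distribution.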

In conclusion, the study makes several key contributions: (1) a publicly released dataset of over half a billion RTT measurements, (2) statistical evidence that RTTs follow a gamma distribution, (3) a five‑cluster taxonomy linking RTT characteristics to network topology and quality, (4) empirical validation that the gamma model improves simulation fidelity, and (5) actionable guidance for application developers and network engineers when selecting servers or peers and when configuring timeout parameters. The outlined future work would extend the methodology to mobile 5G/6G environments and edge computing scenarios, and would compare the gamma approach with machine‑learning‑based RTT predictors to assess how well it generalizes to emerging network paradigms.