Open Data: Reverse Engineering and Maintenance Perspective

Open data is an emerging paradigm to share large and diverse datasets – primarily from governmental agencies, but also from other organizations – with the goal of enabling the exploitation of the data for societal, academic, and commercial gains. Many datasets are already available, with diverse characteristics in terms of size, encoding and structure. These datasets are often created and maintained in an ad-hoc manner. Thus, open data poses many challenges and there is a need for effective tools and techniques to manage and maintain it. In this paper we argue that software maintenance and reverse engineering have an opportunity to contribute to open data and to shape its future development. From the perspective of reverse engineering research, open data is a new artifact that serves as input for reverse engineering techniques and processes. Specific challenges of open data are document scraping, image processing, and structure/schema recognition. From the perspective of maintenance research, maintenance has to accommodate changes of open data sources by third-party providers, traceability of data transformation pipelines, and quality assurance of data and transformations. We believe that the increasing importance of open data and the research challenges that it brings with it may lead to the emergence of new research streams for reverse engineering as well as for maintenance.

💡 Research Summary

The paper positions open data (publicly released, large‑scale, heterogeneous datasets from governments, agencies, and private entities) as a novel software artifact that presents unique challenges for collection, transformation, and long‑term upkeep. While the open‑data movement promises societal, academic, and commercial benefits, most datasets are published in an ad‑hoc fashion, in a wide variety of encodings (CSV, JSON, XML, PDF, raster images, GIS formats, etc.), and are subject to frequent, undocumented changes by the data providers. Consequently, data consumers face high entry barriers and ongoing maintenance costs.

From a reverse engineering perspective, the authors argue that open data becomes the input for classic reverse‑engineering techniques, albeit with domain‑specific twists. Three core reverse‑engineering problems are identified:

-

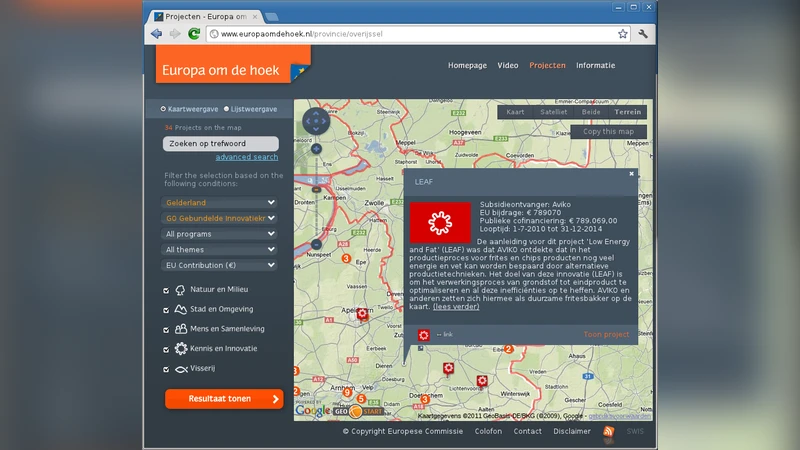

Document Scraping – Automated extraction from web portals or APIs requires analysis of HTML/JavaScript structures, handling of dynamic content, authentication, and anti‑scraping measures. Because providers often redesign pages without notice, scrapers must incorporate change‑detection and self‑reconstruction capabilities.

- Image and PDF Processing – Many public datasets are delivered as scanned PDFs, satellite imagery, or other raster formats. Effective use demands OCR pipelines, image pre‑processing (de‑skewing, noise removal), and layout analysis. Variability in fonts, resolutions, and languages makes a static OCR configuration insufficient; adaptive, learning‑based approaches are needed to correct systematic errors.

- Structure/Schema Recognition – When data appear in semi‑structured or unstructured forms, reverse engineers must infer column semantics, data types, and relational models. Techniques such as statistical profiling, naming‑pattern analysis, and alignment with external ontologies become essential for automatically generating usable schemas.
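As an illustration of the statistical-profiling idea in the last item, the following minimal sketch guesses a type for each column of a CSV file by counting which kinds of values it contains. It is not taken from the paper: the function names `classify` and `infer_schema`, the four type labels, and the 0.9 agreement threshold are all assumptions made for this example, and a real schema-recognition tool would also need naming-pattern analysis and ontology alignment.

```python
import csv
import io
from collections import Counter
from datetime import date


def classify(value: str) -> str:
    """Return a coarse type label for one cell (labels are illustrative)."""
    text = value.strip()
    if text == "":
        return "empty"
    try:
        int(text)
        return "integer"
    except ValueError:
        pass
    try:
        float(text)
        return "float"
    except ValueError:
        pass
    try:
        date.fromisoformat(text)  # accepts YYYY-MM-DD
        return "date"
    except ValueError:
        return "string"


def infer_schema(csv_text: str, threshold: float = 0.9) -> dict[str, dict]:
    """Profile every column and pick its dominant type.

    `threshold` (an assumed default) is the share of non-empty cells that
    must agree before a type narrower than "string" is accepted.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = {name: Counter() for name in reader.fieldnames or []}
    for row in reader:
        for name in counts:
            counts[name][classify(row.get(name) or "")] += 1

    schema = {}
    for name, counter in counts.items():
        empty = counter.pop("empty", 0)
        filled = sum(counter.values())
        # Every integer is also a valid float, so merge them when floats occur.
        if counter["float"]:
            counter["float"] += counter.pop("integer", 0)
        label, hits = counter.most_common(1)[0] if filled else ("string", 0)
        if filled and hits / filled < threshold:
            label = "string"  # too mixed to trust a narrower type
        schema[name] = {"type": label, "nullable": empty > 0}
    return schema
```

Falling back to `"string"` when values disagree is a deliberately conservative choice: a wrong narrow type tends to break downstream transformations, whereas a string column can still be refined later.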

From a software maintenance standpoint, the paper highlights three complementary challenges:

- Accommodating Provider Changes – Data publishers may alter formats, licensing terms, or access mechanisms at any time. Effective maintenance requires versioning of data sources, automated monitoring for breaking changes, and mechanisms to trigger regeneration or adaptation of transformation scripts.

- Traceability of Transformation Pipelines – End‑to‑end data workflows (raw → cleaned → analysis‑ready) must be documented with explicit input‑output relationships, script versions, and configuration parameters. Storing this metadata in a version‑controlled repository enables impact analysis, debugging, and regulatory auditing.

- Quality Assurance – Data quality spans accuracy, completeness, consistency, timeliness, and provenance. The authors propose integrating automated test suites into continuous integration/continuous deployment (CI/CD) pipelines: schema validation, range checks, duplicate detection, and temporal continuity tests run automatically whenever a dataset is refreshed. Test results are reported through dashboards, providing immediate feedback to data engineers and downstream analysts.
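Three of the checks named in the quality-assurance item (range checks, duplicate detection, and temporal continuity) can be sketched in a few lines. The code below is a hypothetical example rather than the authors' test suite: the field names `station`, `day`, and `pm10`, the accepted range of 0 to 1000, and the one-day step are invented for illustration, and rows are assumed to be already parsed into dictionaries.

```python
from datetime import date, timedelta


def out_of_range(rows, field, low, high) -> list[int]:
    """Indexes of rows whose numeric field is missing or outside [low, high]."""
    bad = []
    for i, row in enumerate(rows):
        value = row.get(field)
        if not isinstance(value, (int, float)) or not low <= value <= high:
            bad.append(i)
    return bad


def duplicates(rows, key_fields) -> list[int]:
    """Indexes of rows whose key was already seen in an earlier row."""
    seen = set()
    bad = []
    for i, row in enumerate(rows):
        key = tuple(row.get(f) for f in key_fields)
        if key in seen:
            bad.append(i)
        seen.add(key)
    return bad


def temporal_gaps(rows, field, step=timedelta(days=1)) -> list[tuple[date, date]]:
    """Pairs of consecutive dates that lie further apart than `step`."""
    days = sorted({row[field] for row in rows})
    return [(a, b) for a, b in zip(days, days[1:]) if b - a > step]


def run_checks(rows) -> dict[str, list]:
    """Run every check and keep only those that reported problems.

    Field names and bounds are assumptions for this sketch.
    """
    report = {
        "range": out_of_range(rows, "pm10", 0, 1000),
        "duplicates": duplicates(rows, ("station", "day")),
        "gaps": temporal_gaps(rows, "day"),
    }
    return {name: found for name, found in report.items() if found}
```

In a CI job, a non-empty result from `run_checks` would typically fail the build for the refreshed dataset, and the returned dictionary could be fed into whatever dashboard the team uses.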

The authors synthesize these observations into a unified data‑management framework that blends reverse‑engineering automation with maintenance best practices. The framework consists of:

- A collection module that combines web‑scraping, API harvesting, and image‑to‑text conversion, equipped with change‑monitoring agents.

- A schema‑extraction module that employs statistical profiling, pattern mining, and external metadata linking to produce machine‑readable schemas.

- A pipeline‑management module that records each transformation step as a first‑class artifact, version‑controls scripts, and triggers re‑generation when upstream changes are detected.

- A quality‑assurance module that runs a battery of automated tests, logs failures, and visualizes metrics for stakeholders.
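To make the pipeline-management module more concrete, here is a minimal sketch of recording a transformation step as an artifact and detecting when an upstream source has changed. It assumes that content hashing is an acceptable change signal; `StepRecord`, `run_step`, `needs_regeneration`, `to_json`, and the sample transform `drop_blank_lines` are hypothetical names introduced for this example and do not come from the paper.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Callable


def fingerprint(data: bytes) -> str:
    """SHA-256 hex digest used to identify one version of a dataset."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class StepRecord:
    """Provenance of one transformation step (fields are illustrative)."""
    step: str
    script_version: str
    params: dict
    input_sha256: str
    output_sha256: str


def run_step(step: str, script_version: str,
             transform: Callable[..., bytes], raw: bytes, **params):
    """Apply `transform` and return its output together with a record."""
    output = transform(raw, **params)
    record = StepRecord(step, script_version, dict(params),
                        fingerprint(raw), fingerprint(output))
    return output, record


def needs_regeneration(record: StepRecord, current_raw: bytes) -> bool:
    """True when the upstream data no longer matches the recorded input."""
    return fingerprint(current_raw) != record.input_sha256


def to_json(record: StepRecord) -> str:
    """Stable text form, suitable for committing next to the scripts."""
    return json.dumps(asdict(record), sort_keys=True)


def drop_blank_lines(raw: bytes, encoding: str = "utf-8") -> bytes:
    """Example transform: remove empty lines from a text file."""
    lines = raw.decode(encoding).splitlines()
    return "\n".join(line for line in lines if line.strip()).encode(encoding)
```

A monitoring agent could periodically download the source, call `needs_regeneration` against the stored record, and rerun the step only when it returns `True`. Hashing detects any byte-level change, including harmless ones, so a real system would probably add a cheaper or more semantic comparison on top.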

Future research directions are outlined: (i) leveraging machine‑learning models for semantic schema inference and ontology alignment; (ii) predictive analytics to anticipate provider‑side changes and proactively adjust pipelines; and (iii) embedding legal and ethical provenance metadata to satisfy compliance and audit requirements.

In conclusion, the paper argues that open data is not merely raw material for analysis but a software‑like artifact that benefits from the rigor of reverse engineering and maintenance disciplines. By applying automated extraction, adaptive transformation, rigorous versioning, and systematic quality testing, the community can lower the cost of data reuse, improve reliability, and unlock the full potential of open data for research, innovation, and public good.
