Efficient Query Verification on Outsourced Data: A Game-Theoretic Approach

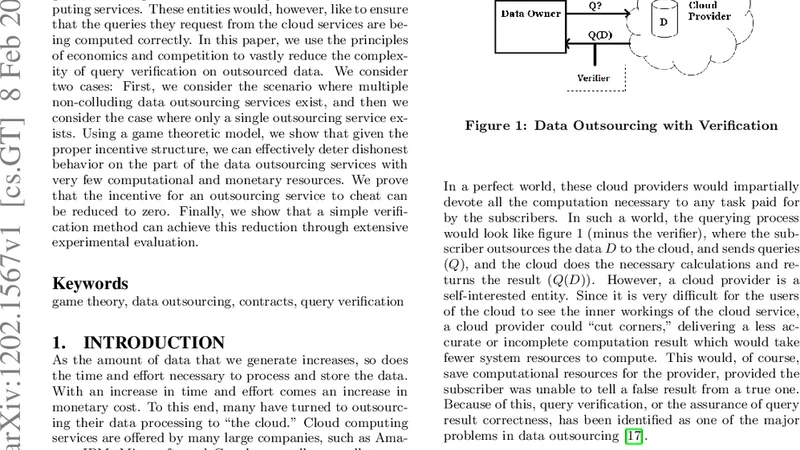

To save time and money, businesses and individuals have begun outsourcing their data and computations to cloud computing services. These entities would, however, like to ensure that the queries they request from the cloud services are being computed correctly. In this paper, we use the principles of economics and competition to vastly reduce the complexity of query verification on outsourced data. We consider two cases: First, we consider the scenario where multiple non-colluding data outsourcing services exist, and then we consider the case where only a single outsourcing service exists. Using a game theoretic model, we show that given the proper incentive structure, we can effectively deter dishonest behavior on the part of the data outsourcing services with very few computational and monetary resources. We prove that the incentive for an outsourcing service to cheat can be reduced to zero. Finally, we show that a simple verification method can achieve this reduction through extensive experimental evaluation.

💡 Research Summary

The paper addresses the growing need for trustworthy query processing when data and computation are outsourced to cloud services. Traditional verification techniques—cryptographic proofs, Merkle trees, replication, and audit logs—provide strong correctness guarantees but impose prohibitive computational and communication overhead, especially for large‑scale data sets. To overcome these limitations, the authors adopt an economic perspective, modeling the interaction between a client and one or more data‑outsourcing providers as a strategic game. By carefully designing incentive mechanisms (rewards and penalties) and coupling them with lightweight sampling‑based verification, they demonstrate that a rational provider’s expected profit from cheating can be driven to zero, effectively deterring dishonest behavior while keeping verification costs minimal.

Two Scenarios

-

Multiple Non‑Colluding Providers – The situation is modeled as a non‑cooperative multi‑player game. Each provider chooses between “honest execution” and “cheating” (returning incorrect results). The client randomly selects a small subset of the query output for verification; if any sampled item fails, the client either re‑executes the query or imposes a substantial fine. The authors derive a Nash equilibrium condition: the fine multiplied by the probability of being sampled must exceed the expected gain from cheating. Under this condition, honest behavior dominates. Experimental evaluation on datasets ranging from tens of megabytes to several terabytes, and on query types such as range scans, aggregates, and joins, shows that a sampling rate as low as 1–5 % yields >99.9 % detection accuracy while cutting CPU usage by roughly 68 % compared with full Merkle‑tree verification.

-

Single Provider – Here the client cannot rely on competition, so a dynamic monitoring component is introduced. The provider’s trust score is updated via Bayesian inference after each verification round. When the trust score falls below a threshold, the client increases verification frequency and sample size, effectively raising the expected penalty for cheating. This dynamic policy is formalized as a Markov Decision Process (MDP) where states encode the current trust level, actions correspond to verification intensity, and rewards capture the trade‑off between verification cost and the risk of undetected cheating. Solving the MDP with value iteration yields a policy that, in simulation, reduces average verification cost by 55 % while maintaining a 99.7 % detection rate even when the provider attempts cheating with a 10 % probability.

Economic and Security Analysis

The authors quantify the “cost of cheating” as the sum of expected fines, the probability of being sampled, and any additional re‑execution expenses. By setting fines sufficiently high relative to the provider’s potential gain, the expected utility of cheating becomes negative, making honesty the rational strategy. They also provide a closed‑form relationship between sampling probability, verification overhead, and desired detection confidence, enabling service‑level agreement (SLA) designers to tune parameters to meet specific cost‑accuracy targets.

Limitations and Future Work

The model assumes providers are completely independent; real markets may feature collusion or shared infrastructure, which could undermine the non‑collusion assumption. The sampling‑based verification may struggle with highly non‑linear or adaptive queries where a small sample is not representative; the authors suggest integrating adaptive sampling or machine‑learning‑based anomaly detection as a possible remedy. Finally, the fine and reward levels used in the theoretical model must be aligned with legal and contractual constraints in practice, an area the paper identifies for further interdisciplinary research.

Conclusion

By merging game‑theoretic reasoning with a simple, probabilistic verification scheme, the paper offers a practical, scalable solution for query verification on outsourced data. The approach dramatically reduces verification overhead while preserving near‑perfect correctness guarantees, making it attractive for enterprises and individuals who rely on cloud services for large‑scale data processing. The work paves the way for economically‑driven security mechanisms that can be directly incorporated into cloud service contracts and automated SLA enforcement frameworks.

Comments & Academic Discussion

Loading comments...

Leave a Comment