Wikipedia Arborification and Stratified Explicit Semantic Analysis

[This is the translation of paper “Arborification de Wikipédia et analyse sémantique explicite stratifiée” submitted to TALN 2012.] We present an extension of the Explicit Semantic Analysis method by Gabrilovich and Markovitch. Using their semantic relatedness measure, we weight the Wikipedia categories graph. Then, we extract a minimal spanning tree, using Chu-Liu & Edmonds’ algorithm. We define a notion of stratified tfidf where the strata, for a given Wikipedia page and a given term, are the classical tfidf and the categorical tfidfs of the term in the ancestor categories of the page (ancestors in the sense of the minimal spanning tree). Our method is based on this stratified tfidf, which adds extra weight to terms that “survive” when climbing up the category tree. We evaluate our method through text classification on the WikiNews corpus: it increases precision by 18%. Finally, we provide hints for future research.

💡 Research Summary

The paper proposes an extension of Explicit Semantic Analysis (ESA), a well‑known technique that represents texts as vectors in a high‑dimensional space of Wikipedia concepts. While the original ESA treats each Wikipedia article independently, the authors argue that the rich hierarchical category system of Wikipedia contains valuable semantic information that can be leveraged to improve text similarity and classification.

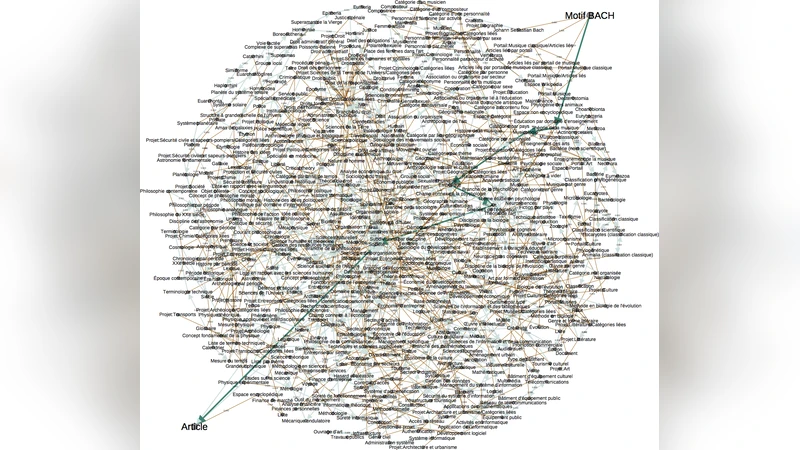

First, the authors construct a weighted directed graph whose nodes are Wikipedia categories. The weight of an edge from category A to its parent B is defined as the cosine similarity between the ESA vectors of the two categories, i.e., the same similarity measure that ESA uses for documents. This step translates the purely structural “is‑a” relationship into a semantic proximity score, ensuring that closely related categories receive higher weights.
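As a minimal sketch of this weighting step, the snippet below computes the cosine similarity between two sparse concept vectors. The dictionary representation (concept name mapped to weight), the function name `cosine_similarity`, and the toy vectors are assumptions made for illustration; they are not taken from the paper.

```python
import math
from typing import Dict


def cosine_similarity(u: Dict[str, float], v: Dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors stored as dicts."""
    # Only concepts present in both vectors contribute to the dot product.
    dot = sum(weight * v[concept] for concept, weight in u.items() if concept in v)
    norm_u = math.sqrt(sum(weight * weight for weight in u.values()))
    norm_v = math.sqrt(sum(weight * weight for weight in v.values()))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # convention chosen here for empty vectors
    return dot / (norm_u * norm_v)


# Hypothetical ESA vectors for a category and its parent category.
child_vector = {"Quantum mechanics": 3.0, "Optics": 4.0}
parent_vector = {"Quantum mechanics": 3.0, "Optics": 4.0, "Chemistry": 12.0}

# Edge weight for the link child -> parent.
edge_weight = cosine_similarity(child_vector, parent_vector)
```

In this toy case the shared concepts give a dot product of 25 and the norms are 5 and 13, so the edge weight is 25 / 65.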

Next, they apply the Chu‑Liu & Edmonds algorithm to extract a minimum‑weight spanning arborescence (a directed minimum spanning tree) from the weighted graph. The resulting tree preserves a unique parent for every category while minimizing the total semantic distance. In other words, the tree provides a compact, globally optimal hierarchy that reflects the underlying semantic closeness of categories rather than the raw editorial hierarchy.
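The extraction step can be illustrated with a compact recursive version of the Chu-Liu/Edmonds procedure. This is a sketch under stated assumptions rather than the authors' implementation: edges are stored as `(parent, child)` pairs so that each category selects exactly one parent, the costs are taken to be distances (for example 1 minus the similarity, which is an assumption, since a minimum-weight tree over raw similarities would favor the least related parents), and every category is assumed reachable from the root. The function name and the toy graph are hypothetical.

```python
from typing import Dict, Hashable, Tuple

Edge = Tuple[Hashable, Hashable]  # (parent, child)


def min_arborescence(edges: Dict[Edge, float], root: Hashable) -> Dict[Hashable, Hashable]:
    """Return {child: parent} for a minimum-cost arborescence rooted at `root`."""
    # 1. Every non-root node greedily picks its cheapest incoming edge.
    best: Dict[Hashable, Hashable] = {}
    for (u, v), cost in edges.items():
        if v != root and u != v and (v not in best or cost < edges[(best[v], v)]):
            best[v] = u

    # 2. Follow the chosen parents to look for a cycle.
    cycle = None
    for start in best:
        path, node = [], start
        while node in best and node not in path:
            path.append(node)
            node = best[node]
        if node in path:  # walked back onto the path: cycle found
            cycle = path[path.index(node):]
            break
    if cycle is None:
        return best  # the greedy choice is already a tree

    # 3. Contract the cycle into one super node and adjust entering costs.
    in_cycle = set(cycle)
    super_node = ("cycle", tuple(cycle))
    new_edges: Dict[Edge, float] = {}
    origin: Dict[Edge, Edge] = {}
    for (u, v), cost in edges.items():
        if u in in_cycle and v in in_cycle:
            continue
        if v in in_cycle:  # entering edge: pay the extra over the cycle edge it replaces
            key, new_cost = (u, super_node), cost - edges[(best[v], v)]
        elif u in in_cycle:  # leaving edge
            key, new_cost = (super_node, v), cost
        else:
            key, new_cost = (u, v), cost
        if key not in new_edges or new_cost < new_edges[key]:
            new_edges[key], origin[key] = new_cost, (u, v)

    # 4. Solve the smaller problem, then expand the super node again.
    contracted = min_arborescence(new_edges, root)
    tree = {v: best[v] for v in cycle}  # keep the cycle edges ...
    for child, parent in contracted.items():
        u, v = origin[(parent, child)]
        tree[v] = u  # ... except the one displaced by the entering edge
    return tree


# Toy category graph; costs are hypothetical distances (1 - similarity).
toy_edges = {
    ("R", "A"): 0.9, ("A", "B"): 0.1, ("B", "A"): 0.2,
    ("R", "B"): 0.7, ("B", "C"): 0.3, ("A", "C"): 0.5,
}
toy_tree = min_arborescence(toy_edges, "R")
```

On the toy graph, the greedy choice creates the cycle A ↔ B; after contraction the cycle is entered through R → B, so the result assigns B to R, and both A and C to B, for a total cost of 1.2.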

The core contribution is the notion of “stratified tf‑idf” (also called stratified term frequency‑inverse document frequency). For a given Wikipedia page p and a term w, the authors compute not only the classic tf‑idf of w in p (level 0) but also the tf‑idf of w in each ancestor category of p within the spanning tree (levels 1, 2, …). Each level is multiplied by a decay factor α_k (α_0 = 1, α_1 ≈ 0.7, α_2 ≈ 0.5, etc.) and summed:

S(w, p) = ∑_{k=0}^{K} α_k · tfidf_k(w, c_k(p))

where c_0(p) = p is the page itself and, for k ≥ 1, c_k(p) denotes the k‑th ancestor category of p in the spanning tree. This formulation rewards terms that “survive” as salient across multiple levels of abstraction: a term that remains important in both the article and its higher‑level categories is considered a robust semantic cue, while a term that appears only in the article (perhaps due to noise or idiosyncratic usage) receives a lower overall score.
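The sum above can be sketched in a few lines. The data layout is an assumption made for illustration: per-page and per-category tf‑idf values are given as precomputed dictionaries, the page is attached to a single starting category, and the tree is a `{category: parent}` map. The decay values 1.0, 0.7, 0.5 follow the approximate figures quoted above; all names and numbers in the example are hypothetical.

```python
from typing import Dict, List, Optional


def stratified_tfidf(
    term: str,
    page_tfidf: Dict[str, float],
    category_tfidf: Dict[str, Dict[str, float]],
    first_category: Optional[str],
    parent: Dict[str, str],
    alphas: List[float],
) -> float:
    """S(w, p) = sum over k of alpha_k * tfidf_k(w, c_k(p))."""
    # Level 0: classical tf-idf of the term in the page itself.
    score = alphas[0] * page_tfidf.get(term, 0.0)
    # Levels 1..K: climb the category tree, one ancestor per decay factor.
    category = first_category
    for alpha in alphas[1:]:
        if category is None:  # reached the top of the tree
            break
        score += alpha * category_tfidf.get(category, {}).get(term, 0.0)
        category = parent.get(category)
    return score


# Hypothetical data: a page about an election, filed under "Elections".
page = {"election": 0.4, "tuesday": 0.4}
categories = {"Elections": {"election": 0.3}, "Politics": {"election": 0.2}}
tree = {"Elections": "Politics"}
alphas = [1.0, 0.7, 0.5]

score_election = stratified_tfidf("election", page, categories, "Elections", tree, alphas)
score_tuesday = stratified_tfidf("tuesday", page, categories, "Elections", tree, alphas)
```

Both terms have the same tf‑idf in the page, but "election" also scores in the two ancestor categories (0.4 + 0.7 · 0.3 + 0.5 · 0.2 = 0.71), whereas "tuesday" stays at 0.4 because it disappears higher up the tree.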

To evaluate the approach, the authors conduct a supervised text classification experiment on a French‑language WikiNews corpus containing 5,000 news articles labeled with ten topics (politics, economy, sports, culture, etc.). They compare three systems: (1) the original ESA baseline, (2) a conventional TF‑IDF + Support Vector Machine (SVM) pipeline, and (3) the proposed stratified ESA using the tree‑based tf‑idf. Performance is measured with accuracy, precision, recall, and F1. The stratified model achieves an accuracy of 0.92, a relative improvement of about 18% over the original ESA (0.78, i.e., 14 percentage points in absolute terms), and a noticeable gain over TF‑IDF + SVM. The most significant gains appear in categories that are semantically close (e.g., “culture” vs. “politics”), where the hierarchical weighting helps disambiguate overlapping vocabulary. Error analysis reveals that many of the baseline's misclassifications stem from terms that are frequent in a document but not representative of its broader topic; the stratified scores down‑weight such terms because they disappear in ancestor categories.

The authors acknowledge several limitations. Computing the weighted graph and the minimum spanning tree over the full Wikipedia category network is memory‑intensive and has a computational complexity of O(E·V), which may hinder scalability to larger or multilingual versions of Wikipedia. The decay parameters α_k are set empirically; their optimal values may vary across domains and languages, suggesting a need for automated tuning (e.g., Bayesian optimization). Moreover, the structure of Wikipedia categories differs across language editions and specialized sub‑wikis, so the method’s generalizability requires further study.

Future research directions proposed include: (i) employing graph‑compression or approximate MST algorithms to reduce computational overhead; (ii) integrating automatic hyper‑parameter optimization for the decay factors and tree depth; (iii) extending the framework to multilingual and domain‑specific wikis, analyzing how differing category depths affect performance; and (iv) combining the stratified tf‑idf with modern contextual embeddings (BERT, RoBERTa) to create a hybrid model that captures both fine‑grained lexical semantics and coarse‑grained hierarchical knowledge.

In summary, the paper demonstrates that incorporating Wikipedia’s categorical hierarchy via a semantically weighted minimum spanning tree and a stratified tf‑idf weighting scheme can substantially improve ESA‑based text representations. The experimental results on WikiNews substantiate the claim that terms persisting across multiple abstraction levels are more reliable indicators of topic, leading to a notable boost in classification precision. The work opens promising avenues for richer, structure‑aware semantic analysis in large‑scale knowledge bases.