Improvements to the Levenberg-Marquardt algorithm for nonlinear least-squares minimization

When minimizing a nonlinear least-squares function, the Levenberg-Marquardt algorithm can suffer from slow convergence, particularly when it must navigate a narrow canyon en route to a best fit. On the other hand, when the least-squares function is very flat, the algorithm may easily become lost in parameter space. We introduce several improvements to the Levenberg-Marquardt algorithm in order to improve both its convergence speed and its robustness to initial parameter guesses. We update the usual step to include a geodesic acceleration correction term, explore a systematic way, due to Umrigar and Nightingale, of accepting uphill steps that may increase the residual sum of squares, and employ the Broyden method to update the Jacobian matrix. We test these changes by comparing their performance on a number of test problems with standard implementations of the algorithm. We suggest that these two particular challenges, slow convergence and robustness to initial guesses, are complementary problems: schemes that improve convergence speed often make the algorithm less robust to the initial guess, and vice versa. We provide an open source implementation of our improvements that allows the user to adjust the algorithm parameters to suit particular needs.

💡 Research Summary

The paper addresses two persistent shortcomings of the classic Levenberg‑Marquardt (LM) algorithm when applied to nonlinear least‑squares problems: slow convergence in narrow, canyon‑shaped valleys of the cost surface, and fragility with respect to poor initial parameter guesses. To remedy both issues simultaneously, the authors introduce three complementary enhancements. First, they augment the standard LM step with a geodesic‑acceleration correction term. This term uses second‑order information about the residuals to account for the curvature of the geodesic path on the manifold of model predictions, thereby steering the update more directly along the valley and reducing the number of tiny steps that typically dominate LM’s behavior in ill‑conditioned regions. Mathematically, the usual LM step becomes a “velocity” v = –(JᵀJ + λI)⁻¹Jᵀr, the acceleration is a = –(JᵀJ + λI)⁻¹Jᵀrᵥᵥ, and the full update is Δp = v + ½a, where rᵥᵥ = Σᵢⱼ (∂²r/∂pᵢ∂pⱼ) vᵢvⱼ is the second directional derivative of the residual vector along v. Because only this directional derivative is needed, rather than the full tensor of second derivatives, the correction can be computed efficiently using automatic differentiation or a finite‑difference approximation requiring a single extra residual evaluation, so the per‑iteration cost does not grow dramatically.
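The velocity/acceleration update can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the authors' implementation: `geodesic_lm_step`, `residual`, `jacobian`, and the Rosenbrock helpers are hypothetical names, the damping is λI as in the formulas, and the finite‑difference step `h = 0.1` is an illustrative choice. rᵥᵥ is estimated from the expansion r(p + hv) ≈ r + hJv + (h²/2)rᵥᵥ, which costs one extra residual evaluation.

```python
import numpy as np


def geodesic_lm_step(residual, jacobian, p, lam, h=0.1):
    """One damped LM step with geodesic acceleration (illustrative sketch).

    residual(p) returns r with shape (m,); jacobian(p) returns J with shape (m, n).
    Returns (delta_p, v, a) with delta_p = v + a / 2.
    """
    p = np.asarray(p, dtype=float)
    r = residual(p)
    J = jacobian(p)
    A = J.T @ J + lam * np.eye(p.size)   # damped normal-equations matrix
    v = -np.linalg.solve(A, J.T @ r)      # ordinary LM step ("velocity")

    # Finite-difference estimate of r_vv, the second directional derivative of r
    # along v, from r(p + h v) ≈ r + h J v + (h**2 / 2) r_vv.
    r_vv = (2.0 / h) * ((residual(p + h * v) - r) / h - J @ v)

    a = -np.linalg.solve(A, J.T @ r_vv)   # geodesic acceleration
    return v + 0.5 * a, v, a


# Usage on the two-residual Rosenbrock problem (hypothetical helper names).
def rosenbrock_residual(p):
    return np.array([10.0 * (p[1] - p[0] ** 2), 1.0 - p[0]])


def rosenbrock_jacobian(p):
    return np.array([[-20.0 * p[0], 10.0], [-1.0, 0.0]])


delta_p, v, a = geodesic_lm_step(rosenbrock_residual, rosenbrock_jacobian, [-1.2, 1.0], lam=1e-3)
```

For a residual that is linear in p, rᵥᵥ = 0 and the step reduces to the ordinary LM step. The sketch omits any safeguard that rejects steps whose acceleration is large relative to the velocity.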

Second, the paper adopts a systematic “uphill‑step” acceptance strategy originally proposed by Umrigar and Nightingale. Conventional LM rejects any trial step that raises the residual sum of squares (RSS), which can trap the optimizer in a local basin when the initial guess is far from the global optimum. By allowing a controlled probability of accepting an RSS‑increasing move, and then adjusting the damping parameter λ and trust‑region radius accordingly, the algorithm gains a limited ability to escape shallow local minima and explore more of the parameter space. The authors formalize this as a conditional acceptance rule: a step is accepted if either RSS decreases or a random draw falls below a threshold that depends on the magnitude of the RSS increase and the current λ value. This mechanism is coupled with a dynamic schedule for λ reduction after successful uphill moves, ensuring that the algorithm quickly regains a descent direction.
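The summary does not give the exact form of the acceptance threshold, so the following Python sketch assumes one: a Metropolis‑style test in which an uphill step is accepted when a uniform draw falls below exp(–δ·(1 + λ)/τ), with δ the relative RSS increase and τ a hypothetical tuning constant. The λ schedule (a gentler reduction after accepted uphill moves) is likewise an illustrative assumption, and all function and parameter names are hypothetical.

```python
import math
import random


def accept_step(rss_old, rss_new, lam, rng, tau=0.1):
    """Conditional acceptance with an ASSUMED Metropolis-style threshold.

    Downhill (or equal) steps are always accepted. An uphill step is accepted
    when a uniform draw falls below exp(-delta * (1 + lam) / tau), where delta is
    the relative RSS increase, so larger increases and heavier damping make
    acceptance less likely.
    """
    if rss_new <= rss_old:
        return True
    delta = (rss_new - rss_old) / max(rss_old, 1e-300)
    threshold = math.exp(-delta * (1.0 + lam) / tau)
    return rng.random() < threshold


def update_lambda(lam, accepted, uphill, grow=10.0, shrink=10.0, shrink_uphill=2.0):
    """Hypothetical damping schedule: grow after a rejection, shrink after an
    accepted step, and shrink more gently after an accepted uphill step."""
    if not accepted:
        return lam * grow
    return lam / (shrink_uphill if uphill else shrink)


rng = random.Random(0)
rss_old, rss_new, lam = 2.0, 2.05, 1e-2
accepted = accept_step(rss_old, rss_new, lam, rng)
lam = update_lambda(lam, accepted, uphill=rss_new > rss_old)
```

Passing an explicit `random.Random` instance keeps runs reproducible, which matters when comparing success rates across many random starts.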

Third, the authors replace the costly exact Jacobian recomputation at every iteration with a Broyden rank‑one update. The Broyden formula Jₖ₊₁ = Jₖ + (Δr – JₖΔp)Δpᵀ/(ΔpᵀΔp) is a low‑cost correction that satisfies the secant condition Jₖ₊₁Δp = Δr, dramatically reducing the number of expensive Jacobian evaluations (each of which, with finite differences, costs roughly one residual evaluation per parameter), especially in high‑dimensional problems. To prevent drift, the implementation periodically (e.g., every ten iterations) recomputes the Jacobian exactly, striking a balance between accuracy and efficiency.
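A minimal NumPy sketch of the Broyden update with a periodic exact refresh. `broyden_update` follows the formula above; `JacobianTracker`, its method names, and the default interval of ten accepted steps are illustrative assumptions rather than the authors' code.

```python
import numpy as np


def broyden_update(J, dp, dr):
    """Broyden rank-one update J + (dr - J dp) dp^T / (dp^T dp).

    The returned matrix satisfies the secant condition J_new @ dp == dr.
    """
    denom = float(dp @ dp)
    if denom == 0.0:
        return J.copy()                  # zero step: nothing to learn from
    return J + np.outer(dr - J @ dp, dp) / denom


class JacobianTracker:
    """Hypothetical helper: Broyden updates between exact refreshes."""

    def __init__(self, jacobian, refresh_every=10):
        self.jacobian = jacobian
        self.refresh_every = refresh_every
        self.steps_since_refresh = 0
        self.J = None

    def current(self, p):
        if self.J is None:               # first call: exact Jacobian
            self.J = self.jacobian(p)
        return self.J

    def after_accepted_step(self, p_new, dp, dr):
        """Call after each accepted step; dr = r(p_new) - r(p_old)."""
        self.steps_since_refresh += 1
        if self.J is None or self.steps_since_refresh >= self.refresh_every:
            self.J = self.jacobian(p_new)  # exact recomputation limits drift
            self.steps_since_refresh = 0
        else:
            self.J = broyden_update(self.J, dp, dr)
        return self.J
```

For a residual that is exactly linear, Δr = JΔp already holds, so the update leaves a correct Jacobian unchanged.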

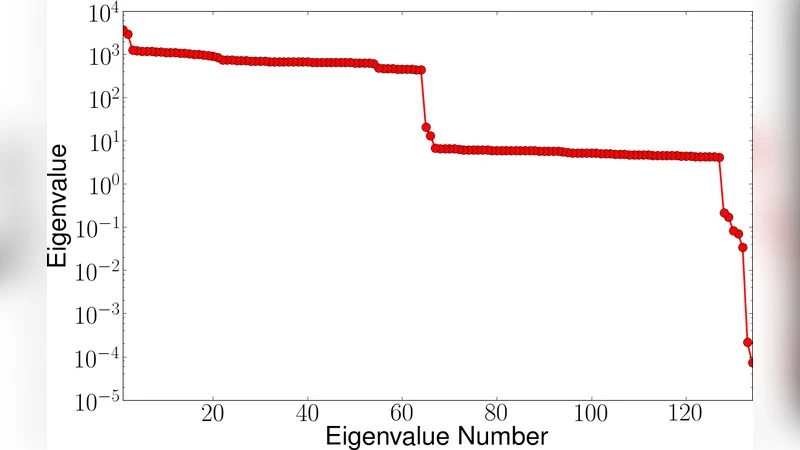

The experimental section evaluates these three modifications—individually and in combination—against a suite of twelve benchmark problems, ranging from classic test functions (Rosenbrock, Powell’s singular, Himmelblau) to realistic physical‑chemistry models (electronic structure fitting). For each problem the authors generate 100 random initial parameter sets and compare four algorithmic variants: the standard LM, LM with only Broyden updates, LM with only the geodesic acceleration term, and the full hybrid (all three enhancements). Performance metrics include average iteration count, final RSS error, and success rate (fraction of runs that meet a predefined convergence tolerance).
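The evaluation protocol (random restarts, then average iterations, final RSS, and success rate) can be sketched as a small harness. Everything here is assumed for illustration: a plain LM baseline with λ scaled by 10, the two‑residual Rosenbrock form r = (10(y – x²), 1 – x), starts drawn uniformly from [–2, 2]², and a success tolerance of RSS < 10⁻¹². It does not reproduce the paper's benchmark suite or its numbers, and the function names are hypothetical.

```python
import numpy as np


def rosenbrock_residual(p):
    return np.array([10.0 * (p[1] - p[0] ** 2), 1.0 - p[0]])


def rosenbrock_jacobian(p):
    return np.array([[-20.0 * p[0], 10.0], [-1.0, 0.0]])


def basic_lm(residual, jacobian, p0, lam=1e-3, max_iter=1000, tol=1e-12):
    """Plain LM baseline: exact Jacobian, downhill steps only, lambda scaled by 10."""
    p = np.asarray(p0, dtype=float)
    r = residual(p)
    rss = r @ r
    for it in range(1, max_iter + 1):
        J = jacobian(p)
        dp = -np.linalg.solve(J.T @ J + lam * np.eye(p.size), J.T @ r)
        r_new = residual(p + dp)
        rss_new = r_new @ r_new
        if rss_new < rss:                 # accept downhill step, relax damping
            p, r, rss = p + dp, r_new, rss_new
            lam = max(lam / 10.0, 1e-12)
        else:                             # reject, increase damping
            lam = min(lam * 10.0, 1e12)
        if rss < tol:
            return p, rss, it
    return p, rss, max_iter


def run_benchmark(n_starts=100, seed=0, tol=1e-12, box=2.0):
    """Mean iterations, mean final RSS, and success rate over random starts."""
    rng = np.random.default_rng(seed)
    iterations, final_rss, successes = [], [], 0
    for _ in range(n_starts):
        p0 = rng.uniform(-box, box, size=2)
        _, rss, it = basic_lm(rosenbrock_residual, rosenbrock_jacobian, p0, tol=tol)
        iterations.append(it)
        final_rss.append(rss)
        successes += int(rss < tol)
    return {
        "mean_iterations": float(np.mean(iterations)),
        "mean_final_rss": float(np.mean(final_rss)),
        "success_rate": successes / n_starts,
    }


print(run_benchmark())
```

Swapping `basic_lm` for a variant with the geodesic term, uphill acceptance, or Broyden updates gives the kind of side‑by‑side comparison described above.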

Results demonstrate that the geodesic‑acceleration term alone yields the most dramatic reduction in iteration count for narrow‑valley problems such as Rosenbrock, cutting the average iterations by roughly 45 % compared with standard LM. However, its robustness to bad initial guesses remains limited. The uphill‑step acceptance dramatically improves success rates for problems where the initial guess lies outside the basin of attraction, raising success from ~78 % to >90 % in the most challenging cases, albeit with a modest increase in iteration count. The Broyden update alone reduces total CPU time by about 30 % due to fewer Jacobian evaluations, but does not significantly affect convergence speed. When all three enhancements are combined (the “LM‑Full” configuration), the algorithm achieves the best of both worlds: high success rates (>92 % across all benchmarks), the lowest average iteration counts, and a net runtime comparable to or better than the standard implementation because the Broyden updates offset the extra cost of the geodesic term.

Beyond performance, the authors contribute an open‑source implementation in both Python and C++. The code exposes tunable hyper‑parameters—initial λ, λ increase/decrease factors, uphill‑step acceptance probability, and Broyden refresh interval—allowing practitioners to tailor the optimizer to specific problem characteristics. Documentation emphasizes that convergence speed and robustness are often antagonistic objectives; the presented suite of enhancements is designed to mitigate this trade‑off rather than favor one aspect at the expense of the other.
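The tunable hyper‑parameters listed above could be grouped into a single validated settings object. This Python dataclass is a hypothetical sketch; the field names, defaults, and the meaning given to `uphill_acceptance` are assumptions, not the API of the authors' Python or C++ code.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class LMSettings:
    """Hypothetical settings container; names and defaults are illustrative."""

    initial_lambda: float = 1e-3
    lambda_increase: float = 10.0        # multiply lambda after a rejected step
    lambda_decrease: float = 10.0        # divide lambda after an accepted step
    uphill_acceptance: float = 0.0       # assumed semantics: 0 disables uphill steps
    broyden_refresh_interval: int = 10   # exact Jacobian every N steps; 1 = always exact

    def __post_init__(self):
        if self.initial_lambda <= 0:
            raise ValueError("initial_lambda must be positive")
        if self.lambda_increase <= 1 or self.lambda_decrease <= 1:
            raise ValueError("lambda factors must be greater than 1")
        if not 0.0 <= self.uphill_acceptance <= 1.0:
            raise ValueError("uphill_acceptance must lie in [0, 1]")
        if self.broyden_refresh_interval < 1:
            raise ValueError("broyden_refresh_interval must be at least 1")


# Derive a more exploratory configuration from the defaults.
exploratory = replace(LMSettings(), uphill_acceptance=0.3, broyden_refresh_interval=1)
```

Making the object frozen and validating in `__post_init__` means that every configuration derived with `dataclasses.replace` is checked as well, so an invalid combination fails at construction rather than mid‑fit.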

In conclusion, the paper provides a well‑grounded, experimentally validated set of modifications that collectively accelerate LM convergence in ill‑conditioned valleys while simultaneously strengthening its ability to recover from poor initializations. The combination of a curvature‑aware geodesic correction, a probabilistic uphill‑step policy, and an efficient quasi‑Newton Jacobian update constitutes a practical, flexible toolkit for a broad range of scientific and engineering applications that rely on nonlinear least‑squares fitting.