SMC^2: an efficient algorithm for sequential analysis of state-space models

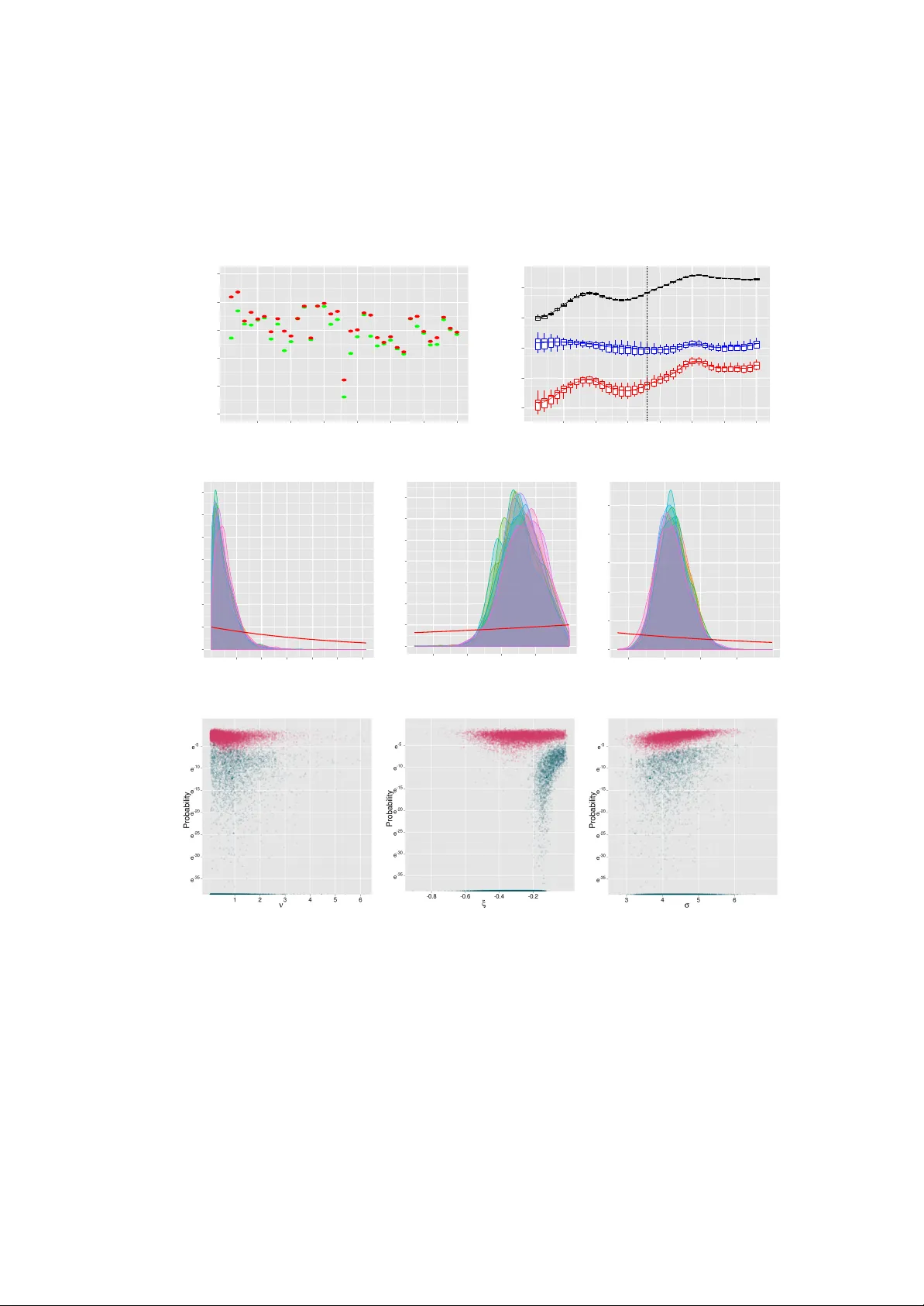

We consider the generic problem of performing sequential Bayesian inference in a state-space model with observation process y, state process x and fixed parameter theta. An idealized approach would be to apply the iterated batch importance sampling (…
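To make the setting concrete, here is a minimal sketch of a state-space model with observations y, hidden states x, and a fixed parameter theta, along with a bootstrap particle filter that estimates the log-likelihood of the observations for a given theta. Everything specific in it is an assumption made for illustration and is not taken from the paper: the toy linear Gaussian model, the parameter layout `(rho, sigma_x, sigma_y)`, and the function names `simulate` and `bootstrap_loglik`. The bootstrap filter is a standard technique, shown here as one common building block for this kind of problem rather than as the authors' algorithm.

```python
import math
import random

def simulate(theta, T, rng):
    """Simulate a toy model (an assumption, not from the paper):
    x_t = rho * x_{t-1} + sigma_x * eps_t,  y_t = x_t + sigma_y * eta_t."""
    rho, sigma_x, sigma_y = theta
    # Initial state drawn from the stationary distribution (requires |rho| < 1).
    x_prev = rng.gauss(0.0, sigma_x / math.sqrt(1.0 - rho ** 2))
    xs, ys = [], []
    for _ in range(T):
        x_prev = rho * x_prev + rng.gauss(0.0, sigma_x)
        xs.append(x_prev)
        ys.append(x_prev + rng.gauss(0.0, sigma_y))
    return xs, ys

def bootstrap_loglik(theta, ys, n_particles, rng):
    """Bootstrap particle filter estimate of log p(y_{1:T} | theta)."""
    rho, sigma_x, sigma_y = theta
    log_norm = -0.5 * math.log(2.0 * math.pi * sigma_y ** 2)
    init_sd = sigma_x / math.sqrt(1.0 - rho ** 2)
    particles = [rng.gauss(0.0, init_sd) for _ in range(n_particles)]
    loglik = 0.0
    for y in ys:
        # Propagate each particle through the state transition.
        particles = [rho * p + rng.gauss(0.0, sigma_x) for p in particles]
        # Weight by the observation density, working in log space for stability.
        logw = [log_norm - 0.5 * ((y - p) / sigma_y) ** 2 for p in particles]
        m = max(logw)
        w = [math.exp(lw - m) for lw in logw]
        # The mean weight estimates p(y_t | y_{1:t-1}, theta).
        loglik += m + math.log(sum(w) / n_particles)
        # Multinomial resampling proportional to the weights.
        particles = rng.choices(particles, weights=w, k=n_particles)
    return loglik

rng = random.Random(0)
theta = (0.9, 1.0, 0.5)  # hypothetical parameter values
xs, ys = simulate(theta, 50, rng)
print(bootstrap_loglik(theta, ys, 200, rng))
```

This sketch treats theta as known. The sequential problem described in the abstract is harder because theta must be inferred as observations arrive.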

Authors: Nicolas Chopin, Pierre E. Jacob, Omiros Papaspiliopoulos