Benchmarking CRBLASTER on the 350-MHz 49-core Maestro Development Board

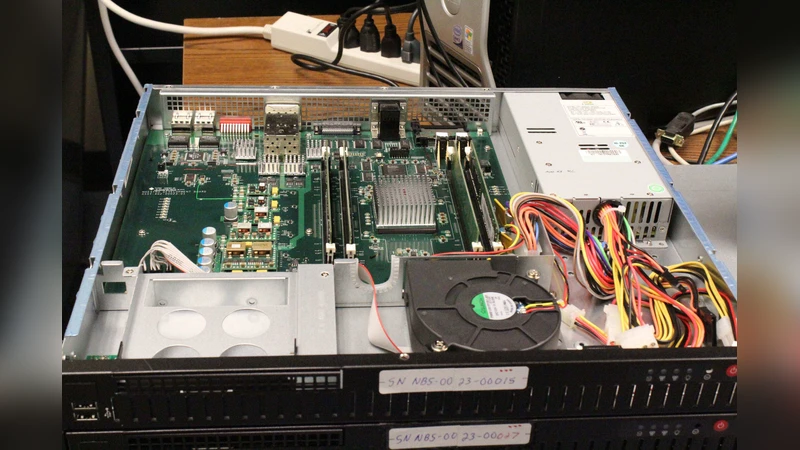

I describe the performance of the CRBLASTER computational framework on a 350-MHz 49-core Maestro Development Board (MDB). The 49-core Interim Test Chip (ITC) was developed by the U.S. Government and is based on the intellectual property of the 64-core TILE64 processor of the Tilera Corporation. The Maestro processor is intended for use in the high-radiation environments found in space; the ITC was fabricated using IBM 90-nm CMOS 9SF technology and Radiation-Hardening-by-Design (RHBD) rules. CRBLASTER is a parallel-processing cosmic-ray rejection application based on a simple computational framework that uses the high-performance computing industry-standard Message Passing Interface (MPI) library. CRBLASTER was designed to let research scientists easily port image-analysis programs based on embarrassingly parallel algorithms to a parallel-processing environment, such as a multi-node Beowulf cluster or multi-core processors, using MPI. I describe my experience of porting CRBLASTER to the 64-core TILE64 processor, the Maestro simulator, and finally the 49-core Maestro processor itself. Using the ITC, I compare performance when all floating-point operations are emulated in software with performance when all floating-point operations use hardware assist from an IEEE-754-compliant Aurora FPU (floating-point unit) attached to each of the 49 cores. Benchmarking the CRBLASTER computational framework with the memory-intensive L.A.COSMIC cosmic-ray rejection algorithm and a computationally intensive Poisson noise generator reveals subtleties of the Maestro hardware design. Lastly, I describe the importance of using real scientific applications during the testing phase of next-generation computer hardware; complex real-world scientific applications can stress hardware in novel ways that may not be revealed by simple applications or unit tests.

💡 Research Summary

The paper presents a thorough performance evaluation of the CRBLASTER parallel‑processing framework on a 350 MHz, 49‑core Maestro Development Board (MDB). Maestro is a radiation‑hardened processor; its 49‑core Interim Test Chip (ITC) was developed by the U.S. Government from the intellectual property of Tilera's 64‑core TILE64 architecture and fabricated with IBM 90‑nm CMOS 9SF technology and Radiation‑Hardening‑by‑Design (RHBD) rules. Each of the 49 cores has an attached IEEE‑754‑compliant Aurora floating‑point unit (FPU), providing hardware assistance for floating‑point operations that would otherwise be emulated in software, as they are on the TILE64.

The author first describes the porting effort: CRBLASTER, a framework that enables scientists to convert embarrassingly parallel image‑analysis codes into MPI‑based applications, was initially ported to the 64‑core TILE64 processor, then adapted to the Maestro simulator, and finally built for the physical 49‑core board. Challenges included configuring the MPI library for a many‑core environment, handling per‑core memory allocation, and toggling the Aurora FPU on and off to compare software‑emulated against hardware‑accelerated floating‑point performance.
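The summary describes CRBLASTER as turning embarrassingly parallel image-analysis code into an MPI application in which the image is divided into pieces that are processed independently. A minimal sketch of that split/process/stitch cycle follows, emulated serially in Python so it runs without an MPI installation. It assumes horizontal strips padded with a halo of overlap rows so that a neighborhood filter sees valid neighbors at strip edges; the strip layout, the halo width, and the helper names `strip_bounds`, `run_split`, and `box3` are illustrative assumptions, not CRBLASTER's actual interface.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]
# (padded_lo, padded_hi, core_lo, core_hi) row indices for one worker
Bounds = Tuple[int, int, int, int]


def strip_bounds(n_rows: int, n_workers: int, halo: int) -> List[Bounds]:
    """Split n_rows into n_workers horizontal strips (hypothetical layout).

    [core_lo, core_hi) is the part of the output a worker owns;
    [padded_lo, padded_hi) adds up to `halo` overlap rows on each side.
    """
    bounds = []
    for w in range(n_workers):
        core_lo = w * n_rows // n_workers
        core_hi = (w + 1) * n_rows // n_workers
        padded_lo = max(0, core_lo - halo)
        padded_hi = min(n_rows, core_hi + halo)
        bounds.append((padded_lo, padded_hi, core_lo, core_hi))
    return bounds


def run_split(image: Image, n_workers: int, halo: int,
              kernel: Callable[[Image], Image]) -> Image:
    """Serial stand-in for a director/worker cycle.

    Each loop iteration plays the role of one worker; an MPI version would
    send the padded strip to a separate rank and gather the core rows back.
    """
    out: Image = []
    for lo, hi, core_lo, core_hi in strip_bounds(len(image), n_workers, halo):
        processed = kernel(image[lo:hi])       # worker-side computation
        start = core_lo - lo                    # skip the leading halo rows
        out.extend(processed[start:start + (core_hi - core_lo)])
    return out


def box3(img: Image) -> Image:
    """Example kernel: 3x3 mean filter, clamping coordinates at the edges."""
    h, w = len(img), len(img[0])
    result = []
    for y in range(h):
        row = []
        for x in range(w):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(h - 1, max(0, y + dy))
                    xx = min(w - 1, max(0, x + dx))
                    total += img[yy][xx]
            row.append(total / 9.0)
        result.append(row)
    return result


# Usage: with halo >= 1 the stitched strips match a single full-image pass.
image = [[float((3 * y + 5 * x) % 7) for x in range(6)] for y in range(10)]
assert run_split(image, n_workers=3, halo=1, kernel=box3) == box3(image)
```

Because every core row sees exactly the neighbors it would see in the full image, the stitched output equals a serial pass; the halo rows are computed redundantly and discarded, which is the usual price of this kind of overlap decomposition.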

Two representative workloads were used for benchmarking. The first, the L.A.COSMIC cosmic‑ray rejection algorithm, is memory‑intensive: it identifies cosmic rays in astronomical images through Laplacian edge detection repeated over several iterations, and the images are divided into tiles on which it performs neighbor‑pixel comparisons and mask updates. Because each tile requires frequent reads and writes to shared DRAM, the algorithm stresses memory bandwidth, cache coherence, and routing on the network‑on‑chip (NoC). The second workload is a computationally heavy Poisson‑noise generator that creates large arrays of Poisson‑distributed random numbers using logarithmic and exponential functions, thereby exercising the floating‑point units heavily.
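To make the two workload profiles concrete, the miniature kernels below contrast a neighborhood operation with a floating-point-heavy sampler. `laplacian_positive` is a single 3×3 Laplacian pass with negative responses clipped to zero, loosely in the spirit of L.A.COSMIC's edge detection but omitting its subsampling, noise model, and iteration. `poisson_knuth` uses Knuth's multiplication method, swapped in as a simple stand-in because the paper's own Poisson generator is not described here. Both function names are hypothetical.

```python
import math
import random
from typing import List

Image = List[List[float]]

# 3x3 Laplacian kernel: responds strongly to sharp, isolated peaks.
LAPLACE = ((0.0, -1.0, 0.0),
           (-1.0, 4.0, -1.0),
           (0.0, -1.0, 0.0))


def laplacian_positive(img: Image) -> Image:
    """One Laplacian pass with edge clamping; negative responses are clipped
    to zero so only peak-like features survive. Each output pixel reads nine
    input pixels, the neighborhood-access pattern that makes the full
    algorithm memory-intensive."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(h - 1, max(0, y + dy))
                    xx = min(w - 1, max(0, x + dx))
                    acc += LAPLACE[dy + 1][dx + 1] * img[yy][xx]
            row.append(max(acc, 0.0))
        out.append(row)
    return out


def poisson_knuth(lam: float, rng: random.Random) -> int:
    """Knuth's multiplication method for a Poisson(lam) variate: multiply
    uniform draws until the running product drops to exp(-lam). The loop
    runs about lam + 1 times; each iteration is a floating-point multiply
    and compare."""
    limit = math.exp(-lam)
    k = 0
    product = rng.random()
    while product > limit:
        k += 1
        product *= rng.random()
    return k


# Usage: a lone bright pixel (a cosmic-ray-like hit) stands out.
hit = [[0.0] * 5 for _ in range(5)]
hit[2][2] = 10.0
print(laplacian_positive(hit)[2][2])   # 40.0
```

The Laplacian's output depends on how fast pixels can be fetched, while the sampler's cost scales with the number of floating-point operations per variate, which is why a hardware FPU would be expected to help the second far more than the first.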

Results show a clear dichotomy between the two workloads. When the Aurora FPU is disabled and all floating‑point operations are emulated in software, overall execution time increases by roughly 30 %, but the penalty is far from uniform: the Poisson‑noise generator, with its heavy reliance on floating‑point arithmetic, suffers the most, and enabling the hardware FPU yields a 2.8× speed‑up for it, confirming that the Aurora unit substantially accelerates floating‑point‑bound code. In contrast, the L.A.COSMIC algorithm sees only modest gains from the FPU; its performance remains limited by memory bandwidth and NoC contention. Scaling efficiency plateaus at 0.6–0.7 as the core count rises, indicating that the shared DRAM interface becomes a bottleneck and that the mesh NoC does not fully relieve traffic hotspots. Additionally, MPI message‑passing overhead is not uniform across cores: certain cores see higher latency because of uneven routing paths, which further reduces parallel efficiency.
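The figures quoted above rest on simple ratios: an FPU speed-up is emulated time divided by hardware time, and strong-scaling efficiency is E = T(1) / (N · T(N)). The sketch below also shows how a large speed-up on one benchmark can coexist with a much smaller increase in combined runtime when that benchmark is a small share of the total. All timings in it are made-up values chosen only to illustrate the arithmetic, not measurements from the paper, and the function names are hypothetical.

```python
from typing import Dict, Tuple


def speedup(t_slow: float, t_fast: float) -> float:
    """How many times faster the fast configuration runs (e.g. FPU on vs. emulated)."""
    return t_slow / t_fast


def parallel_efficiency(t_one: float, t_n: float, n_cores: int) -> float:
    """Strong-scaling efficiency E = T(1) / (N * T(N)); 1.0 would be ideal."""
    return t_one / (n_cores * t_n)


def combined_increase(timings: Dict[str, Tuple[float, float]]) -> float:
    """Fractional growth of the summed runtime when every benchmark moves from
    its first timing (hardware FPU) to its second (software emulation)."""
    t_hw = sum(hw for hw, _ in timings.values())
    t_sw = sum(sw for _, sw in timings.values())
    return t_sw / t_hw - 1.0


# Hypothetical timings in seconds, (FPU enabled, FPU emulated); NOT paper data.
timings = {"la_cosmic": (100.0, 115.0), "poisson": (10.0, 28.0)}
print(round(speedup(28.0, 10.0), 2))                     # 2.8
print(round(combined_increase(timings), 2))              # 0.3
print(round(parallel_efficiency(490.0, 15.0, 49), 3))    # 0.667
```

With these invented numbers the Poisson benchmark slows by 2.8× while the summed runtime grows by only 30%, because the longer L.A.COSMIC run dominates the total; whether the paper's own measurements combine this way is not stated here.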

The study highlights several key insights about Maestro's hardware design. First, a per‑core FPU is highly beneficial for compute‑bound scientific codes, delivering substantial speed‑ups without additional software complexity. Second, memory‑bound applications expose limitations in the current memory subsystem and cache‑coherence protocol, suggesting that future revisions should consider larger per‑core L2 caches or higher‑bandwidth memory interfaces. Third, the RHBD design constraints, while essential for radiation tolerance, may inadvertently restrict aggressive memory‑circuit optimizations and so contribute to the observed bandwidth bottlenecks.
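The compute-bound versus memory-bound contrast drawn above can be made concrete with the roofline model, swapped in here as an explanatory framing (the paper is not stated to use it): attainable throughput is the lesser of peak compute and arithmetic intensity times memory bandwidth. Raising peak compute, as a hardware FPU does, therefore helps only kernels whose intensity is high enough to be compute-bound. The peak and bandwidth figures below are hypothetical, not Maestro specifications, and the function names are illustrative.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float,
                      intensity_flop_per_byte: float) -> float:
    """Roofline bound: min(peak compute, arithmetic intensity * bandwidth)."""
    return min(peak_gflops, intensity_flop_per_byte * bandwidth_gbs)


def fpu_gain(peak_sw: float, peak_hw: float, bandwidth_gbs: float,
             intensity: float) -> float:
    """Predicted speed-up from raising peak compute (software FP -> hardware
    FPU) for a kernel of the given arithmetic intensity (flop/byte)."""
    return (attainable_gflops(peak_hw, bandwidth_gbs, intensity)
            / attainable_gflops(peak_sw, bandwidth_gbs, intensity))


# Hypothetical machine figures, NOT Maestro specifications.
PEAK_SW, PEAK_HW, BANDWIDTH = 0.5, 5.0, 2.0   # GFLOP/s, GFLOP/s, GB/s
for label, intensity in (("memory-bound", 0.25), ("compute-bound", 10.0)):
    print(label, fpu_gain(PEAK_SW, PEAK_HW, BANDWIDTH, intensity))
# memory-bound 1.0
# compute-bound 10.0
```

In this idealized model a purely memory-bound kernel gains nothing from a faster FPU; real codes such as L.A.COSMIC mix both regimes, which is consistent with the modest rather than zero gains described above.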

Finally, the author argues that real scientific applications, such as CRBLASTER, are indispensable during the validation phase of next‑generation hardware. Simple synthetic benchmarks often fail to reveal complex interactions between compute, memory, and interconnect subsystems. By deploying a genuine image‑processing pipeline, the study uncovered subtle performance‑limiting factors that would otherwise remain hidden, thereby providing valuable feedback for both hardware architects and software developers targeting radiation‑hard, many‑core platforms.
