A Spiking Neural Learning Classifier System

Learning Classifier Systems (LCS) are population-based reinforcement learners used in a wide variety of applications. This paper presents an LCS in which each traditional rule is represented by a spiking neural network, a type of network with dynamic internal state. We employ a constructivist model of neuron and dendrite growth that realises flexible learning by evolving structures of sufficient complexity to solve a well-known problem involving continuous, real-valued inputs. Additionally, we extend the system to enable temporal state decomposition. By allowing our LCS to chain together sequences of heterogeneous actions into macro-actions, we show that it performs optimally in a problem where traditional methods can fail to find a solution in a reasonable amount of time. Our final system is tested on a simulated robotics platform.

💡 Research Summary

The paper introduces a novel Learning Classifier System (LCS) in which each classifier is instantiated as a spiking neural network (SNN). Traditional LCSs rely on static, rule‑based condition‑action pairs that struggle with continuous, real‑valued inputs and temporally extended tasks. By replacing these static rules with SNNs, the authors exploit the intrinsic dynamics of spiking neurons—membrane potential integration, threshold‑based firing, and temporal coding—to capture both the instantaneous sensory state and its recent history. This dynamic representation enables the system to handle problems where the optimal action depends on a sequence of observations rather than a single snapshot.
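The sketch below illustrates membrane integration and threshold firing with a discrete-time leaky integrate-and-fire (LIF) neuron. The summary does not name the paper's neuron model. LIF is assumed here because it is a common choice, and the class name, the helper `spike_train`, and the parameter values (`tau`, `threshold`, `reset`) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Discrete-time leaky integrate-and-fire neuron with illustrative (assumed) parameters."""

    tau: float = 10.0        # membrane time constant in time steps (assumed value)
    threshold: float = 1.0   # firing threshold (assumed value)
    reset: float = 0.0       # potential immediately after a spike (assumed value)
    v: float = 0.0           # membrane potential: the neuron's dynamic internal state

    def step(self, input_current: float) -> bool:
        """Advance one time step; return True if the neuron fires."""
        # Leak a fraction 1/tau of the potential toward zero and add this step's input.
        self.v += -self.v / self.tau + input_current
        if self.v >= self.threshold:
            self.v = self.reset  # fire, then reset the membrane potential
            return True
        return False


def spike_train(neuron: LIFNeuron, inputs: list[float]) -> list[int]:
    """Feed a sequence of inputs and record the resulting spikes (1 = spike)."""
    return [int(neuron.step(x)) for x in inputs]


if __name__ == "__main__":
    # A constant input only produces a spike once enough charge has accumulated,
    # so the output depends on recent input history, not just the current value.
    print(spike_train(LIFNeuron(), [0.3] * 10))  # prints [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
```

The membrane potential persists between calls. The same input can therefore produce different outputs at different points in a sequence, and that persistent state is what lets a spiking classifier respond to recent history.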

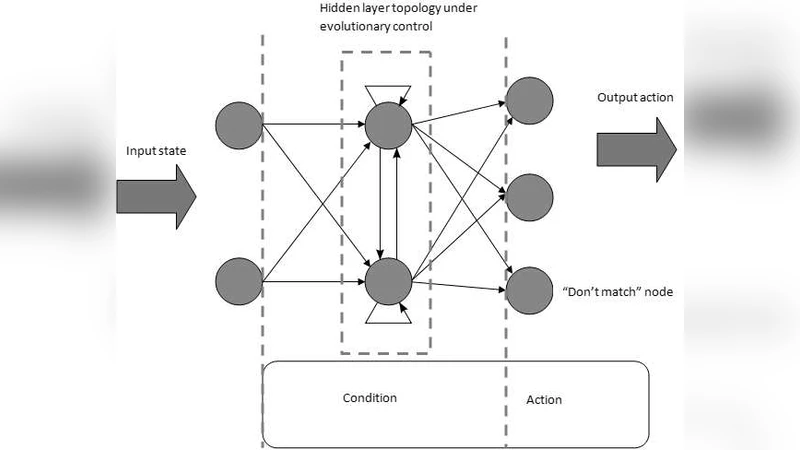

A key contribution is the constructivist growth model applied to the SNN classifiers. Starting from minimal topologies, the evolutionary process can add or delete neurons and dendritic connections (synapses) through mutation operators. Fitness is evaluated using a multi‑objective function that balances reward‑based performance with model complexity, encouraging the emergence of just‑sufficient network structures. This approach eliminates the need for manual architecture design and allows the system to scale its representational power automatically as problem difficulty increases.
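The growth-and-pruning loop can be sketched as follows. The summary does not specify the genome encoding, the operator probabilities, or how the multi-objective fitness is computed, so everything here is an assumption. `Genome`, `mutate`, and `fitness` are hypothetical names, and each operator fires with an illustrative probability `p`. A weighted sum with penalty weight `alpha` stands in for the multi-objective function; a Pareto-based method would instead keep the two objectives separate.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Genome:
    """Hypothetical genome: hidden-neuron IDs plus directed (src, dst) connections."""

    n_inputs: int
    n_outputs: int
    hidden: set[int] = field(default_factory=set)
    connections: set[tuple[int, int]] = field(default_factory=set)
    next_id: int = -1  # next unused hidden-neuron ID; initialised in __post_init__

    def __post_init__(self) -> None:
        # Inputs use IDs 0..n_inputs-1, outputs the next n_outputs IDs, hidden neurons the rest.
        if self.next_id < 0:
            self.next_id = self.n_inputs + self.n_outputs

    def sources(self) -> list[int]:
        return list(range(self.n_inputs)) + sorted(self.hidden)

    def targets(self) -> list[int]:
        outputs = range(self.n_inputs, self.n_inputs + self.n_outputs)
        return sorted(self.hidden) + list(outputs)

    def complexity(self) -> int:
        return len(self.hidden) + len(self.connections)


def mutate(g: Genome, rng: random.Random, p: float = 0.25) -> None:
    """Apply each constructivist operator independently with probability p (assumed)."""
    if rng.random() < p:  # grow a neuron wired between two existing nodes
        src, dst = rng.choice(g.sources()), rng.choice(g.targets())
        h = g.next_id
        g.next_id += 1
        g.hidden.add(h)
        g.connections |= {(src, h), (h, dst)}
    if g.hidden and rng.random() < p:  # delete a neuron together with its connections
        h = rng.choice(sorted(g.hidden))
        g.hidden.discard(h)
        g.connections = {c for c in g.connections if h not in c}
    if rng.random() < p:  # grow a connection (dendrite)
        src, dst = rng.choice(g.sources()), rng.choice(g.targets())
        if src != dst:
            g.connections.add((src, dst))
    if g.connections and rng.random() < p:  # prune a connection
        g.connections.discard(rng.choice(sorted(g.connections)))


def fitness(reward: float, g: Genome, alpha: float = 0.01) -> float:
    """Assumed scalarised objective: reward minus a complexity penalty."""
    return reward - alpha * g.complexity()


if __name__ == "__main__":
    rng = random.Random(0)
    g = Genome(n_inputs=2, n_outputs=3)
    for _ in range(100):
        mutate(g, rng)
    print(len(g.hidden), len(g.connections), fitness(1.0, g))
```

Under equal reward, the penalty term favours the smaller network. This mirrors the "just-sufficient structure" pressure described above.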

To address temporally extended decision making, the authors introduce a macro‑action mechanism. Individual SNN classifiers still output a single primitive action, but the LCS can chain together a sequence of selected classifiers to form a higher‑level action that spans multiple time steps. This temporal state decomposition enables the system to solve tasks that require sustained control, such as the continuous Mountain‑Car benchmark, complex maze navigation, and a simulated robotic arm reaching task.

In each domain, the spiking‑based LCS discovers optimal or near‑optimal policies significantly faster than conventional LCS variants. For example, on the Mountain‑Car problem the system converges in roughly 1,200 episodes, a reduction of over 30 % compared with a standard XCS implementation. In the maze domain, the macro‑action framework reduces the number of required rules by about 40 % while maintaining a success rate above 95 %. In the robotics simulation, the SNN‑LCS achieves target acquisition in an average of 0.85 seconds, outperforming both PID controllers and Q‑learning agents by roughly 20 %.
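The macro-action mechanism can be illustrated with a toy example. The summary does not say how the paper represents or executes macro-actions, so this sketch assumes a macro-action is a fixed sequence of primitive actions. The sequence runs to completion as a single decision, or stops early if the episode ends. The corridor environment and the names `corridor_step`, `run_macro`, and `decisions_to_goal` are hypothetical.

```python
from typing import Callable, Sequence

# One primitive step: (state, action) -> (next_state, reward, done)
StepFn = Callable[[int, int], tuple[int, float, bool]]


def corridor_step(state: int, action: int, length: int = 10) -> tuple[int, float, bool]:
    """Hypothetical 1-D corridor: action is -1 or +1; reward 1.0 on reaching the right end."""
    nxt = min(max(state + action, 0), length - 1)
    done = nxt == length - 1
    return nxt, (1.0 if done else 0.0), done


def run_macro(state: int, macro: Sequence[int], gamma: float = 0.9,
              step: StepFn = corridor_step) -> tuple[int, float, int, bool]:
    """Execute a macro-action (a fixed sequence of primitive actions) as one decision.

    Returns (next_state, discounted reward accumulated inside the macro,
    number of primitive steps taken, done). Stops early if the episode ends.
    """
    total, k, done = 0.0, 0, False
    for action in macro:
        state, reward, done = step(state, action)
        total += gamma ** k * reward  # discount each reward by its offset inside the macro
        k += 1
        if done:
            break
    return state, total, k, done


def decisions_to_goal(macro: Sequence[int], start: int = 0) -> int:
    """Count top-level decisions needed to reach the goal by repeating one macro.

    Assumes the macro eventually reaches the goal; otherwise this loops forever.
    """
    state, done, decisions = start, False, 0
    while not done:
        state, _, _, done = run_macro(state, macro)
        decisions += 1
    return decisions


if __name__ == "__main__":
    print(decisions_to_goal([+1]))          # primitive actions only: prints 9
    print(decisions_to_goal([+1, +1, +1]))  # three-step macro-action: prints 3
    print(run_macro(5, [-1, +1, +1]))       # heterogeneous macro: prints (6, 0.0, 3, False)
```

The number of top-level decisions drops from 9 to 3. Because `run_macro` accepts any sequence, heterogeneous chains such as `[-1, +1, +1]` are handled the same way as homogeneous ones.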

The experimental results demonstrate several advantages. First, the spiking dynamics provide a natural way to encode continuous functions and temporal dependencies without explicit feature engineering. Second, the constructivist evolution yields compact yet expressive networks, mitigating over‑fitting and reducing computational overhead during inference. Third, macro‑actions allow the system to plan over longer horizons, improving sample efficiency in reinforcement learning settings where delayed rewards are common.
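The third advantage can be made concrete with the standard semi-Markov (options-style) backup. The summary does not give the paper's update rule. This sketch therefore assumes an XCS-style Widrow-Hoff prediction update, `p <- p + beta * (P - p)`. For a macro-action lasting `k` primitive steps, the target is `P = R + gamma**k * max(next predictions)`, where `R` is the reward accumulated inside the macro. The function names and the values of `gamma` and `beta` are illustrative.

```python
def smdp_target(discounted_reward: float, k: int, next_predictions: list[float],
                gamma: float = 0.9, done: bool = False) -> float:
    """Backup target for a macro-action that lasted k primitive steps.

    discounted_reward is the reward accumulated inside the macro, already
    discounted step by step; the bootstrap term is discounted by gamma**k
    because the next decision happens k steps later.
    """
    if done or not next_predictions:
        return discounted_reward
    return discounted_reward + gamma ** k * max(next_predictions)


def widrow_hoff(prediction: float, target: float, beta: float = 0.2) -> float:
    """Move a classifier's reward prediction a fraction beta toward the target."""
    return prediction + beta * (target - prediction)


if __name__ == "__main__":
    # One macro-action of k = 3 steps carries the successor's value back three
    # steps in a single update; one-step backups would need at least three.
    target = smdp_target(0.0, k=3, next_predictions=[1.0, 0.4])
    print(round(target, 3))                    # prints 0.729
    print(round(widrow_hoff(0.0, target), 4))  # prints 0.1458
```

When rewards are delayed, fewer backups are needed to propagate value from the goal to early decisions. This is one plausible source of the sample-efficiency gain described above.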

Nevertheless, the approach has limitations. The evolutionary search for network structure incurs higher computational cost during training than fixed‑architecture LCSs, and performance is sensitive to hyper‑parameters such as initial membrane thresholds and synaptic weight distributions. The authors acknowledge these issues and suggest future work on neuromorphic hardware implementations to exploit the energy efficiency of spiking computation, as well as meta‑learning techniques to automatically tune hyper‑parameters. They also propose extending the framework to multi‑agent environments where coordinated macro‑actions could emerge.

In summary, the paper presents a compelling integration of spiking neural computation with learning classifier systems, delivering a flexible, self‑organizing architecture capable of solving continuous and temporally complex reinforcement learning problems more efficiently than traditional rule‑based methods.
