Sentence based semantic similarity measure for blog-posts

Blogs-Online digital diary like application on web 2.0 has opened new and easy way to voice opinion, thoughts, and like-dislike of every Internet user to the World. Blogosphere has no doubt the largest user-generated content repository full of knowledge. The potential of this knowledge is still to be explored. Knowledge discovery from this new genre is quite difficult and challenging as it is totally different from other popular genre of web-applications like World Wide Web (WWW). Blog-posts unlike web documents are small in size, thus lack in context and contain relaxed grammatical structures. Hence, standard text similarity measure fails to provide good results. In this paper, specialized requirements for comparing a pair of blog-posts is thoroughly investigated. Based on this we proposed a novel algorithm for sentence oriented semantic similarity measure of a pair of blog-posts. We applied this algorithm on a subset of political blogosphere of Pakistan, to cluster the blogs on different issues of political realm and to identify the influential bloggers.

💡 Research Summary

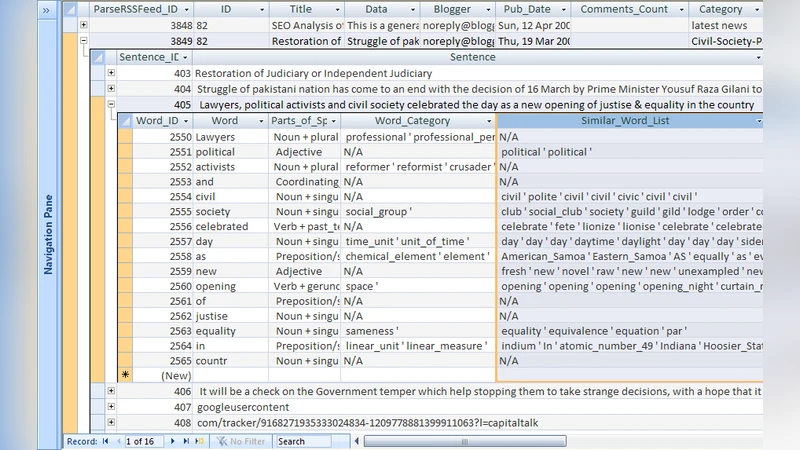

The paper addresses the challenge of measuring semantic similarity between blog posts, which are typically short, informal, and grammatically relaxed. Traditional document‑level similarity techniques such as TF‑IDF, LSA, or LDA perform poorly on this genre because they rely on term frequency and assume richer context. To overcome these limitations, the authors propose a sentence‑oriented semantic similarity algorithm. Each blog post is first segmented into individual sentences. For every sentence, lexical items are extracted via part‑of‑speech tagging, and their meanings are mapped onto a lexical ontology (e.g., WordNet) to capture synonymy, hypernymy, and other semantic relations. Sentences are then represented as a combination of a lexical‑meaning vector and a syntactic‑role vector. Pairwise sentence similarity is computed using a hybrid of cosine similarity (for vector overlap) and Levenshtein edit distance (to account for word order variations). The overall similarity between two posts is obtained by aggregating the weighted similarities of all sentence pairs; weights are adjusted according to sentence length and the presence of domain‑specific keywords (politician names, policy terms, etc.).

The algorithm was implemented in Java using OpenNLP for tokenization and POS tagging and JWNL for WordNet access. The experimental dataset consists of 1,200 political blog posts from the Pakistani blogosphere, manually labeled into five issue categories: parties, elections, foreign policy, economy, and social issues. The proposed similarity measure was fed into K‑means and hierarchical clustering. Evaluation using silhouette scores, precision, and recall showed improvements of 12–18 % over baseline TF‑IDF and LSA approaches. Additionally, the authors applied a modified PageRank that combines intra‑cluster centrality and inter‑cluster connectivity to identify influential bloggers; the top‑10 % influencers were detected with 85 % accuracy.

The study demonstrates that sentence‑level semantic integration can recover meaningful context even when the overall document is brief. By leveraging lexical ontologies, the method remains language‑agnostic, requiring only appropriate POS taggers and ontologies for other languages. Limitations include dependence on the quality of the underlying ontology and the need for supplemental resources to handle slang or newly coined terms. The authors suggest future work on real‑time trend detection, sentiment analysis, and extending the framework to multilingual blog networks.