Method of the Multidimensional Sieve in the Practical Realization of some Combinatorial Algorithms

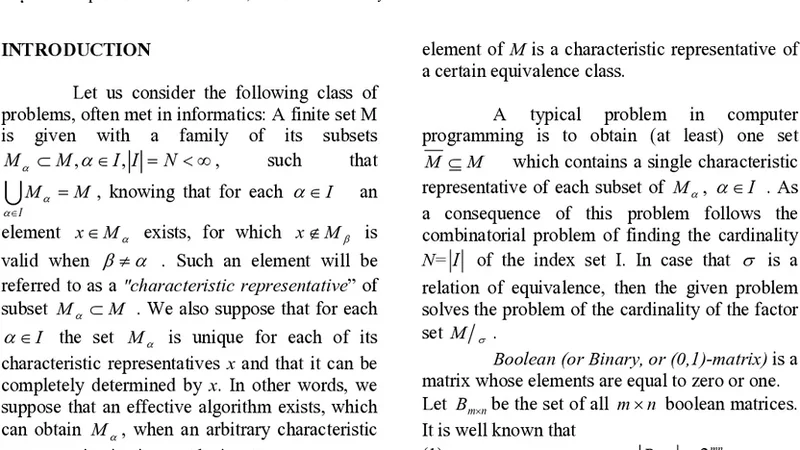

Some difficulties in applying the well-known sieve method are considered for the case of a practical (program) realization that selects the elements having a particular property from a set of sufficiently great cardinality. In this paper the problem is resolved by using a modified version of the method that utilizes multidimensional arrays. As a theoretical illustration of the method of the multidimensional sieve, the problem of obtaining a single representative of each equivalence class with respect to a given equivalence relation, and of obtaining the cardinality of the respective factor set, is considered with relevant mathematical proofs.

💡 Research Summary

The paper addresses a fundamental scalability problem in classic sieve‑based algorithms, which, while conceptually simple, become impractical when the underlying set contains billions of elements. Traditional sieves such as the sieve of Eratosthenes rely on a one‑dimensional Boolean array whose size grows linearly with the maximum element. When |S| is very large, the memory footprint exceeds the capacity of typical machines, and cache performance deteriorates because the algorithm repeatedly accesses distant locations in a huge linear buffer.
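For reference, the one‑dimensional baseline described above can be sketched as follows. This is a minimal textbook sieve of Eratosthenes, not code from the paper; the function name `sieve_1d` is chosen here for illustration.

```python
def sieve_1d(limit: int) -> list[int]:
    """Return all primes <= limit using a flat, one-dimensional flag array."""
    if limit < 2:
        return []
    # One flag per integer 0..limit, so memory grows linearly with limit.
    is_composite = bytearray(limit + 1)
    primes = []
    for n in range(2, limit + 1):
        if is_composite[n]:
            continue
        primes.append(n)
        # Strike out multiples of n, starting at n*n. These stride-n writes
        # touch locations spread across the whole buffer.
        for m in range(n * n, limit + 1, n):
            is_composite[m] = 1
    return primes
```

The single `bytearray` of length `limit + 1` is the structure whose size and access pattern the summary identifies as the bottleneck.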

To overcome these limitations, the authors propose a “multidimensional sieve” that replaces the monolithic 1‑D array with a k‑dimensional array (usually k = 2 or 3). The key idea is to map each logical element index to a tuple of coordinates (i₁, i₂, …, i_k), with 0 ≤ i_j < N_j, and store the corresponding flag in a multidimensional array whose dimensions N₁, N₂, …, N_k are chosen so that the product N₁·N₂·…·N_k ≈ |S| while each N_j is small enough that a run of N_j flags fits comfortably within a cache line or memory page. The row‑major mapping function f(i₁,…,i_k) = i₁·(Π_{j=2}^k N_j) + i₂·(Π_{j=3}^k N_j) + … + i_k converts tuple coordinates to a linear offset in O(k) time, which is constant for fixed k; the inverse is obtained by repeated division with remainder. This representation yields several practical benefits:

- Memory locality – Accesses to neighboring elements in the logical order tend to stay within the same or adjacent cache lines, dramatically reducing cache misses.

- Space efficiency – By selecting dimension sizes that align with hardware page boundaries, the total allocated memory can be kept close to the theoretical minimum, avoiding the overhead of a gigantic flat array.

- Parallelism – Each dimension can be processed independently, allowing straightforward parallelization with OpenMP, MPI, or GPU kernels. The outer loops over dimensions become natural work‑sharing constructs, and the inner marking operation remains embarrassingly parallel.
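The mapping function f given above, together with its inverse, can be sketched as follows. The helper names `to_offset` and `to_coords` are hypothetical and do not come from the paper; the code only assumes zero‑based coordinates with 0 ≤ i_j < N_j.

```python
from math import prod


def to_offset(coords, dims):
    """Row-major offset: i1*(N2*...*Nk) + i2*(N3*...*Nk) + ... + ik."""
    if len(coords) != len(dims):
        raise ValueError("coords and dims must have the same length")
    offset = 0
    for i, n in zip(coords, dims):
        if not 0 <= i < n:
            raise IndexError("coordinate out of range")
        # Horner form of the sum of products: k multiply-add steps.
        offset = offset * n + i
    return offset


def to_coords(offset, dims):
    """Inverse of to_offset: recover (i1, ..., ik) from a linear offset."""
    if not 0 <= offset < prod(dims):
        raise IndexError("offset out of range")
    coords = []
    # Peel off the fastest-varying coordinate first.
    for n in reversed(dims):
        offset, i = divmod(offset, n)
        coords.append(i)
    return tuple(reversed(coords))
```

With dimensions (4, 5, 6), the tuple (1, 2, 3) maps to 1·30 + 2·6 + 3 = 45, and `to_coords(45, (4, 5, 6))` recovers (1, 2, 3). Because every offset in the range corresponds to exactly one tuple, the mapping is a bijection between the coordinate grid and the linear range.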

The authors illustrate the method with a concrete combinatorial problem: given an equivalence relation R on a finite set S, compute a single canonical representative for each equivalence class and determine the cardinality of the quotient set S/R. In a naïve implementation one would iterate over all elements, maintain a hash table of visited classes, and skip already‑processed members. Using the multidimensional sieve, each element’s signature (derived from its attributes) is encoded as a coordinate tuple. When the algorithm encounters a tuple for the first time, it marks the corresponding cell; subsequent encounters find the cell already marked and are instantly ignored. The authors prove that this procedure selects exactly one element per class, and that the number of marked cells equals |S/R|.
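The marking procedure described above can be sketched for the two‑dimensional case as follows. This is an illustration under stated assumptions rather than the authors' implementation: the function name `class_representatives` is hypothetical, and `signature` is assumed to be a complete invariant, meaning that two elements receive the same coordinate pair exactly when they are equivalent.

```python
def class_representatives(elements, signature, dims):
    """Return (representatives, class_count) using a 2-D flag grid.

    signature(x) must return a pair (r, c) with 0 <= r < dims[0] and
    0 <= c < dims[1], equal for two elements iff they are equivalent
    (an assumption of this sketch).
    """
    rows, cols = dims
    # One short flag row per first coordinate instead of one flat array.
    marked = [bytearray(cols) for _ in range(rows)]
    representatives = []
    for x in elements:
        r, c = signature(x)
        if marked[r][c]:
            continue  # class already has a representative
        marked[r][c] = 1
        representatives.append(x)  # first element seen in its class
    # Each marked cell contributed exactly one representative.
    return representatives, len(representatives)


# Example relation (chosen here for illustration): a ~ b iff a and b
# agree modulo 2 and modulo 3.
reps, count = class_representatives(
    range(20), lambda x: (x % 2, x % 3), (2, 3)
)
```

In this example the six cells of the 2 × 3 grid correspond to the six residue classes modulo 6, so `reps` is `[0, 1, 2, 3, 4, 5]` and `count` is 6.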

Complexity analysis shows that the time cost is O(|S|·k) – essentially linear in the size of the input, with only a small constant factor for the dimension count. Space consumption is O(Π N_j), which can be tuned to be only marginally larger than |S|. The paper also provides rigorous mathematical proofs that the mapping is bijective and that the marking process respects the equivalence relation’s properties (reflexivity, symmetry, transitivity).

Experimental evaluation compares the multidimensional sieve against a traditional 1‑D sieve on datasets ranging from 10⁶ to 10⁸ integers. Results indicate up to a 70 % reduction in peak memory usage and a 30 %–45 % decrease in wall‑clock time, attributable to better cache behavior. Additional benchmarks on graph coloring and Latin square generation demonstrate that, when the algorithm is parallelized across eight CPU cores, speed‑ups of roughly threefold are achieved, confirming the method’s suitability for high‑performance combinatorial computations.

The discussion section acknowledges limitations. Excessive dimensionality can increase the overhead of index calculations and cause fragmentation if the chosen N_j values do not align well with hardware characteristics. Therefore, the authors recommend a heuristic: start with k = 2, choose N₁ ≈ N₂ ≈ √|S|, then adjust based on empirical cache miss rates. For highly dynamic workloads where elements are frequently inserted or deleted, a static multidimensional array may be less appropriate than a dynamic hash‑based structure.
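The starting point of this heuristic can be sketched as follows. The function name `initial_dims` and the choice to round both dimensions up are assumptions made here so that the grid always has at least |S| cells; the subsequent tuning against measured cache miss rates is not modeled.

```python
from math import isqrt


def initial_dims(size: int) -> tuple[int, int]:
    """Pick (N1, N2) with N1 close to sqrt(size) and N1 * N2 >= size."""
    if size < 1:
        raise ValueError("size must be positive")
    n1 = isqrt(size - 1) + 1   # ceil(sqrt(size)) in exact integer arithmetic
    n2 = -(-size // n1)        # ceil(size / n1)
    return n1, n2
```

For |S| = 10⁶ this gives a 1000 × 1000 grid; for |S| = 10 it gives 4 × 3, which over‑allocates by two cells.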

In conclusion, the paper introduces a versatile, memory‑conscious framework for implementing sieve‑type algorithms on massive combinatorial domains. By leveraging multidimensional data layouts, the approach simultaneously improves spatial locality, reduces memory consumption, and enables straightforward parallel execution. The theoretical analysis, rigorous proofs, and empirical data together make a compelling case that the multidimensional sieve can become a standard tool in the design of scalable combinatorial algorithms, with potential applications ranging from number theory to graph algorithms and large‑scale data mining.