Sparse Image Representation with Epitomes

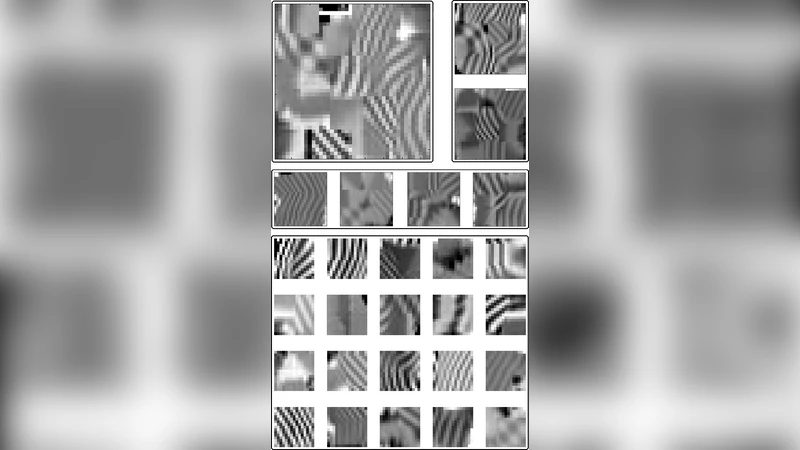

Sparse coding, which is the decomposition of a vector using only a few basis elements, is widely used in machine learning and image processing. The basis set, also called a dictionary, is learned to adapt to specific data. This approach has proven to be very effective in many image processing tasks. Traditionally, the dictionary is an unstructured “flat” set of atoms. In this paper, we study structured dictionaries which are obtained from an epitome, or a set of epitomes. The epitome is itself a small image, and the atoms are all the patches of a chosen size inside this image. This considerably reduces the number of parameters to learn and provides sparse image decompositions with shift-invariance properties. We propose a new formulation and an algorithm for learning the structured dictionaries associated with epitomes, and illustrate their use in image denoising tasks.

💡 Research Summary

The paper introduces a framework for sparse image representation that replaces the conventional “flat” dictionary, an unstructured collection of atoms, with a structured dictionary derived from an epitome. An epitome is a compact image whose overlapping patches of a chosen size constitute the dictionary atoms. This reduces the number of learnable parameters from m × p for a flat dictionary (p atoms of m pixels each) to M, the number of pixels in the epitome. It also provides inherent shift‑invariance, because overlapping patches of the epitome are shifted versions of one another, so a pattern learned at one position is also available as an atom at neighboring shifts.
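To make the parameter count concrete, here is a minimal NumPy sketch of how a dictionary could be read off an epitome. The function name `epitome_to_dictionary`, the 6 × 6 epitome, the 3 × 3 patch size, and the choice to extract patches without wrap-around at the borders are all illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def epitome_to_dictionary(epitome, patch_size):
    """Stack every overlapping patch of a 2-D epitome as one dictionary column.

    Hypothetical helper: patches are taken without wrap-around at the borders.
    """
    # windows has shape (h - s + 1, w - s + 1, s, s) for an (h, w) epitome.
    windows = np.lib.stride_tricks.sliding_window_view(
        epitome, (patch_size, patch_size)
    )
    # Flatten each patch to a vector of m = s * s pixels; one atom per column.
    return windows.reshape(-1, patch_size * patch_size).T

epitome = np.arange(36, dtype=float).reshape(6, 6)  # M = 36 learnable pixels
D = epitome_to_dictionary(epitome, patch_size=3)    # m = 9 pixels, p = 16 atoms

flat_parameters = D.shape[0] * D.shape[1]  # m * p for an unstructured dictionary
epitome_parameters = epitome.size          # M for the epitome
```

In this toy setting a flat dictionary of the same shape would need 144 free parameters, while the epitome has 36. Adjacent columns of `D` share most of their pixels, which is the overlap structure the summary refers to.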

The authors first revisit the classic sparse coding formulation: given training patches X∈ℝ^{m×n}, learn a dictionary D∈ℝ^{m×p} and coefficient matrix A∈ℝ^{p×n} by minimizing a reconstruction error plus an ℓ₁ sparsity term, subject to a unit‑norm constraint on the columns of D. They argue that this constraint is unsuitable for epitome‑based dictionaries, where overlapping patches naturally have different norms. Consequently, they propose an equivalent unconstrained formulation that incorporates a weighted ℓ₁ penalty: each coefficient α_{j,i} is multiplied by the ℓ₂ norm of its corresponding atom d_j. The dictionary is further constrained to lie in the image of a linear operator ϕ that extracts all overlapping patches from an epitome vector E∈ℝ^{M}. Thus the optimization problem becomes

min_{D∈Imϕ, A} (1/n)∑_{i=1}^{n}
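The formula above is cut off in this summary. Going only by the description in the preceding paragraph (a reconstruction error plus an ℓ₁ penalty in which each coefficient α_{j,i} is weighted by the ℓ₂ norm of its atom d_j), the objective can be sketched as follows. The regularization weight `lam`, the factor 1/2 on the squared error, and the function name are assumptions made for illustration; the constraint D∈Imϕ is not enforced here.

```python
import numpy as np

def weighted_l1_objective(X, D, A, lam):
    """Mean over patches of squared error plus a norm-weighted l1 penalty.

    Assumed form: (1/n) * sum_i [ 0.5 * ||x_i - D a_i||^2
                                  + lam * sum_j ||d_j||_2 * |a_{j,i}| ].
    """
    residual = X - D @ A                              # shape (m, n)
    reconstruction = 0.5 * np.sum(residual ** 2, axis=0)
    atom_norms = np.linalg.norm(D, axis=0)            # l2 norm of each atom d_j
    penalty = lam * np.sum(atom_norms[:, None] * np.abs(A), axis=0)
    return float(np.mean(reconstruction + penalty))

rng = np.random.default_rng(0)
X = rng.standard_normal((9, 5))    # n = 5 training patches of m = 9 pixels
D = rng.standard_normal((9, 16))   # p = 16 atoms with unequal norms
A = rng.standard_normal((16, 5))   # coefficients, one column per patch
value = weighted_l1_objective(X, D, A, lam=0.1)

# Rescaling check: normalizing the atoms and absorbing the norms into the
# coefficients leaves both the reconstruction and the penalty unchanged.
norms = np.linalg.norm(D, axis=0)
value_unit = weighted_l1_objective(X, D / norms, A * norms[:, None], lam=0.1)
```

The rescaling check illustrates why the weighted penalty can stand in for the unit-norm constraint: any dictionary with nonzero atoms and its coefficients map to a unit-norm dictionary with the same objective value.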
