Generic Multiplicative Methods for Implementing Machine Learning Algorithms on MapReduce

In this paper we introduce a generic model for multiplicative algorithms that is suitable for the MapReduce parallel programming paradigm. We implement three typical machine learning algorithms to demonstrate how similarity comparison, gradient descent, the power method, and other classic learning techniques fit this model well. Two versions of large-scale matrix multiplication are discussed, and a different method is developed for each case with regard to its computational characteristics and problem setting. In contrast to earlier research, we focus on fundamental linear algebra techniques that establish a generic approach for a range of algorithms, rather than on specific ways of scaling up algorithms one at a time. Experiments show promising results when evaluated on both speedup and accuracy. Compared with a standard implementation with computational complexity $O(m^3)$ in the worst case, the large-scale matrix multiplication experiments show that our design is considerably more efficient and maintains a good speedup as the number of cores increases. Algorithm-specific experiments also produce encouraging results on runtime performance.

💡 Research Summary

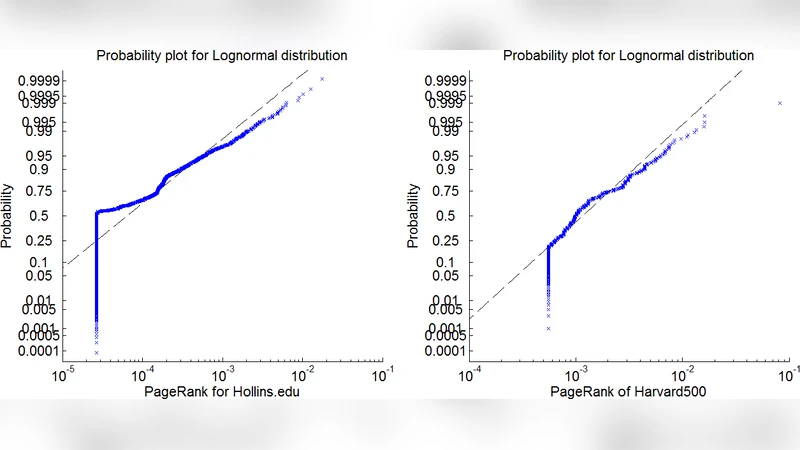

The paper proposes a generic multiplicative framework for scaling a variety of machine‑learning algorithms on the MapReduce platform. Rather than engineering each algorithm separately, the authors identify a common algebraic core (matrix multiplication, similarity (dot‑product) computation, gradient descent, and power iteration) that underlies three representative methods: Non‑Negative Matrix Factorization (NMF), Support Vector Machines (SVM), and PageRank. For NMF, the Lee‑Seung update rules are expressed as products of large matrices (e.g., $W^{T}A$, $W^{T}WH$). For SVM, the dual problem reduces to solving $\alpha^{*}=G^{-1}y$, where $G$ is the kernel matrix; the gradient‑descent implementation requires repeated multiplication of the dense kernel matrix with a much smaller vector. PageRank is cast as the power method $\pi_{k+1}=P\pi_{k}$, again a matrix‑vector multiplication.
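The following is a minimal single-machine sketch of how two of these methods reduce to repeated matrix products. It is not the authors' implementation: the function names (`matmul`, `power_iteration`, `nmf_update_H`), the column-stochastic form of $P$, the omission of a damping factor, and the small `eps` guard against division by zero are all assumptions made for illustration.

```python
def matmul(A, B):
    """Dense product of two list-of-lists matrices (the shared primitive)."""
    inner = len(B)
    assert all(len(row) == inner for row in A), "inner dimensions must match"
    cols = len(B[0])
    return [[sum(A[i][t] * B[t][j] for t in range(inner)) for j in range(cols)]
            for i in range(len(A))]


def transpose(A):
    """Return the transpose of a list-of-lists matrix."""
    return [list(col) for col in zip(*A)]


def power_iteration(P, steps=50):
    """Power method pi_{k+1} = P pi_k, assuming P is column-stochastic."""
    n = len(P)
    pi = [[1.0 / n] for _ in range(n)]  # uniform start, stored as a column vector
    for _ in range(steps):
        pi = matmul(P, pi)              # one matrix-vector product per step
    return [row[0] for row in pi]


def nmf_update_H(A, W, H, eps=1e-12):
    """One Lee-Seung style update: H <- H * (W^T A) / (W^T W H), elementwise."""
    Wt = transpose(W)
    numerator = matmul(Wt, A)               # W^T A
    denominator = matmul(matmul(Wt, W), H)  # W^T W H
    return [[H[i][j] * numerator[i][j] / (denominator[i][j] + eps)
             for j in range(len(H[0]))]
            for i in range(len(H))]
```

Both routines call nothing heavier than `matmul`, so in this sketch swapping that one helper for a distributed implementation would be the only change needed to scale them.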

To map these operations onto MapReduce, the authors introduce a two‑stage “Partition‑Summation” process. In the first stage, a Map job partitions each input matrix into blocks according to a user‑defined schema $(m,n,k)$; each block receives identifiers $(\alpha,\beta,\gamma)$ that denote its position in the output matrix and its intermediate multiplication group. In the second stage, a Reduce job groups the blocks that share the same $(\alpha,\beta)$, computes the partial product for each $\gamma$, and sums the partial products to produce the final block of the output matrix. The design uses sharding and hashing to keep related blocks on the same node, which improves data locality and reduces network shuffle traffic. By adjusting the partition parameters, the method adapts to matrix sparsity and often performs less work in practice than the theoretical $O(mnk)$ bound suggests.
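The two stages can be simulated in a single process to make the block bookkeeping concrete. This is a sketch under stated assumptions, not the paper's Hadoop code: the function names and the block-size parameters `bm`, `bn`, `bk` are hypothetical, the shuffle is modelled by an in-memory dictionary keyed on $(\alpha,\beta)$, and no sparsity handling is included.

```python
from collections import defaultdict


def split_blocks(M, rows_per_block, cols_per_block):
    """Yield ((block_row, block_col), block) pairs for a list-of-lists matrix."""
    for r0 in range(0, len(M), rows_per_block):
        for c0 in range(0, len(M[0]), cols_per_block):
            block = [row[c0:c0 + cols_per_block]
                     for row in M[r0:r0 + rows_per_block]]
            yield (r0 // rows_per_block, c0 // cols_per_block), block


def block_matmul(A, B):
    """Product of two small dense blocks."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


def block_add(X, Y):
    """Elementwise sum of two equally sized blocks."""
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]


def partition_phase(A, B, bm, bn, bk):
    """Map-like stage: tag blocks with (alpha, beta, gamma), group by (alpha, beta)."""
    a_blocks = dict(split_blocks(A, bm, bk))  # keyed by (alpha, gamma)
    b_blocks = dict(split_blocks(B, bk, bn))  # keyed by (gamma, beta)
    alphas = sorted({alpha for alpha, _ in a_blocks})
    betas = sorted({beta for _, beta in b_blocks})
    groups = defaultdict(lambda: defaultdict(dict))
    for (alpha, gamma), blk in a_blocks.items():
        for beta in betas:    # an A block feeds every output block in its row
            groups[(alpha, beta)][gamma]["A"] = blk
    for (gamma, beta), blk in b_blocks.items():
        for alpha in alphas:  # a B block feeds every output block in its column
            groups[(alpha, beta)][gamma]["B"] = blk
    return groups


def summation_phase(groups):
    """Reduce-like stage: multiply per gamma, then sum into one output block."""
    out = {}
    for key, by_gamma in groups.items():
        total = None
        for gamma in sorted(by_gamma):
            part = block_matmul(by_gamma[gamma]["A"], by_gamma[gamma]["B"])
            total = part if total is None else block_add(total, part)
        out[key] = total
    return out


def assemble(blocks, bm, bn, n_rows, n_cols):
    """Stitch the output blocks back into a full matrix."""
    C = [[0] * n_cols for _ in range(n_rows)]
    for (alpha, beta), blk in blocks.items():
        for i, row in enumerate(blk):
            for j, value in enumerate(row):
                C[alpha * bm + i][beta * bn + j] = value
    return C
```

A typical call would be `assemble(summation_phase(partition_phase(A, B, bm, bn, bk)), bm, bn, len(A), len(B[0]))`. Replicating each block to every output key it contributes to is the simplest grouping choice; it trades extra shuffle volume for a reducer that needs no further lookups.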

Experimental evaluation on a Hadoop cluster demonstrates near‑linear speed‑up for NMF and PageRank as the number of cores increases from 4 to 64, while SVM benefits from the sparsity‑aware handling of the kernel matrix, achieving substantial memory savings and competitive runtime. The results confirm that the multiplicative model not only preserves algorithmic accuracy but also provides a scalable, reusable implementation strategy across diverse learning tasks. In summary, the work offers a unified, algebra‑centric approach to parallelizing machine‑learning algorithms on MapReduce, reducing development effort and delivering strong performance and scalability.
