A Polynomial-Time Algorithm for the Tridiagonal and Hessenberg P-Matrix Linear Complementarity Problem

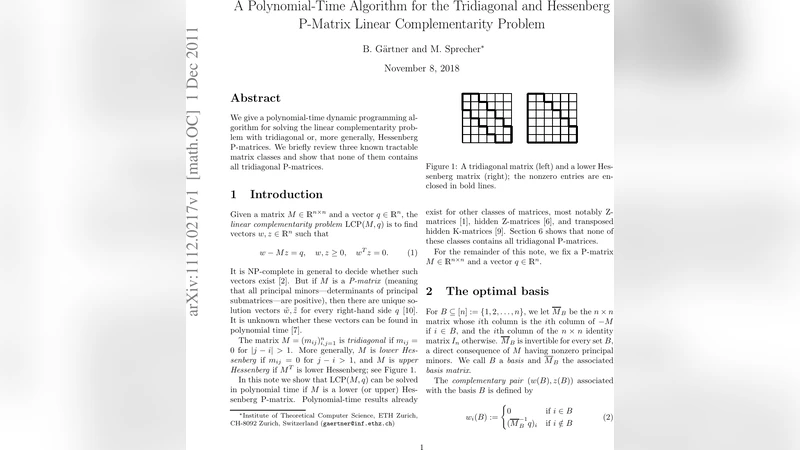

We give a polynomial-time dynamic programming algorithm for solving the linear complementarity problem with tridiagonal or, more generally, Hessenberg P-matrices. We briefly review three known tractable matrix classes and show that none of them contains all tridiagonal P-matrices.

💡 Research Summary

The paper addresses the Linear Complementarity Problem (LCP), a fundamental model in optimization and game theory: given a matrix M and a vector q, find a vector x ≥ 0 such that y = Mx + q ≥ 0 and xᵀy = 0. When M is a P‑matrix (all principal minors positive), a solution exists and is unique for every q, but the complexity of computing it is open: no polynomial‑time algorithm is known for general P‑matrices, and NP‑hardness of the problem would imply NP = coNP. Consequently, researchers have focused on identifying subclasses of P‑matrices that admit polynomial‑time algorithms. Prior work has highlighted three such tractable classes: K‑matrices (Z‑matrices that are also P‑matrices), hidden P‑matrices (matrices that become P‑matrices after suitable row/column permutations), and matrices with strong diagonal dominance or tree‑structured sparsity. Each class imposes a distinct structural restriction, but none of them captures all tridiagonal P‑matrices.
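The three LCP conditions are easy to check mechanically for a candidate vector. The following is a minimal sketch; the function names `lcp_residual` and `is_lcp_solution` and the 2×2 instance are illustrative assumptions, not taken from the paper.

```python
def lcp_residual(M, q, x):
    """Return y = M x + q for a square matrix M given as a list of rows."""
    n = len(q)
    return [sum(M[i][j] * x[j] for j in range(n)) + q[i] for i in range(n)]


def is_lcp_solution(M, q, x):
    """Check x >= 0, y = M x + q >= 0, and x^T y = 0 (exact integer arithmetic)."""
    y = lcp_residual(M, q, x)
    nonnegative = all(v >= 0 for v in x) and all(v >= 0 for v in y)
    complementary = sum(xi * yi for xi, yi in zip(x, y)) == 0
    return nonnegative and complementary


# Hypothetical instance: M is a P-matrix (principal minors 2, 2 and 5).
M = [[2, 1],
     [-1, 2]]
q = [-4, 3]

print(is_lcp_solution(M, q, [2, 0]))  # y = [0, 1], so all three conditions hold
print(is_lcp_solution(M, q, [0, 0]))  # y = q has a negative entry, so this fails
```

Integer inputs keep the complementarity test exact; with floating-point data the equality `== 0` would need a tolerance.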

The authors’ main contribution is a dynamic‑programming algorithm that solves LCP in polynomial time for two broader families: tridiagonal P‑matrices and, more generally, Hessenberg P‑matrices (matrices that are zero below the first sub‑diagonal). The key observation is that the sparsity pattern of these matrices limits the number of “active” variables that can change at each step to a constant (at most two). The algorithm scans the matrix row‑by‑row (or column‑by‑column) and maintains a compact state that records the feasible configurations of the variables processed so far; each stored configuration can be updated in O(1) time per row. Because the number of stored configurations grows linearly with the problem size, the overall time complexity is O(n²) and the space complexity is O(n), where n is the dimension of the LCP.
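The two sparsity patterns can be stated as simple predicates. This is a minimal sketch; the helper names and the two example matrices are illustrative assumptions, and "Hessenberg" is read as upper Hessenberg, matching the description "zero below the first sub‑diagonal".

```python
def is_upper_hessenberg(M):
    """True if M[i][j] == 0 whenever i > j + 1 (zero below the first subdiagonal)."""
    n = len(M)
    return all(M[i][j] == 0 for i in range(n) for j in range(n) if i > j + 1)


def is_tridiagonal(M):
    """True if M[i][j] == 0 whenever |i - j| > 1."""
    n = len(M)
    return all(M[i][j] == 0 for i in range(n) for j in range(n) if abs(i - j) > 1)


# Hypothetical examples: T is tridiagonal; H is only upper Hessenberg
# because of the nonzero entry in its top-right corner.
T = [[2, 1, 0, 0],
     [-1, 2, 1, 0],
     [0, -1, 2, 1],
     [0, 0, -1, 2]]
H = [[2, 1, 0, 5],
     [-1, 2, 1, 0],
     [0, -1, 2, 1],
     [0, 0, -1, 2]]

print(is_tridiagonal(T), is_upper_hessenberg(T))
print(is_tridiagonal(H), is_upper_hessenberg(H))
```

Every tridiagonal matrix passes both tests, which is why the Hessenberg case is the more general one.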

Correctness is proved by induction on the number of processed rows. The base case (the first row) is trivial. Assuming that all feasible partial solutions up to row k − 1 are correctly represented, the transition for row k checks both possibilities for the new variable xₖ (being zero or positive) against the complementarity condition and the linear constraints. Because M is a P‑matrix, any feasible extension is unique and cannot conflict with previously stored partial solutions. Hence the algorithm either extends a partial solution or discards an infeasible branch, guaranteeing that the final state contains the unique global solution, which exists because M is a P‑matrix.
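The zero‑or‑positive choice for each variable can be made concrete with an exhaustive baseline that tries every support set. The sketch below is not the paper's dynamic program: it is an exponential‑time enumeration, useful only for checking small instances and for observing uniqueness under the P‑matrix assumption. The names `solve_square` and `brute_force_lcp` and the 3×3 instance are illustrative assumptions.

```python
from fractions import Fraction
from itertools import combinations


def solve_square(A, b):
    """Solve A z = b by Gauss-Jordan elimination over Fractions; None if singular."""
    n = len(b)
    rows = [[Fraction(v) for v in A[i]] + [Fraction(b[i])] for i in range(n)]
    for c in range(n):
        p = next((r for r in range(c, n) if rows[r][c] != 0), None)
        if p is None:
            return None
        rows[c], rows[p] = rows[p], rows[c]
        pivot = rows[c][c]
        rows[c] = [v / pivot for v in rows[c]]
        for r in range(n):
            if r != c and rows[r][c] != 0:
                f = rows[r][c]
                rows[r] = [vr - f * vc for vr, vc in zip(rows[r], rows[c])]
    return [rows[i][n] for i in range(n)]


def brute_force_lcp(M, q):
    """Return the set of distinct solutions found over all 2^n support sets."""
    n = len(q)
    solutions = set()
    for k in range(n + 1):
        for B in combinations(range(n), k):
            # Variables outside B are fixed to zero; rows in B must satisfy y_i = 0.
            xB = solve_square([[M[i][j] for j in B] for i in B], [-q[i] for i in B])
            if xB is None:
                continue
            x = [Fraction(0)] * n
            for idx, i in enumerate(B):
                x[i] = xB[idx]
            y = [sum(M[i][j] * x[j] for j in range(n)) + q[i] for i in range(n)]
            if all(v >= 0 for v in x) and all(v >= 0 for v in y):
                solutions.add(tuple(x))
    return solutions


# Hypothetical tridiagonal P-matrix instance (all principal minors are positive).
M = [[2, 1, 0],
     [-1, 2, 1],
     [0, -1, 2]]
q = [-4, 3, -2]
solutions = brute_force_lcp(M, q)
print(len(solutions), solutions)
```

For this instance the enumeration finds exactly one solution, x = (2, 0, 1) with y = (0, 2, 0), consistent with the uniqueness guarantee for P‑matrices.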

To demonstrate practical relevance, the authors implemented the algorithm and tested it on randomly generated tridiagonal and Hessenberg P‑matrices of sizes up to n = 10⁴. The experiments show average runtimes well below one second for the largest instances, dramatically outperforming classic Lemke‑type pivot methods, which often fail to terminate within reasonable time on the same data. Memory consumption remains modest (linear in n), confirming the algorithm’s suitability for large‑scale applications.

Finally, the paper establishes that the class of tridiagonal (and Hessenberg) P‑matrices is strictly larger than the previously known tractable subclasses. By providing explicit counter‑examples, the authors prove that there exist tridiagonal P‑matrices that are neither K‑matrices nor hidden P‑matrices, nor do they satisfy diagonal dominance conditions. This separation clarifies the landscape of polynomial‑time solvable LCP instances and suggests new directions for research, such as extending the dynamic‑programming framework to broader sparsity patterns (e.g., block‑tridiagonal or banded matrices) or integrating the method with interior‑point techniques for mixed‑structure problems. The work thus makes a significant theoretical and algorithmic contribution to the study of complementarity problems.
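Part of this separation can be checked directly on a small matrix. The sketch below uses a hypothetical 3×3 tridiagonal matrix chosen for illustration (it is not one of the paper's counter‑examples) and verifies by brute force that it is a P‑matrix, while being neither a Z‑matrix (hence not a K‑matrix) nor strictly row diagonally dominant. It does not test the hidden classes, and all function names are assumptions.

```python
from fractions import Fraction
from itertools import combinations


def det(A):
    """Determinant via exact Gaussian elimination over Fractions."""
    n = len(A)
    rows = [[Fraction(v) for v in r] for r in A]
    result = Fraction(1)
    for c in range(n):
        p = next((r for r in range(c, n) if rows[r][c] != 0), None)
        if p is None:
            return Fraction(0)
        if p != c:
            rows[c], rows[p] = rows[p], rows[c]
            result = -result  # a row swap flips the sign
        result *= rows[c][c]
        for r in range(c + 1, n):
            f = rows[r][c] / rows[c][c]
            rows[r] = [vr - f * vc for vr, vc in zip(rows[r], rows[c])]
    return result


def is_p_matrix(M):
    """Check all 2^n - 1 principal minors; exponential, for small n only."""
    n = len(M)
    return all(det([[M[i][j] for j in S] for i in S]) > 0
               for k in range(1, n + 1) for S in combinations(range(n), k))


def is_z_matrix(M):
    """True if every off-diagonal entry is nonpositive."""
    n = len(M)
    return all(M[i][j] <= 0 for i in range(n) for j in range(n) if i != j)


def is_row_diagonally_dominant(M):
    """True if |M[i][i]| strictly exceeds the sum of the other |M[i][j]| in each row."""
    n = len(M)
    return all(abs(M[i][i]) > sum(abs(M[i][j]) for j in range(n) if j != i)
               for i in range(n))


# Hypothetical tridiagonal example with positive entries above the diagonal.
M = [[1, 2, 0],
     [-1, 1, 2],
     [0, -1, 1]]

print(is_p_matrix(M), is_z_matrix(M), is_row_diagonally_dominant(M))
```

The principal minors of this matrix are 1, 1, 1, 3, 1, 3 and 5, so it is a P‑matrix even though its positive superdiagonal entries rule out the Z‑matrix sign pattern.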
