A fast recursive coordinate bisection tree for neighbour search and gravity

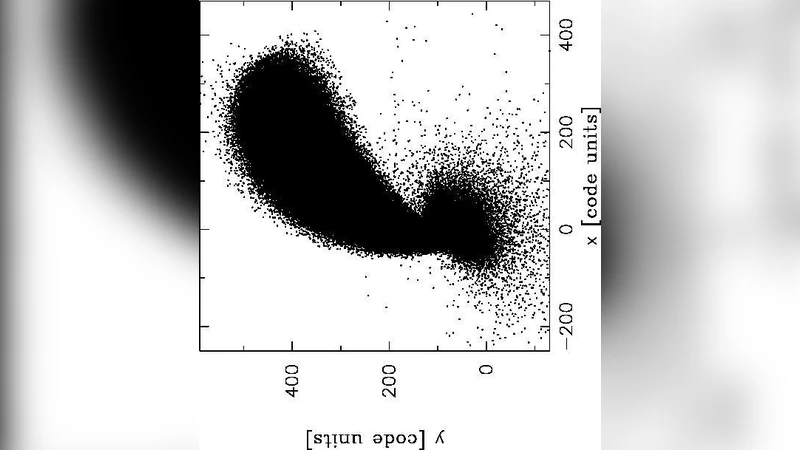

We introduce our new binary tree code for neighbour search and gravitational force calculations in an N-particle system. The tree is built in a “top-down” fashion by “recursive coordinate bisection”, where on each tree level we split the longest side of a cell through its centre of mass. This procedure continues until the average number of particles in the lowest tree level has dropped below a prescribed value. To calculate the forces on the particles in each lowest-level cell we split the gravitational interaction into a near field and a far field. Since our main intended applications are SPH simulations, we calculate the near field by a direct, kernel-smoothed summation, while the far field is evaluated via a Cartesian Taylor expansion up to quadrupole order. Instead of applying the far-field approach for each particle separately, we use another Taylor expansion around the centre of mass of each lowest-level cell to determine the forces at the particle positions. Due to this “cell-cell interaction” the code performance is close to O(N), where N is the number of particles used. We describe in detail various technicalities that ensure a low memory footprint and efficient cache use. In a set of benchmark tests we scrutinize our new tree and compare it to the “Press tree” that we have previously made ample use of. At a slightly higher force accuracy than the Press tree, our tree turns out to be substantially faster, and increasingly so for larger particle numbers. For four million particles our tree build is faster by a factor of 25 and the time for neighbour search and gravity is reduced by more than a factor of 6. In single-processor tests with up to 10^8 particles we confirm experimentally that the scaling behaviour is close to O(N). The current Fortran 90 code version is OpenMP-parallel and scales excellently with the number of processors of our test machine (24).

💡 Research Summary

The paper presents a new binary tree algorithm designed for fast neighbour searching and gravitational force calculation in large N‑particle systems, with a particular focus on Smoothed Particle Hydrodynamics (SPH) applications. The tree is built top‑down using a “Recursive Coordinate Bisection” (RCB) strategy: at each level the longest side of a cell is split through its centre of mass, producing two daughter cells that are roughly balanced in particle number. This process continues until the average number of particles per leaf cell falls below a user‑defined threshold Nₗₗ.
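The build described above can be sketched in a few lines of Python, for illustration only (the authors' code is Fortran 90). The function names, the `(mass, position)` tuple layout, the use of the particles' bounding box to find the longest side, and the rule that particles exactly on the split plane go to the right daughter are all assumptions made for this sketch, not details taken from the paper.

```python
def tree_depth(n_particles, n_leaf_avg):
    """Smallest depth L with n_particles / 2**L <= n_leaf_avg."""
    depth = 0
    while n_particles / 2 ** depth > n_leaf_avg:
        depth += 1
    return depth


def split_cell(particles):
    """Split one cell along its longest side at its centre of mass.

    particles: list of (mass, (x, y, z)) tuples. Returns (left, right).
    """
    if not particles:
        return [], []  # an empty cell simply produces two empty daughters
    dims = len(particles[0][1])
    lo = [min(pos[d] for _, pos in particles) for d in range(dims)]
    hi = [max(pos[d] for _, pos in particles) for d in range(dims)]
    # Longest side of the (assumed) bounding box of the cell's particles.
    axis = max(range(dims), key=lambda d: hi[d] - lo[d])
    total_mass = sum(m for m, _ in particles)
    com = sum(m * pos[axis] for m, pos in particles) / total_mass
    left = [p for p in particles if p[1][axis] < com]
    right = [p for p in particles if p[1][axis] >= com]
    return left, right


def build_leaves(particles, n_leaf_avg):
    """Split every cell, level by level, down to the chosen depth."""
    cells = [particles]
    for _ in range(tree_depth(len(particles), n_leaf_avg)):
        next_cells = []
        for cell in cells:
            next_cells.extend(split_cell(cell))
        cells = next_cells
    return cells
```

Because the depth is fixed by the average occupancy rather than by each cell's own count, every leaf sits on the same level; individual leaves may hold more or fewer particles than the threshold.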

Two possible splitting criteria are examined: a median‑position split (MPS) and a centre‑of‑mass split (CMS). While MPS guarantees perfectly balanced particle counts, it can separate physically related particles and leads to larger errors in the subsequent multipole expansion. The authors find that CMS dramatically reduces force errors, especially because the far‑field force is evaluated via a Cartesian Taylor expansion around the cell’s centre of mass. Consequently, CMS is adopted for the final implementation despite the occasional creation of empty leaf cells (which are negligible in number and ignored).
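The difference between the two criteria is easy to see on a one-dimensional toy example. The sketch below is hypothetical (the helper names and the sample data are not from the paper): it computes the split coordinate under each rule and counts how many particles land on each side.

```python
from statistics import median


def split_positions(xs, masses):
    """Return (median-position split, centre-of-mass split) along one axis."""
    mps = median(xs)
    cms = sum(m * x for m, x in zip(masses, xs)) / sum(masses)
    return mps, cms


def side_counts(xs, split):
    """Number of particles strictly left of the split, and the rest."""
    left = sum(1 for x in xs if x < split)
    return left, len(xs) - left


# Five clustered particles plus one distant outlier, all of equal mass.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 20.0]
masses = [1.0] * len(xs)
mps, cms = split_positions(xs, masses)
```

Here the median split cuts through the middle of the cluster to keep the counts equal, while the centre-of-mass split keeps the cluster in one cell and isolates the outlier, at the price of unequal counts.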

The tree is binary rather than an octree. For the same number of leaf cells a binary tree is about three times deeper than an octree (log₂ versus log₈ of the leaf count), but it yields shorter interaction lists for a given accuracy. Binary cells also tend to be more compact, which reduces high‑order multipole moments and improves convergence of the far‑field expansion.

A key performance optimisation is the in‑place particle sorting performed during tree construction. Inspired by quick‑sort, the algorithm makes a single pass through the particles in a node, moving those with coordinates less than the split value to the front of the array and those greater to the back. This yields a contiguous block of particles for each cell, eliminating the need for explicit particle lists or pointer structures. The sorting cost is O(N log N) overall, but because each level scans the array only once, the tree build time is less than 1 % of the total runtime in the benchmark tests.
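A quick-sort-style partition of this kind can be sketched as follows. This is a generic two-pointer partition written for illustration; the function name, argument layout and the choice to send particles equal to the split value to the back are assumptions, and the authors' Fortran routine may differ in detail.

```python
def partition_in_place(particles, start, end, axis, split):
    """Reorder particles[start:end] so that entries with coordinate < split
    along `axis` come first. Returns the index of the first entry of the
    back block, so the two daughters are particles[start:mid] and
    particles[mid:end]."""
    i, j = start, end - 1
    while i <= j:
        if particles[i][axis] < split:
            i += 1  # already on the correct side
        else:
            # Move this particle to the back block and shrink the window.
            particles[i], particles[j] = particles[j], particles[i]
            j -= 1
    return i
```

Each daughter cell is then fully described by a start index and a count into the particle array, which is why no per-cell particle list is needed.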

Node labelling is implemented via simple integer arithmetic (bit‑shifts), allowing parent‑child relationships to be recovered without extra memory. This further reduces the memory footprint and improves cache locality during tree traversal.
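The summary does not spell out the labelling scheme. One common convention that fits the description, assumed here purely for illustration, numbers the root as 1 and the daughters of node n as 2n and 2n+1, so that every relationship reduces to a shift:

```python
def parent(n):
    """Label of the parent of node n (root is 1)."""
    return n >> 1


def left_child(n):
    return n << 1


def right_child(n):
    return (n << 1) | 1


def level(n):
    """Tree level of node n, with the root on level 0."""
    return n.bit_length() - 1


def first_label_on_level(lvl):
    """Smallest label on a given level; labels run to 2**(lvl + 1) - 1."""
    return 1 << lvl
```

With such a scheme, cell properties can live in flat arrays indexed by label, and no parent or child pointers have to be stored.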

Neighbour searching exploits the sorted particle layout: for each leaf cell the algorithm checks particles within the cell and in nearby cells that intersect the SPH kernel support radius. The near‑field gravitational force is computed directly with a kernel‑smoothed summation, while the far field is approximated using a Cartesian Taylor expansion up to quadrupole order. Crucially, the authors employ a “cell‑cell interaction” scheme: rather than evaluating the far field separately for every particle, they evaluate it once at the centre of mass of each lowest‑level (receiving) cell and use a second Taylor expansion about that point to obtain the forces at the positions of the particles inside the cell. This lowers the cost from the O(N log N) typical of particle‑by‑particle tree walks to close to O(N).
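The idea of expanding the far field about the receiving cell's centre can be illustrated with a deliberately simplified sketch. It is not the authors' scheme: the distant cell is treated as a single point mass (monopole only, no quadrupole terms), the expansion about the receiving centre is truncated at first order, and G = 1. All function names are hypothetical.

```python
import math


def accel(r, src, mass, G=1.0):
    """Acceleration at r due to a point mass at src."""
    d = [ri - si for ri, si in zip(r, src)]
    dist = math.sqrt(sum(x * x for x in d))
    return [-G * mass * x / dist ** 3 for x in d]


def accel_jacobian(r, src, mass, G=1.0):
    """Gradient of the acceleration field, d a_i / d r_j, at r."""
    d = [ri - si for ri, si in zip(r, src)]
    dist = math.sqrt(sum(x * x for x in d))
    return [[-G * mass * ((1.0 if i == j else 0.0) / dist ** 3
                          - 3.0 * d[i] * d[j] / dist ** 5)
             for j in range(3)] for i in range(3)]


def expand_to_particles(centre, particles, src, mass):
    """Evaluate the far field once at `centre`, then Taylor-expand it
    (first order) to each particle position in the receiving cell."""
    a0 = accel(centre, src, mass)
    jac = accel_jacobian(centre, src, mass)
    result = []
    for p in particles:
        delta = [pi - ci for pi, ci in zip(p, centre)]
        result.append([a0[i] + sum(jac[i][j] * delta[j] for j in range(3))
                       for i in range(3)])
    return result
```

The expensive part (`accel` and `accel_jacobian`) runs once per cell pair; each particle then costs only a small matrix-vector product, which is where the saving over particle-by-particle evaluation comes from.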

Benchmarking on a single processor shows that for four million particles the new RCB tree is built 25 times faster than the previously used Press tree, and the combined neighbour search and gravity calculation is more than six times faster. Single‑processor tests with up to 10⁸ particles confirm experimentally that the scaling behaviour is close to O(N). The Fortran 90 implementation is parallelised with OpenMP and scales very well with the number of processors on the 24‑processor test machine. Memory consumption remains low because each cell stores only a few quantities (mass, centre of mass, quadrupole moments) and particle indices are implicit in the sorted arrays.

In summary, the authors deliver a highly efficient, memory‑conservative, and cache‑friendly tree algorithm that achieves near‑linear scaling for neighbour search and gravity in large‑scale SPH simulations. By combining recursive coordinate bisection, centre‑of‑mass splitting, in‑place particle sorting, and cell‑cell Taylor expansions, the method outperforms the traditional Press tree in both speed and accuracy, making it a valuable tool for future high‑resolution astrophysical simulations.
