Communicating Concurrent Functions

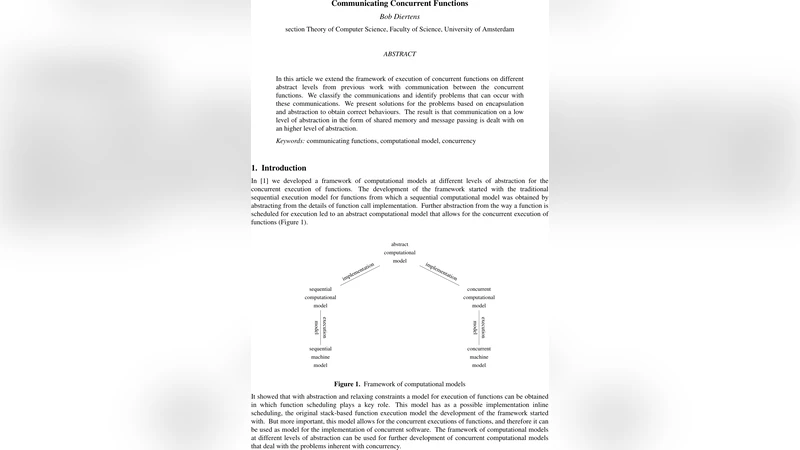

In this article we extend the framework of execution of concurrent functions on different abstract levels from previous work with communication between the concurrent functions. We classify the communications and identify problems that can occur with these communications. We present solutions for the problems based on encapsulation and abstraction to obtain correct behaviours. The result is that communication on a low level of abstraction in the form of shared memory and message passing is dealt with on an higher level of abstraction.

💡 Research Summary

The paper builds on the author’s earlier work that introduced a hierarchy of abstract computational models for executing functions concurrently. The original framework started from a sequential function‑call model, abstracted away implementation details, and then introduced a scheduling abstraction that allowed multiple functions to run in parallel. In the current contribution the authors extend this abstract concurrent execution model with explicit communication between the concurrent functions.

Communication is classified along two orthogonal dimensions: (1) direct vs. indirect and (2) unidirectional vs. bidirectional. Direct communication treats the send‑and‑receive pair as a single invisible atomic action at the level of abstraction considered. Indirect communication, by contrast, uses a shared storage location: one function writes a message, another reads it later. Because the write and read actions are separable, indirect communication is vulnerable to several problems: (a) the two actions may interleave and corrupt each other, (b) a new message may overwrite an unread one, and (c) other concurrent functions may access the same location, causing additional interference.

To solve these problems the authors propose a two‑layer encapsulation strategy. The first layer is a classic lock‑unlock mechanism that guarantees exclusive access to the shared location. The second layer hides this lock inside a dedicated object (or “communication function”), making the location inaccessible from outside code. This encapsulation forces every read or write to be preceded by a lock and followed by an unlock, eliminating unsynchronised accesses.

For unidirectional indirect communication the basic lock‑write‑unlock and lock‑read‑unlock sequences are wrapped into a single abstract communication function. The authors further enrich this wrapper with a status flag (and associated set/get operations) that records whether a message is currently present. The flag enforces the constraint that a write may only occur when no unread message exists, and a read may only occur when a message is available. This yields a producer‑consumer style protocol that is expressed entirely at the function‑level abstraction.

Bidirectional indirect communication is treated as two independent unidirectional channels. When both directions share the same storage cell, the same interference problems arise, plus the risk that one side overwrites the other’s pending message. The solution is to extend the status‑machine to track four (or more) states per direction: “write‑allowed”, “read‑allowed”, “both‑waiting”, etc. By encoding which side is allowed to write or read at any moment, the protocol prevents deadlocks and lost messages even when the two channels share a single memory cell.

The paper also discusses two special cases. First, when only the most recent message matters (e.g., real‑time sensor streams), the status constraint can be relaxed: a new write may overwrite an unread message, and the receiver simply processes the latest value. This can be implemented either with the full status‑based mechanism or with the simpler lock‑write‑unlock primitive, depending on whether the sender needs to know if the previous message was consumed. Second, the authors consider “undirected” communication, which corresponds to the traditional shared‑memory model where any participant may read or write at any time. For simple reads/writes the basic lock mechanism suffices; for compound operations that must be atomic (read‑modify‑write) the protocol requires the full lock‑read‑process‑write‑unlock sequence.

In the conclusion the authors summarise that by classifying communication forms, identifying the associated hazards, and providing encapsulated lock‑based solutions, they have extended their abstract computational model to cover realistic inter‑function communication. The mechanisms are deliberately kept at a high level of abstraction, allowing concrete implementations to replace the internal lock or status objects with any equivalent synchronization primitive (mutexes, semaphores, monitors) without changing the observable behaviour of the system. Consequently, the work offers a principled, model‑driven approach to designing safe concurrent function interactions, bridging the gap between low‑level shared‑memory/message‑passing techniques and high‑level functional abstractions.

Comments & Academic Discussion

Loading comments...

Leave a Comment