A Bayesian Model for Plan Recognition in RTS Games applied to StarCraft

The task of keyhole (unobtrusive) plan recognition is central to adaptive game AI. “Tech trees” or “build trees” are the core of real-time strategy (RTS) game strategic (long-term) planning. This paper presents a generic and simple Bayesian model for RTS build tree prediction from noisy observations, whose parameters are learned from replays (game logs). This unsupervised machine learning approach involves minimal work for the game developers as it leverages players’ data (common in RTS). We applied it to StarCraft and showed that it yields high-quality and robust predictions that can feed an adaptive AI.

💡 Research Summary

The paper tackles the problem of unobtrusive plan recognition in real‑time strategy (RTS) games by focusing on the “build tree” – the sequence of structures, technologies and units that define a player’s long‑term strategy. The authors propose a generic Bayesian network that treats the complete build tree as a hidden variable, the game time as a contextual variable, and the observed buildings or units as noisy evidence. The model’s joint distribution is factorised as P(BuildTree)·P(Time|BuildTree)·∏ P(Observation | BuildTree, Time). A prior over build trees is obtained directly from a large corpus of StarCraft 1 replays, while the conditional probabilities of observations given a particular build tree and time are learned in an unsupervised fashion using an Expectation‑Maximisation (EM) algorithm. Dirichlet smoothing is applied to the prior to avoid over‑fitting to popular strategies, and a Beta‑Bernoulli formulation captures the binary nature of “is this building present?” observations together with an explicit noise parameter that models missed or spurious detections.
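The factorisation described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the candidate build trees, replay counts, timing statistics, and noise rates are all hypothetical, the Gaussian form of P(Time | BuildTree) is an assumption, and the observation model is simplified so that it does not depend on time.

```python
import math

# Hypothetical candidate build trees (sets of buildings) and replay counts.
BUILD_TREES = {
    "gateway_only": frozenset({"pylon", "gateway"}),
    "gateway_core": frozenset({"pylon", "gateway", "cybernetics_core"}),
    "forge_expand": frozenset({"pylon", "forge", "nexus_2"}),
}
REPLAY_COUNTS = {"gateway_only": 50, "gateway_core": 120, "forge_expand": 30}
# Hypothetical (mean, std) in seconds of the time at which each tree is seen.
TIME_STATS = {
    "gateway_only": (90.0, 20.0),
    "gateway_core": (150.0, 30.0),
    "forge_expand": (170.0, 35.0),
}
BUILDINGS = sorted(set().union(*BUILD_TREES.values()))


def smoothed_prior(counts, alpha=1.0):
    """P(BuildTree) from replay counts with additive (Dirichlet) smoothing."""
    total = sum(counts.values()) + alpha * len(counts)
    return {name: (c + alpha) / total for name, c in counts.items()}


def gaussian_pdf(x, mean, std):
    """Assumed Gaussian form for P(Time | BuildTree)."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def observation_likelihood(observed, tree, p_miss=0.3, p_spurious=0.02):
    """Product of per-building Bernoulli terms with explicit noise rates."""
    lik = 1.0
    for building in BUILDINGS:
        seen = building in observed
        if building in tree:
            lik *= (1.0 - p_miss) if seen else p_miss
        else:
            lik *= p_spurious if seen else (1.0 - p_spurious)
    return lik


def posterior(observed, time_s):
    """Normalised P(BuildTree | observations, time) over all candidates."""
    prior = smoothed_prior(REPLAY_COUNTS)
    scores = {}
    for name, tree in BUILD_TREES.items():
        mean, std = TIME_STATS[name]
        scores[name] = (
            prior[name]
            * gaussian_pdf(time_s, mean, std)
            * observation_likelihood(observed, tree)
        )
    norm = sum(scores.values())
    return {name: s / norm for name, s in scores.items()}


post = posterior({"pylon", "gateway", "cybernetics_core"}, time_s=150.0)
best = max(post, key=post.get)
```

Each candidate is scored once and the scores are normalised, so the cost grows linearly with the number of candidate build trees.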

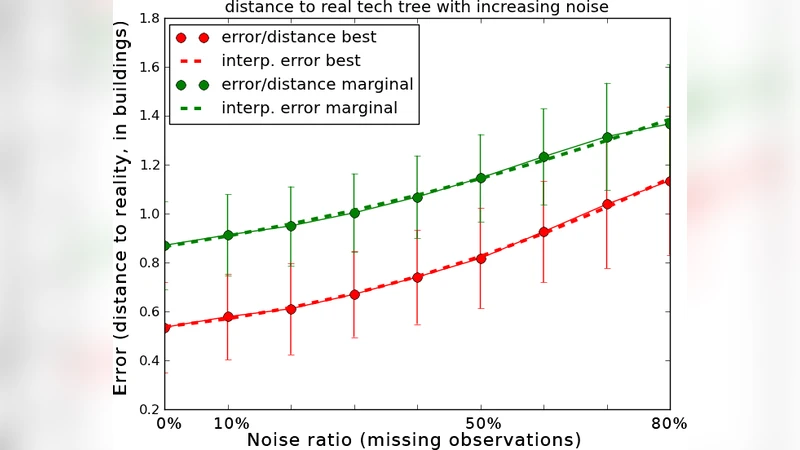

During inference, the system receives a stream of partial observations (e.g., the opponent’s barracks, a few combat units) and computes the posterior distribution over possible build trees in real time. Exact belief propagation is used because the network is a simple chain, yielding a computational cost linear in the number of candidate build trees. The posterior can be used in two ways: (1) to identify the most likely overall strategy (e.g., “2‑base rush”, “tech‑to‑storm”) and (2) to predict the next structures or units that are likely to appear within a short time horizon. The authors evaluate the approach on 1,200 training replays and 300 test replays from the StarCraft 1 community. When observations are complete, the model correctly identifies the opponent’s build tree 92 % of the time and predicts the next building with 85 % accuracy. Even when up to 30 % of observations are randomly removed, accuracy remains above 80 %, demonstrating robustness to the noisy, partially observable nature of RTS games. The average inference time per frame is 5–10 ms, confirming that the method can be embedded in a live AI loop without performance penalties.
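The second use of the posterior, predicting what is likely to appear next, can be sketched by marginalising over build trees. The function below is a hypothetical illustration: it ranks buildings not yet observed by the total posterior mass of the trees that contain them, and it ignores the time horizon mentioned above. The posterior values and tree names are made up for the example.

```python
def predict_unseen_buildings(posterior, build_trees, observed):
    """Rank not-yet-observed buildings by their marginal posterior probability."""
    marginals = {}
    for name, prob in posterior.items():
        # Buildings that this candidate tree contains but we have not seen yet.
        for building in build_trees[name] - observed:
            marginals[building] = marginals.get(building, 0.0) + prob
    return sorted(marginals.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical candidate trees and posterior, for illustration only.
build_trees = {
    "gateway_only": frozenset({"pylon", "gateway"}),
    "gateway_core": frozenset({"pylon", "gateway", "cybernetics_core"}),
    "forge_expand": frozenset({"pylon", "forge", "nexus_2"}),
}
posterior = {"gateway_only": 0.2, "gateway_core": 0.7, "forge_expand": 0.1}

ranking = predict_unseen_buildings(posterior, build_trees, {"pylon", "gateway"})
```

Here the top-ranked entry is the building carried by the most probable tree; a fuller version would also weight candidates by when each building typically appears.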

A key contribution of the work is its minimal reliance on hand‑crafted rules or expert labeling. All parameters are derived automatically from player‑generated data, which is abundant for most modern RTS titles. Consequently, the same framework can be transferred to other games (e.g., Warcraft III, Age of Empires) simply by feeding the appropriate replay logs into the learning pipeline. The paper also discusses practical integration: the posterior over build trees can drive adaptive AI behaviours such as counter‑strategy selection, dynamic difficulty adjustment, or personalized tutorial hints. Limitations include the need for a predefined set of candidate build trees – truly novel strategies that were not present in the training data may be mis‑identified – and the binary observation model, which does not capture unit counts or upgrade levels. Future work is suggested in extending the observation space, handling multi‑player cooperative scenarios, and coupling the recognizer with a reinforcement‑learning controller that updates its policy on‑the‑fly based on the inferred opponent plan.
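One way the posterior could drive counter-strategy selection is by maximising expected payoff. The sketch below is an assumption about how such an integration might look, not something the paper specifies: the counter-strategy names and the payoff table (estimated win rates per opponent build tree) are entirely hypothetical.

```python
def best_counter(posterior, payoff):
    """Pick the counter-strategy with the highest expected payoff under the posterior."""
    expected = {
        counter: sum(posterior[tree] * value for tree, value in row.items())
        for counter, row in payoff.items()
    }
    return max(expected, key=expected.get), expected


# Hypothetical posterior over opponent build trees.
posterior = {"gateway_only": 0.2, "gateway_core": 0.7, "forge_expand": 0.1}
# Hypothetical payoff table: payoff[counter][opponent_tree] = estimated win rate.
payoff = {
    "early_pressure": {"gateway_only": 0.4, "gateway_core": 0.3, "forge_expand": 0.8},
    "defensive_tech": {"gateway_only": 0.5, "gateway_core": 0.6, "forge_expand": 0.4},
}

choice, expected = best_counter(posterior, payoff)
```

Because the decision uses the whole posterior rather than only the most likely tree, a counter that does moderately well against several plausible plans can beat one that is tuned to a single guess.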

In summary, the authors present a clean, probabilistic solution to plan recognition in RTS games that is both data‑driven and computationally lightweight. By learning directly from replays, the model achieves high predictive quality, resilience to missing data, and real‑time applicability, making it a strong candidate for powering the next generation of adaptive, opponent‑aware game AI.
