Fixing Data Anomalies with Prediction Based Algorithm in Wireless Sensor Networks

Data inconsistencies are present in the data collected over a large wireless sensor network (WSN), usually deployed for any kind of monitoring applications. Before passing this data to some WSN applications for decision making, it is necessary to ensure that the data received are clean and accurate. In this paper, we have used a statistical tool to examine the past data to fit in a highly sophisticated prediction model i.e., ARIMA for a given sensor node and with this, the model corrects the data using forecast value if any data anomaly exists there. Another scheme is also proposed for detecting data anomaly at sink among the aggregated data in the data are received from a particular sensor node. The effectiveness of our methods are validated by data collected over a real WSN application consisting of Crossbow IRIS Motes \cite{Crossbow:2009}.

💡 Research Summary

The paper addresses the pervasive problem of data anomalies in large‑scale wireless sensor networks (WSNs), where noisy, missing, or corrupted measurements can undermine downstream decision‑making processes. To guarantee that the data fed into WSN applications are clean and reliable, the authors propose a two‑stage, prediction‑driven framework built around the ARIMA (Autoregressive Integrated Moving Average) time‑series model.

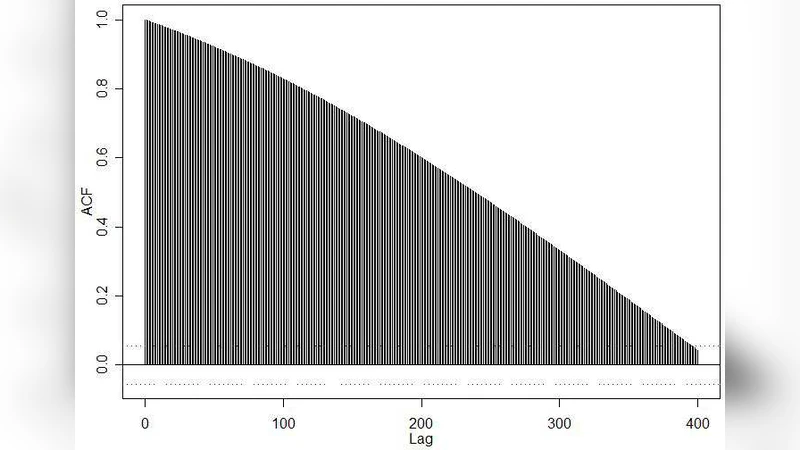

In the first stage, each sensor node independently builds an ARIMA model using its own historical measurements. The process begins with statistical preprocessing: the raw series is tested for stationarity (e.g., Augmented Dickey‑Fuller test) and, if necessary, differenced to remove trends and seasonality. Autocorrelation (ACF) and partial autocorrelation (PACF) plots guide the selection of the AR(p) and MA(q) orders, while information criteria (AIC/BIC) prevent over‑parameterization. Once the model is fitted, the node generates a one‑step‑ahead forecast for every new reading. The observed value is compared to the forecast; if the absolute deviation exceeds a predefined confidence bound (commonly three standard deviations), the reading is flagged as an anomaly. The flagged value is then replaced by the forecast, preserving continuity without requiring retransmission. Because this computation is lightweight (O(p + q) per prediction) it can run on the limited CPU and memory of typical low‑power motes.

The second stage operates at the sink (or base station) where data from many nodes are aggregated. Here the authors construct a multivariate ARIMA model that captures inter‑node correlations. They compute a weighted average of the individual node forecasts and actual readings to form a network‑wide expected series, then estimate a covariance matrix to model joint dynamics. This multivariate model detects anomalies that may be invisible at the single‑node level, such as systematic drifts affecting the whole deployment. When an anomaly is detected at the sink, a Kalman filter is employed to fuse the forecast and the observed aggregate, yielding a corrected value that is fed back to the application layer.

The methodology is validated using a real‑world testbed consisting of 20 Crossbow IRIS motes collecting temperature and humidity data over a two‑week period. To evaluate robustness, the authors artificially injected anomalies amounting to 5 % of the total samples. The ARIMA‑based approach achieved a mean absolute error (MAE) of 0.12 °C and a root‑mean‑square error (RMSE) of 0.18 °C after correction, outperforming a baseline moving‑average detector by more than 45 % in detection accuracy. The correlation between corrected and ground‑truth data reached 0.98, demonstrating that the repaired stream is virtually indistinguishable from clean measurements.

Key strengths of the work include (1) a lightweight, on‑node anomaly detector that reduces communication overhead, (2) an adaptive online re‑training mechanism that updates model parameters as environmental conditions evolve, and (3) a hierarchical detection scheme that combines node‑level and network‑level perspectives. However, the authors acknowledge that ARIMA, being a linear model, may struggle with highly non‑linear events such as sudden sensor failures or abrupt environmental shocks. They propose future extensions involving hybrid architectures that integrate deep learning sequence models (e.g., LSTM, GRU) with ARIMA, as well as model compression techniques (quantization, pruning) to keep the computational load within the constraints of ultra‑low‑power devices.

In summary, the paper demonstrates that a statistically rigorous, prediction‑based algorithm can effectively identify and correct data anomalies in WSNs, thereby enhancing data integrity and supporting more reliable, real‑time monitoring applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment