Three Tier Encryption Algorithm For Secure File Transfer

This encryption algorithm is designed for secure file transfer on low-privilege servers as well as in secured environments. The methodology is intended for data centers and other critical data-transaction areas of an organisation: the database administrator or system administrators run the encoding side of the software, and their trusted clients hold the decoding side. The software will not be distributed to unauthorised customers.

💡 Research Summary

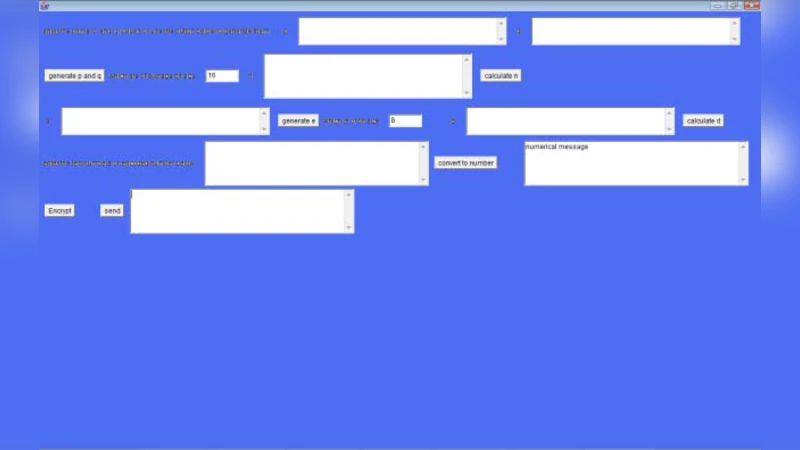

The paper proposes a “Three Tier Encryption Algorithm” intended to secure file transfers on low‑privilege servers and across wide‑area networks. The authors describe a five‑step process: (1) a modified RSA encryption using user‑defined primes (P and Q) and a concatenated SALT value; (2) conversion of the RSA ciphertext into binary, octal, or hexadecimal representation; (3) application of digital line or block encoding schemes such as 4B/5B, NRZ, Manchester, etc., to rearrange the bits; (4) a reverse conversion back to numeric form; and (5) substitution of the resulting number into a mathematical series (the paper uses a truncated sine series) and rounding the result to obtain the final ciphertext.

In the RSA step the authors illustrate the method with tiny primes (11 and 13) to produce N = 143 and φ(N) = 120, then choose D = 3 and compute E = 47 as the public exponent. The plaintext “HELLO” is converted to its ASCII decimal string (7269767679), a SALT of 34 is appended, and the resulting number is multiplied by the RSA encryption value to yield 9 886 884 043 440. The paper claims that a real implementation would use 2048-bit primes, but the example does not reflect that security level.
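The toy parameters quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch, not the authors' code: it takes P, Q, D and E as reported in this summary, and the helper name `ascii_digits` is introduced here purely for illustration.

```python
from math import gcd

# Toy parameters exactly as quoted in the summary above.
P, Q = 11, 13
D, E = 3, 47

N = P * Q                  # modulus
phi = (P - 1) * (Q - 1)    # Euler's totient of N


def ascii_digits(text: str) -> str:
    """Concatenate the decimal ASCII codes of each character (illustrative helper)."""
    return "".join(str(ord(ch)) for ch in text)


print(N, phi)                   # 143 120
print(gcd(D, phi))              # 3  -> D shares a factor with phi(N)
print((D * E) % phi)            # 21 -> a valid RSA key pair would give 1
print(ascii_digits("HELLO"))    # 7269767679
print(ascii_digits("Hello"))    # 72101108108111
```

As quoted, D and E do not satisfy D·E ≡ 1 (mod φ(N)), so they would not decrypt each other's output under textbook RSA. The digit string 7269767679 corresponds to the uppercase spelling of the plaintext.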

The second step merely rewrites the RSA output in another base; this does not add cryptographic strength. The third step applies various line‑coding techniques that are traditionally used for signal integrity, not for confidentiality. By rearranging bits, the authors aim to increase the difficulty of reverse‑engineering, but the process is deterministic and can be undone if the coding scheme is known.
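To make the reversibility point concrete, here is a minimal sketch that re-bases the quoted RSA output and then applies Manchester coding. The bit-pair mapping (0 → "10", 1 → "01") is one common convention and is an assumption here; the paper's exact parameters are not given in this summary, and the function names are illustrative.

```python
def manchester_encode(bits: str) -> str:
    """Map each bit to a two-symbol pair (assumed convention: 0 -> '10', 1 -> '01')."""
    return "".join("01" if b == "1" else "10" for b in bits)


def manchester_decode(symbols: str) -> str:
    """Invert manchester_encode; rejects pairs that are not valid Manchester symbols."""
    if len(symbols) % 2:
        raise ValueError("symbol stream must have even length")
    out = []
    for i in range(0, len(symbols), 2):
        pair = symbols[i:i + 2]
        if pair == "01":
            out.append("1")
        elif pair == "10":
            out.append("0")
        else:
            raise ValueError(f"invalid Manchester pair: {pair!r}")
    return "".join(out)


value = 9886884043440          # RSA-step output quoted in the summary

# Step 2: base conversion is a change of notation, nothing more.
hex_form = format(value, "x")
bits = format(value, "b")
assert int(hex_form, 16) == value
assert int(bits, 2) == value

# Step 3: line coding is a fixed, keyless mapping, so it inverts exactly.
encoded = manchester_encode(bits)
recovered = int(manchester_decode(encoded), 2)
assert recovered == value
```

Neither transformation involves a secret key, so anyone who knows (or guesses) the base and the coding scheme recovers the input exactly.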

The fourth step reverses the encoding, producing a different numeric value (10205099 in the example) because of the bit-reordering. The final step substitutes this value into a sine series truncated after the third non-zero term (N = 3). The series evaluation involves floating-point arithmetic and rounding, and produces a final integer (176887364014124447176). This step introduces numerical approximation errors and makes the ciphertext dependent on the choice of series, its truncation order, and the rounding rule.
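The sensitivity to truncation order and number format can be illustrated with a short sketch. It evaluates partial sums of the Maclaurin series for sine exactly, using rational arithmetic, and does not attempt to reproduce the paper's final figure, since the exact series form and rounding rule are not specified in this summary. The function name `sine_series` is illustrative.

```python
from fractions import Fraction
from math import factorial


def sine_series(x: int, terms: int) -> Fraction:
    """Exact partial sum of sin(x) = x - x^3/3! + x^5/5! - ... with `terms` terms."""
    total = Fraction(0)
    for k in range(terms):
        n = 2 * k + 1
        total += Fraction((-1) ** k * x ** n, factorial(n))
    return total


x = 10205099  # value quoted for the fourth step

# The rounded result changes completely with the truncation order.
for terms in (2, 3, 4):
    print(terms, round(sine_series(x, terms)))

# The final integer quoted in the summary exceeds 2**53, so a 64-bit float
# cannot hold it exactly: converting to float and back changes the value.
final = 176887364014124447176
print(int(float(final)) == final)  # False
```

Any implementation that evaluates this step in double precision therefore cannot round-trip values of this size, which is one way the approximation errors mentioned above arise.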

Performance measurements are limited to a single 11 KB file: RSA (2048‑bit) takes about 45 seconds, and the remaining steps about 30 seconds on a modest dual‑core Xeon 1.73 GHz system with 2 GB RAM. The authors recommend a specific HP ProLiant server configuration to achieve acceptable throughput.

The paper argues that an attacker would need to discover (a) the RSA modulus and private exponent, (b) the exact base conversion used, (c) the line‑coding scheme and its parameters, (d) the reverse‑conversion method, and (e) the mathematical series and its order. The authors claim that this layered “security‑through‑obscurity” would take years to break.

Critical analysis reveals several fundamental weaknesses. First, the RSA component, even if implemented with proper 2048-bit primes, is the only part whose security is well studied; the subsequent steps add no cryptographic hardness and may even weaken security if implemented incorrectly. Second, using user-defined primes and SALT values without a rigorous key-generation protocol undermines the RSA security model. Third, base conversion and line-coding are reversible transformations that do not increase entropy; they merely increase implementation complexity. Fourth, the mathematical-series step is non-standard, introduces rounding errors, and its security relies entirely on the secrecy of the series choice, a classic example of security through obscurity, which is discouraged in modern cryptography. Fifth, the performance figures are outdated; modern hardware can perform RSA-2048 encryption in milliseconds, so the reported 45-second overhead is not representative of current systems. Finally, the paper lacks any formal security analysis, threat model, or comparison with established standards such as AES-GCM with RSA-OAEP for key exchange.
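The performance point can be sanity-checked with a rough timing sketch. This is not a real RSA implementation: the modulus below is a random odd 2048-bit integer rather than a product of two primes, and `d_like` is a hypothetical full-size exponent standing in for a private key. The cost of modular exponentiation depends on operand sizes, so the timings give an order-of-magnitude indication only.

```python
import secrets
import time

BITS = 2048

# Stand-in modulus: random odd 2048-bit integer (NOT a genuine RSA modulus).
n = secrets.randbits(BITS) | (1 << (BITS - 1)) | 1
m = secrets.randbelow(n)          # stand-in message representative
e = 65537                         # widely used public exponent (assumed here)
d_like = secrets.randbits(BITS) | (1 << (BITS - 1))  # hypothetical full-size exponent

start = time.perf_counter()
c = pow(m, e, n)                  # cost comparable to an RSA public-key operation
public_seconds = time.perf_counter() - start

start = time.perf_counter()
_ = pow(c, d_like, n)             # cost comparable to an RSA private-key operation
private_seconds = time.perf_counter() - start

print(f"public-exponent op:    {public_seconds * 1e3:.3f} ms")
print(f"full-size-exponent op: {private_seconds * 1e3:.3f} ms")
```

Even in pure Python, both operations typically finish in well under a second on commodity hardware, which is orders of magnitude below the 45 seconds reported for the RSA step.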

In conclusion, while the proposed “Three Tier Encryption” is an interesting academic exercise in combining disparate data‑transformation techniques, it does not provide a practical, provably secure alternative to existing, well‑vetted cryptographic protocols. Organizations seeking to protect data in transit should rely on standard, peer‑reviewed algorithms and proper key management rather than on layered obscurity mechanisms that have not been subjected to rigorous cryptanalysis.
