The random initialization of weights of a multilayer perceptron makes it possible to model its training process as a Las Vegas algorithm, i.e. a randomized algorithm which stops when some required training error is obtained, and whose execution time is a random variable. This modeling is used to perform a case study on a well-known pattern recognition benchmark: the UCI Thyroid Disease Database. Empirical evidence is presented of the training time probability distribution exhibiting a heavy tail behavior, meaning a big probability mass of long executions. This fact is exploited to reduce the training time cost by applying two simple restart strategies. The first assumes full knowledge of the distribution yielding a 40% cut down in expected time with respect to the training without restarts. The second, assumes null knowledge, yielding a reduction ranging from 9% to 23%.

Deep Dive into Exploiting Heavy Tails in Training Times of Multilayer Perceptrons: A Case Study with the UCI Thyroid Disease Database.

The random initialization of weights of a multilayer perceptron makes it possible to model its training process as a Las Vegas algorithm, i.e. a randomized algorithm which stops when some required training error is obtained, and whose execution time is a random variable. This modeling is used to perform a case study on a well-known pattern recognition benchmark: the UCI Thyroid Disease Database. Empirical evidence is presented of the training time probability distribution exhibiting a heavy tail behavior, meaning a big probability mass of long executions. This fact is exploited to reduce the training time cost by applying two simple restart strategies. The first assumes full knowledge of the distribution yielding a 40% cut down in expected time with respect to the training without restarts. The second, assumes null knowledge, yielding a reduction ranging from 9% to 23%.

The training time of a Multilayer Perceptron (MLP), understood as the time needed to obtain some required training error, is a random variable which depends on the random initialization of the MLP weights.

These weights are commonly initialized according to a given probability distribution, having this choice a significant impact on the training time distribution (see Delashmit & Manry 2002, Duch, et al. 1997, LeCun, et al. 1998). To address this problem, some weight initialization methods have been proposed (e.g. Duch et al. 1997, Weymaere & Martens 1994). They attempt to reduce the training time by applying different probability distributions on the initial weights of the MLP based on knowledge about the training set.

In this correspondence, a simpler and more general approach which does not make use of the mentioned information is presented. To do this, we model the learning process of a MLP as a las Vegas algorithm (Luby, et al. 1993), i.e. a randomized algorithm which meets three conditions: (i) it stops when some pre-defined training error δ is obtained, (ii) its only measurable observation is the training time, and (iii) it only has either full or null knowledge about the training time probability distribution.

Using this modeling, we perform a case study with the UCI Thyroid Disease database1 , revealing that the time distribution for learning this pattern recognition benchmark belongs to the heavy tail distribution family. This type of distributions is regarded as non-standard for its big probability mass of arbitrary long values.

We make use of formal and experimental results which prove that the expected execution time of a random algorithm with such underlying distribution can be reduced by using restart strategies (Gomes 2003). This work adapts these strategies to the MLP context: the MLP is trained during a number of epochs t 1 . If the required training error δ is achieved before t 1 , then the execution finishes. Otherwise, we initialize again the weights in a randomized way, and re-train the MLP during t 2 epochs. The process is iteratively repeated until the training error δ is reached, being t i the restart threshold (in epochs) after i -1 restarts have been performed.

Two different strategies are applied for the determination of optimal restarting times. The first assumes full knowledge of the distribution yielding a 40% cut down in expected time with respect to the training without restarts. The second assumes null knowledge, yielding a reduction ranging from 9% to 23%.

The rest of the paper is organized as follows. Section 2 presents the Thyroid Disease database and provides evidence of heavy tail behavior when a MLP is trained on it. Section 3 tests the condition to be satisfied by the probability distribution to profit from restart strategies, providing an empirical evaluation of two strategies on the particular case study. Finally, some conclusions and future research lines are given in section 4.

To motivate the use of restarts in MLP learning, we firstly present the existence of a high variability in its training time, indicative of an underlying heavy tail behavior. The evaluation was performed using the UCI Thyroid Disease database, as a case study.

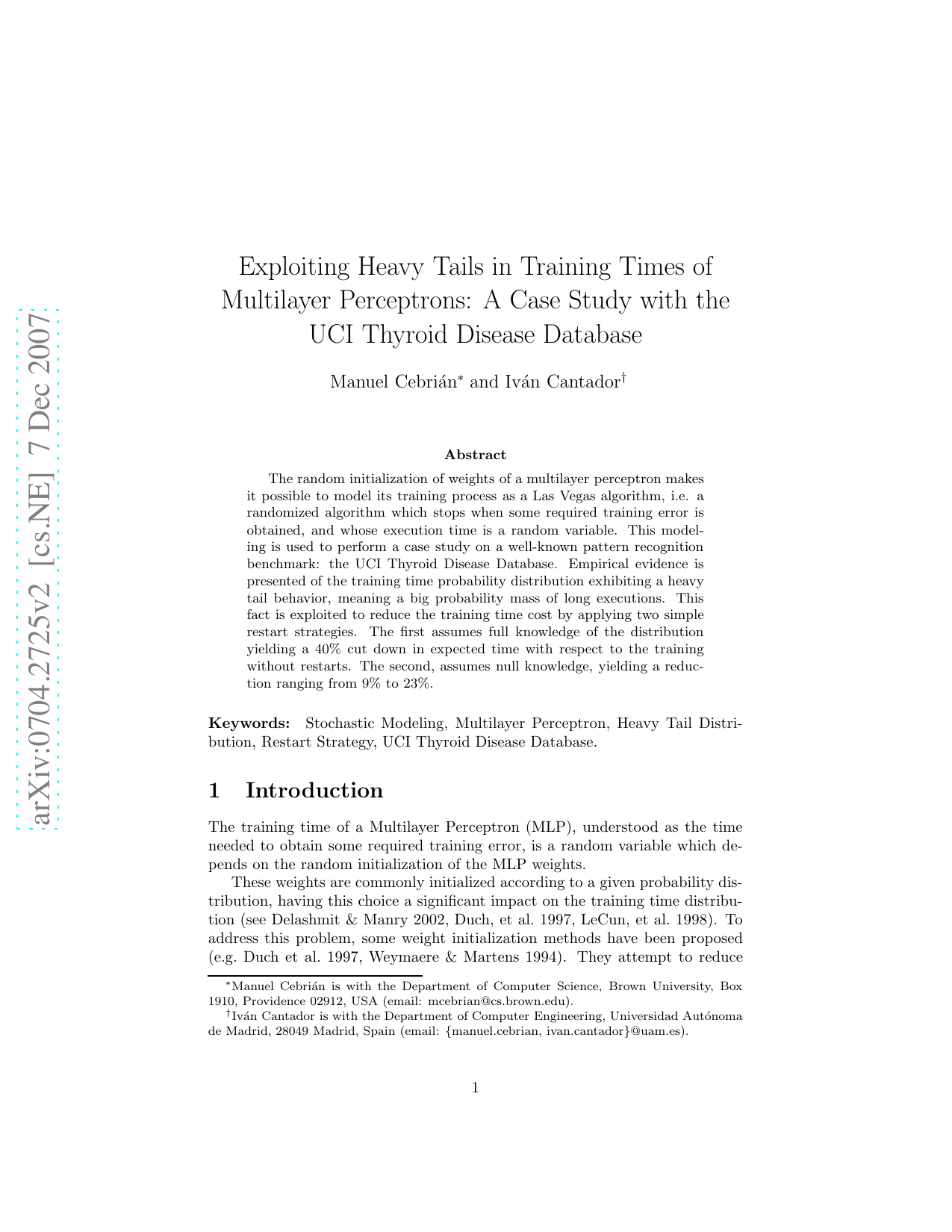

Table 1 shows the expectations, deviations (and its ratio) of the numbers of epochs T spent in building a single hidden layer MLP with n = 1, . . . , 8 units. The MLP was trained using the well-known Back- target training error δ = 0.02. The results shown were computed using 10-fold cross validation.

The obtained deviations are very large respect to the expectations for most of the architectures. For the rest of the experiments, we shall use a MLP with n = 3 hidden units, which has the highest relative variability. This will serve as a proof of concept, although the same behavior is observed in MLPs with other number of hidden units.

In the following, we give visual evidence that T is heavy tailed, i.e. that the probability of the training time T being greater than some number of epochs t has polynomial decay, viz. P [T > t] ∼ C.t -α , where α ∈ (0, 2), C is some constant, and t > 0.

Figure 1 presents a log-log plot of P [T > t] for the 10% largest values (t > 3, 000). The plot confirms the polynomial decay by displaying a straight line with slope -α. This is because, for sufficiently large t, log P

Finally, we verify that α belong to the (0, 2) interval by computing the Hill’s (1975) estimator:

where T m,1 ≤ T m,2 ≤ . . . ≤ T m,m are the m ordered training completion times, and r < m is a cutoff that allows to observe only the highest values (the tail). We use the typical cutoff r = 0.1m and obtain αr = 1.942, which is consistent with our hypothesis.

This polynomial decay, which yields a big probability mass for long executions, is due to the fact that certain initial weights entail a convergence to local minima of the target function, requiring very long (even infinite) training periods, while others yield a convergen

…(Full text truncated)…

This content is AI-processed based on ArXiv data.