Hardware Engines for Bus Encryption: A Survey of Existing Techniques

The widening spectrum of applications and services provided by portable and embedded devices brings a new dimension of security concerns. Most of these embedded systems (pay-TV, PDAs, mobile phones, etc.) rely on external memory. The main problem is that data and instructions are constantly exchanged in the clear between the CPU and memory (RAM) over the bus. This memory may hold confidential data, such as commercial software or private content, that either the end user or the content provider wants to protect. The goal of this paper is to describe clearly the problem of processor-memory bus communication in this respect and the existing techniques that secure this channel through encryption. The performance overheads implied by these solutions are discussed extensively.

💡 Research Summary

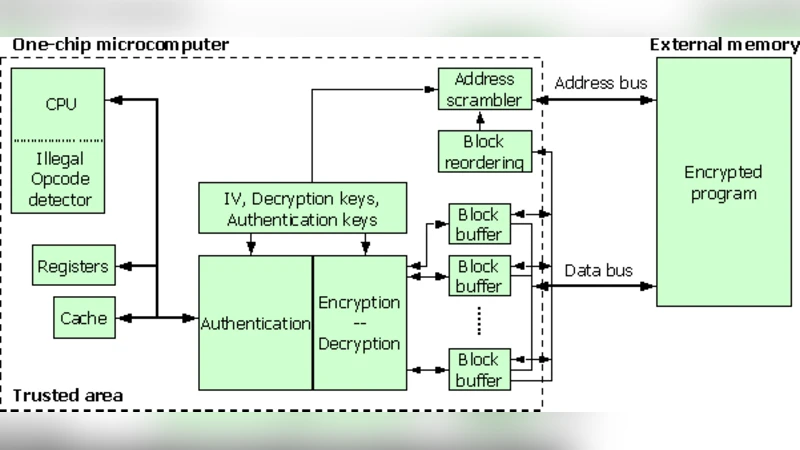

The paper addresses a critical security gap in modern portable and embedded devices that rely on external DRAM: the clear‑text transfer of data and instructions over the processor‑memory bus. Because the bus is physically accessible, an adversary can sniff, inject, or tamper with memory traffic, exposing proprietary software, personal data, or digital content. The authors first define a threat model that includes bus snooping, memory replacement attacks, and side‑channel techniques such as power and electromagnetic analysis. They then categorize existing protection mechanisms into three main dimensions: cryptographic algorithm, placement of the encryption engine, and key‑management strategy.

In terms of algorithms, the survey covers stream ciphers, block ciphers (primarily AES), and tweakable block modes such as XTS. Stream ciphers offer minimal latency, but their security collapses if a keystream segment is ever reused, because XOR-ing two ciphertexts produced with the same keystream yields the XOR of the two plaintexts. Block ciphers provide strong confidentiality and, when combined with authentication (e.g., GCM), also guarantee integrity, but they add latency and operate on fixed-size blocks, so data smaller than a block must be padded. Tweakable modes incorporate the memory address into the encryption, so identical plaintext stored at different locations yields different ciphertext, which prevents pattern analysis across locations.
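The keystream-reuse weakness of stream ciphers can be made concrete in a few lines of Python. This is a minimal sketch, not taken from the paper: random bytes from `secrets.token_bytes` stand in for the output of a real stream-cipher keystream generator, and the two memory words are hypothetical. It shows that a bus snooper who records two ciphertexts produced with the same keystream can cancel the keystream with XOR and recover one plaintext from the other.

```python
import secrets


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    if len(a) != len(b):
        raise ValueError("operands must have the same length")
    return bytes(x ^ y for x, y in zip(a, b))


# Hypothetical keystream: random bytes standing in for the output of a
# stream-cipher keystream generator for one 8-byte memory word.
keystream = secrets.token_bytes(8)

old_word = b"PIN=1234"  # value written to memory first
new_word = b"PIN=9876"  # later value written with the SAME keystream

c_old = xor_bytes(old_word, keystream)  # ciphertext observed on the bus
c_new = xor_bytes(new_word, keystream)  # ciphertext observed on the bus

# The attacker never learns the keystream, yet XOR-ing the two
# ciphertexts cancels it and leaves the XOR of the two plaintexts.
leak = xor_bytes(c_old, c_new)

# Knowing (or guessing) one plaintext reveals the other.
recovered = xor_bytes(leak, old_word)
print(recovered)  # b'PIN=9876'
```

Memory-encryption designs built on stream ciphers therefore need a keystream that changes with every write to a given address, for example by mixing in a per-block counter.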

The placement of the encryption hardware is examined in two principal architectures. The first embeds a dedicated encryptor/decryptor inside the memory controller, applying encryption inline to every memory transaction. This approach guarantees uniform protection but adds a fixed latency penalty to the memory path. The second places the cryptographic engine at the cache-line level: a line is decrypted as it is filled from memory on a cache miss and encrypted when a dirty line is written back, so cache hits incur no cryptographic cost. This cache-aware design can hide most of the encryption cost when the hit rate is high, but it requires substantial modifications to cache-coherence protocols and line-replacement policies. The paper also mentions self-encrypting memory modules as a third, less common option.
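A toy model helps show why a cache-level engine hides most of its cost. The sketch below is an illustrative assumption rather than a design from the survey: a fully associative, write-back, write-allocate cache with LRU replacement, where the hypothetical class `CacheLevelCryptoModel` only counts decryptions (line fills on misses) and encryptions (dirty write-backs). Cache hits never reach the crypto engine.

```python
from collections import OrderedDict


class CacheLevelCryptoModel:
    """Counts the work a cache-level encryption engine would do (toy model).

    Fully associative, write-back, write-allocate cache with LRU replacement:
    - miss: the line is fetched from memory and DECRYPTED as it is filled
    - hit: the line is already plaintext inside the cache, so no crypto
    - evicting a dirty line: the line is ENCRYPTED as it is written back
    """

    def __init__(self, num_lines: int, line_size: int = 64):
        self.num_lines = num_lines
        self.line_size = line_size
        self.lines = OrderedDict()  # line tag -> dirty flag, oldest first
        self.hits = self.misses = 0
        self.decryptions = self.encryptions = 0

    def access(self, address: int, write: bool = False) -> None:
        tag = address // self.line_size
        if tag in self.lines:
            self.hits += 1
            self.lines.move_to_end(tag)  # mark as most recently used
        else:
            self.misses += 1
            if len(self.lines) >= self.num_lines:
                _, dirty = self.lines.popitem(last=False)  # evict LRU line
                if dirty:
                    self.encryptions += 1  # write-back leaves the chip encrypted
            self.decryptions += 1  # line fill arrives encrypted
            self.lines[tag] = False
        if write:
            self.lines[tag] = True  # line now differs from memory


# Small working set (2 lines) that fits in a 4-line cache.
cache = CacheLevelCryptoModel(num_lines=4)
for i in range(100):
    cache.access((i % 2) * 64, write=(i % 10 == 0))
print(cache.hits, cache.misses, cache.decryptions, cache.encryptions)  # 98 2 2 0

# Streaming writes over 8 lines overflow the cache: every fill and eviction pays.
streaming = CacheLevelCryptoModel(num_lines=4)
for i in range(8):
    streaming.access(i * 64, write=True)
print(streaming.hits, streaming.misses, streaming.decryptions, streaming.encryptions)  # 0 8 8 4
```

With a working set that fits in the cache, 100 accesses cost only two decryptions; a streaming pattern that overflows the cache pays for every fill and every dirty eviction.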

Key management is identified as a decisive factor for practical deployment. Most surveyed solutions rely on a secure boot process that derives a master key from a TPM, a Physical Unclonable Function (PUF), or a dedicated hardware security module. The master key is then provisioned to the encryption engine over a protected channel; mechanisms for key rollover, revocation, and secure erasure are also discussed. The authors stress that any exposure of the key, whether on the bus or through side-channel leakage, would defeat the entire scheme, and they recommend constant-power or masking techniques to limit such leakage.
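Deriving working keys from a single master secret is one common way to support key rollover. The sketch assumes HKDF (RFC 5869) with SHA-256, built only from Python's `hmac` and `hashlib` modules; the survey does not prescribe this KDF, and `memory_key`, the `b"bus-encryption"` label, and the 32-bit epoch encoding are hypothetical choices. Incrementing the epoch yields a fresh key without ever exporting the master secret.

```python
import hashlib
import hmac

HASH_LEN = 32  # SHA-256 output size in bytes


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF as specified in RFC 5869, instantiated with HMAC-SHA256."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested length too large for HKDF-SHA256")
    # Extract: concentrate the input keying material into a pseudorandom key.
    prk = hmac.new(salt or bytes(HASH_LEN), ikm, hashlib.sha256).digest()
    # Expand: T(i) = HMAC(PRK, T(i-1) || info || i), concatenated and truncated.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def memory_key(master_secret: bytes, epoch: int) -> bytes:
    """Hypothetical per-epoch bus-encryption key; bump `epoch` to roll over."""
    info = b"bus-encryption" + epoch.to_bytes(4, "big")
    return hkdf_sha256(master_secret, salt=b"", info=info)


# Placeholder only: on a real device the master secret would come from a
# PUF, TPM, or HSM and stay inside the chip, never in software like this.
master = bytes(32)
k0 = memory_key(master, epoch=0)
k1 = memory_key(master, epoch=1)
print(len(k0), k0 != k1)  # 32 True
```

Separating the labels used for derivation keeps keys for different epochs (or different purposes, such as encryption and authentication) independent even though they share one root secret.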

Performance evaluation is presented using four metrics: latency, throughput, silicon area, and power consumption. Stream-cipher-based designs typically add 1–2 clock cycles per memory access, whereas block-cipher implementations incur 5–10 cycles. Area figures range from roughly 2,000–3,000 gate equivalents (GE) for a full AES-128 core to 500–800 GE for lightweight ciphers such as PRESENT or Simon. Power consumption scales with the rate of cryptographic operations; in high-bandwidth DDR4/DDR5 systems the cryptographic engine can consume 5–10% of total system power. Cache-aware designs can keep the overall performance loss below 2% when hit rates exceed 80%.
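The interaction between hit rate and per-access crypto latency can be approximated with the classic average-memory-access-time model, AMAT = hit time + miss rate × (miss penalty + crypto latency), assuming the crypto latency is paid only on misses as in a cache-level engine. The parameters below (1-cycle hit, 100-cycle miss penalty, 2 vs. 10 extra cycles) are illustrative assumptions, not figures reported by the surveyed designs. The model is also pessimistic because it ignores latency hiding, such as precomputing keystream while the memory fetch is in flight.

```python
def amat(hit_rate: float, hit_cycles: float, miss_penalty: float,
         crypto_cycles: float = 0.0) -> float:
    """Average memory access time in cycles for one cache level.

    Assumes a cache-level engine: crypto latency is added only on misses
    and is not overlapped with the memory fetch (a pessimistic assumption).
    """
    miss_rate = 1.0 - hit_rate
    return hit_cycles + miss_rate * (miss_penalty + crypto_cycles)


def crypto_slowdown(hit_rate: float, hit_cycles: float, miss_penalty: float,
                    crypto_cycles: float) -> float:
    """Relative increase in AMAT caused by the encryption engine."""
    base = amat(hit_rate, hit_cycles, miss_penalty)
    return amat(hit_rate, hit_cycles, miss_penalty, crypto_cycles) / base - 1.0


# Illustrative parameters: 1-cycle hit, 100-cycle miss penalty,
# 2 extra cycles (stream-cipher-like) vs. 10 extra cycles (block-cipher-like).
for hit_rate in (0.80, 0.95):
    for name, cycles in (("stream", 2), ("block", 10)):
        slowdown = crypto_slowdown(hit_rate, 1, 100, cycles)
        print(f"hit rate {hit_rate:.0%}, {name} cipher: +{slowdown:.1%}")
```

Under these assumptions the loop reports +1.9% (stream) and +9.5% (block) at an 80% hit rate, and +1.7% and +8.3% at 95%: the relative cost falls as the hit rate rises, and the engine's own latency matters as much as the hit rate.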

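Security analysis shows that combining encryption with authentication (e.g., AES-GCM) protects both confidentiality and integrity, while tweakable, address-dependent modes prevent splicing (relocation) attacks in which valid ciphertext is copied from one address to another. An address tweak alone does not stop replay of a stale value at the same address; that additionally requires freshness, such as per-block version counters the attacker cannot roll back. Countermeasures against side-channel attacks, namely randomized clocks, power-balancing circuits, and data masking, are also surveyed.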
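The sketch below shows how binding the authentication tag to both the memory address and a per-block version counter defends against two distinct attacks: splicing (copying a valid ciphertext and tag to another address) and replay (restoring an older valid value at the same address). It is a toy under explicit assumptions: HMAC-SHA256 stands in for an AES-based PRF and MAC such as AES-GCM, the pad for each write is derived from (address, version) in counter-mode style so it is never reused, and the version table is assumed to live in trusted on-chip storage. `ProtectedMemory`, `IntegrityError`, and `tampering_detected` are hypothetical names.

```python
import hashlib
import hmac
import secrets

BLOCK = 32  # bytes per protected block in this toy model (one SHA-256 output)


class IntegrityError(Exception):
    """Raised when a block read from external memory fails authentication."""


class ProtectedMemory:
    """Toy authenticated memory encryption bound to address and version.

    `off_chip` models attacker-controlled DRAM; `versions` models trusted
    on-chip counters that the attacker cannot roll back.
    """

    def __init__(self, key: bytes):
        # Separate keys for encryption and authentication, derived from one key.
        self.enc_key = hmac.new(key, b"enc", hashlib.sha256).digest()
        self.mac_key = hmac.new(key, b"mac", hashlib.sha256).digest()
        self.off_chip = {}  # addr -> (ciphertext, tag); attacker can edit this
        self.versions = {}  # addr -> write counter; trusted on-chip state

    @staticmethod
    def _nonce(addr: int, version: int) -> bytes:
        return addr.to_bytes(8, "big") + version.to_bytes(8, "big")

    def _pad(self, addr: int, version: int) -> bytes:
        # Counter-mode-style pad: unique per (address, version), never reused.
        return hmac.new(self.enc_key, self._nonce(addr, version), hashlib.sha256).digest()

    def _tag(self, addr: int, version: int, ct: bytes) -> bytes:
        # The MAC covers the address and version as well as the ciphertext.
        return hmac.new(self.mac_key, self._nonce(addr, version) + ct, hashlib.sha256).digest()

    def write(self, addr: int, data: bytes) -> None:
        if len(data) != BLOCK:
            raise ValueError(f"expected a {BLOCK}-byte block")
        version = self.versions.get(addr, 0) + 1
        self.versions[addr] = version
        ct = bytes(d ^ p for d, p in zip(data, self._pad(addr, version)))
        self.off_chip[addr] = (ct, self._tag(addr, version, ct))

    def read(self, addr: int) -> bytes:
        ct, tag = self.off_chip[addr]
        version = self.versions[addr]
        if not hmac.compare_digest(tag, self._tag(addr, version, ct)):
            raise IntegrityError(f"authentication failed at {addr:#x}")
        return bytes(c ^ p for c, p in zip(ct, self._pad(addr, version)))


def tampering_detected(memory: ProtectedMemory, addr: int) -> bool:
    try:
        memory.read(addr)
    except IntegrityError:
        return True
    return False


mem = ProtectedMemory(secrets.token_bytes(32))
mem.write(0x1000, b"A" * BLOCK)
mem.write(0x2000, b"B" * BLOCK)
print(mem.read(0x1000) == b"A" * BLOCK)  # True: untouched block verifies

# Splicing: copy (ciphertext, tag) of 0x1000 over 0x2000.
mem.off_chip[0x2000] = mem.off_chip[0x1000]
print(tampering_detected(mem, 0x2000))  # True: address is bound into the tag

# Replay: save an old value, let the CPU overwrite it, then restore the old one.
mem.write(0x3000, b"1" * BLOCK)
stale = mem.off_chip[0x3000]
mem.write(0x3000, b"2" * BLOCK)
mem.off_chip[0x3000] = stale
print(tampering_detected(mem, 0x3000))  # True: on-chip version has moved on
```

Keeping every counter on chip does not scale to large memories; practical designs store counters off chip and protect them with a hash (Merkle) tree, which this sketch omits.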

Finally, the paper outlines open research challenges: (1) designing pipelined, multi‑core‑friendly encryption engines that scale with emerging high‑speed memory interfaces; (2) integrating lightweight ciphers with dynamic key‑scheduling to reduce area and energy while avoiding key reuse; (3) re‑architecting cache‑coherence and memory‑consistency protocols to incorporate cryptographic verification without sacrificing scalability; and (4) standardizing secure‑boot and runtime key‑update interfaces for heterogeneous platforms. By addressing these issues, bus encryption can become a practical, low‑overhead security layer for the next generation of mobile, automotive, and IoT devices.
