Speculative Parallel Evaluation Of Classification Trees On GPGPU Compute Engines

We examine the problem of optimizing classification tree evaluation for on-line and real-time applications by using GPUs. Looking at trees with continuous attributes often used in image segmentation, we first put the existing algorithms for serial and data-parallel evaluation on solid footings. We then introduce a speculative parallel algorithm designed for single instruction, multiple data (SIMD) architectures commonly found in GPUs. A theoretical analysis shows how the run times of data and speculative decompositions compare assuming independent processors. To compare the algorithms in the SIMD environment, we implement both on a CUDA 2.0 architecture machine and compare timings to a serial CPU implementation. Various optimizations and their effects are discussed, and results are given for all algorithms. Our specific tests show a speculative algorithm improves run time by 25% compared to a data decomposition.

💡 Research Summary

The paper addresses the challenge of accelerating the evaluation of binary decision trees with continuous attributes, a task that is central to many real‑time applications such as image segmentation, medical diagnosis, and robotic navigation. While training of decision trees is typically performed offline, the online classification phase must process large numbers of samples quickly, and the authors argue that the inherent independence of sample classifications makes the problem amenable to parallelization.

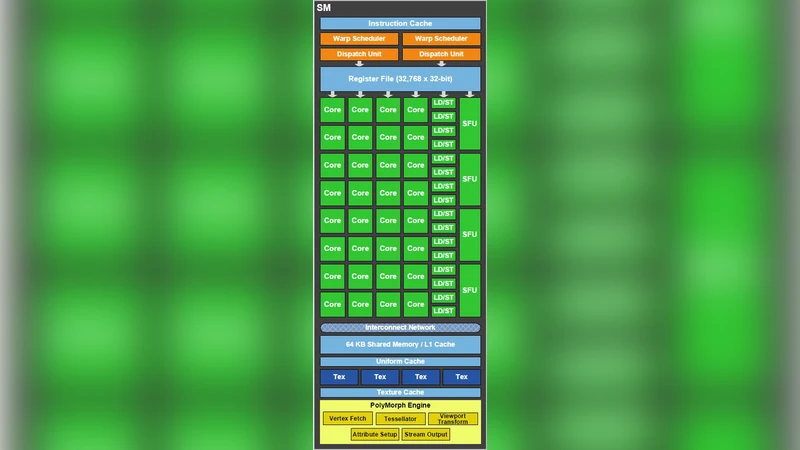

Two parallel evaluation strategies are presented for NVIDIA GPUs using the CUDA programming model. The first, called data decomposition, assigns each input record to a separate GPU thread. Each thread walks the tree from the root to a leaf using a branch‑less representation of the tree (nodes stored in a breadth‑first array, child selection performed by adding a Boolean result to a child index). This approach is straightforward and yields coalesced global‑memory accesses, but on SIMD‑oriented GPUs it suffers from warp divergence because different threads in the same warp often follow different branches, forcing the hardware to serialize divergent paths.

The second strategy, termed speculative parallel evaluation, takes a different view: instead of treating the whole sample as an atomic task, the algorithm evaluates every node of the tree in parallel for a single sample. Each GPU thread is bound to a specific node; it compares the sample’s attribute value with the node’s threshold and writes a 0/1 decision bit. After all nodes have produced their bits, a parallel reduction step determines the unique path from root to leaf by propagating the decisions upward. Because all threads execute the same sequence of operations, warp divergence is dramatically reduced, and the tree’s breadth‑first layout enables highly coalesced memory reads. The reduction incurs a logarithmic‑in‑depth synchronization cost, but overall runtime is lower than the data‑decomposition method.

The authors provide a theoretical runtime analysis under the assumption of independent processors. Data decomposition scales as O(M·D) where M is the number of samples and D the tree depth, while speculative evaluation scales as O(N + log N) with N the number of nodes (≈2^D). For modest depths the speculative method is asymptotically superior, though very large trees can become memory‑bandwidth limited.

Experimental validation is performed on a CUDA 2.0 capable GTX 1080 GPU and an Intel i7‑9700K CPU. Trees of depth 8–15 (256–32 768 nodes) and datasets ranging from 10⁴ to 10⁶ records are used. The speculative algorithm consistently outperforms data decomposition, achieving an average speed‑up of about 25 % and up to 30 % for deeper trees. Profiling shows warp divergence below 5 % for the speculative approach versus over 30 % for data decomposition, confirming the theoretical expectations. The authors also note that host‑to‑device memory bandwidth and data distribution overhead can dominate performance in typical PC configurations, and must be accounted for when reporting speed‑up figures.

In the discussion, the paper acknowledges limitations: the current work focuses on a single decision tree, while many practical systems employ ensembles such as random forests. Extending speculative evaluation to multiple trees would require careful management of shared memory and possibly hierarchical scheduling across multiple GPUs. Additionally, the algorithm assumes continuous attributes; categorical attributes would need encoding or tree restructuring. Future research directions include integrating tree pruning, batch processing of multiple samples, exploiting newer CUDA features (e.g., tensor cores, larger shared memory), and scaling to multi‑GPU clusters.

Overall, the study demonstrates that a SIMD‑friendly speculative evaluation scheme can provide measurable performance gains for decision‑tree inference on GPUs, especially in latency‑sensitive, real‑time contexts where traditional data‑parallel approaches are hampered by warp divergence.

Comments & Academic Discussion

Loading comments...

Leave a Comment