On Nonparametric Guidance for Learning Autoencoder Representations

Unsupervised discovery of latent representations, in addition to being useful for density modeling, visualisation and exploratory data analysis, is also increasingly important for learning features relevant to discriminative tasks. Autoencoders, in particular, have proven to be an effective way to learn latent codes that reflect meaningful variations in data. A continuing challenge, however, is guiding an autoencoder toward representations that are useful for particular tasks. A complementary challenge is to find codes that are invariant to irrelevant transformations of the data. The most common way of introducing such problem-specific guidance in autoencoders has been through the incorporation of a parametric component that ties the latent representation to the label information. In this work, we argue that a preferable approach relies instead on a nonparametric guidance mechanism. Conceptually, it ensures that there exists a function that can predict the label information, without explicitly instantiating that function. The superiority of this guidance mechanism is confirmed on two datasets. In particular, this approach is able to incorporate invariance information (lighting, elevation, etc.) from the small NORB object recognition dataset and yields state-of-the-art performance for a single layer, non-convolutional network.

💡 Research Summary

The paper introduces a novel way to guide auto‑encoder (AE) learning with label information without explicitly tying the latent representation to a specific parametric classifier. Traditional semi‑supervised AEs augment the reconstruction loss with a parametric mapping (e.g., a logistic regression) from the latent space to the label space and back‑propagate the classification error. While effective, this approach forces the latent code to be useful for a particular classifier and requires simultaneous optimization of the classifier parameters, which can hinder representation learning.

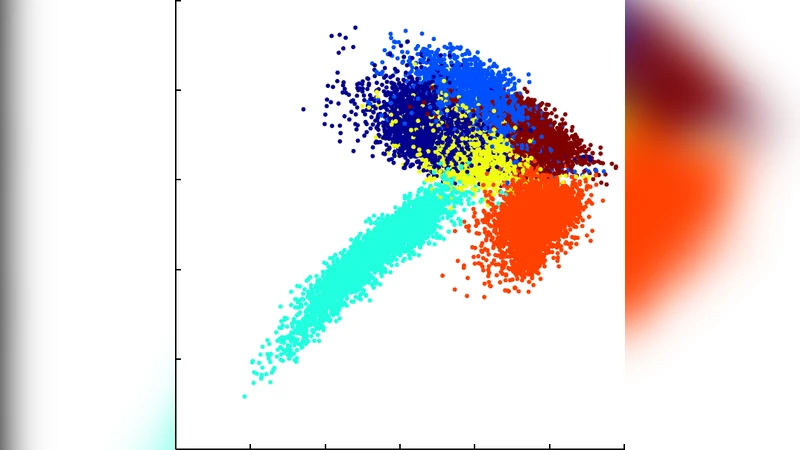

To overcome these limitations, the authors propose a Non‑Parametrically Guided Auto‑encoder (NPGA). The key idea is to require only that some function exists that can predict the labels from the latent codes, without committing to any particular one. This is achieved by placing a Gaussian Process (GP) prior on the label‑mapping function and marginalizing over it, which effectively integrates out the classifier. The latent space of the AE is treated as the latent space of a back‑constrained Gaussian Process Latent Variable Model (GPLVM). The encoder g(y; φ) maps observed data y to latent points x, and a linear projection Γ reduces the dimensionality of x before it is passed to the GP. The GP defines a covariance matrix Σ(θ, φ, Γ) over the projected latent points; the GP marginal likelihood of the label vectors z then yields a loss term L_GP that measures how well the latent space supports a smooth function from the codes to the labels.
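The GP label loss described above can be illustrated with a minimal sketch. It assumes a squared-exponential kernel with a small noise term, a single scalar label per example, and the usual zero-mean GP negative log marginal likelihood, 0.5 (log|Σ| + zᵀ Σ⁻¹ z), up to an additive constant; the summary does not state the kernel or the exact form the authors use. The names `project`, `rbf_kernel`, `cholesky`, and `gp_label_loss` are hypothetical, and only the standard library is used so that the example runs as-is.

```python
import math


def project(latent, gamma):
    """Apply a linear projection (the rows of gamma) to one latent code."""
    return [sum(g * x for g, x in zip(row, latent)) for row in gamma]


def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential covariance (an assumed kernel choice)."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-0.5 * sq_dist / length_scale ** 2)


def cholesky(matrix):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower


def gp_label_loss(latents, labels, gamma, length_scale=1.0, noise=1e-2):
    """0.5 * (log|Sigma| + z^T Sigma^{-1} z) for a zero-mean GP on projected codes."""
    points = [project(x, gamma) for x in latents]
    n = len(points)
    # Covariance over projected latent points, with noise on the diagonal.
    sigma = [
        [rbf_kernel(points[i], points[j], length_scale) + (noise if i == j else 0.0)
         for j in range(n)]
        for i in range(n)
    ]
    lower = cholesky(sigma)
    # Forward-solve L w = z, so that z^T Sigma^{-1} z equals w^T w.
    w = []
    for i in range(n):
        s = sum(lower[i][k] * w[k] for k in range(i))
        w.append((labels[i] - s) / lower[i][i])
    log_det = 2.0 * sum(math.log(lower[i][i]) for i in range(n))
    return 0.5 * (log_det + sum(wi * wi for wi in w))


# Four 2-D codes; gamma keeps only the first coordinate (illustrative values).
codes = [[0.0, 5.0], [0.1, -2.0], [3.0, 1.0], [3.1, 7.0]]
gamma = [[1.0, 0.0]]
smooth = gp_label_loss(codes, [1.0, 1.0, -1.0, -1.0], gamma)   # neighbours agree
rough = gp_label_loss(codes, [1.0, -1.0, 1.0, -1.0], gamma)    # neighbours disagree
print(smooth, rough)
```

In this toy setting the loss is lower when nearby projected codes share a label, which is the sense in which L_GP rewards a latent space that admits a smooth label function without ever fitting one.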

The overall objective blends the standard reconstruction loss L_auto with the GP label loss L_GP:

min_{φ,ψ,Γ} (1 − α) L_auto(φ,ψ) + α L_GP(φ,Γ),

where α∈
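The blended objective above can be sketched as a simple weighted sum. The range of α is cut off in the text; the sketch assumes α is a scalar weight in [0, 1], which is what the (1 − α, α) weighting suggests but is not confirmed by the source. Mean squared error is used as one common choice for L_auto, and the function names are hypothetical.

```python
def reconstruction_loss(inputs, reconstructions):
    """Mean squared reconstruction error over a batch (an assumed form of L_auto)."""
    total = 0.0
    for y, y_hat in zip(inputs, reconstructions):
        total += sum((a - b) ** 2 for a, b in zip(y, y_hat))
    return total / len(inputs)


def blended_objective(l_auto, l_gp, alpha):
    """(1 - alpha) * L_auto + alpha * L_GP, with alpha assumed to lie in [0, 1]."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha is assumed to lie in [0, 1]")
    return (1.0 - alpha) * l_auto + alpha * l_gp


# Illustrative values only: a batch of two inputs and a made-up GP loss.
l_auto = reconstruction_loss([[1.0, 0.0], [0.0, 1.0]], [[0.5, 0.0], [0.0, 0.5]])
print(blended_objective(l_auto, l_gp=4.0, alpha=0.25))
```

Under this assumption, α = 0 recovers a plain autoencoder trained on reconstruction alone, and α = 1 trains the encoder on the GP label term alone.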
