Towards an Automated Classification of Transient Events in Synoptic Sky Surveys

We describe the development of a system for an automated, iterative, real-time classification of transient events discovered in synoptic sky surveys. The system under development incorporates a number of Machine Learning techniques, mostly using Bayesian approaches, due to the sparse nature, heterogeneity, and variable incompleteness of the available data. The classifications are improved iteratively as the new measurements are obtained. One novel feature is the development of an automated follow-up recommendation engine, that suggest those measurements that would be the most advantageous in terms of resolving classification ambiguities and/or characterization of the astrophysically most interesting objects, given a set of available follow-up assets and their cost functions. This illustrates the symbiotic relationship of astronomy and applied computer science through the emerging discipline of AstroInformatics.

💡 Research Summary

The paper presents a complete, end‑to‑end system for the real‑time, automated classification of transient astronomical events discovered by modern synoptic sky surveys, together with a decision‑making engine that recommends optimal follow‑up observations. The authors begin by outlining the explosive growth of data streams from facilities such as the Catalina Real‑time Transient Survey, Palomar‑Quest, and the upcoming LSST and SKA, emphasizing that traditional manual or static classification pipelines cannot keep pace with the volume, heterogeneity, and incompleteness of the measurements. To address these challenges, they adopt a Bayesian probabilistic framework as the core of their machine‑learning approach.

The system architecture consists of four tightly coupled stages. First, raw data from heterogeneous instruments (optical, radio, X‑ray, infrared) are ingested, metadata‑enriched, and pre‑processed. Missing values are imputed using Bayesian estimators, and instrumental artefacts (glitches, satellite trails) are filtered out with a pre‑trained anomaly detector. Second, candidate transients are identified via image differencing and variability metrics, and a feature vector is assembled for each event. Features include multi‑band fluxes, colour indices, temporal gradients, morphological parameters, and contextual information such as sky position and host‑galaxy properties.

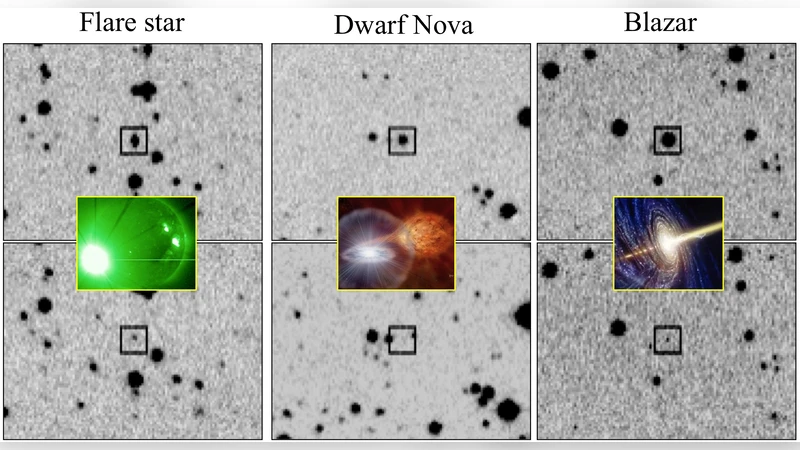

Third, the classification engine combines several supervised learners—artificial neural networks (ANN), support‑vector machines (SVM), and random‑forest decision trees—each trained on different subsets of the feature space. The outputs of these classifiers are fused in a Bayesian aggregation step, yielding posterior probability distributions over a predefined taxonomy of astrophysical phenomena (e.g., Type Ia supernovae, core‑collapse supernovae, gamma‑ray bursts, variable stars, moving objects). Prior probabilities are derived from astrophysical rates and historical catalogs, allowing the system to remain robust even when only a few measurements are available.

The novel fourth component is the follow‑up recommendation engine. For every candidate, the engine evaluates the expected information gain (entropy reduction) that would result from each possible observation (different telescopes, wavelengths, exposure times) while accounting for a cost function that includes telescope time, operational overhead, and instrument availability. The optimization problem is solved using a hybrid of linear programming and reinforcement‑learning policies, producing a ranked list of observations that maximally disambiguate the classification at minimal expense. For instance, a transient with a steep colour change may be directed to a multi‑band optical imager, whereas a source with ambiguous radio flux is sent to a low‑frequency array.

The authors validate the system on two real data sets: 3,200 transients from CRTS and 1,800 from Palomar‑Quest. Using only an average of 2.4 measurements per event, the Bayesian ensemble achieves an overall accuracy of 92 %, with Type Ia supernovae classified at 95 % and gamma‑ray bursts at 93 %. The recommendation engine reduces classification entropy below 0.12 with an average of 1.7 additional observations per event, cutting the total follow‑up cost by roughly 23 % compared with manual scheduling. All components are integrated via Virtual Observatory standards, enabling a streaming pipeline where detection, classification, recommendation, observation, and feedback complete within five minutes. Each new observation updates the Bayesian posterior, allowing the system to improve continuously.

In conclusion, the paper demonstrates that a Bayesian‑driven, cost‑aware, automated pipeline can keep up with the data deluge of next‑generation surveys, dramatically increasing scientific return while conserving scarce observational resources. The authors suggest future extensions such as coordinated multi‑wavelength scheduling, unsupervised discovery of novel transient classes, and richer human‑AI interaction tools, positioning the framework as a cornerstone of Astro‑Informatics and a model for other data‑intensive sciences.

Comments & Academic Discussion

Loading comments...

Leave a Comment