Learning Hierarchical and Topographic Dictionaries with Structured Sparsity

Recent work in signal processing and statistics has focused on defining new regularization functions that not only induce sparsity of the solution but also take into account the structure of the problem. We present in this paper a class of convex penalties introduced in the machine learning community, which take the form of a sum of ℓ₂- and ℓ_∞-norms over groups of variables. They extend the classical group-sparsity regularization in the sense that the groups may overlap, allowing more flexibility in the group design. We review efficient optimization methods to deal with the corresponding inverse problems, and their application to the problem of learning dictionaries of natural image patches. On the one hand, dictionary learning has proven effective for various signal processing tasks. On the other hand, structured sparsity provides a natural framework for modeling dependencies between dictionary elements. We thus consider a structured sparse regularization to learn dictionaries embedded in a particular structure, for instance a tree or a two-dimensional grid. In the latter case, the results we obtain are similar to the dictionaries produced by topographic independent component analysis.

💡 Research Summary

The paper addresses the growing interest in structured sparsity, a paradigm that goes beyond simple sparsity by incorporating prior knowledge about the relationships among variables. The authors introduce a class of convex penalties that consist of sums of ℓ₂ or ℓ_∞ norms over possibly overlapping groups of coefficients. Formally, the regularizer is ψ(α)=∑_{g∈G} η_g‖α_g‖_q with q∈{2,∞}, where G is a collection of index groups that may intersect. When the groups form a partition, this reduces to the classic group‑Lasso; when they are singletons, it collapses to the ℓ₁ norm. Overlapping groups preserve convexity while allowing richer structural constraints such as hierarchical (tree) or spatial (grid) dependencies.
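As a minimal sketch of the regularizer ψ defined above, the following NumPy function evaluates the weighted sum of group norms. The function name `structured_penalty` and its arguments are illustrative assumptions, not taken from the paper; the weights η_g default to 1.

```python
import numpy as np

def structured_penalty(alpha, groups, weights=None, q=2):
    """Evaluate psi(alpha) = sum_g eta_g * ||alpha_g||_q for q in {2, np.inf}.

    `groups` is a list of index lists that may overlap; `weights` holds the
    eta_g (assumed to be 1 when omitted). Names are hypothetical.
    """
    alpha = np.asarray(alpha, dtype=float)
    if weights is None:
        weights = np.ones(len(groups))
    return float(sum(w * np.linalg.norm(alpha[list(g)], ord=q)
                     for g, w in zip(groups, weights)))

alpha = np.array([3.0, -4.0, 0.0, 1.0])

# Singleton groups: the penalty collapses to the l1 norm.
l1_value = structured_penalty(alpha, [[0], [1], [2], [3]])

# A partition into two groups: the classic group-Lasso penalty.
group_lasso_value = structured_penalty(alpha, [[0, 1], [2, 3]])

# Overlapping groups: index 1 is shared by both groups.
overlap_value = structured_penalty(alpha, [[0, 1], [1, 2, 3]])

# Same overlapping groups with the l_inf norm instead of l2.
overlap_inf_value = structured_penalty(alpha, [[0, 1], [1, 2, 3]], q=np.inf)
```

Here the singleton case gives |3| + |−4| + |0| + |1| = 8, and the partition case gives 5 + 1 = 6, which matches the two special cases mentioned in the text.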

The authors embed this regularizer into the dictionary‑learning framework, where a set of natural image patches Y∈ℝ^{m×n} is approximated by a product of a dictionary D∈ℝ^{m×p} and sparse codes A∈ℝ^{p×n}. The learning problem is

min_{D∈C, A} ½‖Y−DA‖_F² + λ∑_{i=1}^n ψ(α_i),

where α_i is the i‑th column of A (the code of the i‑th patch) and C enforces unit ℓ₂ norm on each atom. The key difficulty lies in solving the inner sparse‑coding step when ψ is non‑smooth and involves overlapping groups.
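To make the objective concrete, here is a small sketch that evaluates it for given D and A and rescales atoms to unit ℓ₂ norm. The helper names `objective` and `normalize_atoms` are hypothetical, and the penalty ψ is passed in as an arbitrary Python callable (the ℓ₁ norm in the example, purely for illustration).

```python
import numpy as np

def normalize_atoms(D, eps=1e-12):
    """Rescale every column (atom) of D to unit l2 norm."""
    norms = np.linalg.norm(D, axis=0)
    return D / np.maximum(norms, eps)

def objective(Y, D, A, lam, psi):
    """0.5 * ||Y - D A||_F^2 + lam * sum_i psi(alpha_i), alpha_i = A[:, i]."""
    residual = Y - D @ A
    data_fit = 0.5 * np.sum(residual ** 2)
    penalty = sum(psi(A[:, i]) for i in range(A.shape[1]))
    return float(data_fit + lam * penalty)

# Toy example: a 2-atom dictionary that reconstructs Y exactly,
# so only the penalty term contributes to the objective.
D = normalize_atoms(np.array([[2.0, 0.0],
                              [0.0, 5.0]]))
A = np.array([[1.0, 0.0],
              [0.0, -2.0]])
Y = D @ A
value = objective(Y, D, A, lam=0.5, psi=lambda a: np.sum(np.abs(a)))
```

With a perfect fit the data term vanishes and the value is λ times the summed penalties, here 0.5 × (1 + 2) = 1.5.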

To tackle this, the paper proposes two complementary optimization strategies. The first is a block‑coordinate descent (BCD) scheme that alternates updates of groups of coefficients, combined with proximal operators tailored to the ℓ₂/ℓ_∞ group penalties. For tree‑structured groups, the proximal step admits a closed‑form solution that can be computed in linear time by traversing the tree from leaves to root. For general overlapping groups, the authors employ a decomposition into elementary sub‑problems and use a fast active‑set method to restrict computation to the currently non‑zero groups. The second is an online stochastic gradient algorithm for updating D, which draws random patches (or mini‑batches) and performs a gradient step followed by projection onto C. This approach scales to millions of patches and converges to a stationary point under standard assumptions.
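The two ingredients described above can be sketched as follows. For the ℓ₂ case with tree-structured groups, the sketch relies on the known result that the proximal operator is obtained by applying group soft-thresholding once per group, visiting groups from the leaves to the root; it assumes the caller supplies the groups in that order. The dictionary update is a plain projected gradient step on one mini-batch. All function names and the step-size handling are assumptions made for illustration, not the authors' implementation.

```python
import numpy as np

def group_soft_threshold(v, idx, t):
    """In place: shrink the sub-vector v[idx] toward zero by t (l2 norm)."""
    norm = np.linalg.norm(v[idx])
    scale = max(0.0, 1.0 - t / norm) if norm > 0 else 0.0
    v[idx] = scale * v[idx]

def tree_prox(u, groups, lam, weights=None):
    """Proximal step for lam * sum_g eta_g * ||alpha_g||_2 on a tree.

    `groups` must be ordered leaves-to-root: every group appears before
    any group that contains it (assumption of this sketch).
    """
    v = np.array(u, dtype=float)
    if weights is None:
        weights = np.ones(len(groups))
    for idx, w in zip(groups, weights):
        group_soft_threshold(v, idx, lam * w)
    return v

def dictionary_sgd_step(D, Y_batch, A_batch, step):
    """One gradient step on 0.5 * ||Y_b - D A_b||_F^2, then renormalize atoms."""
    residual = Y_batch - D @ A_batch
    grad = -residual @ A_batch.T          # gradient with respect to D
    D_new = D - step * grad
    norms = np.linalg.norm(D_new, axis=0)
    return D_new / np.maximum(norms, 1e-12)

# Chain-shaped tree 0 -> 1 -> 2 (0 is the root); each group is a node
# together with its descendants, listed from the leaf up to the root.
chain_groups = [[2], [1, 2], [0, 1, 2]]
shrunk = tree_prox([3.0, 0.5, 0.2], chain_groups, lam=0.5)
```

In this toy chain the leaf and its parent are both thresholded to zero while the root is only shrunk, giving approximately (2.5, 0, 0): the descendants vanish before their ancestor does, which is the hierarchical behaviour the penalty is meant to produce.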

The paper illustrates how to design group structures for three concrete scenarios. (1) A one‑dimensional sequence, where the groups are the contiguous intervals anchored at either end of the sequence (prefixes and suffixes); because the penalty zeroes out unions of groups, the coefficients that remain selected form a single contiguous block. (2) A hierarchical tree reflecting the multiscale nature of wavelet coefficients; the regularizer enforces a “zero‑tree” rule: a coefficient may be non‑zero only if all its ancestors are also non‑zero. (3) A two‑dimensional grid (topographic map) where each atom belongs to overlapping neighborhoods, encouraging neighboring atoms to be activated together, reproducing the spatial smoothness observed in topographic ICA.
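The tree and grid structures can be built programmatically. The sketch below is one possible construction under stated assumptions: the tree is given as a hypothetical `parent` array (−1 marks the root) and each group is a node plus all its descendants; the grid uses square `width × width` neighborhoods with wrap-around at the borders, which is an assumption of this example rather than something stated in the summary.

```python
def tree_groups(parent):
    """One group per node: the node together with all of its descendants.

    parent[i] is the index of node i's parent, or -1 for the root.
    Groups are returned smallest first, so every group precedes the
    groups that contain it (a leaves-to-root ordering).
    """
    groups = {i: [i] for i in range(len(parent))}
    for i in range(len(parent)):
        a = parent[i]
        while a != -1:              # add node i to each ancestor's group
            groups[a].append(i)
            a = parent[a]
    return sorted((sorted(g) for g in groups.values()), key=len)

def grid_groups(side, width):
    """One group per grid cell: a width x width neighborhood anchored there.

    Atoms are indexed row-major on a side x side grid; neighborhoods wrap
    around the borders (assumed cyclic boundary), with width <= side.
    """
    groups = []
    for r in range(side):
        for c in range(side):
            groups.append(sorted(((r + dr) % side) * side + (c + dc) % side
                                 for dr in range(width)
                                 for dc in range(width)))
    return groups

# Small tree: node 0 is the root, 1 and 2 are its children, 3 is a child of 1.
small_tree = tree_groups([-1, 0, 0, 1])

# 4 x 4 grid of atoms with 2 x 2 overlapping neighborhoods.
small_grid = grid_groups(side=4, width=2)
```

With wrap-around, every atom belongs to exactly `width**2` neighborhoods, so all atoms are penalized equally; for a dictionary of p = 256 atoms the same call with `side=16` would lay them out on a 16 × 16 grid.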

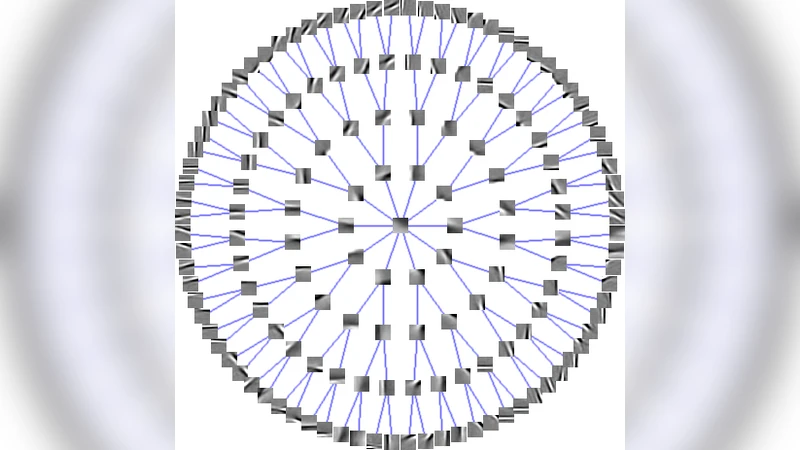

Experimental validation uses 12×12 natural image patches (≈10⁷ samples) to learn dictionaries with p=256 atoms. With the tree‑structured penalty, the learned atoms display a clear multiscale hierarchy: low‑frequency atoms are selected first, and finer‑scale atoms appear only when their coarser ancestors are active, mirroring the zero‑tree wavelet model. With the grid‑structured penalty, the atoms arrange themselves on a 2‑D lattice, each resembling a Gabor filter whose orientation and frequency vary smoothly across the grid. Visual inspection shows striking similarity to dictionaries obtained by topographic ICA, confirming that the convex structured‑sparsity formulation can reproduce the same topographic organization without resorting to ICA’s probabilistic assumptions.

Quantitatively, the structured dictionaries yield modest improvements (0.2–0.4 dB PSNR) over unstructured ℓ₁ dictionaries in denoising and inpainting tasks, especially on textured regions where spatial coherence matters. Moreover, the structured approach provides interpretable organization of atoms, which can be advantageous for downstream tasks such as feature extraction or neuroscientific modeling of receptive fields.
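For readers unfamiliar with the metric, PSNR is 10·log₁₀(peak² / MSE), so a gain of a few tenths of a dB corresponds to a mean squared error that is lower by a few percent. A minimal sketch, assuming pixel intensities scaled to [0, 1] (the `peak` default is an assumption, as is the function name):

```python
import numpy as np

def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in dB between two arrays of equal shape."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / mse))

# A constant error of 0.1 on a [0, 1] image gives an MSE of 0.01.
example_db = psnr(np.zeros(4), np.full(4, 0.1))
```

In this toy case the MSE is 0.01, so the PSNR is about 20 dB.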

In summary, the paper makes three main contributions: (i) it introduces a flexible overlapping‑group regularizer based on ℓ₂/ℓ_∞ norms, (ii) it develops efficient proximal‑based optimization algorithms suitable for large‑scale dictionary learning, and (iii) it demonstrates that imposing hierarchical or topographic structures via convex regularization yields dictionaries that are both performant and biologically plausible. The work bridges the gap between structured sparsity theory and practical dictionary learning, opening avenues for further exploration of structured priors in other matrix‑factorization problems.
