A new parallel simulation technique

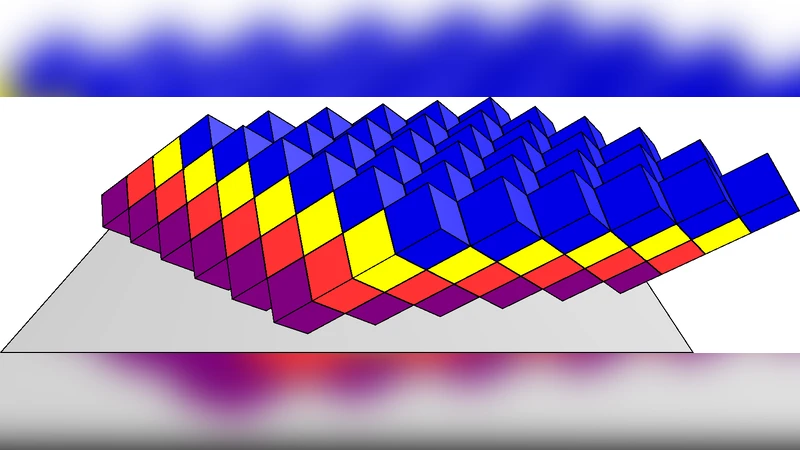

We develop a “semi-parallel” simulation technique suggested by Pretorius and Lehner, in which the simulation spacetime volume is divided into a large number of small 4-volumes which have only initial and final surfaces. Thus there is no two-way communication between processors, and the 4-volumes can be simulated independently without the use of MPI. This technique allows us to simulate much larger volumes than we otherwise could, because we are not limited by total memory size. No processor time is lost waiting for other processors. We compare a cosmic string simulation we developed using the semi-parallel technique with our previous MPI-based code for several test cases and find a factor of 2.6 improvement in the total amount of processor time required to accomplish the same job for strings evolving in the matter-dominated era.

💡 Research Summary

The paper introduces a “semi‑parallel” simulation framework that eliminates two‑way communication between processing units, thereby addressing two major bottlenecks in large‑scale spacetime simulations: memory consumption and synchronization latency. Building on a concept suggested by Pretorius and Lehner, the authors partition the four‑dimensional simulation domain into a large number of small 4‑volumes, each bounded only by an initial surface and a final surface. Each volume receives its initial data from the final surface of the preceding volume, performs the local evolution independently, and writes its final surface for the next volume. Because the data flow is strictly one‑directional, no MPI‑style message passing is required; the volumes can be processed on separate nodes, on a cluster, or even on cloud instances without any inter‑process coordination during the computation phase.
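The one‑directional data flow can be illustrated with a toy model. The sketch below is not the authors' cosmic‑string code or their 4‑volume geometry: it assumes a 1D periodic lattice with a nearest‑neighbour update, and it swaps in a simple overlapping‑halo decomposition, which recomputes some cells redundantly. All function names are hypothetical. Each block is evolved from its initial surface alone, exchanges nothing with other blocks while it runs, and hands only its final surface to the next layer.

```python
import numpy as np

def step(u):
    """One update on a periodic 1D lattice; information moves at most one cell per step."""
    return 0.25 * np.roll(u, 1) + 0.5 * u + 0.25 * np.roll(u, -1)

def evolve_global(u0, n_steps):
    """Reference: evolve the whole lattice at once."""
    u = u0.copy()
    for _ in range(n_steps):
        u = step(u)
    return u

def evolve_block(initial_surface, n_steps):
    """Evolve one spacetime block using only its initial surface.

    The input carries n_steps extra cells on each side; the valid region
    shrinks by one cell per side per step, so no neighbour data is needed.
    """
    u = initial_surface.copy()
    for _ in range(n_steps):
        u = 0.25 * u[:-2] + 0.5 * u[1:-1] + 0.25 * u[2:]
    return u

def evolve_semi_parallel(u0, n_layers, steps_per_layer, width):
    """Evolve layer by layer; blocks within a layer are mutually independent."""
    n = len(u0)
    assert n % width == 0, "toy assumption: blocks tile the lattice exactly"
    surface = u0.copy()
    for _ in range(n_layers):
        final = np.empty_like(surface)
        for start in range(0, n, width):  # each iteration could run on a separate processor
            idx = np.arange(start - steps_per_layer,
                            start + width + steps_per_layer) % n
            final[start:start + width] = evolve_block(surface[idx], steps_per_layer)
        surface = final
    return surface
```

In this toy, the blocks of one layer can be handed to separate processes in any order, because each needs only data that was final before the layer started.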

The implementation details are described thoroughly. The authors devise a flexible volume‑splitting scheme that can be uniform or adaptively refined based on local string density and collision rates. Data exchange between volumes is handled through high‑throughput storage (either a parallel file system or a fast network‑attached storage) rather than direct network messages. A checkpoint‑and‑restart mechanism records the final surface of each volume, enabling robust recovery from failures without re‑computing the entire simulation. Load balancing is achieved by dynamically assigning multiple processors to volumes that are expected to be computationally intensive, while keeping the overall memory footprint close to the size of a single volume.
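A checkpoint‑and‑restart scheme of the kind described above can be sketched with ordinary files. This is a minimal illustration under stated assumptions, not the authors' implementation: the file naming, the `run_chain` helper, and the linear chain of volumes are all hypothetical. A volume is treated as done once its final surface exists on disk, so a restarted run skips it.

```python
import os
from pathlib import Path
import numpy as np

def surface_path(workdir, volume_id):
    """Hypothetical naming scheme for the final surface of a volume."""
    return Path(workdir) / f"surface_{volume_id:05d}.npy"

def save_surface(path, data):
    """Write to a temporary file, then rename, so a crash never leaves a partial surface."""
    tmp = path.with_name(path.name + ".tmp")
    with open(tmp, "wb") as f:
        np.save(f, data)
    os.replace(tmp, path)

def run_chain(workdir, initial_surface, n_volumes, evolve):
    """Run volumes 1..n_volumes in order, skipping those already on disk.

    `evolve` maps an initial surface to a final surface.
    Returns the number of volumes actually computed in this call.
    """
    workdir = Path(workdir)
    workdir.mkdir(parents=True, exist_ok=True)
    if not surface_path(workdir, 0).exists():
        save_surface(surface_path(workdir, 0), initial_surface)
    computed = 0
    for k in range(1, n_volumes + 1):
        out = surface_path(workdir, k)
        if out.exists():          # finished in an earlier run
            continue
        data = np.load(surface_path(workdir, k - 1))
        save_surface(out, evolve(data))
        computed += 1
    return computed
```

Calling `run_chain` again after a failure recomputes only the volumes whose final surfaces are missing.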

To evaluate the method, the authors apply it to a cosmic‑string network simulation in a matter‑dominated cosmology, a problem that traditionally demands high spatial resolution and long temporal integration. They compare the semi‑parallel code against their previously developed MPI‑based code using identical physical parameters, grid resolution (e.g., a 1024³ lattice), and runtime environment. The results show a factor of 2.6 reduction in the total processor time needed to complete the same job, while using roughly 30 % of the memory required by the MPI version. Scaling tests with 16, 32, 64, and 128 cores demonstrate near‑linear speed‑up, confirming that the elimination of communication latency translates directly into higher processor utilization. Importantly, the physical observables (such as the string length distribution, loop production rate, and collision statistics) agree within statistical uncertainties between the two approaches, indicating that the semi‑parallel method does not compromise scientific accuracy.

The authors acknowledge several limitations. Because each volume depends on the final data of its predecessor, the overall simulation cannot produce intermediate results until the current volume finishes, which may be problematic for applications requiring real‑time monitoring. Moreover, if volumes are made too small, the overhead of reading and writing surface data can dominate the runtime; conversely, overly large volumes can lead to load‑imbalance and longer idle periods on faster nodes. The technique is best suited to problems where the governing equations are hyperbolic or causal, allowing a clear definition of initial and final hypersurfaces. Extending the approach to systems with strong feedback loops (e.g., fully coupled magnetohydrodynamics with global constraints) will require additional algorithmic innovations.
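The volume‑size trade‑off follows from a surface‑to‑volume argument, which a toy cost model makes concrete. The model is an assumption made for illustration and does not come from the paper: it treats a 4‑volume as a hypercube of `side` lattice cells per dimension, with compute cost proportional to `side**4` and I/O cost proportional to the two 3‑dimensional surfaces read and written. The function name and cost parameters are hypothetical.

```python
def io_to_compute_ratio(side, cost_per_cell=1.0, cost_per_surface_cell=1.0):
    """Ratio of surface I/O cost to evolution cost for a hypercubic 4-volume.

    Assumed toy model: work scales as side**4, and I/O scales as the
    initial plus final surfaces, 2 * side**3.
    """
    compute = cost_per_cell * side ** 4
    io = 2 * cost_per_surface_cell * side ** 3
    return io / compute
```

Under these assumptions the ratio falls as `2 / side`, so halving the linear size of a volume doubles the relative I/O overhead.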

In conclusion, the paper presents a compelling alternative to conventional MPI‑based parallelism for large‑scale, causally ordered simulations. By restructuring the computation into a pipeline of independent 4‑volumes, the semi‑parallel framework dramatically reduces memory requirements and eliminates synchronization stalls, achieving a substantial performance gain in a realistic cosmological scenario. The authors suggest future work on adaptive volume sizing, integration with real‑time visualization pipelines, and application to other domains such as numerical relativity, plasma physics, and large‑eddy turbulence simulations, where the same causal decomposition can be exploited.
