Parallel Breadth-First Search on Distributed Memory Systems

Data-intensive, graph-based computations are pervasive in several scientific applications, and are known to to be quite challenging to implement on distributed memory systems. In this work, we explore the design space of parallel algorithms for Breadth-First Search (BFS), a key subroutine in several graph algorithms. We present two highly-tuned parallel approaches for BFS on large parallel systems: a level-synchronous strategy that relies on a simple vertex-based partitioning of the graph, and a two-dimensional sparse matrix-partitioning-based approach that mitigates parallel communication overhead. For both approaches, we also present hybrid versions with intra-node multithreading. Our novel hybrid two-dimensional algorithm reduces communication times by up to a factor of 3.5, relative to a common vertex based approach. Our experimental study identifies execution regimes in which these approaches will be competitive, and we demonstrate extremely high performance on leading distributed-memory parallel systems. For instance, for a 40,000-core parallel execution on Hopper, an AMD Magny-Cours based system, we achieve a BFS performance rate of 17.8 billion edge visits per second on an undirected graph of 4.3 billion vertices and 68.7 billion edges with skewed degree distribution.

💡 Research Summary

The paper addresses the challenge of scaling breadth‑first search (BFS), a fundamental primitive for many graph analytics, on modern distributed‑memory supercomputers. Recognizing that BFS is inherently memory‑bound and that irregular memory accesses dominate performance, the authors explore two distinct parallel designs that differ in how the graph is partitioned across processes and how inter‑process communication is organized.

The first design follows the classic one‑dimensional (1‑D) vertex partitioning. The graph’s adjacency lists are divided evenly among the MPI ranks; each rank holds a subset of vertices and their incident edges. BFS proceeds level‑by‑level in a level‑synchronous fashion: each rank expands its local frontier, aggregates edges that point to vertices owned by other ranks, and then performs an all‑to‑all exchange of these “remote” edges at the end of the level. This approach is simple and works well when the number of processes is modest, but the all‑to‑all communication volume grows linearly with the number of ranks and quickly becomes a bottleneck on large machines.

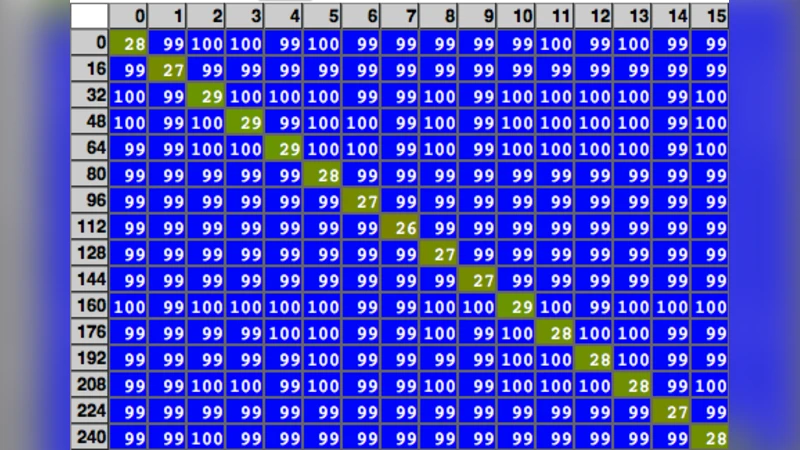

The second design introduces a two‑dimensional (2‑D) sparse‑matrix partitioning. The graph is viewed as an adjacency matrix and both rows and columns are distributed over a √P × √P processor grid (P = total number of MPI ranks). Each rank therefore owns a sub‑matrix block and participates in two communication phases per BFS level: a row‑wise exchange followed by a column‑wise exchange. Because each phase involves only the ranks that share a row or a column, the total number of messages per level drops from O(P) to O(√P), and the volume of data transferred is similarly reduced. The authors further compress messages and overlap communication with computation using non‑blocking MPI calls, achieving a substantial reduction in communication latency.

Both algorithms are “hybrid”: within each node, OpenMP threads share the local data structures, and the authors apply careful load‑balancing, cache‑friendly data layouts, and atomic‑free updates where possible. To quantify the impact of memory accesses, the paper proposes a simple performance model that accounts for local memory bandwidth, remote memory latency, and network bandwidth. The model predicts that, as the number of cores grows, the 2‑D scheme can cut communication time by up to a factor of 3.5 compared with the 1‑D scheme—a prediction that is confirmed by the experimental results.

The experimental platform is the Cray XE6 “Hopper” system at NERSC, equipped with AMD Magny‑Cours CPUs and a high‑speed InfiniBand network. The authors use the Graph500 benchmark graphs at scale‑30 (≈4.3 billion vertices, 68.7 billion edges) which exhibit a skewed degree distribution typical of real‑world networks. On this workload, the 1‑D algorithm scales up to about 8 000 cores before communication dominates, achieving roughly 10 billion edge visits per second (BTEPS). In contrast, the hybrid 2‑D algorithm scales smoothly to the full 40 000‑core configuration, delivering 17.8 BTEPS—more than a 70 % performance increase over the 1‑D approach and a new record on the Graph500 metric. Memory consumption is kept low (≈8 bytes per vertex), allowing the massive graph to fit within the available aggregate memory.

Beyond BFS, the authors argue that the 2‑D partitioning strategy is applicable to other sparse‑matrix‑vector operations such as PageRank, Connected Components, and general SpMV kernels, because the same reduction in communication volume and the ability to overlap communication with computation hold for those algorithms as well. They also discuss future trends: as exascale systems increase core counts faster than interconnect bandwidth, communication‑avoiding designs like the 2‑D layout will become increasingly critical. Potential extensions include dynamic repartitioning for load‑imbalance, adaptation to non‑Cartesian network topologies, and integration with accelerator‑rich nodes (GPUs, many‑core cards).

In summary, the paper makes three major contributions: (1) it presents two highly tuned BFS implementations for distributed memory machines, including a novel hybrid 2‑D algorithm that dramatically reduces communication overhead; (2) it provides a concise memory‑reference‑centric performance model that explains the observed scalability and guides future architectural decisions; and (3) it demonstrates record‑breaking BFS performance on a 40 000‑core supercomputer, establishing a new benchmark for large‑scale graph processing. The work offers valuable insights for researchers and system designers aiming to push graph analytics toward the exascale era.

Comments & Academic Discussion

Loading comments...

Leave a Comment