Robust Localization from Incomplete Local Information

We consider the problem of localizing wireless devices in an ad-hoc network embedded in a d-dimensional Euclidean space. Obtaining a good estimation of where wireless devices are located is crucial in wireless network applications including environment monitoring, geographic routing and topology control. When the positions of the devices are unknown and only local distance information is given, we need to infer the positions from these local distance measurements. This problem is particularly challenging when we only have access to measurements that have limited accuracy and are incomplete. We consider the extreme case of this limitation on the available information, namely only the connectivity information is available, i.e., we only know whether a pair of nodes is within a fixed detection range of each other or not, and no information is known about how far apart they are. Further, to account for detection failures, we assume that even if a pair of devices is within the detection range, they fail to detect the presence of one another with some probability, and this probability of failure depends on how far apart those devices are. Given this limited information, we investigate the performance of a centralized positioning algorithm MDS-MAP introduced by Shang et al., and a distributed positioning algorithm, introduced by Savarese et al., called HOP-TERRAIN. In particular, for a network consisting of n devices positioned randomly, we provide a bound on the resulting error for both algorithms. We show that the error is bounded, decreasing at a rate that is inversely proportional to R/Rc, where Rc is the critical detection range when the resulting random network starts to be connected, and R is the detection range of each device.

💡 Research Summary


The paper tackles the fundamental problem of sensor localization in wireless ad‑hoc networks when only binary connectivity information is available, i.e., we know whether two nodes are within a fixed detection range R, but we have no direct distance measurements. Moreover, the model incorporates probabilistic detection failures: even if two nodes are within range, they may fail to detect each other with a probability that depends on their actual separation.

The authors focus on two widely used algorithms: the centralized multidimensional scaling based method MDS‑MAP (Shang et al.) and the distributed HOP‑TERRAIN algorithm (Savarese et al.). They consider n sensors placed independently and uniformly at random in a d‑dimensional unit hyper‑cube. The detection range R is assumed to be o(1) and the average node degree grows at least logarithmically with n, which is the minimal condition for the random geometric graph to be connected with high probability. The critical detection range for connectivity, denoted Rc, scales as (log n / n)^{1/d}.
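The placement and detection model above can be simulated with a short sketch. It assumes NumPy, drops the constant factor in Rc, and takes the detection-success probability as a caller-supplied function of distance; the linear profile used in the usage note is hypothetical and not the paper's model.

```python
import math

import numpy as np


def critical_range(n: int, d: int) -> float:
    """Connectivity threshold scaling Rc ~ (log n / n)^(1/d); constant factor omitted."""
    return (math.log(n) / n) ** (1.0 / d)


def connectivity_graph(X, R, p_detect, rng):
    """Symmetric boolean adjacency matrix for the range-limited, lossy detection model.

    Nodes i != j are adjacent only if ||x_i - x_j|| <= R AND an independent
    detection trial with success probability p_detect(distance) succeeds.
    p_detect must accept a NumPy array of distances (hypothetical model).
    """
    n = X.shape[0]
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)  # pairwise distances
    U = rng.random((n, n))                                      # one uniform draw per pair
    ok = (D <= R) & (U < p_detect(D))
    ok = np.triu(ok, k=1)                                       # decide each pair once, no self-loops
    return ok | ok.T
```

Usage: with `rng = np.random.default_rng(0)`, `X = rng.random((500, 2))` places 500 nodes uniformly in the unit square, `R = 3 * critical_range(500, 2)` picks a range a few times the threshold, and `connectivity_graph(X, R, lambda r: 1.0 - 0.5 * r / R, rng)` builds the observed graph.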

Centralized MDS‑MAP analysis

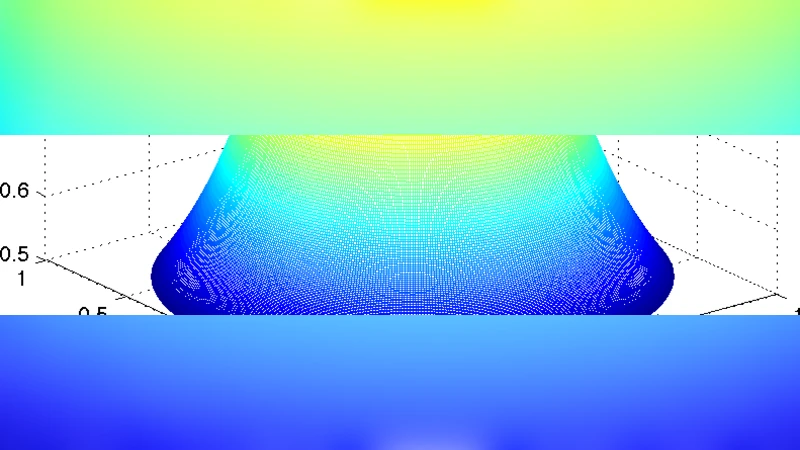

MDS‑MAP first computes all‑pairs shortest‑path lengths on the connectivity graph (hop counts multiplied by R), uses these lengths as surrogate distances, and then applies classical MDS to obtain node coordinates. The paper proves two key results: (i) the shortest‑path distances approximate the true Euclidean distances up to an additive error proportional to R, provided the average degree is Ω(log n); (ii) the reconstruction error, measured by the Frobenius norm of the difference between the centered Gram matrices of the true and estimated positions, satisfies

d_inv(X, \hat X) ≤ C_d · ( log n / n )^{1/d} / R + o(1),

where C_d depends only on the ambient dimension. Since Rc scales as (log n / n)^{1/d}, the leading term behaves like Rc / R: the error shrinks as the ratio R / Rc grows, i.e., larger radio ranges (or denser deployments) yield more accurate localization.
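A minimal sketch of the MDS‑MAP pipeline and the error metric, assuming NumPy. Shortest paths are found by breadth‑first search from every node and scaled by R; the helper names are illustrative, and the 1/n normalization in `d_inv` is an assumption about how the metric is scaled.

```python
from collections import deque

import numpy as np


def hop_counts(A):
    """All-pairs hop distances via BFS from every node (inf where unreachable)."""
    n = A.shape[0]
    nbrs = [np.flatnonzero(A[i]) for i in range(n)]
    H = np.full((n, n), np.inf)
    for s in range(n):
        H[s, s] = 0
        q = deque([s])
        while q:
            u = q.popleft()
            for v in nbrs[u]:
                if H[s, v] == np.inf:       # first visit gives the fewest hops
                    H[s, v] = H[s, u] + 1
                    q.append(v)
    return H


def classical_mds(D, d):
    """Classical MDS: double-center the squared distances, keep the top-d eigenpairs."""
    n = D.shape[0]
    L = np.eye(n) - np.ones((n, n)) / n     # centering matrix
    B = -0.5 * L @ (D ** 2) @ L             # estimated centered Gram matrix
    w, V = np.linalg.eigh(B)                # eigenvalues in ascending order
    idx = np.argsort(w)[::-1][:d]
    return V[:, idx] * np.sqrt(np.maximum(w[idx], 0.0))


def mds_map(A, R, d):
    """MDS-MAP sketch: hop counts times R serve as surrogate distances."""
    D_hat = R * hop_counts(A)
    if np.isinf(D_hat).any():
        raise ValueError("connectivity graph is disconnected")
    return classical_mds(D_hat, d)


def d_inv(X, X_hat):
    """(1/n) ||L X X^T L - L X_hat X_hat^T L||_F; invariant to rotation, reflection, translation."""
    n = X.shape[0]
    L = np.eye(n) - np.ones((n, n)) / n
    return np.linalg.norm(L @ X @ X.T @ L - L @ X_hat @ X_hat.T @ L, "fro") / n
```

Feeding exact Euclidean distances to `classical_mds` recovers the configuration up to a rigid motion, so `d_inv` is zero up to rounding; with hop‑count distances, the residual error is what the bound above controls.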

Distributed HOP‑TERRAIN analysis

HOP‑TERRAIN can be viewed as a distributed analogue of MDS‑MAP. Each node estimates its distance to a set of d + 1 anchors (nodes with known absolute positions) by counting hops, then solves a local linear system to infer its own coordinates. The authors prove that for every non‑anchor node i,

‖x_i − \hat x_i‖ ≤ C_{0,d} · ( log n / n )^{1/d} / R + o(1),

with C_{0,d} again a dimension‑dependent constant. Thus the distributed algorithm achieves the same asymptotic error scaling as the centralized method, while requiring only local communication and O(log n) computational effort per node.
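The per-node computation can be sketched as follows, assuming NumPy. This is a simplified illustration, not Savarese et al.'s full algorithm: it uses R as the length of one hop (instead of a calibrated average hop size), omits any refinement phase, and simulates every node in a single process rather than by message passing. Each node converts hop counts to range estimates and solves the multilateration equations ||x − a_k||² = r_k² after linearizing them by subtracting the last anchor's equation.

```python
from collections import deque

import numpy as np


def hops_from(A, source):
    """BFS hop counts from one anchor (models the anchor's flooding step)."""
    n = A.shape[0]
    h = np.full(n, np.inf)
    h[source] = 0
    q = deque([source])
    while q:
        u = q.popleft()
        for v in np.flatnonzero(A[u]):
            if h[v] == np.inf:
                h[v] = h[u] + 1
                q.append(v)
    return h


def multilaterate(anchor_pos, r):
    """Linearized least squares for ||x - a_k||^2 = r_k^2, k = 1..m.

    Subtracting the last equation (anchor a_m) from the others gives
    2 (a_m - a_k)^T x = r_k^2 - r_m^2 - ||a_k||^2 + ||a_m||^2.
    """
    a_m, r_m = anchor_pos[-1], r[-1]
    M = 2.0 * (a_m - anchor_pos[:-1])
    b = (r[:-1] ** 2 - r_m ** 2
         - np.sum(anchor_pos[:-1] ** 2, axis=1) + np.sum(a_m ** 2))
    x, *_ = np.linalg.lstsq(M, b, rcond=None)
    return x


def hop_terrain_sketch(A, R, anchors, anchor_pos):
    """Estimate each node's position from R * (hop count) to every anchor."""
    H = np.stack([hops_from(A, a) for a in anchors], axis=1)  # n x m hop matrix
    if np.isinf(H).any():
        raise ValueError("some node cannot reach every anchor")
    return np.array([multilaterate(anchor_pos, R * H[i]) for i in range(A.shape[0])])
```

With exact ranges, `multilaterate` returns the true position whenever the anchors are affinely independent (at least d + 1 of them, not all on a common hyperplane); with hop‑count ranges, its error inherits the R‑sized quantization of the hop distances.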

Contributions and significance

The work provides the first rigorous, probabilistic error bounds for localization based solely on connectivity, for both centralized and distributed settings. It relaxes several strong assumptions present in earlier analyses (e.g., linear growth of average degree, knowledge of pairwise detection probabilities, or availability of far‑field distance measurements). The results give network designers a concrete guideline: because the error scales like Rc / R, achieving a target localization accuracy ε requires a detection range R = Ω( Rc / ε ), where Rc ≈ (log n / n)^{1/d}.
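As a back‑of‑the‑envelope check, assume the leading term of the bound has the form C · Rc / R with an unspecified constant C (set to 1 below purely for illustration) and ignore the o(1) term. Requiring C · Rc / R ≤ ε gives R ≥ C · Rc / ε:

```python
import math


def required_range(n: int, d: int, eps: float, C: float = 1.0) -> float:
    """Smallest R with C * Rc / R <= eps, where Rc = (log n / n)^(1/d).

    C is a hypothetical placeholder for the dimension-dependent constant;
    the o(1) term of the bound is ignored.
    """
    Rc = (math.log(n) / n) ** (1.0 / d)
    return C * Rc / eps
```

For n = 1000, d = 2, ε = 0.1 and C = 1 this gives roughly 0.83, a sizable fraction of the unit square, which shows that at moderate n the constants and lower‑order terms matter as much as the asymptotic rate.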

Limitations and future directions

The analysis assumes a specific probabilistic detection model but does not quantify how different functional forms of the detection probability p(r), with r the inter‑node distance, affect the constants. Apart from the random detection failures, the connectivity observations are assumed noise‑free; extending the theory to incorporate measurement noise (e.g., RSSI fluctuations) remains open. The distributed algorithm relies on the existence of d + 1 perfectly known anchors; reducing this requirement or optimizing anchor placement are natural next steps. Finally, handling non‑uniform node distributions, dynamic networks, or hybrid schemes that combine occasional range measurements with connectivity would broaden the applicability of the theoretical framework.

In summary, the paper bridges a gap between empirical studies of range‑free localization and rigorous performance guarantees, demonstrating that even with the most limited information—binary connectivity—both centralized and distributed algorithms can recover node positions with provably diminishing error as the network becomes denser.
