Large-Margin Learning of Submodular Summarization Methods

In this paper, we present a supervised learning approach to training submodular scoring functions for extractive multi-document summarization. By taking a structured predicition approach, we provide a large-margin method that directly optimizes a convex relaxation of the desired performance measure. The learning method applies to all submodular summarization methods, and we demonstrate its effectiveness for both pairwise as well as coverage-based scoring functions on multiple datasets. Compared to state-of-the-art functions that were tuned manually, our method significantly improves performance and enables high-fidelity models with numbers of parameters well beyond what could reasonbly be tuned by hand.

💡 Research Summary

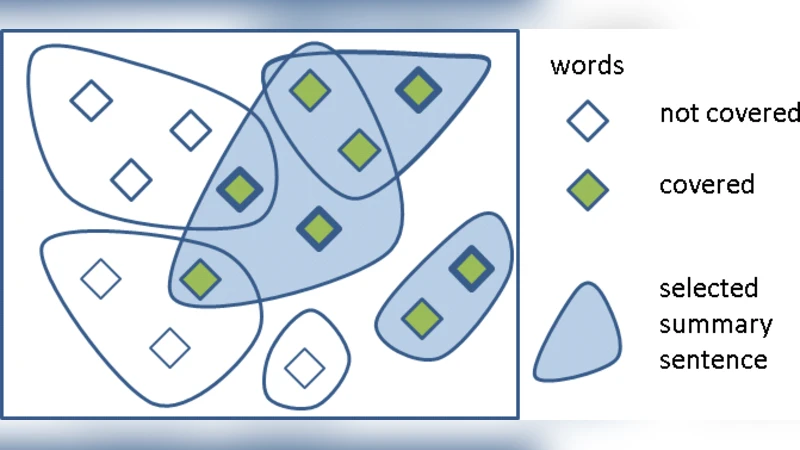

The paper introduces a supervised learning framework for training submodular scoring functions used in extractive multi‑document summarization. By casting summarization as a structured prediction problem, the authors employ a large‑margin approach based on structural Support Vector Machines (SVMs) to directly optimize a convex relaxation of the evaluation metric (ROUGE‑1 F‑score). Two representative submodular objective functions are considered: (1) a pairwise model that rewards similarity between selected summary sentences and the rest of the document set while penalizing redundancy among selected sentences, and (2) a coverage model that sums importance weights of distinct words covered by the summary. Both similarity σ(i,j) and word importance ω(v) are parameterized linearly as σₓ(i,j)=wᵀφᵖₓ(i,j) and ωₓ(v)=wᵀφᶜₓ(v), where φᵖₓ and φᶜₓ are rich feature vectors (e.g., TF‑IDF cosine, title word overlap, positional cues, word frequency statistics).

Training data consist of documents paired with multiple human abstracts Yᵢ. Since an exact extractive “gold” summary is unavailable, the authors define a target extractive summary yᵢ as the subset that maximizes ROUGE‑1 F‑score against Yᵢ (or equivalently minimizes a derived loss). The structural SVM learns a weight vector w by solving the quadratic program: minimize ½‖w‖² + C∑ξᵢ subject to w·Ψ(xᵢ,yᵢ) – w·Ψ(xᵢ,ŷ) ≥ Δ(Yᵢ, ŷ) – ξᵢ for all ŷ≠yᵢ, where Ψ encodes the submodular objective (pairwise or coverage) and Δ is a loss derived from ROUGE‑1 (Δ_R = max(0,1−ROUGE‑1_F)). Because the number of constraints is exponential in the number of sentences, a cutting‑plane algorithm is used: at each iteration the most violated constraint is found by solving ŷ = argmax_y w·Ψ(xᵢ,y)+Δ(Yᵢ,y), which can be done efficiently with the same greedy algorithm that provides a (1−1/e) approximation for monotone submodular maximization under a budget.

The greedy algorithm iteratively adds the sentence that yields the largest marginal gain per unit length (adjusted by a parameter r controlling density). This algorithm serves both for inference (producing a summary at test time) and for loss‑augmented inference during training.

Experiments on standard summarization benchmarks (DUC 2003/2004, TAC 2008, etc.) demonstrate that models with hundreds of parameters can be reliably trained from as few as 30–40 document clusters. The learned pairwise and coverage models consistently outperform manually tuned baselines, achieving ROUGE‑1 improvements of roughly 2–3 absolute points. Feature analysis shows that incorporating title overlap, positional information, and document‑wide word statistics significantly boosts performance. The r‑parameter is tuned in the range 0.5–0.8, confirming that emphasizing information density improves summary quality.

Key contributions of the work are:

- A unified large‑margin learning framework for any submodular summarization objective, eliminating the need for heuristic, separate tuning of similarity measures or coverage weights.

- Direct optimization of the evaluation metric via a structured loss, ensuring that the learned model aligns with the ultimate summarization goal.

- Empirical evidence that high‑dimensional submodular models can be trained with very limited supervision, making the approach practical for new domains.

Future directions suggested include extending the linear feature maps to nonlinear kernels or neural embeddings to capture richer interactions, incorporating multiple evaluation metrics (e.g., ROUGE‑2, human readability scores) into a multi‑task structural SVM, and exploring more sophisticated budget constraints (e.g., non‑linear length penalties). Overall, the paper establishes that large‑margin learning of submodular functions is a powerful and flexible method for building state‑of‑the‑art extractive summarizers.

Comments & Academic Discussion

Loading comments...

Leave a Comment