Applying statistical methods to text steganography

This paper presents a survey of text steganography methods used for hid- ing secret information inside some covertext. Widely known hiding techniques (such as translation based steganography, text generating and syntactic embed- ding) and detection are considered. It is shown that statistical analysis has an important role in text steganalysis.

💡 Research Summary

**

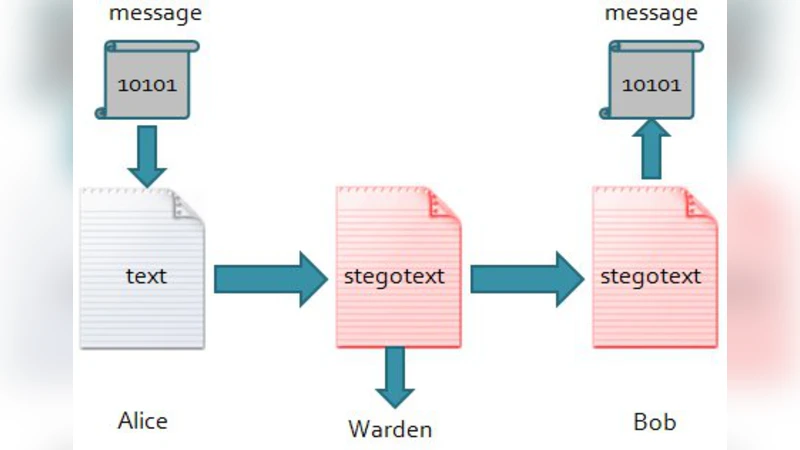

The paper provides a comprehensive survey of text steganography techniques and the statistical methods used to detect them. It begins with a brief introduction to steganography, citing Simmons’ “prisoners’ problem” as a conceptual foundation for covert communication. The authors categorize text‑based hiding methods into four main groups: syntactic, semantic, linguistically‑driven generation, and translation‑based steganography.

Syntactic approaches manipulate the physical formatting of text, such as inserting extra spaces, using misspelled words, or embedding special characters. While these methods are simple to implement, they are highly vulnerable to detection because abnormal spacing or spelling errors are easy for automated analyzers to flag.

Semantic methods rely on synonym substitution. The classic T‑Lex system replaces words with synonyms to encode bits, but it suffers from two major drawbacks: (1) the substituted synonyms often clash with proper English usage, and (2) they may be inconsistent with the genre or author’s style, producing awkward or ungrammatical sentences.

Linguistically‑driven generation methods employ context‑free grammars, Markov chains, or dedicated stegosystems such as Nicetext and Texto. These systems generate entire sentences or paragraphs where each word choice corresponds to a hidden bit. They achieve a high payload‑to‑cover ratio, but the generated text is frequently meaningless, which can raise suspicion when large volumes of such text are transmitted.

Translation‑based steganography exploits the inherent variability of machine translation. By selecting among multiple valid translations of the same source sentence, a hidden message can be encoded. This approach is particularly stealthy because the variations are natural to bilingual corpora and are difficult to distinguish from ordinary translation noise.

The second part of the paper focuses on steganalysis, i.e., the statistical attacks used to uncover hidden messages. The most common framework is supervised learning with Support Vector Machines (SVM). Researchers extract meta‑features such as word frequency distributions, space frequency, character‑level statistics, and first‑letter distributions. Using these features, SVM classifiers achieve detection accuracies exceeding 99 % for Nicetext and Texto, and around 84 %–99 % for Markov‑Chain‑Based systems, depending on the text length.

Another powerful technique is compression‑based detection. The authors argue that embedding a secret message disrupts the statistical regularity of the cover text, increasing its entropy and therefore reducing compression efficiency. Experiments show that when a 400‑byte or larger text is compressed, the presence of hidden data can be inferred with an accuracy above 99.98 %.

A third line of work uses entropy and word‑distance variance as features. By measuring the variability of distances between words in a sentence, the method captures the “noisier” structure typical of stegotext generated by translation‑based schemes. This approach yields detection rates of 97 %–99 % for texts ranging from 10 KB to 20 KB.

The paper also reports on error rates for various methods. Some semantic‑based attacks suffer from high false‑positive (38.6 %) and false‑negative (15.1 %) rates, limiting their practical applicability. These methods often require extensive linguistic rule databases and significant computational resources.

In the conclusion, the authors emphasize that the majority of effective steganalysis techniques are grounded in statistical analysis. Most embedding schemes—whether syntactic, semantic, generative, or translation‑based—can be detected with high probability when appropriate statistical features are examined. The paper calls for future research to develop lightweight detection models that maintain high accuracy on short text fragments and to design more robust embedding strategies that can resist the ever‑advancing statistical steganalysis toolbox.

Comments & Academic Discussion

Loading comments...

Leave a Comment