Learning Symbolic Models of Stochastic Domains

In this article, we work towards the goal of developing agents that can learn to act in complex worlds. We develop a probabilistic, relational planning rule representation that compactly models noisy, nondeterministic action effects, and show how such rules can be effectively learned. Through experiments in simple planning domains and a 3D simulated blocks world with realistic physics, we demonstrate that this learning algorithm allows agents to effectively model world dynamics.

💡 Research Summary

The paper addresses the challenge of enabling autonomous agents to learn and plan in stochastic, noisy, and physically realistic domains. It introduces a probabilistic relational planning rule language that extends traditional first‑order planning representations in three key ways. First, it incorporates deictic references—variables that denote objects not explicitly listed in an action’s parameter list (e.g., the object under the block being picked up or the currently held object). This allows a compact description of indirect effects while preserving relational abstraction. Second, the authors relax the classic frame assumption by adding an explicit noise component to each rule, thereby modeling rare or complex side‑effects that would otherwise violate the outcome assumption. Third, they embed a concept‑learning mechanism that can automatically invent new predicates (such as “stack height” or “top‑of‑stack”) from existing primitive predicates, greatly increasing expressive power without manual engineering.
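To make the rule language concrete, the following is a minimal Python sketch of one rule with a deictic reference and a noise outcome. It is not the authors' representation: the class names (`Outcome`, `Rule`), the helper functions, the single action parameter `X`, and all probabilities are hypothetical choices for illustration, and concept invention is not modeled.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional, Tuple

Literal = Tuple[str, ...]      # ("on", "a", "b") stands for on(a, b)
State = FrozenSet[Literal]

@dataclass(frozen=True)
class Outcome:
    prob: float
    add: Tuple[Literal, ...] = ()      # literal templates made true
    delete: Tuple[Literal, ...] = ()   # literal templates made false

@dataclass(frozen=True)
class Rule:
    action: str                        # action name; its one parameter is "X"
    deictic: Tuple[str, Literal]       # (variable, literal that defines it)
    context: Tuple[Literal, ...]       # literals that must hold in the state
    outcomes: Tuple[Outcome, ...]
    noise_prob: float                  # mass reserved for unmodeled effects

def ground(lit: Literal, binding: Dict[str, str]) -> Literal:
    """Substitute bound variables in the arguments of a literal template."""
    return (lit[0],) + tuple(binding.get(term, term) for term in lit[1:])

def bind(rule: Rule, state: State, arg: str) -> Optional[Dict[str, str]]:
    """Bind X to the action argument and the deictic variable to the unique
    object satisfying its defining literal; None if the rule does not apply."""
    var, template = rule.deictic
    objects = {term for lit in state for term in lit[1:]}
    matches = [o for o in sorted(objects)
               if ground(template, {"X": arg, var: o}) in state]
    if len(matches) != 1:              # a deictic reference must be unique
        return None
    binding = {"X": arg, var: matches[0]}
    if all(ground(lit, binding) in state for lit in rule.context):
        return binding
    return None

def predict(rule: Rule, state: State, arg: str) -> List[Tuple[float, Optional[State]]]:
    """Return (probability, next_state) pairs; next_state is None for noise."""
    binding = bind(rule, state, arg)
    if binding is None:
        return []
    dist: List[Tuple[float, Optional[State]]] = []
    for out in rule.outcomes:
        removed = {ground(l, binding) for l in out.delete}
        added = {ground(l, binding) for l in out.add}
        dist.append((out.prob, frozenset((state - removed) | added)))
    dist.append((rule.noise_prob, None))   # noise: next state left unspecified
    return dist

# Hypothetical rule: pickup(X), where Y is "the object under X".
pickup = Rule(
    action="pickup",
    deictic=("Y", ("on", "X", "Y")),
    context=(("clear", "X"), ("handempty",)),
    outcomes=(
        Outcome(0.8, add=(("holding", "X"), ("clear", "Y")),
                delete=(("on", "X", "Y"), ("clear", "X"), ("handempty",))),
        Outcome(0.1),                  # nothing changes
    ),
    noise_prob=0.1,
)
state = frozenset({("on", "a", "b"), ("on", "b", "table"),
                   ("clear", "a"), ("handempty",)})
dist = predict(pickup, state, "a")
```

Here `Y` never appears in the action's argument list; it is resolved from the state at prediction time, which is what lets one rule cover every block regardless of what it rests on.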

Learning is framed as a supervised search over rule structure and parameters. Given a dataset of state‑action‑next‑state triples, the algorithm searches the space of candidate rule structures, estimates each rule’s outcome probabilities by maximum likelihood (using an EM‑like procedure for noise parameters), and scores candidates with a Bayesian‑style objective that balances data likelihood against model complexity (penalizing the number of rules and literals). A greedy iterative scheme adds the highest‑scoring rule to the model, re‑estimates parameters, and repeats until a stopping criterion is met. Because only one rule is added per iteration, the search stays tractable despite the combinatorial space of first‑order rules, while still discovering compact, high‑accuracy models.
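The greedy, complexity-penalized search can be sketched in a much simplified propositional setting. The code below is an illustration under stated assumptions, not the paper's algorithm: rules are `(action, context)` pairs, the first matching rule covers an example, effects are opaque labels, the penalty weight `alpha` and the penalty form are hypothetical, and the EM‑like estimation of noise parameters is omitted.

```python
import math
from collections import Counter, defaultdict

def covering_rule(model, state, action):
    """Index of the first rule whose action and context match, else -1
    (the default rule). First-match coverage is a simplifying assumption."""
    for i, (act, ctx) in enumerate(model):
        if act == action and ctx <= state:
            return i
    return -1

def score(model, data, alpha=0.5):
    """Penalized log-likelihood: maximum-likelihood effect probabilities per
    rule, minus alpha times (number of rules + number of context literals)."""
    counts = defaultdict(Counter)
    for state, action, effect in data:
        counts[covering_rule(model, state, action)][effect] += 1
    loglik = 0.0
    for effects in counts.values():
        total = sum(effects.values())
        loglik += sum(n * math.log(n / total) for n in effects.values())
    penalty = sum(1 + len(ctx) for _, ctx in model)
    return loglik - alpha * penalty

def greedy_learn(candidates, data, alpha=0.5):
    """Repeatedly add the candidate that most improves the score; stop when
    no candidate improves it."""
    model = []
    best = score(model, data, alpha)
    while True:
        scored = [(score(model + [c], data, alpha), c)
                  for c in candidates if c not in model]
        if not scored:
            break
        top_score, top = max(scored, key=lambda pair: pair[0])
        if top_score <= best:
            break
        model.append(top)
        best = top_score
    return model, best

def make(n, props, effect):
    """n identical (state, action, effect) examples for a toy dataset."""
    return [(frozenset(props), "pickup", effect)] * n

# Toy data: "clear" matters for the pickup effect, "red" is irrelevant.
data = (make(4, {"clear", "red"}, "held") + make(4, {"clear"}, "held")
        + make(1, {"clear", "red"}, "nothing") + make(1, {"clear"}, "nothing")
        + make(5, {"red"}, "nothing") + make(5, set(), "nothing"))
candidates = [("pickup", frozenset({"clear"})), ("pickup", frozenset({"red"}))]
model, final_score = greedy_learn(candidates, data)
```

On this toy data the search keeps only the `clear` rule: the `red` rule adds penalty without improving the likelihood, which is the trade-off the scoring objective is meant to capture.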

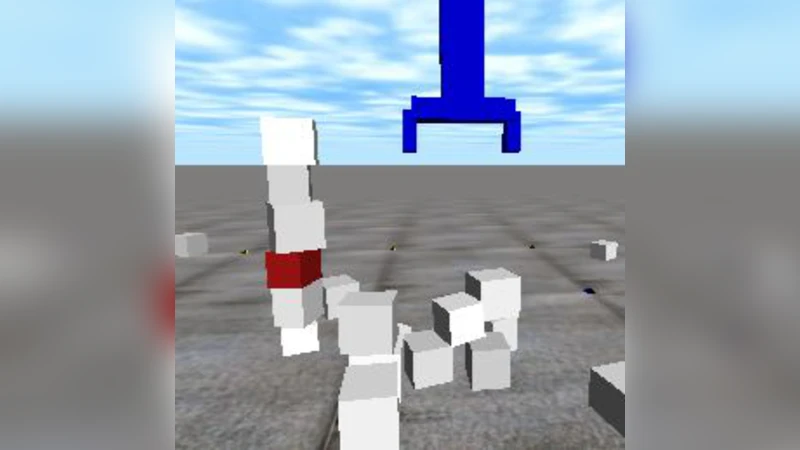

The authors evaluate the method on two domains. In a classic 2‑D block‑stacking environment, the learned rules achieve success rates comparable to or better than a standard PDDL planner, automatically capturing intuitive dynamics such as “a clear block can be picked”. In a more demanding 3‑D simulation built on the ODE physics engine, blocks vary in size, mass, and friction, and the robotic gripper is unreliable. Here, the learned rules correctly predict probabilities of outcomes such as a stack toppling when a tall block is placed, or a pick failing when the target block is not clear. When integrated into a simple forward‑search planner, the models enable the agent to achieve target configurations (e.g., placing a specific block at a desired location) with an 85 % success rate, a substantial improvement over a version of the planner that ignores the noise component (failure rate reduced by over 30 %).
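As an illustration of how a learned model can drive a forward-search planner, here is a minimal depth-limited expected-value lookahead. It is a sketch, not the planner used in the paper: the `model(state, action)` interface, the reward function, the discount `gamma`, and the toy domain are all hypothetical, and the model is assumed to return explicit next states (for example, by treating a noise outcome as leaving the state unchanged).

```python
def lookahead(state, depth, actions, model, reward, gamma=0.9):
    """Return (value, best_action) from a depth-limited search that averages
    over the model's predicted outcomes and maximizes over actions."""
    if depth == 0:
        return reward(state), None
    best_q, best_action = float("-inf"), None
    for action in actions:
        # Expected value of the successor states under the model.
        q = sum(p * lookahead(nxt, depth - 1, actions, model, reward, gamma)[0]
                for p, nxt in model(state, action))
        if q > best_q:
            best_q, best_action = q, action
    return reward(state) + gamma * best_q, best_action

# Toy domain (hypothetical): states 0..2, "step" advances with probability 0.8.
GOAL = 2

def toy_model(state, action):
    if action == "step":
        return [(0.8, min(state + 1, GOAL)), (0.2, state)]
    return [(1.0, state)]              # "wait" never changes the state

def toy_reward(state):
    return 1.0 if state == GOAL else 0.0

value, action = lookahead(0, 2, ["wait", "step"], toy_model, toy_reward)
```

Because the search weights each branch by its predicted probability, an action with an unreliable outcome is valued lower than a reliable one that reaches the same state, which is how outcome probabilities influence action choice.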

The paper highlights several important insights. The combination of relational abstraction, deictic referencing, and learned concepts yields a representation that is both compact and expressive enough for realistic physics. The explicit noise modeling prevents over‑fitting to rare events while still allowing the planner to reason about uncertainty. The Bayesian scoring function provides a principled bias toward simpler models, mitigating the risk of combinatorial explosion inherent in first‑order rule learning.

Limitations and future directions are also discussed. The current framework assumes fully observable states; extending it to partially observable settings would require integrating belief‑state tracking or filtering. Online or incremental learning is not addressed, yet would be essential for lifelong robotic operation. Multi‑agent scenarios and hierarchical planning architectures are identified as promising extensions.

In summary, the work demonstrates that probabilistic relational rules, enriched with deictic references, noise handling, and automatic concept invention, can be efficiently learned from interaction data and successfully employed for planning in stochastic, physics‑based domains. The approach narrows the gap between symbolic planning and realistic robot control, offering a scalable pathway toward autonomous agents that can adapt their world models from experience.
