Space Weather Prediction with Exascale Computing

Space weather refers to conditions on the Sun, in interplanetary space, and in the Earth's space environment that can influence the performance and reliability of space-borne and ground-based technological systems and can endanger human life or health. Adverse conditions in the space environment can disrupt satellite operations, communications, navigation, and electric power distribution grids, leading to a variety of socioeconomic losses. The conditions in space are also linked to the Earth's climate. The activity of the Sun affects the total amount of heat and light reaching the Earth and the amount of cosmic rays arriving in the atmosphere, a phenomenon linked with the amount of cloud cover and precipitation. Given these great impacts on society, space weather is attracting growing attention and is the subject of international efforts. We focus here on the steps necessary for achieving a true physics-based ability to predict the arrival and consequences of major space weather storms. Great disturbances in the space environment are common, but their precise arrival and impact on human activities vary greatly. Simulating such a system is a grand challenge, requiring computing resources at the limit of what is possible not only with current technology but also with the foreseeable future generations of supercomputers.

💡 Research Summary

The paper addresses the grand challenge of predicting space‑weather events with a physics‑based model that can capture the full multiscale dynamics of the Sun‑Earth system. Space weather, driven by the solar wind and its embedded magnetic field, can disrupt satellites, communications, navigation, power grids, and even influence climate through variations in solar irradiance and cosmic‑ray flux. Because the underlying plasma processes span an enormous range of spatial scales—from the Debye length (~100 m) up to the size of the magnetosphere (~100 Earth radii, >600 000 km)—and temporal scales—from microseconds to hours—conventional explicit particle‑in‑cell (PIC) methods become computationally infeasible.

The authors first quantify the resource requirements of a naïve explicit approach. To resolve the Debye length across a (100 R_E)³ domain with a grid spacing of 100 m would need roughly 6.3 × 10⁶ cells per dimension, i.e., about 2.5 × 10²⁰ cells in total. Assuming a modest particle count per cell, the required number of processing cores exceeds 10¹⁶, far beyond any present or near-future supercomputer.
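The cell-count arithmetic for the explicit case can be checked with a few lines of Python. This is a back-of-envelope sketch, not the authors' calculation: it assumes a mean Earth radius of 6.371 × 10⁶ m and a cubic domain 100 R_E on a side, and the function name is illustrative only.

```python
# Back-of-envelope check of the uniform explicit grid size.
# Assumption: mean Earth radius of 6.371e6 m; cubic domain of side 100 R_E.
R_E_M = 6.371e6  # metres


def cells_per_dimension(domain_m: float, spacing_m: float) -> float:
    """Number of grid cells needed along one axis of the domain."""
    return domain_m / spacing_m


domain_m = 100 * R_E_M          # 100 Earth radii
debye_spacing_m = 100.0         # grid spacing set by the Debye length

n_1d = cells_per_dimension(domain_m, debye_spacing_m)
n_total = n_1d ** 3             # the total is the cube of the per-axis count

print(f"cells per dimension: {n_1d:.2e}")
print(f"cells in total:      {n_total:.2e}")
```

The per-axis count follows directly from the domain size and the grid spacing; the total simply cubes it, which is why each factor of ten in resolution costs a factor of a thousand in cells.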

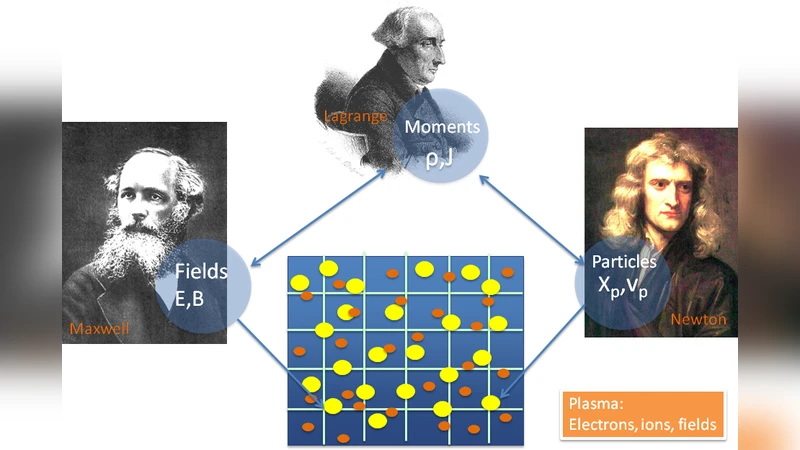

To overcome this barrier, the paper proposes the use of the Implicit Moment Method (IMM), originally developed at Los Alamos in the 1980s and later implemented in the iPic3D code by the authors’ group. IMM advances fields and particles simultaneously without the time‑lag inherent in explicit schemes, and it replaces the need to resolve the fastest electrostatic oscillations with a statistical “moment” description of the particle distribution. Consequently, the smallest required spatial resolution is set by the electron inertial length (~10 km) rather than the Debye length, reducing the grid size by two orders of magnitude in each direction. A uniform IMM grid still demands on the order of 6.3 × 10¹⁰ cores—much smaller than the explicit case but still beyond the reach of current petascale machines.
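The effect of relaxing the resolution requirement can be illustrated with a short calculation. The sketch below only counts grid cells; it makes no attempt to reproduce the core counts quoted above, which depend on cells per core and time-step assumptions not given here. The function name is hypothetical.

```python
# Sketch: how much a coarser grid spacing shrinks a uniform 3D grid.
# Only cell counts are compared; core counts are not modelled.


def cell_reduction(old_spacing_m: float, new_spacing_m: float, dims: int = 3):
    """Return (reduction per dimension, reduction in total cell count)."""
    per_dim = new_spacing_m / old_spacing_m
    return per_dim, per_dim ** dims


# Debye length (~100 m) versus electron inertial length (~10 km)
per_dim, total = cell_reduction(100.0, 10_000.0)

print(f"reduction per dimension: {per_dim:.0f}x")
print(f"reduction in 3D cells:   {total:.0e}x")
```

Two orders of magnitude per direction compound to six orders of magnitude in the number of cells for a three-dimensional grid.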

The final key innovation is the integration of Adaptive Mesh Refinement (AMR). The authors argue that the regions where electron-scale physics truly matters, such as reconnection layers, shock fronts, and boundary surfaces between distinct plasma populations, occupy only a thin shell (≈10 cells thick) around roughly 10 % of a spherical surface surrounding Earth. When only these localized zones are refined, the refined regions account for about 2 × 10⁶ cores, bringing the overall requirement to roughly 2 million cores. This figure aligns with the capabilities anticipated for the first exascale computers (≈10⁶ to 10⁷ cores).
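The geometric argument can be sketched numerically. The summary does not state the radius of the spherical surface, so the value of 10 R_E used below is purely an assumption for illustration, as are the function name and the 10 km cell size taken from the electron inertial length. The result is a cell count, not a core count.

```python
import math

# Sketch of the thin-shell estimate: refined cells cover a fraction of a
# spherical surface, a fixed number of cells thick.
# ASSUMPTIONS (not from the source): shell radius of 10 R_E, 10 km cells.
R_E_M = 6.371e6  # mean Earth radius in metres


def refined_shell_cells(radius_m: float, spacing_m: float,
                        surface_fraction: float, thickness_cells: int) -> float:
    """Cells in a shell covering `surface_fraction` of a sphere."""
    surface_cells = 4.0 * math.pi * radius_m ** 2 / spacing_m ** 2
    return surface_fraction * surface_cells * thickness_cells


spacing_m = 10_000.0                       # electron inertial length scale
shell = refined_shell_cells(10 * R_E_M, spacing_m,
                            surface_fraction=0.10, thickness_cells=10)
uniform = (100 * R_E_M / spacing_m) ** 3   # uniform grid at the same spacing

print(f"refined shell cells: {shell:.2e}")
print(f"uniform grid cells:  {uniform:.2e}")
print(f"shell / uniform:     {shell / uniform:.1e}")
```

Under these assumptions the refined shell holds several orders of magnitude fewer cells than a uniform grid at the same spacing, which is the qualitative point of the AMR argument; the exact ratio changes with the assumed radius.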

The paper presents a schematic simulation (Fig. 4) illustrating how the coarse grid captures the global magnetosphere while refined patches resolve the critical electron dynamics. It also discusses the current state of the art: ion‑scale simulations (≈10 km resolution) are already feasible on petascale systems using IMM, but fully predictive electron‑scale modeling awaits exascale resources.

In summary, the authors make a compelling case that a combination of implicit moment algorithms and targeted AMR can reduce the otherwise prohibitive computational cost of first‑principles space‑weather modeling to a level attainable on upcoming exascale platforms. This approach promises to replace empirical, parameter‑tuned models with a truly predictive framework, thereby improving the reliability of satellite operations, power‑grid management, and climate‑impact assessments.
