Lamarckism and mechanism synthesis: approaching constrained optimization with ideas from biology

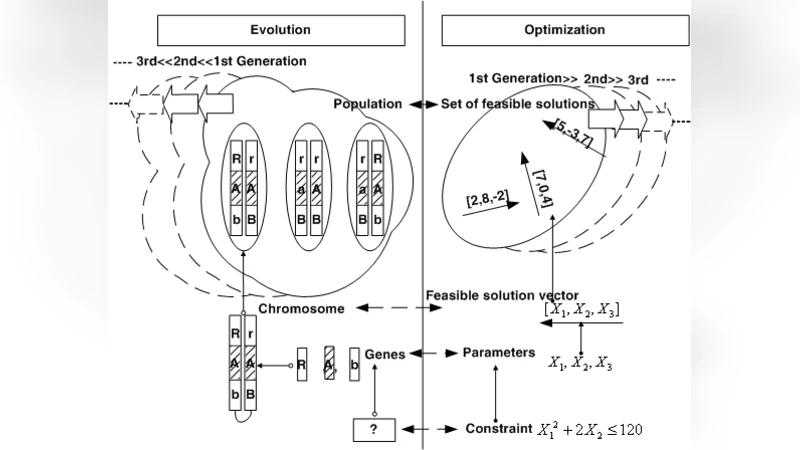

Nonlinear constrained optimization problems arise in many scientific fields. To exploit the computing power of modern machines, many mathematical models are also recast as optimization problems, and most of them carry constraints that must be handled. Borrowing concepts from biology, this study addresses constraint handling in the synthesis of a four-bar mechanism. By treating a constraint as a form of selection acting on the characteristics of a population, it proposes four new algorithms and gives a new interpretation of the penalty method. The algorithms are tested on three cases in differential-evolution-based programs. The results, better than or comparable to published ones, suggest that the presented algorithms and methodology may become common means of constraint handling in optimization problems.

Research Summary

The paper addresses the pervasive problem of handling nonlinear constraints in engineering optimization, using the synthesis of a four‑bar mechanism as a testbed. The authors argue that constraints can be interpreted as a biological “selection of characteristics” and, based on this analogy, they organize constraint handling into four strategy families that integrate directly into the evolutionary search process. One family (NSI) reinterprets the classic penalty method; the other three yield the four new algorithms ASI‑IG, ASI‑AG, LSI, and SSI, for five methods in total.

- Natural Selection Improvement (NSI): This is essentially the classic penalty method reformulated as a “natural law”. Constraint violations are penalized by adding large weighted terms to the objective function (equation 5). The approach is mathematically identical to traditional penalty functions but is presented with a biological metaphor.

- Artificial Selection Improvement (ASI): Two variants are introduced. ASI‑IG (initial‑generation) filters the initial population so that every chromosome already satisfies the constraints before the evolutionary loop begins. ASI‑AG (all‑generations) monitors each generation and replaces any infeasible individual with a feasible one, limiting the number of replacements (e.g., six per run) to control computational cost. This mimics artificial breeding, where only desirable traits are allowed to propagate.

- Limited Selection Improvement (LSI): Here the authors embed constraint satisfaction into the random‑generation process itself. A specialized pseudo‑random generator produces only those individuals that meet the Grashof condition, thereby guaranteeing a high feasibility rate while preserving stochastic diversity.

- Self‑Selective Improvement (SSI): Inspired by the (historically disproved) Lamarckian idea that an organism can acquire traits during its lifetime and pass them on, SSI does not create new individuals when a constraint is violated. Instead, it locally modifies the offending individual so that it satisfies the constraint. The paper provides concrete modification rules (G1‑G6), with rule G4 (equation 6) showing how to adjust link lengths to enforce the Grashof condition.
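The contrast between penalizing a violation (NSI) and repairing the offending individual (SSI) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's equation 5 or rule G4, neither of which is reproduced in this summary: the function names, the penalty weight, and the repair rule (shortening the longest link) are all hypothetical. The only standard fact used is the Grashof inequality s + l ≤ p + q, where s and l are the shortest and longest link lengths and p, q are the remaining two.

```python
def grashof_violation(links):
    """Return max(0, (s + l) - (p + q)); zero means the Grashof condition holds."""
    s, p, q, l = sorted(links)
    return max(0.0, (s + l) - (p + q))


def penalized_objective(error, links, weight=1e3):
    """Penalty-style handling (in the spirit of NSI).

    The weight is an assumed value; the paper's own weights are not given here.
    """
    return error + weight * grashof_violation(links)


def repair_links(links):
    """Repair-style handling (in the spirit of SSI).

    Hypothetical rule: shorten the longest link by exactly the excess,
    so that s + l == p + q afterwards. This is NOT the paper's rule G4.
    """
    links = list(links)
    excess = grashof_violation(links)
    if excess > 0.0:
        i_long = max(range(len(links)), key=lambda i: links[i])
        links[i_long] -= excess
    return links


# Example: 1 + 5 > 2 + 3, so the linkage violates the Grashof condition by 1.
links = [1.0, 2.0, 3.0, 5.0]
print(grashof_violation(links))               # 1.0
print(penalized_objective(0.5, links))        # 1000.5
print(repair_links(links))                    # [1.0, 2.0, 3.0, 4.0]
```

The penalty version leaves the individual unchanged and only worsens its score, whereas the repair version returns a modified, feasible individual that can be evaluated and passed on, which is the Lamarckian element of the analogy.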

All four strategies are embedded in a Differential Evolution (DE) framework. The authors test them on three benchmark synthesis problems previously solved in the literature, whose published results are referred to as CP and KKP. For each case, the same DE parameters (population size, mutation factor, crossover probability, strategy) are used to ensure a fair comparison.
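To make the embedding concrete, the following is a rough sketch of a DE/rand/1/bin loop with two constraint-handling hooks: a feasible initial population obtained by rejection sampling (loosely in the spirit of ASI‑IG) and a simple rule that discards infeasible trial vectors. It is not the authors' implementation. The bounds, parameter values, function names, and the toy objective are assumptions made for illustration; in the paper the objective is the path-tracking error of the mechanism.

```python
import random


def grashof_ok(x):
    """Grashof condition: shortest + longest <= sum of the other two links."""
    s, p, q, l = sorted(x)
    return s + l <= p + q


def sample_feasible(rng, lo, hi, max_tries=10_000):
    """Rejection sampling: redraw four link lengths until they are feasible."""
    for _ in range(max_tries):
        x = [rng.uniform(lo, hi) for _ in range(4)]
        if grashof_ok(x):
            return x
    raise RuntimeError("no feasible sample found")


def de_minimize(objective, rng, lo=1.0, hi=10.0,
                pop_size=20, F=0.5, CR=0.9, generations=100):
    """DE/rand/1/bin over four link lengths with feasibility checks."""
    pop = [sample_feasible(rng, lo, hi) for _ in range(pop_size)]
    cost = [objective(x) for x in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Three distinct donors, none equal to the target index i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(4)  # at least one gene comes from the mutant
            trial = []
            for j in range(4):
                if rng.random() < CR or j == j_rand:
                    v = pop[a][j] + F * (pop[b][j] - pop[c][j])
                    v = min(max(v, lo), hi)  # clip to the box bounds
                else:
                    v = pop[i][j]
                trial.append(v)
            if not grashof_ok(trial):
                continue  # infeasible trial is dropped; the parent survives
            t_cost = objective(trial)
            if t_cost <= cost[i]:
                pop[i], cost[i] = trial, t_cost
    best = min(range(pop_size), key=lambda i: cost[i])
    return pop[best], cost[best]


if __name__ == "__main__":
    # Toy stand-in objective: squared distance to a feasible target linkage.
    target = [2.0, 4.0, 5.0, 6.0]
    best, err = de_minimize(
        lambda x: sum((xi - ti) ** 2 for xi, ti in zip(x, target)),
        random.Random(0),
    )
    print(best, err)
```

Because the population starts feasible and infeasible trials never replace a parent, every individual stays feasible throughout the run. Swapping the `continue` for a repair step would turn the same loop into a repair-based variant.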

Results

- Case 1 (15 target points): SSI achieved the smallest final error (0.00414 mm) and the highest convergence rate (99.69 %). NSI, ASI‑IG, ASI‑AG, and LSI produced larger errors (0.00976 mm to 0.107 mm) that are still comparable to the literature.

- Case 2 (8 target points, tighter bounds): SSI again yielded the lowest error among the tested methods (1.354 × 10⁻⁴ mm). The CP reference reports 1.827 × 10⁻⁶ mm, which is numerically smaller but was obtained with a different setup, so the two figures are not directly comparable. All algorithms reached sub‑millimetre precision, with SSI consistently ranking first.

- Case 3 (18 target points, more complex path): SSI’s error (0.0476 mm) was the best among the five methods; the others ranged from 0.05 mm to 0.07 mm.

Statistical tables (Tables 1‑5) show that SSI achieved 100 % of runs with error < 0.1 mm across all cases, while LSI and ASI‑AG also displayed high reliability. The authors also report execution times, indicating that the additional logic for SSI, LSI, or ASI does not dramatically increase computational load.

Critical Assessment

The paper’s main contribution is the biological reinterpretation of constraint handling, which yields a coherent taxonomy of four strategies. SSI, in particular, demonstrates that “repair‑by‑modification” can be more effective than classic penalty or rejection schemes, especially when constraints are easy to correct locally (e.g., adjusting link lengths to satisfy Grashof). The experimental design is solid: the same DE engine, identical parameter settings, and direct comparison with established results provide credibility.

However, several limitations are evident:

- The constraint‑repair rules are handcrafted for the four‑bar problem; their applicability to other engineering domains (e.g., problems with mixed‑integer or logical constraints) is not demonstrated.

- Computational complexity analysis is missing; while run times are reported, a theoretical discussion of overhead introduced by SSI, LSI, or ASI would help assess scalability.

- The study is confined to DE; it remains unclear whether the proposed strategies would retain their advantage with other evolutionary algorithms such as GA, PSO, or CMA‑ES.

- The authors acknowledge that Lamarckism is scientifically discredited yet still use it as a metaphor; this may cause confusion for readers unfamiliar with the historical context.

Future Directions

To strengthen the impact, subsequent work should:

- Extend the repair‑by‑modification concept to a broader class of constraints (inequalities, integer variables, multi‑objective trade‑offs).

- Conduct a systematic complexity analysis and explore parallel implementations to gauge performance on large‑scale problems.

- Test the four strategies within alternative evolutionary frameworks to verify algorithm‑independence.

- Provide a more rigorous statistical treatment (e.g., hypothesis testing, confidence intervals) to substantiate claims of superiority.

Conclusion

By framing constraints as a form of biological selection, the authors introduce a unified set of four algorithms that integrate constraint handling directly into the evolutionary search. Experimental results on three four‑bar synthesis problems show that the Lamarckian‑style self‑selective improvement (SSI) consistently outperforms traditional penalty methods and matches or exceeds recent literature. While the approach is promising, its broader applicability and theoretical underpinnings require further investigation.
