High performance cosmological simulations on a grid of supercomputers

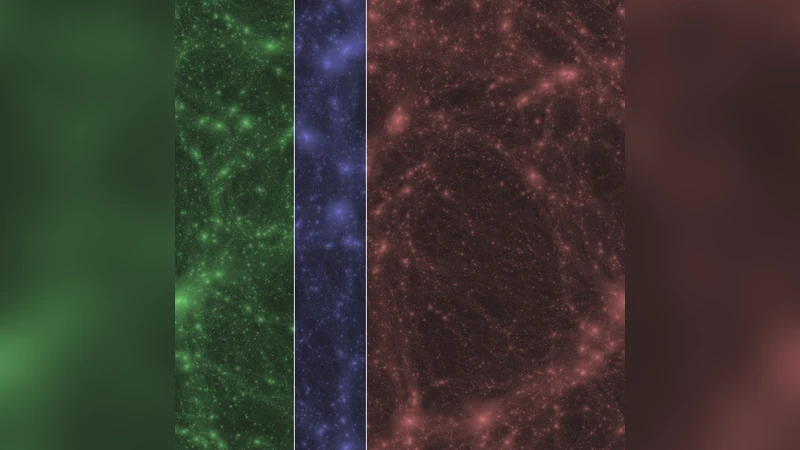

We present results from our cosmological N-body simulation which consisted of 2048x2048x2048 particles and ran distributed across three supercomputers throughout Europe. The run, which was performed as the concluding phase of the Gravitational Billion Body Problem DEISA project, integrated a 30 Mpc box of dark matter using an optimized Tree/Particle Mesh N-body integrator. We ran the simulation up to the present day (z=0), and obtained an efficiency of about 0.93 over 2048 cores compared to a single supercomputer run. In addition, we share our experiences on using multiple supercomputers for high performance computing and provide several recommendations for future projects.

💡 Research Summary

The paper reports on a production‑scale cosmological N‑body simulation that employed 2048³ particles (approximately 8.6 billion) to model a 30 Mpc dark‑matter volume. The simulation ran across three European supercomputers, Huygens (Netherlands, 1024 cores), Louhi (Finland, 512 cores), and HECToR (Scotland, 512 cores), for a total of 2048 cores. It used the SUSHI integrator, enhanced for wide‑area distributed execution.
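The particle and core counts above can be checked with a few lines of arithmetic. The sketch below is illustrative only; in particular, the even spread of particles over cores is an assumption made for the example, not something the summary states.

```python
# Figures quoted in the summary: 2048 particles per dimension, three sites.
PARTICLES_PER_DIM = 2048
CORES = {"Huygens": 1024, "Louhi": 512, "HECToR": 512}

total_particles = PARTICLES_PER_DIM ** 3
total_cores = sum(CORES.values())

# Assumption for illustration: particles are spread evenly over all cores.
particles_per_core = total_particles // total_cores

print(f"total particles:    {total_particles:,}")
print(f"total cores:        {total_cores}")
print(f"particles per core: {particles_per_core:,}")
for site, cores in CORES.items():
    # Share of the total core count hosted at each site.
    print(f"{site}: {cores / total_cores:.0%} of cores")
```

Under that even-spread assumption, each core would hold 2048² particles, since 2048³ particles divided by 2048 cores leaves two factors of 2048.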

Key technical contributions include: (1) Parallelization of the particle‑mesh (PM) component using the FFTW2 library with a one‑dimensional slab decomposition, eliminating the previous serial bottleneck; (2) A selective mesh‑cell broadcast scheme that transmits only cells containing particles, reducing inter‑site data volume proportionally to the number of sites; (3) An improved load‑balancing algorithm that accounts for both force‑calculation time and per‑node particle count, mitigating imbalance on heterogeneous architectures (IBM Power6 vs Cray XT4); (4) Adoption of the latest MPWide communication library, configured with 64 parallel TCP streams, 768 kB buffers, and a 10 MB/s pacing limit, to handle high‑latency, high‑bandwidth wide‑area networks.
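The first three contributions can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the SUSHI implementation: the function names (`slab_ranges`, `pack_nonempty_cells`, `unpack_cells`, `rebalance_shares`), the blend weight `alpha`, and the cost formula are all hypothetical, and no FFTW or MPWide calls are shown.

```python
from typing import Dict, List, Tuple


def slab_ranges(n_planes: int, n_ranks: int) -> List[Tuple[int, int]]:
    """1D slab decomposition: each rank gets a contiguous [start, stop) range of mesh planes."""
    base, extra = divmod(n_planes, n_ranks)
    ranges = []
    start = 0
    for rank in range(n_ranks):
        # The first `extra` ranks take one additional plane each.
        stop = start + base + (1 if rank < extra else 0)
        ranges.append((start, stop))
        start = stop
    return ranges


def pack_nonempty_cells(density: List[float]) -> Dict[int, float]:
    """Selective exchange: keep only cells that contain mass, keyed by flat cell index."""
    return {i: rho for i, rho in enumerate(density) if rho != 0.0}


def unpack_cells(packed: Dict[int, float], n_cells: int) -> List[float]:
    """Rebuild a dense mesh from the sparse {index: value} form; missing cells are zero."""
    dense = [0.0] * n_cells
    for i, rho in packed.items():
        dense[i] = rho
    return dense


def rebalance_shares(shares: List[float], force_times: List[float],
                     particle_counts: List[int], alpha: float = 0.5) -> List[float]:
    """Hypothetical rule: shrink the work share of ranks whose blended cost is high.

    cost_i = alpha * t_i / mean(t) + (1 - alpha) * n_i / mean(n)
    new_share_i is proportional to share_i / cost_i, normalised to sum to 1.
    """
    mean_t = sum(force_times) / len(force_times)
    mean_n = sum(particle_counts) / len(particle_counts)
    costs = [alpha * t / mean_t + (1.0 - alpha) * n / mean_n
             for t, n in zip(force_times, particle_counts)]
    raw = [s / c for s, c in zip(shares, costs)]
    total = sum(raw)
    return [r / total for r in raw]


# Small demonstration with made-up inputs.
ranges = slab_ranges(256, 3)                      # 256 mesh planes over 3 ranks
packed = pack_nonempty_cells([0.0, 1.5, 0.0, 0.0, 2.0])
restored = unpack_cells(packed, 5)
new_shares = rebalance_shares([1 / 3] * 3, [1.0, 1.0, 2.0], [100, 100, 100])
print(ranges, packed, restored, new_shares)
```

In the demonstration, the third rank takes twice as long on force calculation with the same particle count, so its share of the work shrinks while the other two grow equally. Blending the two measurements rather than using time alone is one plausible way to keep a slow node from being starved of particles entirely, but the weighting actually used by the authors is not given in the summary.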

Performance on a single site (Huygens) was first characterized. With 2048 cores the code achieved a peak of 3.31 × 10¹¹ tree‑force interactions per second and a sustained rate of 2.19 × 10¹¹ interactions per second. Communication overhead on the single site ranged from 5 % (512 cores) to 10–15 % (2048 cores), primarily due to particle migration and tree‑essential data exchange.
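As a quick check on these figures, the short calculation below derives the sustained-to-peak ratio and the per-core interaction rates from the two quoted numbers. It assumes both rates refer to the same 2048-core Huygens run, which is how the summary reads.

```python
# Rates quoted in the summary for the 2048-core single-site run.
PEAK_RATE = 3.31e11        # tree-force interactions per second (peak)
SUSTAINED_RATE = 2.19e11   # tree-force interactions per second (sustained)
CORES = 2048

# Fraction of the peak rate that was sustained over the run.
sustained_fraction = SUSTAINED_RATE / PEAK_RATE

# Average rate per core, assuming all 2048 cores contribute equally.
peak_per_core = PEAK_RATE / CORES
sustained_per_core = SUSTAINED_RATE / CORES

print(f"sustained / peak:   {sustained_fraction:.2f}")
print(f"peak per core:      {peak_per_core:.3e} interactions/s")
print(f"sustained per core: {sustained_per_core:.3e} interactions/s")
```

The sustained rate comes out at roughly two thirds of the peak.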

In the three‑site run, the mesh resolution was reduced from 512³ to 256³ cells, which slightly increased the range of tree interactions but decreased intra‑site communication. Overall, the per‑step wall‑clock time was only about 9 % longer than the single‑site reference. The wide‑area communication overhead remained below 10 % (≈15 s per step), with tree‑structure exchanges accounting for most of the cost and the parallelized PM step adding ≈2.5 s per step. For smaller problem sizes (512³ and 1024³ particles), the communication overhead was <20 % and 6.5 % respectively, demonstrating the scalability of the optimizations.
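The timing figures in this paragraph imply a few derived quantities, worked out below. This is a back-of-the-envelope sketch: it assumes the 2.5 s PM cost is counted within the 15 s wide-area overhead, and it treats the rounded percentages as exact.

```python
# Figures quoted in the summary for the three-site run.
SLOWDOWN = 0.09            # per-step time about 9% longer than single-site
WAN_OVERHEAD_S = 15.0      # wide-area communication per step, seconds
WAN_FRACTION_MAX = 0.10    # overhead stayed below this fraction of a step
PM_STEP_S = 2.5            # parallelized PM contribution per step, seconds

# Efficiency relative to the single-site run: t_single / t_distributed.
relative_efficiency = 1.0 / (1.0 + SLOWDOWN)

# If 15 s is less than 10% of a step, a step takes longer than this.
min_step_time_s = WAN_OVERHEAD_S / WAN_FRACTION_MAX

# Share of the wide-area overhead attributable to the PM step
# (assumes the 2.5 s is part of the 15 s).
pm_share = PM_STEP_S / WAN_OVERHEAD_S

print(f"relative efficiency: {relative_efficiency:.3f}")
print(f"step time exceeds:   {min_step_time_s:.0f} s")
print(f"PM share of WAN overhead: {pm_share:.1%}")
```

The derived efficiency of about 0.92 is close to, though not identical with, the figure of about 0.93 quoted in the abstract; the summary does not say how either number was rounded.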

Operational experience highlighted several non‑technical challenges. Coordinating network path reservations and simultaneous allocations across three national facilities required substantial administrative effort; the authors argue for automated reservation systems to reduce this burden. Software heterogeneity (different compilers, libraries, OS versions) made conventional grid middleware impractical, prompting a modular approach where site‑specific SUSHI binaries were linked with the user‑space MPWide library, avoiding the need for privileged installation. Disk I/O emerged as a secondary bottleneck during snapshot writes; distributing the application across sites could alleviate I/O pressure, though further study is needed.

The authors conclude that high‑performance cosmological simulations can be run efficiently across a network of supercomputers when the application and communication layers are carefully optimized. The added overhead of wide‑area networking is modest for a well‑tuned production code, and the political and administrative effort, while non‑trivial, is the primary barrier to broader adoption. Recommendations include: (i) development of automated, cross‑site resource reservation mechanisms; (ii) adoption of modular, site‑specific software stacks coupled via lightweight communication libraries; (iii) exploration of distributed I/O strategies; and (iv) leveraging such distributed infrastructures for multiscale or multiphysics problems that naturally decompose across heterogeneous resources.
