An Application Driven Analysis of the ParalleX Execution Model

Exascale systems, expected to emerge by the end of the next decade, will require the exploitation of billion-way parallelism at multiple hierarchical levels in order to achieve the desired sustained performance. The task of assessing future machine performance is approached by identifying the factors which currently challenge the scalability of parallel applications. It is suggested that the root cause of these challenges is the incoherent coupling between the current enabling technologies, such as Non-Uniform Memory Access of present multicore nodes equipped with optional hardware accelerators and the decades older execution model, i.e., the Communicating Sequential Processes (CSP) model best exemplified by the message passing interface (MPI) application programming interface. A new execution model, ParalleX, is introduced as an alternative to the CSP model. In this paper, an overview of the ParalleX execution model is presented along with details about a ParalleX-compliant runtime system implementation called High Performance ParalleX (HPX). Scaling and performance results for an adaptive mesh refinement numerical relativity application developed using HPX are discussed. The performance results of this HPX-based application are compared with a counterpart MPI-based mesh refinement code. The overheads associated with HPX are explored and hardware solutions are introduced for accelerating the runtime system.

💡 Research Summary

The paper addresses the looming scalability crisis that will confront Exascale systems, where billions of cores must be harnessed efficiently. It argues that the root of the problem lies in the mismatch between modern hardware trends—such as heterogeneous multicore nodes, NUMA memory, and optional accelerators—and the decades‑old Communicating Sequential Processes (CSP) model embodied by MPI, which relies on global barriers, static data distribution, and coarse‑grained synchronization. To overcome these limitations, the authors introduce ParalleX, a novel execution model that replaces CSP’s global, lock‑step paradigm with a message‑driven, fine‑grained, asynchronous approach. ParalleX is built around six core concepts: (1) an Active Global Address Space (AGAS) that gives every object a location‑independent global identifier, enabling dynamic load balancing and seamless data migration; (2) parcels, which are active messages that encapsulate remote function calls and support split‑phase transactions, thereby moving work to data rather than the opposite; (3) Local Control Objects (LCOs) such as futures and data‑flow constructs that provide lightweight synchronization without global barriers; (4) HPX‑threads, user‑mode, cooperatively scheduled lightweight threads that avoid costly OS context switches; (5) ParalleX processes, which decouple virtual processes from physical cores to expose multiple levels of parallelism within a single application; and (6) percolation, a mechanism for pre‑loading code and data to reduce runtime start‑up costs.

The authors present HPX (High Performance ParalleX), a C++‑based runtime that implements the ParalleX model. HPX’s modular architecture includes AGAS, a parcel port/handler subsystem, an action manager that decodes parcels into HPX‑threads, a configurable thread manager with global‑queue and local‑priority schedulers (supporting work‑stealing), a suite of LCOs (futures, data‑flow, mutexes, semaphores, etc.), and a performance‑counter framework for real‑time monitoring. While the current implementation uses TCP/IP for parcel transport, the authors note ongoing work to integrate high‑performance messaging libraries such as GASNet and Conduit.

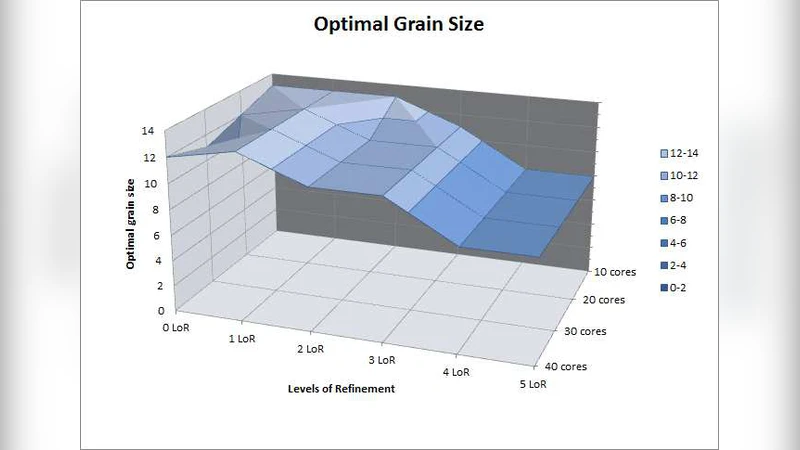

To evaluate the model, the paper ports an Adaptive Mesh Refinement (AMR) application from numerical relativity to HPX and compares it against a traditional MPI version. The AMR code resolves highly dynamic regions of spacetime near singularity formation, a workload that is notoriously scaling‑impaired due to irregular data access patterns and frequent synchronization. Benchmarks on clusters ranging from 256 to 4096 cores show that HPX consistently outperforms MPI, achieving up to 2× higher parallel efficiency and better strong‑scaling behavior. The authors attribute these gains to the elimination of global barriers, the ability of parcels to move computation to the data, and the overlap of communication and computation enabled by data‑flow LCOs.

A detailed overhead analysis reveals that the primary cost contributors in HPX are parcel serialization/deserialization, LCO management, and thread‑scheduling bookkeeping. The paper proposes hardware acceleration strategies—such as FPGA‑based parcel engines and NIC offload for AGAS lookups—to mitigate these overheads. The authors also discuss how HPX’s component‑based design permits dynamic loading of application‑specific modules, facilitating rapid experimentation with new algorithms and hardware features.

In conclusion, the work demonstrates that ParalleX and its HPX implementation provide a viable path toward the fine‑grained, asynchronous execution model required for Exascale computing. By decoupling synchronization from global barriers, exposing a global address space, and leveraging lightweight threads and active messages, ParalleX can unlock the massive concurrency present in modern scientific codes. Future work will focus on refining parcel transport, auto‑tuning LCO policies, and extending the model to a broader set of scientific domains, thereby solidifying ParalleX as a foundational software layer for the next generation of high‑performance computers.

Comments & Academic Discussion

Loading comments...

Leave a Comment