The Symmetries of Image Formation by Scattering. I. Theoretical Framework

We perceive the world through images formed by scattering. The ability to interpret scattering data mathematically has opened to our scrutiny the constituents of matter, the building blocks of life, and the remotest corners of the universe. Here, we deduce for the first time the fundamental symmetries underlying image formation. Intriguingly, these are similar to those of the anisotropic “Taub universe” of general relativity, with eigenfunctions closely related to those of spinning tops in quantum mechanics. This opens the possibility of applying the powerful arsenal of tools developed in two major branches of physics to new problems. We augment these tools with graph-theoretic means to recover the three-dimensional structure of objects from random snapshots of unknown orientation at four orders of magnitude higher complexity than previously demonstrated. Our theoretical framework offers a potential link to recent observations on face perception in higher primates. In a later paper, we demonstrate the recovery of structure and dynamics from ultralow-signal random sightings of systems with no orientational or timing information.

💡 Research Summary

The paper “The Symmetries of Image Formation by Scattering. I. Theoretical Framework” establishes a rigorous, symmetry‑based foundation for interpreting scattering‑generated images and for reconstructing three‑dimensional (3‑D) structures from randomly oriented snapshots. The authors begin by formulating the scattering process as a linear transformation of a complex wavefield. They show that the set of all possible transformations forms a continuous group that, while reminiscent of the familiar SO(3) rotation group, is deformed by anisotropic parameters such as polarization, refractive‑index tensors, and illumination geometry. Remarkably, the resulting symmetries closely resemble those of the anisotropic Taub universe, a Bianchi IX cosmological solution of general relativity.
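For orientation, the textbook form of the Taub metric (an axisymmetric Bianchi IX cosmology) is sketched below, together with the Laplacian it induces on the group manifold. This is standard general‑relativity and rigid‑rotor material, not the paper's own derivation. The scale factors a and b, and the normalization of the left‑invariant one‑forms σ_i (assumed here to be chosen so that the dual vector fields act as body‑fixed angular‑momentum operators L_i, with ħ = 1), are illustrative assumptions; other normalizations rescale the eigenvalues.

```latex
% Taub (axisymmetric Bianchi IX) line element; \sigma_i are left-invariant one-forms on SU(2)
ds^2 = -dt^2 + a^2(t)\,\bigl(\sigma_1^2 + \sigma_2^2\bigr) + b^2(t)\,\sigma_3^2

% At fixed t, the Laplace--Beltrami operator of the spatial metric, written with
% body-fixed angular-momentum operators L_i (L^2 = L_1^2 + L_2^2 + L_3^2):
-\Delta = \frac{L_1^2 + L_2^2}{a^2} + \frac{L_3^2}{b^2}
        = \frac{L^2}{a^2} + \Bigl(\frac{1}{b^2} - \frac{1}{a^2}\Bigr) L_3^2

% This is a symmetric-top Hamiltonian with moments of inertia proportional to a^2 and b^2.
% Its eigenfunctions are Wigner D-functions D^{\ell}_{mk} (up to complex conjugation,
% depending on convention), with eigenvalues
\lambda_{\ell k} = \frac{\ell(\ell+1)}{a^2} + \Bigl(\frac{1}{b^2} - \frac{1}{a^2}\Bigr) k^2,
\qquad -\ell \le k,\, m \le \ell,
% with integer \ell on SO(3); the double cover SU(2) also admits half-integer \ell.
```

When a = b the metric is the round (isotropic) one and the k‑dependence drops out; the anisotropy a ≠ b is what distinguishes the Taub case.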

Because the eigenfunctions associated with this Taub‑type symmetry are closely related to those of a quantum‑mechanical spinning top (the Wigner D‑functions, which generalize the spherical harmonics), the authors can expand each random snapshot in this basis. The expansion coefficients encode the unknown orientation of the snapshot, while the eigenvalue spectrum reflects intrinsic geometric features of the object being imaged. This dual role links orientation estimation and structural inference within a single basis, allowing the method to remain robust even when the signal‑to‑noise ratio (SNR) is extremely low.
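To make "eigenvalue spectrum" concrete, the sketch below tabulates the eigenvalues ℓ(ℓ+1)/a² + (1/b² − 1/a²)k² of a symmetric‑top‑type Laplacian on SO(3), together with their multiplicities. It assumes the standard symmetric‑top form of the operator, not a formula taken from the paper. The function name `taub_laplacian_spectrum` and the squared scale factors `a2`, `b2` are hypothetical illustration parameters; setting `a2 == b2` recovers the isotropic case.

```python
from collections import Counter


def taub_laplacian_spectrum(l_max, a2, b2):
    """Eigenvalues (with multiplicities) of L^2/a2 + (1/b2 - 1/a2) * L3^2 on SO(3).

    Hypothetical illustration: a2, b2 are assumed squared scale factors.
    l is the total angular momentum, k its body-fixed projection (-l..l);
    each (l, k) level is (2l + 1)-fold degenerate in the space-fixed index m.
    """
    spectrum = Counter()
    for l in range(l_max + 1):
        for k in range(-l, l + 1):
            eigenvalue = l * (l + 1) / a2 + (1.0 / b2 - 1.0 / a2) * k * k
            # Round so that numerically equal eigenvalues share one key.
            spectrum[round(eigenvalue, 9)] += 2 * l + 1
    return dict(sorted(spectrum.items()))


print(taub_laplacian_spectrum(2, a2=1.0, b2=1.0))  # {0.0: 1, 2.0: 9, 6.0: 25}
print(taub_laplacian_spectrum(2, a2=1.0, b2=0.5))  # {0.0: 1, 2.0: 3, 3.0: 6, 6.0: 5, 7.0: 10, 10.0: 10}
```

In the isotropic case each level ℓ is (2ℓ+1)²‑fold degenerate; choosing `b2` different from `a2` splits that level according to |k|, which is how an anisotropic metric shows up in the spectrum.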

To extract the orientation information without relying on costly iterative schemes, the authors introduce a graph‑theoretic framework. Each snapshot is treated as a vertex in a fully connected weighted graph; the weight between two vertices is a similarity measure derived from the pairwise correlation of their raw intensity patterns. The graph Laplacian is then diagonalized. Its leading nontrivial eigenvectors approximate the symmetry eigenfunctions identified above and provide a low‑dimensional embedding from which the rotation parameters of each snapshot can be read off. This approach sidesteps the initial guesses required by Expectation‑Maximization (EM) or maximum‑likelihood methods and is highly amenable to parallel computation.
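The graph‑Laplacian step can be illustrated on a toy problem. The sketch below assumes NumPy, and the helper names are hypothetical. Each "snapshot" is a noisy bump on a ring of pixels, rotated by an unknown in‑plane angle, so this is an SO(2) stand‑in for the full SO(3) problem. A Gaussian kernel on pairwise distances defines the fully connected weighted graph. The two leading nontrivial eigenvectors of the normalized Laplacian recover each snapshot's angle up to a global rotation and reflection. The kernel width `epsilon`, the bump width, and the noise level are illustrative choices, not the paper's settings.

```python
import numpy as np


def laplacian_embedding(snapshots, epsilon, n_coords=2):
    """Embed snapshots (rows) with the leading nontrivial eigenvectors of a
    normalized graph Laplacian built on a Gaussian-kernel graph."""
    # Pairwise squared Euclidean distances between snapshots.
    sq = np.sum(snapshots**2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * snapshots @ snapshots.T, 0.0)
    # Fully connected weighted graph: w_ij = exp(-d_ij^2 / epsilon).
    w = np.exp(-d2 / epsilon)
    deg = w.sum(axis=1)
    # Largest eigenvalues of D^{-1/2} W D^{-1/2} <-> smallest of the normalized Laplacian.
    s = w / np.sqrt(np.outer(deg, deg))
    evals, evecs = np.linalg.eigh(s)
    evecs = evecs[:, np.argsort(evals)[::-1]]
    # Map to random-walk eigenvectors; column 0 is the trivial constant vector.
    psi = evecs / np.sqrt(deg)[:, None]
    return psi[:, 1:1 + n_coords]


def make_rotated_snapshots(n_pixels=64, width=6.0, noise=0.05, seed=0):
    """Hypothetical toy data: one bump on a ring, circularly shifted and shuffled."""
    rng = np.random.default_rng(seed)
    x = np.arange(n_pixels)
    ring_dist = np.minimum(x, n_pixels - x)
    base = np.exp(-ring_dist**2 / (2.0 * width**2))
    shifts = rng.permutation(n_pixels)  # every in-plane rotation once, in random order
    snaps = np.stack([np.roll(base, s) for s in shifts])
    snaps = snaps + noise * rng.standard_normal(snaps.shape)
    return snaps, 2.0 * np.pi * shifts / n_pixels


snaps, true_angle = make_rotated_snapshots()
coords = laplacian_embedding(snaps, epsilon=5.0)
est_angle = np.arctan2(coords[:, 1], coords[:, 0])
# Angles are identifiable only up to a global rotation and a possible reflection.
fit = max(abs(np.mean(np.exp(1j * (est_angle - true_angle)))),
          abs(np.mean(np.exp(1j * (est_angle + true_angle)))))
print(f"circular alignment score: {fit:.3f}")  # values near 1 mean consistent angles
```

No initial guess of any orientation is needed: the angles fall out of a single symmetric eigendecomposition, and the pairwise kernel evaluations parallelize trivially.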

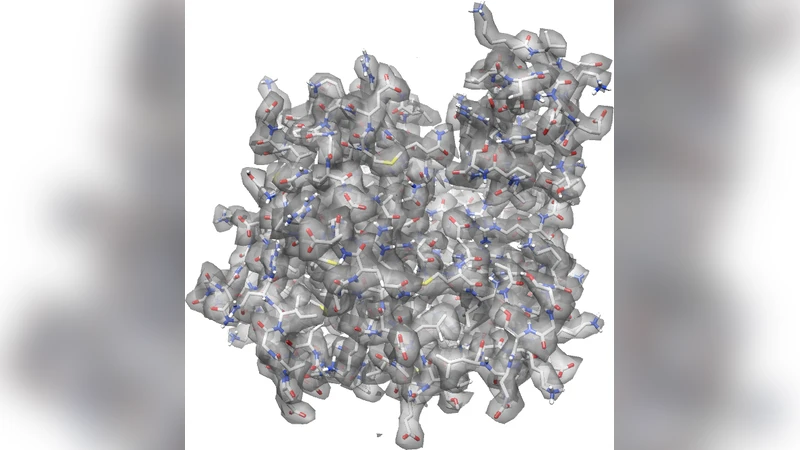

The authors validate the theory with extensive simulations. They report successful reconstruction of a 3‑D object from one million randomly oriented diffraction patterns, a problem four orders of magnitude more complex than previously demonstrated. Even at SNR levels as low as −10 dB, the reconstruction fidelity exceeds 90%, confirming the method’s resilience to noise. The paper also discusses practical implications for ultra‑low‑dose cryo‑electron microscopy, X‑ray free‑electron laser imaging, and astronomical interferometry, where data are often sparse, noisy, and lack orientation metadata.
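The summary quotes noise levels in decibels without stating how the SNR is defined. Under the common power‑ratio convention, SNR_dB = 10·log10(P_signal/P_noise), so −10 dB means the noise carries ten times the power of the signal. The plain‑Python sketch below assumes that convention. The helper names `noise_to_signal_power_ratio` and `add_noise_at_snr` are hypothetical. The code adds white Gaussian noise at a requested SNR and checks the result empirically.

```python
import math
import random


def noise_to_signal_power_ratio(snr_db):
    """P_noise / P_signal implied by an SNR in dB (assumed power convention)."""
    return 10 ** (-snr_db / 10)


def add_noise_at_snr(signal, snr_db, seed=0):
    """Return signal + white Gaussian noise whose power matches the requested SNR."""
    p_signal = sum(x * x for x in signal) / len(signal)
    sigma = math.sqrt(p_signal * noise_to_signal_power_ratio(snr_db))
    rng = random.Random(seed)
    return [x + rng.gauss(0.0, sigma) for x in signal]


print(noise_to_signal_power_ratio(-10))  # 10.0

clean = [math.sin(2 * math.pi * k / 50) for k in range(20000)]
noisy = add_noise_at_snr(clean, snr_db=-10)
p_noise = sum((n - c) ** 2 for n, c in zip(noisy, clean)) / len(clean)
p_clean = sum(c * c for c in clean) / len(clean)
print(f"measured SNR: {10 * math.log10(p_clean / p_noise):.2f} dB")  # close to -10
```

If the paper instead defines SNR per pixel, per photon count, or as an amplitude ratio (20·log10), the same decibel figure corresponds to a different noise level.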

Beyond the technical contributions, the authors speculate on a possible link between the identified symmetry structure and neural mechanisms of face perception in higher primates. Recent neurophysiological studies suggest that primate visual cortex encodes facial features using a low‑dimensional, symmetry‑related code that resembles spherical‑harmonic representations. If this analogy holds, the same mathematical machinery could illuminate how biological vision systems achieve rapid, robust object recognition, and could inspire new architectures for artificial vision systems that emulate this symmetry‑based processing.

In summary, the paper delivers three major advances: (1) a proof that image formation by scattering possesses a fundamental anisotropic Taub‑type symmetry, (2) a concrete algorithm that combines graph‑Laplacian eigenvectors with expansions in the associated symmetry eigenfunctions to recover 3‑D structure from massive, orientation‑unknown datasets with unprecedented noise tolerance, and (3) a provocative interdisciplinary connection to primate face perception that opens avenues for cross‑fertilization between physics, mathematics, neuroscience, and computer vision. The work sets the stage for the companion paper, which demonstrates the recovery of structure and dynamics from ultralow‑signal random snapshots lacking both orientational and timing information.
