Empirical study of sensor observation services server instances

The number of Sensor Observation Service (SOS) instances available online has been increasing in the last few years. The SOS specification standardises interfaces and data formats for exchanging sensor-related information between information providers and consumers. SOS, in conjunction with other specifications in the Sensor Web Enablement initiative, attempts to realise the Sensor Web vision, a worldwide system where sensor networks of any kind are interconnected. In this paper we present an empirical study of actual instances of servers implementing SOS. The study focuses mostly on which parts of the specification are more frequently included in real implementations, and how exchanged messages follow the structure defined by XML Schema files. Our findings can be of practical use when implementing servers and clients based on the SOS specification, as they can be optimized for common scenarios.

💡 Research Summary

This paper presents an empirical investigation of publicly available Sensor Observation Service (SOS) server instances, focusing on how closely real‑world implementations follow the OGC SOS specification. The authors identified 56 SOS servers that claim compliance with version 1.0.0, collected XML responses for the three core operations (GetCapabilities, DescribeSensor, GetObservation), and evaluated them against the official XML Schemas.
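As a rough illustration of the data collection step described above, the following minimal sketch builds a KVP-encoded GetCapabilities request URL. The endpoint `http://example.org/sos` and the helper name `get_capabilities_url` are hypothetical and do not come from the paper; the `service`, `request` and `AcceptVersions` parameters follow the OWS Common key-value convention, and individual servers may expect slightly different parameters.

```python
from urllib.parse import urlencode


def get_capabilities_url(endpoint: str, version: str = "1.0.0") -> str:
    """Build a KVP GetCapabilities URL for an SOS endpoint (illustrative helper)."""
    params = {
        "service": "SOS",
        "request": "GetCapabilities",
        "AcceptVersions": version,
    }
    # Pick a separator that works whether or not the endpoint already has a query.
    if endpoint.endswith(("?", "&")):
        separator = ""
    elif "?" in endpoint:
        separator = "&"
    else:
        separator = "?"
    return endpoint + separator + urlencode(params)


# Hypothetical endpoint, used only to show the shape of the resulting URL.
url = get_capabilities_url("http://example.org/sos")
print(url)
```

The returned string could then be fetched with any HTTP client, for example `urllib.request.urlopen`, and the response stored for later validation.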

Validation results reveal that 34 of the 56 capabilities documents (about 61 %) are non‑conforming. The most frequent validation errors are element‑name mismatches (cvc‑complex‑type.2.4.a) and datatype violations (cvc‑datatype‑valid). A typical example is the substitution of the standard element “sos:Time” with “sos:eventTime”, likely inherited from earlier draft versions. Other common problems include malformed time strings, IDs containing whitespace or colons, and duplicate ID values. Most of these errors are syntactic and rarely prevent parsing, but they impose additional effort on client developers.
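The ID problems mentioned above (whitespace, colons, duplicates) can be detected without a full schema validator. The sketch below is not the authors' validation procedure: it assumes IDs are carried in `gml:id` attributes in the GML 3.1.1 namespace, and it uses an ASCII-only approximation of the XML `NCName` rule that `xs:ID` values must satisfy. The function name `check_ids` and the sample document are illustrative.

```python
import re
import xml.etree.ElementTree as ET
from collections import Counter

GML_ID = "{http://www.opengis.net/gml}id"  # assumption: GML 3.1.1 namespace
# ASCII-only approximation of NCName: no colon, no whitespace, no leading digit.
NCNAME = re.compile(r"[A-Za-z_][A-Za-z0-9_.\-]*")


def check_ids(xml_text):
    """Return (invalid_ids, duplicate_ids) found in gml:id attributes."""
    root = ET.fromstring(xml_text)
    ids = [el.get(GML_ID) for el in root.iter() if el.get(GML_ID) is not None]
    invalid = [value for value in ids if not NCNAME.fullmatch(value)]
    duplicates = sorted(value for value, n in Counter(ids).items() if n > 1)
    return invalid, duplicates


# Made-up sample exhibiting the three problems described in the text.
sample = """<root xmlns:gml="http://www.opengis.net/gml">
  <gml:Point gml:id="station 1"/>
  <gml:Point gml:id="urn:x:2"/>
  <gml:Point gml:id="p3"/>
  <gml:Point gml:id="p3"/>
</root>"""

invalid, duplicates = check_ids(sample)
print(invalid, duplicates)
```

A lenient client might log such findings and continue, since the text notes that these errors rarely prevent parsing.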

The study then examines which operations and profiles are actually supported. All servers implement the mandatory GetCapabilities request via HTTP GET, and most also expose it via POST. GetObservation is widely available, with a clear preference for POST because the specification does not define a KVP encoding for this operation. However, 10 servers omit DescribeSensor entirely from their capabilities, indicating that sensor metadata exposure is not universally regarded as essential. Transactional operations (RegisterSensor, InsertObservation) appear in only two servers, and Enhanced profile operations (e.g., GetFeatureOfInterest, GetResult) are sparsely implemented, suggesting that the richer feature set envisioned by SOS is rarely needed in practice or is considered too costly to maintain.
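A client can discover which operations and HTTP bindings a server advertises by reading the `ows:OperationsMetadata` section of the capabilities document. The following sketch assumes the OWS Common 1.1 namespace and a made-up capabilities fragment; `operation_bindings` is an illustrative helper, not part of any SOS library.

```python
import xml.etree.ElementTree as ET

NS = {"ows": "http://www.opengis.net/ows/1.1"}  # assumption: OWS Common 1.1


def operation_bindings(capabilities_xml):
    """Map each advertised operation name to the set of HTTP methods it lists."""
    root = ET.fromstring(capabilities_xml)
    bindings = {}
    for op in root.iterfind(".//ows:OperationsMetadata/ows:Operation", NS):
        methods = set()
        if op.find("ows:DCP/ows:HTTP/ows:Get", NS) is not None:
            methods.add("GET")
        if op.find("ows:DCP/ows:HTTP/ows:Post", NS) is not None:
            methods.add("POST")
        bindings[op.get("name")] = methods
    return bindings


# Made-up fragment: GetCapabilities via GET and POST, GetObservation via POST only.
capabilities = """<sos:Capabilities version="1.0.0"
    xmlns:sos="http://www.opengis.net/sos/1.0"
    xmlns:ows="http://www.opengis.net/ows/1.1"
    xmlns:xlink="http://www.w3.org/1999/xlink">
  <ows:OperationsMetadata>
    <ows:Operation name="GetCapabilities">
      <ows:DCP><ows:HTTP>
        <ows:Get xlink:href="http://example.org/sos?"/>
        <ows:Post xlink:href="http://example.org/sos"/>
      </ows:HTTP></ows:DCP>
    </ows:Operation>
    <ows:Operation name="GetObservation">
      <ows:DCP><ows:HTTP>
        <ows:Post xlink:href="http://example.org/sos"/>
      </ows:HTTP></ows:DCP>
    </ows:Operation>
  </ows:OperationsMetadata>
</sos:Capabilities>"""

bindings = operation_bindings(capabilities)
print(bindings)
print("DescribeSensor" in bindings)
```

Checking for a missing key, as in the last line, is one way a client could guard against servers that do not advertise DescribeSensor.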

Filter support is another focal point. Only 16 capabilities documents explicitly list supported filters, yet most servers implicitly allow the basic spatial BBOX filter and the temporal TM_During filter. The authors catalogued the exact filter operands (e.g., gml:Envelope, gml:Point) and operators, noting that scalar filters (Between, EqualTo, etc.) and identifier filters (eID, fID) are also present in a subset of implementations. This pattern reflects a pragmatic approach: providing enough filtering capability to limit query size without implementing the full filter language defined by OGC.
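To see which filter operators a capabilities document explicitly lists, a client could scan for operator elements in the OGC Filter namespace. The sketch below makes two assumptions that should be checked against the schemas: that operators appear as elements whose local name ends in `Operator`, and that the operator name is given either in a `name` attribute or as element text. The sample fragment is invented for illustration.

```python
import xml.etree.ElementTree as ET

OGC = "{http://www.opengis.net/ogc}"  # assumption: OGC Filter namespace


def advertised_operators(capabilities_xml):
    """Group advertised operator names by operator element type."""
    root = ET.fromstring(capabilities_xml)
    found = {}
    for el in root.iter():
        if el.tag.startswith(OGC) and el.tag.endswith("Operator"):
            kind = el.tag[len(OGC):]
            # The name may be an attribute or the element text, depending on type.
            name = el.get("name") or (el.text or "").strip()
            if name:
                found.setdefault(kind, set()).add(name)
    return found


# Invented fragment listing one spatial, one temporal and one scalar operator.
filter_caps = """<sos:Capabilities
    xmlns:sos="http://www.opengis.net/sos/1.0"
    xmlns:ogc="http://www.opengis.net/ogc">
  <sos:Filter_Capabilities>
    <ogc:Spatial_Capabilities><ogc:SpatialOperators>
      <ogc:SpatialOperator name="BBOX"/>
    </ogc:SpatialOperators></ogc:Spatial_Capabilities>
    <ogc:Temporal_Capabilities><ogc:TemporalOperators>
      <ogc:TemporalOperator name="TM_During"/>
    </ogc:TemporalOperators></ogc:Temporal_Capabilities>
    <ogc:Scalar_Capabilities><ogc:ComparisonOperators>
      <ogc:ComparisonOperator>Between</ogc:ComparisonOperator>
    </ogc:ComparisonOperators></ogc:Scalar_Capabilities>
  </sos:Filter_Capabilities>
</sos:Capabilities>"""

operators = advertised_operators(filter_caps)
print(operators)
```

Because many servers accept BBOX and TM_During without listing them, an empty result from such a scan does not necessarily mean that filtering is unsupported.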

Regarding response formats, the dominant encoding for observations is O&M 1.0.0, the default defined by SOS. Alternative encodings such as SensorML are rarely used, indicating that implementers tend to stick with the simplest, most widely supported format.

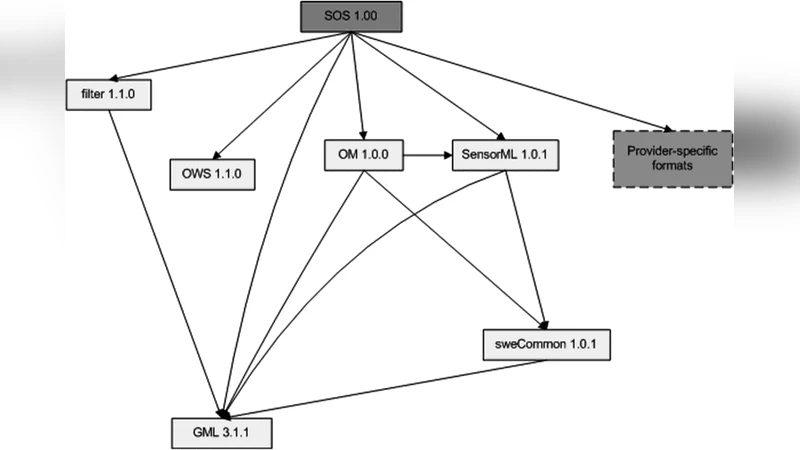

The authors also comment on schema usage: despite the extensive SOS schema set (which imports numerous other OGC schemas), actual deployments only employ a small subset of elements. This selective adoption reduces development complexity but also leads to the observed schema violations when developers rename or omit elements.
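One simple way to measure how small that subset is would be to count, across a set of collected responses, how many documents use each element. The sketch below is an illustration under that assumption, not the method used in the paper; `element_usage` and the two toy documents are hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def element_usage(documents):
    """Count, per qualified element name, how many documents contain it."""
    counts = Counter()
    for text in documents:
        root = ET.fromstring(text)
        # A set ensures each element name is counted once per document.
        counts.update({el.tag for el in root.iter()})
    return counts


# Toy documents standing in for collected server responses.
docs = [
    '<a xmlns="urn:x"><b/><b/></a>',
    '<a xmlns="urn:x"><c/></a>',
]
usage = element_usage(docs)
print(usage.most_common())
```

Comparing the resulting names with the full list of elements declared in the schemas would show which parts of the schema set are never exercised.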

Limitations of the study include incomplete data retrieval (especially for non‑core operations), inability to identify the underlying server software for many instances, and the focus on XML instance validation rather than full conformance testing.

Overall, the paper provides valuable, data‑driven insights for developers of SOS servers and clients. It highlights common pitfalls—such as element‑name drift and incomplete profile support—and suggests that robust implementations should fully realize the Core profile, carefully validate XML against the official schemas, and consider adding basic spatial/temporal filters and O&M encoding to meet the expectations of most clients. The findings can guide future SOS implementations toward better interoperability and reduced integration effort.
