Complex dynamics in learning complicated games

Game theory is the standard tool used to model strategic interactions in evolutionary biology and social science. Traditional game theory studies the equilibria of simple games. But is traditional game theory applicable if the game is complicated, and if not, what is? We investigate this question here, defining a complicated game as one with many possible moves, and therefore many possible payoffs conditional on those moves. We investigate two-person games in which the players learn based on experience. By generating games at random we show that under some circumstances the strategies of the two players converge to fixed points, but under others they follow limit cycles or chaotic attractors. The dimension of the chaotic attractors can be very high, implying that the dynamics of the strategies are effectively random. In the chaotic regime the payoffs fluctuate intermittently, showing bursts of rapid change punctuated by periods of quiescence, similar to what is observed in fluid turbulence and financial markets. Our results suggest that such intermittency is a highly generic phenomenon, and that there is a large parameter regime for which complicated strategic interactions generate inherently unpredictable behavior that is best described in the language of dynamical systems theory.

💡 Research Summary

The paper investigates how learning dynamics behave in “complicated” two‑player games—games with a large number of possible moves (N = 50 in the simulations) and consequently a high‑dimensional payoff structure. The authors model learning with the Experience‑Weighted Attraction (EWA) rule, a reinforcement‑learning scheme that updates the probability of choosing each move according to a soft‑max of “attractions” Q. The attractions evolve as a weighted average of past payoffs, controlled by two key parameters: α (memory‑loss rate) and β (intensity of choice). α = 1 corresponds to no memory (only the most recent outcome matters), while α = 0 gives equal weight to the entire history; β = 0 yields random play, and β → ∞ makes the player deterministically choose the move with the highest attraction.
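The roles of α and β can be made concrete with a minimal sketch of one learning step. The exact update below, in which each attraction decays by a factor (1 − α) and then gains the expected payoff against the opponent's current mixed strategy, is an assumption chosen to match the description above; it is not taken from the paper, and the names `softmax` and `ewa_step` are hypothetical.

```python
import numpy as np

def softmax(q, beta):
    """Map attractions q to move probabilities with intensity of choice beta."""
    z = beta * q
    z = z - z.max()          # shift for numerical stability; result is unchanged
    w = np.exp(z)
    return w / w.sum()

def ewa_step(q_a, q_b, payoff_a, payoff_b, alpha, beta):
    """One deterministic learning step for both players (assumed form).

    payoff_a[i, j]: payoff to player A when A plays i and B plays j.
    payoff_b[j, i]: payoff to player B when B plays j and A plays i.
    """
    x_a = softmax(q_a, beta)
    x_b = softmax(q_b, beta)
    # Old attractions are discounted by (1 - alpha); alpha = 1 forgets everything.
    q_a_new = (1.0 - alpha) * q_a + payoff_a @ x_b
    q_b_new = (1.0 - alpha) * q_b + payoff_b @ x_a
    return q_a_new, q_b_new
```

With `beta = 0` the soft-max returns the uniform distribution regardless of the attractions, and with `alpha = 1` the new attractions depend only on the most recent expected payoffs, which mirrors the two limiting cases described above.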

Payoff matrices ΠA and ΠB are drawn randomly from a Gaussian ensemble with zero mean, variance 1/N, and a cross‑correlation Γ/N between the two players’ payoffs. The parameter Γ sets how competitive the game is: Γ = −1 corresponds to a zero‑sum game and Γ = 0 to completely uncorrelated payoffs. By varying (α, β, Γ) the authors explore a broad region of parameter space.
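One way to draw such a pair of matrices is sketched below. It assumes that the cross‑correlation couples the payoff of player A for the move pair (i, j) with the payoff of player B for the same pair, and it builds the correlation by mixing two independent Gaussian matrices. The function name `random_game` and this construction are illustrative assumptions, not the authors' code.

```python
import numpy as np

def random_game(n, gamma, rng):
    """Draw payoff matrices with variance 1/n and cross-correlation gamma/n.

    payoff_a[i, j]: payoff to A when A plays i and B plays j.
    payoff_b[j, i]: payoff to B for the same pair of moves.
    Requires -1 <= gamma <= 1.
    """
    z1 = rng.standard_normal((n, n))
    z2 = rng.standard_normal((n, n))
    payoff_a = z1 / np.sqrt(n)
    # Mixing z1 and z2 gives unit variance and correlation gamma with z1.
    mixed = gamma * z1 + np.sqrt(1.0 - gamma**2) * z2
    payoff_b = mixed.T / np.sqrt(n)
    return payoff_a, payoff_b

rng = np.random.default_rng(0)
payoff_a, payoff_b = random_game(50, -0.6, rng)
```

Setting `gamma = -1` makes `payoff_b` exactly the negative transpose of `payoff_a`, which is the zero‑sum case; `gamma = 0` makes the two matrices independent.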

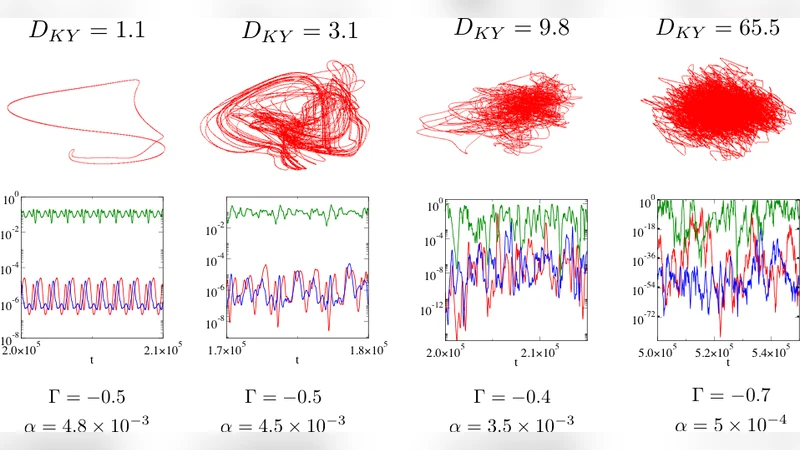

The main empirical findings, obtained from extensive simulations, fall into three distinct dynamical regimes:

- Fixed‑point convergence – When the game is close to zero‑sum (Γ ≈ −1), memory is short (large α), and the intensity of choice is modest (small β), both players’ strategies converge to a single fixed point. This fixed point corresponds to a Nash equilibrium and mirrors the predictions of classical game theory.

- Limit‑cycle behavior – For intermediate values of Γ (near zero) and moderate memory (α) together with sufficiently large β, the learning trajectories settle onto stable periodic orbits. The system exhibits regular oscillations in the strategy simplex, indicating that learning does not settle on a static equilibrium but rather on a predictable cycle.

- High‑dimensional chaos – The most striking regime occurs when the game is moderately anti‑correlated (Γ ≈ −0.6), memory is long (α ≈ 0), and the intensity of choice is high. Here the Lyapunov spectrum contains many positive exponents, yielding a chaotic attractor whose Kaplan‑Yorke dimension D can reach 10–30 (out of a 98‑dimensional phase space). Strategies fluctuate erratically: a given move occasionally becomes highly probable in a “burst”, while at other times its probability can fall as low as 10⁻⁷². Payoffs display intermittent bursts of large changes separated by quiescent periods, a pattern reminiscent of turbulence intermittency and clustered volatility in financial markets. The distribution of payoff changes exhibits heavy tails.
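The Kaplan‑Yorke dimension mentioned in the chaotic regime is computed from the Lyapunov spectrum with a standard formula: sort the exponents in decreasing order, find the largest k for which the sum of the first k exponents is non‑negative, and add the fractional part given by that sum divided by the magnitude of the next exponent. The sketch below shows this calculation only; the function name `kaplan_yorke_dimension` is hypothetical, and estimating the exponents themselves from a simulation is not covered.

```python
import numpy as np

def kaplan_yorke_dimension(exponents):
    """Kaplan-Yorke (Lyapunov) dimension from a list of Lyapunov exponents."""
    lam = np.sort(np.asarray(exponents, dtype=float))[::-1]  # decreasing order
    csum = np.cumsum(lam)
    nonneg = np.nonzero(csum >= 0)[0]
    if nonneg.size == 0:
        return 0.0                      # even the largest exponent is negative
    k = int(nonneg[-1]) + 1             # largest k with non-negative partial sum
    if k == lam.size:
        return float(k)                 # partial sums never become negative
    return k + csum[k - 1] / abs(lam[k])

# Example: one positive, one zero, one negative exponent.
print(kaplan_yorke_dimension([0.5, 0.0, -1.0]))  # 2.5
```

A spectrum with many positive exponents pushes k, and therefore D, upward, which is how a dimension in the range 10–30 can arise in a 98‑dimensional phase space.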

To complement the simulations, the authors apply path‑integral techniques from the statistical physics of disordered systems to derive an analytical stability boundary in the limit N → ∞. In a continuous‑time approximation the stability depends only on the ratio α/β, and the analytically obtained boundary matches the numerically observed transition between fixed‑point/limit‑cycle and chaotic regimes.

Several broader implications are drawn:

- Zero‑sum vs. non‑zero‑sum – Games that are close to zero‑sum tend to be learnable (stable fixed points), whereas non‑zero‑sum games, especially with long memory, are prone to complex dynamics.

- Predictability limits – High‑dimensional chaotic attractors render the learning process effectively random. The curse of dimensionality means that no amount of finite data can reliably infer the underlying dynamics, suggesting the existence of “unlearnable” games for any reinforcement‑learning algorithm.

- Relevance to real systems – The intermittent payoff fluctuations and heavy‑tailed distributions observed in the chaotic regime provide a generic mechanistic explanation for similar statistical features in financial markets, ecological interactions, and other social systems where agents adapt in high‑dimensional strategic environments.

- Methodological shift – For many complicated strategic interactions, equilibrium concepts from classical game theory are insufficient. Dynamical‑systems tools (Lyapunov analysis, attractor dimension, intermittency statistics) are more appropriate for describing long‑run behavior.

- Future extensions – The framework can be generalized to multiplayer games, networked interactions, and alternative learning rules (e.g., Q‑learning, Bayesian updating). Understanding how these extensions affect the stability diagram is an open research direction.

In summary, the paper demonstrates that when games become “complicated” in the sense of having many actions and non‑zero‑sum payoffs, reinforcement‑learning dynamics can naturally give rise to limit cycles or high‑dimensional chaos. The chaotic regime is characterized by intermittent, bursty payoff fluctuations and a loss of predictability that parallels phenomena observed in turbulence and financial markets. These findings argue for a paradigm shift from equilibrium‑centric game theory toward a dynamical‑systems perspective when analyzing complex adaptive strategic interactions.
